- [2020/12/6] Release the information of light field datasets. [Jump].

- [2021/1/21] Add more description notes for the evaluation table.

- [2021/1/23] Release all models' saliency maps in our evaluation table. [Jump].

- [2021/3/6] Add a new light field dataset [Jump] as well as a light field salient object detection work [Jump].

- [2021/7/22] Update: Supplement and improve the discussion in review and open direction sections. Benchmark models with normalized (max-min normalization) depth maps and update saliency results [Jump]. Add one traditional model (RDFD) and one deep learning-based model (LFNet) for comparison in our benchmark. Add an experiment retraining one light field SOD model and seven RGB-D SOD models to eliminate training discrepancy [Jump]. The names of datasets are slightly changed.

- [2021/8/6] Add a new light field salient object detection work [Jump].

- [2021/10/3] The paper is accepted by Computational Visual Media journal (CVMJ)! [Jump to the formal journal version]

- [2021/11/11] The Chinese version of the paper is released! [中文版].

- [2022/3/22] Add several new light field salient object detection works [Jump] and a new dataset [Jump].

- [2023/6/1] Add several new light field salient object detection works [Jump].

- Light Field

    i. Multi-view Images and Focal Stacks

- Light Field SOD

    i. Traditional Models

    ii. Deep Learning-based Models

    iii. Other Review Works

- Light Field SOD Datasets

- Benchmarking Results

    i. RGB-D SOD Models in Our Tests

    ii. Quantitative Comparison

    iii. All Models' Saliency Maps

    iv. Qualitative Comparison

- Citation

- A GIF animation of multi-view images in Lytro Illum.

- A GIF animation of a focal stack in Lytro Illum.

Table I: Overview of traditional LFSOD models.

| No. | Year | Model | Pub. | Title | Links |

|---|---|---|---|---|---|

| 1 | 2014 | LFS | CVPR | Saliency Detection on Light Field | Paper/Project |

| 2 | 2015 | WSC | CVPR | A Weighted Sparse Coding Framework for Saliency Detection | Paper/Project |

| 3 | 2015 | DILF | IJCAI | Saliency Detection with a Deeper Investigation of Light Field | Paper/Project |

| 4 | 2016 | RL | ICASSP | Relative location for light field saliency detection | Paper/Project |

| 5 | 2017 | BIF | NPL | A Two-Stage Bayesian Integration Framework for Salient Object Detection on Light Field | Paper/Project |

| 6 | 2017 | LFS | TPAMI | Saliency Detection on Light Field | Paper/Project |

| 7 | 2017 | MA | TOMM | Saliency Detection on Light Field: A Multi-Cue Approach | Paper/Project |

| 8 | 2018 | SDDF | MTAP | Accurate saliency detection based on depth feature of 3D images | Paper/Project |

| 9 | 2018 | SGDC | CVPR | Salience Guided Depth Calibration for Perceptually Optimized Compressive Light Field 3D Display | Paper/Project |

| 10 | 2020 | RDFD | MTAP | Region-based depth feature descriptor for saliency detection on light field | Paper/Project |

| 11 | 2020 | DCA | TIP | Saliency Detection via Depth-Induced Cellular Automata on Light Field | Paper/Project |

| 12 | 2023 | CDCA | ENTROPY | Exploring Focus and Depth-Induced Saliency Detection for Light Field | Paper/Project |

| 13 | 2023 | TFSF | AO | Two-way focal stack fusion for light field saliency detection | Paper/Project |

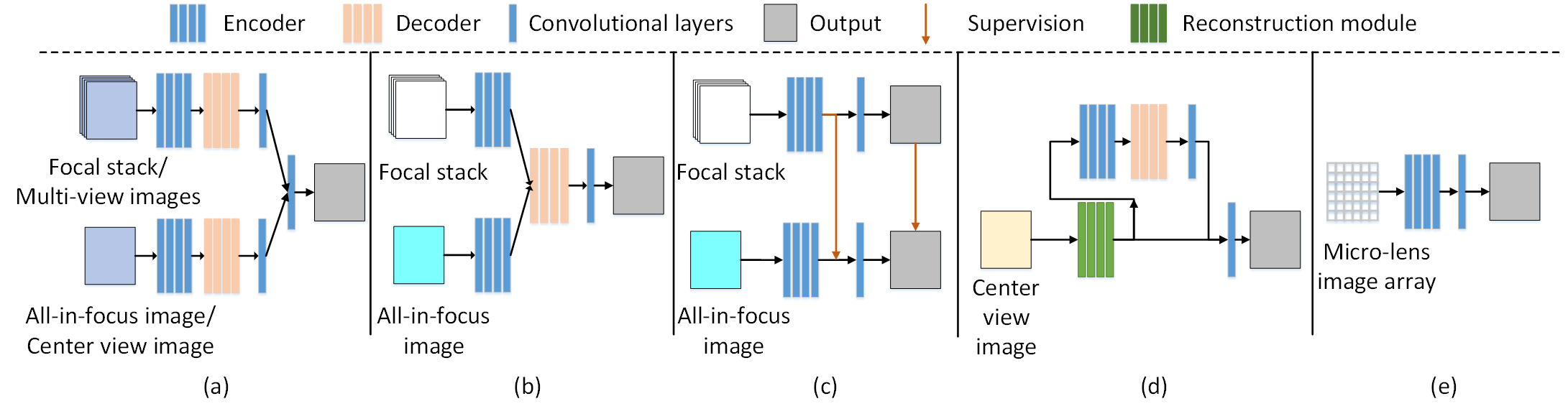

Fig. 1 Frameworks of deep light field SOD models. (a) Late-fusion (DLLF, MTCNet). (b) Middle-fusion (MoLF, LFNet). (c) Knowledge distillation-based (ERNet). (d) Reconstruction-based (DLSD). (e) Single-stream (MAC). Note that (a) utilizes the focal stack/multi-view images and the all-in-focus/center-view image, while (b)-(c) utilize the focal stack and all-in-focus image, and (d)-(e) utilize the center-view image and micro-lens image array.

Table II: Overview of deep learning-based LFSOD models.

| No. | Year | Model | Pub. | Title | Links |

|---|---|---|---|---|---|

| 1 | 2019 | DLLF | ICCV | Deep Learning for Light Field Saliency Detection | Paper/Project |

| 2 | 2019 | DLSD | IJCAI | Deep Light-field-driven Saliency Detection from a Single View | Paper/Project |

| 3 | 2019 | MoLF | NeurIPS | Memory-oriented Decoder for Light Field Salient Object Detection | Paper/Project |

| 4 | 2020 | ERNet | AAAI | Exploit and Replace: An Asymmetrical Two-Stream Architecture for Versatile Light Field Saliency Detection | Paper/Project |

| 5 | 2020 | LFNet | TIP | LFNet: Light Field Fusion Network for Salient Object Detection | Paper/Project |

| 6 | 2020 | MAC | TIP | Light Field Saliency Detection with Deep Convolutional Networks | Paper/Project |

| 7 | 2020 | MTCNet | TCSVT | A Multi-Task Collaborative Network for Light Field Salient Object Detection | Paper/Project |

| 8 | 2021 | DUT-LFSaliency | arXiv | DUT-LFSaliency: Versatile Dataset and Light Field-to-RGB Saliency Detection | Paper/Project |

| 9 | 2021 | OBGNet | ACM MM | Occlusion-aware Bi-directional Guided Network for Light Field Salient Object Detection | Paper/Project |

| 10 | 2021 | DLGLRG | ICCV | Light Field Saliency Detection with Dual Local Graph Learning and Reciprocative Guidance | Paper/Project |

| 11 | 2021 | GAGNN | IEEE TIP | Geometry Auxiliary Salient Object Detection for Light Fields via Graph Neural Networks | Paper/Project |

| 12 | 2021 | SANet | BMVC | Learning Synergistic Attention for Light Field Salient Object Detection | Paper/Project |

| 13 | 2021 | TCFANet | IEEE SPL | Three-Stream Cross-Modal Feature Aggregation Network for Light Field Salient Object Detection | Paper/Project |

| 14 | 2021 | PANet | IEEE TCYB | PANet: Patch-Aware Network for Light Field Salient Object Detection | Paper/Project |

| 15 | 2021 | MGANet | IEEE ICMEW | Multi-Generator Adversarial Networks For Light Field Saliency Detection | Paper/Project |

| 16 | 2022 | MEANet | Neurocomputing | MEANet: Multi-Modal Edge-Aware Network for Light Field Salient Object Detection | Paper/Project |

| 17 | 2022 | DGENet | IVC | Dual guidance enhanced network for light field salient object detection | Paper/Project |

| 18 | 2022 | NoiseLF | CVPR | Learning from Pixel-Level Noisy Label: A New Perspective for Light Field Saliency Detection | Paper/Project |

| 19 | 2022 | ARFNet | IEEE Systems Journal | ARFNet: Attention-Oriented Refinement and Fusion Network for Light Field Salient Object Detection | Paper/Project |

| 20 | 2022 | -- | IEEE TIP | Weakly-Supervised Salient Object Detection on Light Fields | Paper/Project |

| 21 | 2022 | ESCNet | IEEE TIP | Exploring Spatial Correlation for Light Field Saliency Detection: Expansion From a Single View | Paper/Project |

| 22 | 2022 | LFBCNet | ACM MM | LFBCNet: Light Field Boundary-aware and Cascaded Interaction Network for Salient Object Detection | Paper/Project |

| 23 | 2023 | TENet | IVC | TENet: Accurate light-field salient object detection with a transformer embedding network | Paper/Project |

| 24 | 2023 | -- | TPAMI | A Thorough Benchmark and a New Model for Light Field Saliency Detection | Paper/Project |

| 25 | 2023 | GFRNet | ICME | Guided Focal Stack Refinement Network For Light Field Salient Object Detection | Paper/Project |

| 26 | 2023 | FESNet | IEEE TMM | Fusion-Embedding Siamese Network for Light Field Salient Object Detection | Paper/Project |

| 27 | 2023 | LFTransNet | IEEE TCSVT | LFTransNet: Light Field Salient Object Detection via a Learnable Weight Descriptor | Paper/Project |

| 🔥 28 | 2023 | CDINet | IEEE TCSVT | Light Field Salient Object Detection with Sparse Views via Complementary and Discriminative Interaction Network | Paper/Project |

| 🔥 29 | 2024 | LF-Tracy | arXiv | LF Tracy: A Unified Single-Pipeline Approach for Salient Object Detection in Light Field Cameras | Paper/Project |

| 🔥 30 | 2024 | PANet | IEEE SPL | Parallax-Aware Network for Light Field Salient Object Detection | Paper/Project |

Table III: Overview of related reviews and surveys to LFSOD.

| No. | Year | Model | Pub. | Title | Links |

|---|---|---|---|---|---|

| 1 | 2015 | CS | NEURO | Light field saliency vs. 2D saliency: A comparative study | Paper/Project |

| 2 | 2020 | RGBDS | CVM | RGB-D Salient Object Detection: A Survey | Paper/Project |

Table IV: Overview of light field SOD datasets. Abbreviations: MOP=Multiple-Object Proportion (the percentage of images in the entire dataset that contain more than one object), FS=Focal Stacks, DE=Depth maps, MV=Multi-view images, ML=Micro-lens images, GT=Ground-truth, Raw=Raw light field data. FS, MV, DE, ML, GT and Raw indicate the data provided by the datasets. '✓' denotes data forms provided in the original datasets, while '✔️' indicates data forms generated by us. Both the original and the supplementary data forms can be downloaded at 'Download' with the fetch code: 'lfso'. The original datasets can also be downloaded from 'Original Link'.

| No. | Dataset | Year | Pub. | Size | MOP | FS | MV | DE | ML | GT | Raw | Download | Original Link |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | LFSD | 2014 | CVPR | 100 | 0.04 | ✓ | ✔️ | ✓ | ✔️ | ✓ | ✓ | Link | Link |

| 2 | HFUT-Lytro | 2017 | ACM TOMM | 255 | 0.29 | ✓ | ✓ | ✓ | ✔️ | ✓ | Link | Link | |

| 3 | DUTLF-FS | 2019 | ICCV | 1462 | 0.05 | ✓ | ✓ | ✓ | Link | Link | |||

| 4 | DUTLF-MV | 2019 | IJCAI | 1580 | 0.04 | ✓ | ✓ | Link | Link | ||||

| 5 | Lytro Illum | 2020 | IEEE TIP | 640 | 0.15 | ✔️ | ✔️ | ✔️ | ✓ | ✓ | ✓ | Link | Link |

| 6 | DUTLF-V2 | 2021 | Arxiv | 4204 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | Link | ||

| 🔥 7 | CITYU-Lytro | 2021 | IEEE TIP | 817 | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| 🔥 8 | PKU-LF | 2021 | TPAMI | 5000 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | Link |
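As defined in the caption of Table IV, MOP is the share of images in a dataset that contain more than one object. A minimal sketch of that ratio follows, assuming a hypothetical list `objects_per_image` that holds the annotated object count for each image. How objects are counted is not specified on this page, so the counts are simply taken as given, and the function name is illustrative.

```python
def multiple_object_proportion(objects_per_image):
    """Return the fraction of images that contain more than one object.

    `objects_per_image` is a hypothetical input: one integer per image,
    giving the number of salient objects annotated in that image.
    """
    if not objects_per_image:
        raise ValueError("at least one image is required")
    # Count the images whose annotation holds two or more objects.
    multi = sum(1 for n in objects_per_image if n > 1)
    return multi / len(objects_per_image)


# Toy example: 4 of 100 images contain more than one object.
counts = [1] * 96 + [2, 3, 2, 2]
mop = multiple_object_proportion(counts)
print(f"MOP = {mop:.2f}")
```

Under this reading, a value such as 0.04 for a 100-image dataset would correspond to 4 multi-object images.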

Table V: Overview of RGB-D SOD models in our tests.

| No. | Year | Model | Pub. | Title | Links |

|---|---|---|---|---|---|

| 1 | 2020 | BBS | ECCV | BBS-Net: RGB-D Salient Object Detection with a Bifurcated Backbone Strategy Network | Paper/Project |

| 2 | 2020 | JLDCF | CVPR | JL-DCF: Joint learning and densely-cooperative fusion framework for RGB-D salient object detection | Paper/Project |

| 3 | 2020 | SSF | CVPR | Select, supplement and focus for RGB-D saliency detection | Paper/Project |

| 4 | 2020 | UCNet | CVPR | UC-Net: Uncertainty Inspired RGB-D Saliency Detection via Conditional Variational Autoencoders | Paper/Project |

| 5 | 2020 | D3Net | IEEE TNNLS | Rethinking RGB-D salient object detection: models, datasets, and large-scale benchmarks | Paper/Project |

| 6 | 2020 | S2MA | CVPR | Learning selective self-mutual attention for RGB-D saliency detection | Paper/Project |

| 7 | 2020 | cmMS | ECCV | RGB-D salient object detection with cross-modality modulation and selection | Paper/Project |

| 8 | 2020 | HDFNet | ECCV | Hierarchical Dynamic Filtering Network for RGB-D Salient Object Detection | Paper/Project |

| 9 | 2020 | ATSA | ECCV | Asymmetric Two-Stream Architecture for Accurate RGB-D Saliency Detection | Paper/Project |

Table VI: Quantitative measures: S-measure (Sα), max F-measure (Fβmax), mean F-measure (Fβmean), adaptive F-measure (Fβadp), max E-measure (EΦmax), mean E-measure (EΦmean), adaptive E-measure (EΦadp), and MAE (M) of nine light field SOD models (i.e., LFS, WSC, DILF, RDFD, DLSD, MoLF, ERNet, LFNet, MAC) and nine SOTA RGB-D based SOD models (i.e., BBS, JLDCF, SSF, UCNet, D3Net, S2MA, cmMS, HDFNet, and ATSA).

Note that in the table, light field SOD models are marked by "†". The symbol “N/T” indicates that a model was trained on a considerable number of images from the corresponding dataset and is therefore not tested on it. The top three models among light field and RGB-D based SOD models are highlighted in red, blue and green, respectively. ↑/↓ denotes that a larger/smaller value is better.

Other important notes:

1). There are TWO types of ground-truth (GT), corresponding to focal stacks and multi-view images, due to an inevitable issue of the software used for generation, and there are usually shifts between them. Models using different data with different GT are not directly comparable. Luckily, this problem is avoided here: in the table, all the models on the same dataset are evaluated against the SINGLE GT type of focal stacks.

2). On LFSD, MAC is tested on micro-lens image arrays, whose GT is different from that of the other models. As a solution, we find the transformation between these two GT types and then transform MAC's results to align them with the other GT type. In general, the differences in metric numbers before and after the transformation are quite small.

3). Also regarding MAC: on DUTLF-FS and HFUT-Lytro, it is tested on single up-sampled all-in-focus images.

4). On HFUT-Lytro, ERNet is tested on only 155 images, since it used the remaining 100 images for training. The obtained metric numbers are therefore for reference only, as the other models are tested on all 255 images.
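Two of the measures listed for Table VI are simple enough to sketch directly. The block below computes MAE and a single-threshold F-measure for one saliency map, using only the Python standard library. The weighting β² = 0.3 and the adaptive threshold of twice the mean saliency are conventions commonly seen in the SOD literature and are assumptions here, since this page does not state them. The function names and the flat-list input format are illustrative only; this is not the evaluation code used for the benchmark.

```python
def mae(saliency, gt):
    """Mean absolute error between a saliency map and a binary ground truth.

    Both inputs are flat lists of equal length with values in [0, 1].
    """
    if len(saliency) != len(gt) or not saliency:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(s - g) for s, g in zip(saliency, gt)) / len(saliency)


def f_measure(saliency, gt, threshold, beta_sq=0.3):
    """F-measure of the saliency map binarized at `threshold`.

    beta_sq = 0.3 is an assumed, commonly used weighting.
    """
    pred = [s >= threshold for s in saliency]
    tp = sum(1 for p, g in zip(pred, gt) if p and g >= 0.5)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / sum(pred)
    recall = tp / sum(1 for g in gt if g >= 0.5)
    return (1 + beta_sq) * precision * recall / (beta_sq * precision + recall)


def adaptive_f_measure(saliency, gt, beta_sq=0.3):
    """F-measure at an image-dependent threshold.

    Assumed convention: twice the mean saliency, capped at 1.
    """
    threshold = min(2 * sum(saliency) / len(saliency), 1.0)
    return f_measure(saliency, gt, threshold, beta_sq)


# Toy 4-pixel example: the first two pixels are salient in the ground truth.
saliency = [1.0, 0.6, 0.2, 0.0]
gt = [1, 1, 0, 0]
print("MAE:", mae(saliency, gt))
print("F at threshold 0.5:", f_measure(saliency, gt, 0.5))
print("Adaptive F:", adaptive_f_measure(saliency, gt))
```

Dataset-level numbers would then be averages of such per-image values; an array library would normally replace the list comprehensions for full-size maps.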

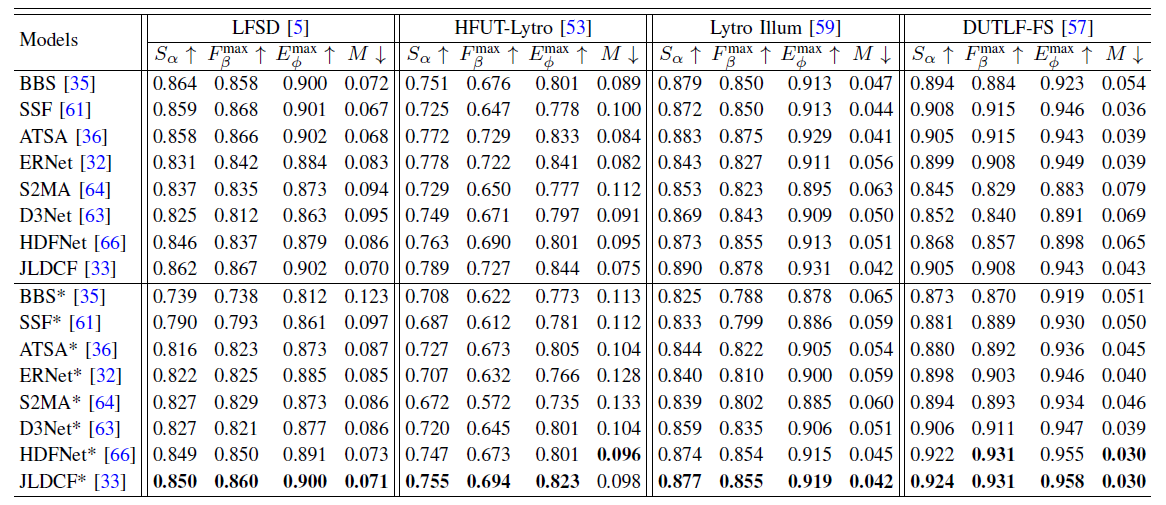

🔥 Table VII: Quantitative measures: S-measure (Sα), max F-measure (Fβmax), max E-measure (EΦmax), and MAE (M) of one retrained light field SOD model (ERNet) and seven retrained RGB-D based SOD models (i.e., BBS, SSF, ATSA, S2MA, D3Net, HDFNet, and JLDCF). Note that in the table, the results of the original models are taken from Table VI, and the retrained models are marked by *. The best results of the retrained models are highlighted in bold. ↑/↓ denotes that a larger/smaller value is better.

Fig. 2 PR curves on four datasets ((a) LFSD, (b) HFUT-Lytro, (c) Lytro Illum, and (d) DUTLF-FS) for nine light field SOD models (i.e., LFS, WSC, DILF, RDFD, DLSD, MoLF, ERNet, LFNet, MAC) and nine SOTA RGB-D based SOD models (i.e., BBS, JLDCF, SSF, UCNet, D3Net, S2MA, cmMS, HDFNet, and ATSA). Note that in this figure, the solid lines and dashed lines represent the PR curves of RGB-D based SOD models and light field SOD models, respectively.
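A PR curve like those in Fig. 2 is typically obtained by binarizing each saliency map at a sweep of thresholds and recording precision and recall at each one. The sketch below does this for a single map with 8-bit values. The 0 to 255 sweep, the names `pr_curve` and `max_f_measure`, the handling of empty predictions (precision set to 1), and β² = 0.3 are assumptions for illustration; benchmark code would also average the curves over a whole dataset.

```python
def pr_curve(saliency, gt, thresholds=range(256)):
    """Return a list of (precision, recall) pairs, one per threshold.

    `saliency` is a flat list of 8-bit values (0-255); `gt` is a flat list
    of 0/1 labels of the same length.
    """
    if len(saliency) != len(gt) or not saliency:
        raise ValueError("inputs must be non-empty and of equal length")
    n_gt = sum(gt)
    if n_gt == 0:
        raise ValueError("ground truth contains no salient pixels")
    curve = []
    for t in thresholds:
        pred = [s >= t for s in saliency]
        tp = sum(1 for p, g in zip(pred, gt) if p and g)
        n_pred = sum(pred)
        # Assumed convention: an empty prediction counts as precision 1.
        precision = tp / n_pred if n_pred else 1.0
        recall = tp / n_gt
        curve.append((precision, recall))
    return curve


def max_f_measure(curve, beta_sq=0.3):
    """Best F-measure over all points of a PR curve (beta_sq is assumed)."""
    best = 0.0
    for precision, recall in curve:
        denom = beta_sq * precision + recall
        if denom > 0:
            best = max(best, (1 + beta_sq) * precision * recall / denom)
    return best


# Toy 4-pixel example: the first two pixels are salient in the ground truth.
saliency = [230, 180, 90, 10]
gt = [1, 1, 0, 0]
curve = pr_curve(saliency, gt)
print("points on curve:", len(curve))
print("max F:", max_f_measure(curve))
```

The max F-measure in the tables can be read, under the same assumptions, as the best F value found along such a sweep.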

All models' saliency maps generated and used for our evaluation table are now publicly available at Baidu Pan (code: lfso) or Google Drive.

Fig. 3 Visual comparison of five light field SOD models (i.e., LFS, DILF, DLSD, MoLF, and ERNet, bounded in the green box) and three SOTA RGB-D based SOD models (i.e., JLDCF, BBS, and ATSA, bounded in the red box). The first two rows in this figure show easy cases, while the third to fifth rows show cases with complex backgrounds or sophisticated boundaries. The last row gives an example with low color contrast between foreground and background.

Fig. 4 Visual comparison of five light field SOD models (i.e., LFS, DILF, DLSD, MoLF, and ERNet, bounded in the green box) and three SOTA RGB-D based SOD models (i.e., JLDCF, BBS, and ATSA, bounded in the red box) on detecting multiple objects (first three rows) and small objects (remaining rows).

Please cite our paper if you find the work useful:

@article{Fu2021lightfieldSOD,
  title={Light Field Salient Object Detection: A Review and Benchmark},
  author={Fu, Keren and Jiang, Yao and Ji, Ge-Peng and Zhou, Tao and Zhao, Qijun and Fan, Deng-Ping},
  journal={Computational Visual Media},
  volume={8},
  number={4},
  pages={509--534},
  year={2022},
  publisher={Springer}
}