CN112135129A - Inter-frame prediction method and device - Google Patents

Inter-frame prediction method and device Download PDFInfo

- Publication number

- CN112135129A CN112135129A CN201910600591.9A CN201910600591A CN112135129A CN 112135129 A CN112135129 A CN 112135129A CN 201910600591 A CN201910600591 A CN 201910600591A CN 112135129 A CN112135129 A CN 112135129A

- Authority

- CN

- China

- Prior art keywords

- mode

- current

- value

- image block

- flag

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Links

- 238000000034 method Methods 0.000 title claims abstract description 129

- 230000004927 fusion Effects 0.000 claims abstract description 426

- 238000012545 processing Methods 0.000 claims description 103

- 238000009795 derivation Methods 0.000 claims description 101

- 230000015654 memory Effects 0.000 claims description 50

- 238000004458 analytical method Methods 0.000 claims description 18

- 238000013139 quantization Methods 0.000 description 74

- 239000013598 vector Substances 0.000 description 67

- 239000000872 buffer Substances 0.000 description 35

- 230000008569 process Effects 0.000 description 33

- 238000004891 communication Methods 0.000 description 29

- 238000010586 diagram Methods 0.000 description 23

- 208000037170 Delayed Emergence from Anesthesia Diseases 0.000 description 16

- 238000005192 partition Methods 0.000 description 14

- 230000002123 temporal effect Effects 0.000 description 10

- 238000003491 array Methods 0.000 description 9

- 230000006835 compression Effects 0.000 description 9

- 238000007906 compression Methods 0.000 description 9

- 230000005540 biological transmission Effects 0.000 description 8

- 230000003044 adaptive effect Effects 0.000 description 7

- 238000005457 optimization Methods 0.000 description 7

- 241000023320 Luma <angiosperm> Species 0.000 description 6

- 238000001914 filtration Methods 0.000 description 6

- OSWPMRLSEDHDFF-UHFFFAOYSA-N methyl salicylate Chemical compound COC(=O)C1=CC=CC=C1O OSWPMRLSEDHDFF-UHFFFAOYSA-N 0.000 description 6

- 238000004590 computer program Methods 0.000 description 5

- 238000013500 data storage Methods 0.000 description 5

- 238000005516 engineering process Methods 0.000 description 5

- 238000000638 solvent extraction Methods 0.000 description 5

- 230000003287 optical effect Effects 0.000 description 4

- 238000003672 processing method Methods 0.000 description 4

- 238000004364 calculation method Methods 0.000 description 3

- 238000006243 chemical reaction Methods 0.000 description 3

- 230000006870 function Effects 0.000 description 3

- 239000000203 mixture Substances 0.000 description 3

- 238000005070 sampling Methods 0.000 description 3

- 230000011664 signaling Effects 0.000 description 3

- 238000012546 transfer Methods 0.000 description 3

- 230000007704 transition Effects 0.000 description 3

- PXFBZOLANLWPMH-UHFFFAOYSA-N 16-Epiaffinine Natural products C1C(C2=CC=CC=C2N2)=C2C(=O)CC2C(=CC)CN(C)C1C2CO PXFBZOLANLWPMH-UHFFFAOYSA-N 0.000 description 2

- 230000002146 bilateral effect Effects 0.000 description 2

- 238000004422 calculation algorithm Methods 0.000 description 2

- 230000000295 complement effect Effects 0.000 description 2

- 238000010276 construction Methods 0.000 description 2

- 238000011161 development Methods 0.000 description 2

- 230000018109 developmental process Effects 0.000 description 2

- 239000000835 fiber Substances 0.000 description 2

- 238000009499 grossing Methods 0.000 description 2

- 238000003384 imaging method Methods 0.000 description 2

- 239000004973 liquid crystal related substance Substances 0.000 description 2

- 239000011159 matrix material Substances 0.000 description 2

- 238000012805 post-processing Methods 0.000 description 2

- 238000007781 pre-processing Methods 0.000 description 2

- 239000007787 solid Substances 0.000 description 2

- 230000003068 static effect Effects 0.000 description 2

- 238000009966 trimming Methods 0.000 description 2

- 238000012952 Resampling Methods 0.000 description 1

- XUIMIQQOPSSXEZ-UHFFFAOYSA-N Silicon Chemical compound [Si] XUIMIQQOPSSXEZ-UHFFFAOYSA-N 0.000 description 1

- 230000003190 augmentative effect Effects 0.000 description 1

- 230000001413 cellular effect Effects 0.000 description 1

- 230000000694 effects Effects 0.000 description 1

- 230000002441 reversible effect Effects 0.000 description 1

- 230000011218 segmentation Effects 0.000 description 1

- 229910052710 silicon Inorganic materials 0.000 description 1

- 239000010703 silicon Substances 0.000 description 1

- 238000001228 spectrum Methods 0.000 description 1

- 238000006467 substitution reaction Methods 0.000 description 1

- 230000001360 synchronised effect Effects 0.000 description 1

- 230000009466 transformation Effects 0.000 description 1

- 230000001131 transforming effect Effects 0.000 description 1

Images

Classifications

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/102—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the element, parameter or selection affected or controlled by the adaptive coding

- H04N19/13—Adaptive entropy coding, e.g. adaptive variable length coding [AVLC] or context adaptive binary arithmetic coding [CABAC]

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/102—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the element, parameter or selection affected or controlled by the adaptive coding

- H04N19/103—Selection of coding mode or of prediction mode

- H04N19/107—Selection of coding mode or of prediction mode between spatial and temporal predictive coding, e.g. picture refresh

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/10—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding

- H04N19/169—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding

- H04N19/17—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object

- H04N19/176—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using adaptive coding characterised by the coding unit, i.e. the structural portion or semantic portion of the video signal being the object or the subject of the adaptive coding the unit being an image region, e.g. an object the region being a block, e.g. a macroblock

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/42—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by implementation details or hardware specially adapted for video compression or decompression, e.g. dedicated software implementation

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/50—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding

- H04N19/503—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals using predictive coding involving temporal prediction

- H04N19/51—Motion estimation or motion compensation

- H04N19/56—Motion estimation with initialisation of the vector search, e.g. estimating a good candidate to initiate a search

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N19/00—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals

- H04N19/70—Methods or arrangements for coding, decoding, compressing or decompressing digital video signals characterised by syntax aspects related to video coding, e.g. related to compression standards

Landscapes

- Engineering & Computer Science (AREA)

- Multimedia (AREA)

- Signal Processing (AREA)

- Compression Or Coding Systems Of Tv Signals (AREA)

Abstract

The application provides an inter-frame prediction method and device. The method comprises the following steps: after determining that the current image block uses the fusion mode to perform inter-frame prediction, determining whether the current image block allows each of K alternative fusion modes to be used; under the condition that the current image block allows to use a current fusion mode and the current image block allows to use a fusion mode except the current fusion mode in the K standby fusion modes, analyzing a code stream to obtain a value of a first identifier of the current fusion mode; and under the condition that the value of the first identifier indicates that the fusion mode for inter-frame prediction of the current image block is the current fusion mode, performing inter-frame prediction on the current image block by using the current fusion mode to obtain a prediction block of the current image block. In the method and the device, the parsing redundancy of the fusion syntax elements is removed, the decoding complexity is reduced to a certain extent, and the decoding efficiency is improved.

Description

Technical Field

The present application relates to the field of video image processing technologies, and in particular, to an inter-frame prediction method and apparatus.

Background

Digital video capabilities can be incorporated into a wide variety of devices, including digital televisions, digital direct broadcast systems, wireless broadcast systems, Personal Digital Assistants (PDAs), laptop or desktop computers, tablet computers, electronic book readers, digital cameras, digital recording devices, digital media players, video gaming devices, video gaming consoles, cellular or satellite radio telephones (so-called "smart phones"), video teleconferencing devices, video streaming devices, and the like. Digital video devices implement video compression techniques, such as those described in the standards defined by MPEG-2, MPEG-4, ITU-T H.263, ITU-T H.264/MPEG-4 part 10 Advanced Video Coding (AVC), the video coding standard H.265/High Efficiency Video Coding (HEVC), and extensions of such standards. Video devices may transmit, receive, encode, decode, and/or store digital video information more efficiently by implementing such video compression techniques.

Video compression techniques perform spatial (intra-picture) prediction and/or temporal (inter-picture) prediction to reduce or remove redundancy inherent in video sequences. For block-based video coding, a video slice (i.e., a video frame or a portion of a video frame) may be partitioned into tiles, which may also be referred to as treeblocks, Coding Units (CUs), and/or coding nodes. An image block in a to-be-intra-coded (I) strip of an image is encoded using spatial prediction with respect to reference samples in neighboring blocks in the same image. An image block in a to-be-inter-coded (P or B) slice of an image may use spatial prediction with respect to reference samples in neighboring blocks in the same image or temporal prediction with respect to reference samples in other reference images. A picture may be referred to as a frame and a reference picture may be referred to as a reference frame.

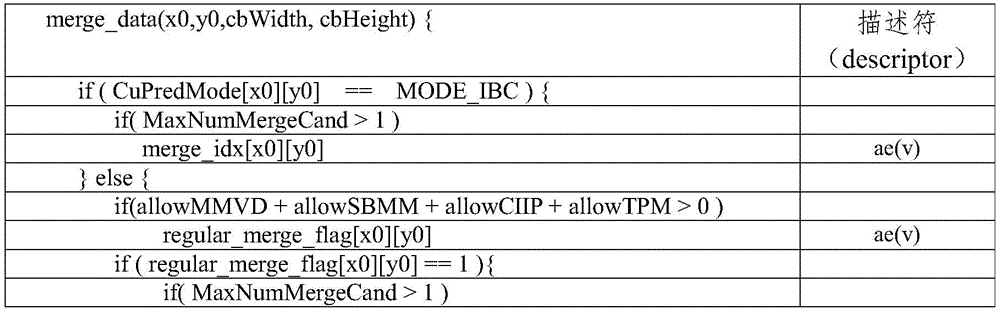

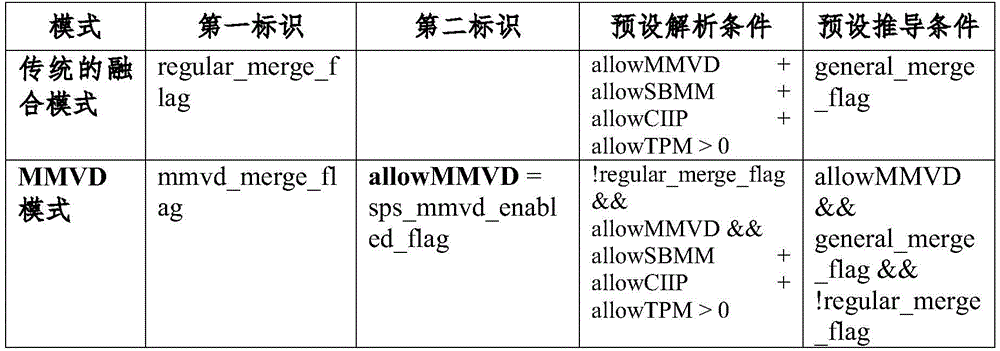

Currently, a merge (merge) technique is an inter prediction technique, and determines motion information with the minimum rate-distortion (RD) cost in a candidate motion vector list as a Motion Vector Predictor (MVP) of a current block by constructing the candidate motion vector list. If the current image block uses the fusion technology to perform inter-frame prediction, a fusion mode needs to be selected to obtain inter-frame prediction parameters to perform inter-frame prediction on the current image block, and the fusion mode may include: one or more of a conventional blend mode, a blend with motion vector difference (MMVD) mode, a sub-block blend mode (SBMM), a combined intra and intra prediction mode (CIIP), and a triangle prediction unit (TPM). In the syntax parsing process of the fusion data (merge data), it is necessary to sequentially determine which one or more fusion modes are finally used to perform inter-frame prediction on the current image block, so that there will be parsing redundancy, which results in higher decoding complexity and lower decoding efficiency in some cases.

Disclosure of Invention

The application provides an inter-frame prediction method and an inter-frame prediction device, which can reduce the decoding complexity to a certain extent and improve the decoding efficiency.

In a first aspect, the present application provides an inter prediction method, which can be applied in a video decoder. The method can comprise the following steps: after determining that the current image block uses the fusion mode to perform inter-frame prediction, determining whether the current image block allows each of K alternative fusion modes to be used, wherein K is a positive integer greater than or equal to 2; under the condition that the current image block allows the current fusion mode to be used and the current image block allows the fusion mode except the current fusion mode in the K alternative fusion modes to be used, analyzing and obtaining a first identifier value of the current fusion mode from the code stream; and under the condition that the value of the first identifier indicates that the fusion mode for inter-frame prediction of the current image block is the current fusion mode, performing inter-frame prediction on the current image block by using the current fusion mode to obtain a prediction block of the current image block.

In this application, the second identifier is used to indicate whether the current image block uses the corresponding fusion mode. The first identification may include, but is not limited to: one or more of a regular _ merge _ flag, an mmvd _ merge _ flag, a merge _ sublock _ flag, a ciip _ flag, a merge _ triangle _ flag, and the like.

The merge _ triangle _ flag may be a MergeTriangleFlag.

In the application, on the premise that the decoder determines that the current image block uses the fusion mode to perform inter-frame prediction, if the current image block allows the current fusion mode to be used and the current image block allows the fusion mode except the current fusion mode among the K alternative fusion modes to be used, the decoder uses the current fusion mode to perform inter-frame prediction on the current image block according to the indication of the value of the first identifier of the current image block obtained by analyzing in the code stream so as to obtain the prediction block of the current image block, and does not need to analyze the values of the first identifiers of the fusion modes except the current fusion mode among the K alternative fusion modes, so that the analysis redundancy of the fusion syntax elements is removed, the decoding complexity is reduced to a certain extent, and the decoding efficiency is improved.

Based on the first aspect, in some possible embodiments, the method further includes: and under the condition that the current image block does not allow the use of a fusion mode except the current fusion mode of the K alternative fusion modes, performing inter-frame prediction on the current image block by using the current fusion mode to obtain a prediction block of the current image block.

Based on the first aspect, in some possible embodiments, determining whether the current image block allows using each of the K candidate fusion modes includes: acquiring a prediction parameter corresponding to a current image block; determining whether the current image block allows to use each fusion mode or not according to the prediction parameters; wherein the prediction parameters include one or more of: an indication of a syntax element of a superior video processing unit related to the current image block, a size of the current image block, indication information indicating whether the current image block has a residual, a type of the superior video processing unit.

Based on the first aspect, in some possible embodiments, the upper level video processing unit includes a slice in which the current image block is located, a slice group in which the current image block is located, an image in which the current image block is located, or a video sequence in which the current image block is located.

Based on the first aspect, in some possible embodiments, in a case that the current image block allows the current fusion mode to be used, and the current image block allows a fusion mode other than the current fusion mode among the K candidate fusion modes to be used, parsing the code stream to obtain a value of the first identifier of the current fusion mode includes: under the condition that the current image block allows to use at least one of an MMVD mode, an SBMM, a CIIP mode and a TPM, analyzing and obtaining a regular _ merge _ flag value of a traditional fusion mode from a code stream; wherein, the regular _ merge _ flag is a first flag of the conventional fusion mode.

Based on the first aspect, in some possible embodiments, in a case that the current image block allows the current fusion mode to be used, and the current image block allows a fusion mode other than the current fusion mode among the K candidate fusion modes to be used, parsing the code stream to obtain a value of the first identifier of the current fusion mode includes: under the condition that the current image block allows to use the MMVD mode and the current image block allows to use at least one of the SBMM, the CIIP mode and the TPM, analyzing and obtaining the value of MMVD _ merge _ flag of the MMVD mode from the code stream; and the MMVD _ merge _ flag is a first identifier of the MMVD mode.

Based on the first aspect, in some possible embodiments, in a case that the current image block allows the current fusion mode to be used, and the current image block allows a fusion mode other than the current fusion mode among the K candidate fusion modes to be used, parsing the code stream to obtain a value of the first identifier of the current fusion mode includes: under the condition that the current image block allows the SBMM mode to be used and the current image block allows the CIIP mode and/or the TPM to be used, analyzing the code stream to obtain the value of merge _ sub _ flag of the SBMM; and the merge _ sublock _ flag is a first identifier of the SBMM.

Based on the first aspect, in some possible embodiments, in a case that the current image block allows the current fusion mode to be used, and the current image block allows a fusion mode other than the current fusion mode among the K candidate fusion modes to be used, parsing the code stream to obtain a value of the first identifier of the current fusion mode includes: under the condition that the current image block allows using the CIIP mode and the TPM, analyzing and obtaining a value of the CIIP _ flag of the CIIP mode from the code stream; and the CIIP _ flag is a first identifier of the CIIP mode.

Based on the first aspect, in some possible embodiments, the method further includes: and when the current image block does not allow the current fusion mode to be used or the current image block does not allow the fusion mode except the current fusion mode in the K candidate fusion modes to be used, obtaining the value of the first identifier of the current fusion mode through derivation.

Based on the first aspect, in some possible embodiments, the method further includes: and when the value of the first identifier of the current fusion mode cannot be obtained by analyzing the code stream, the value of the first identifier of the current fusion mode is obtained by deduction.

Based on the first aspect, in some possible embodiments, the current fusion mode is a conventional fusion mode, and obtaining a value of a first identifier of the current fusion mode by derivation includes: setting general _ merge _ flag to the value of regular _ merge _ flag; or, setting the value of regular _ merge _ flag to a first value; the general _ merge _ flag is used to indicate whether an inter prediction parameter of a current image block is obtained from an adjacent inter prediction block, and the regular _ merge _ flag is a first identifier of a conventional fusion mode.

Based on the first aspect, in some possible embodiments, the current fusion mode is an MMVD mode, and the value of the first flag MMVD _ merge _ flag of the MMVD mode is set to a first value when the first derivation condition is satisfied; wherein the first derivation condition includes: the current image block allows the MMVD mode to be used.

Based on the first aspect, in some possible embodiments, the obtaining, by deriving the value of the first identifier of the current fusion mode, that the current fusion mode is SBMM includes: under the condition that a second derivation condition is met, setting the value of a first identification merge _ sublock _ flag of the SBMM as a first value; wherein the second derivation condition includes: the current image block allows SBMM to be used.

Based on the first aspect, in some possible embodiments, the obtaining, by derivation, a value of a first identifier of the current fusion mode is that the current fusion mode is a CIIP mode, and includes: under the condition that a third derivation condition is met, setting the value of a first identifier CIIP _ flag of the CIIP mode as a first value; wherein the third derivation condition includes: the current tile allows the CIIP mode to be used.

Based on the first aspect, in some possible embodiments, the obtaining, by deriving the value of the first identifier of the current fusion mode, that the current fusion mode is a TPM includes: under the condition that a fourth derivation condition is met, setting the value of a first identification merge _ triangle _ flag of the TPM to be a first value; wherein the fourth derivation condition includes: the current image block allows the TPM to be used.

The merge _ triangle _ flag may be a MergeTriangleFlag.

Based on the first aspect, in some possible embodiments, the K candidate fusion modes include a plurality of: traditional fusion mode, MMVD mode, SBMM, CIIP mode, TPM.

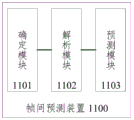

In a second aspect, the present application provides an inter-frame prediction apparatus, which can be applied in a video decoder. The apparatus may include: the determining module is used for determining whether the current image block allows each of K alternative fusion modes to be used or not after determining that the current image block uses the fusion mode for inter-frame prediction, wherein K is a positive integer greater than or equal to 2; the analysis module is used for analyzing and obtaining a value of a first identifier of the current fusion mode from the code stream under the condition that the current image block allows the current fusion mode to be used and the current image block allows the fusion mode except the current fusion mode in the K alternative fusion modes to be used; and the prediction module is used for performing inter prediction on the current image block by using the current fusion mode to obtain a prediction block of the current image block under the condition that the value of the first identifier indicates that the fusion mode for performing inter prediction on the current image block is the current fusion mode.

Based on the second aspect, in some possible embodiments, the prediction module is further configured to perform inter prediction on the current image block using the current fusion mode to obtain a prediction block of the current image block, in a case that the current image block does not allow the use of a fusion mode other than the current fusion mode from the K candidate fusion modes.

Based on the second aspect, in some possible embodiments, the determining module is configured to obtain a prediction parameter corresponding to a current image block; determining whether the current image block allows to use each fusion mode or not according to the prediction parameters; wherein the prediction parameters include one or more of: an indication of a syntax element of a superior video processing unit related to the current image block, a size of the current image block, indication information indicating whether the current image block has a residual, a type of the superior video processing unit.

Based on the second aspect, in some possible embodiments, the superior video processing unit includes a slice in which the current image block is located, a slice group in which the current image block is located, an image in which the current image block is located, or a video sequence in which the current image block is located.

Based on the second aspect, in some possible embodiments, the parsing module is configured to, in a case that the current tile block allows to use at least one of the MMVD mode, the SBMM mode, the CIIP mode, and the TPM, parse the current tile block to obtain a value of a regular _ merge _ flag in the conventional fusion mode; wherein, the regular _ merge _ flag is a first flag of the conventional fusion mode.

Based on the second aspect, in some possible embodiments, the parsing module is configured to, in a case that the MMVD mode is allowed to be used by the current tile, and the current tile is allowed to use at least one of the SBMM, the CIIP mode, and the TPM, parse the MMVD _ merge _ flag value of the MMVD mode from the bitstream; and the MMVD _ merge _ flag is a first identifier of the MMVD mode.

Based on the second aspect, in some possible embodiments, the parsing module is configured to, in a case that the current tile allows the SBMM mode to be used, and the current tile allows the CIIP mode and/or the TPM, parse the bitstream to obtain a value of merge _ sub _ flag of the SBMM; and the merge _ sublock _ flag is a first identifier of the SBMM.

Based on the second aspect, in some possible embodiments, the parsing module is configured to, in a case that the current tile allows to use the CIIP mode and the TPM, parse the value of the CIIP _ flag of the CIIP mode from the code stream; and the CIIP _ flag is a first identifier of the CIIP mode.

Based on the second aspect, in some possible embodiments, the apparatus further includes: and the derivation module is used for obtaining the value of the first identifier of the current fusion mode through derivation when the current image block does not allow the current fusion mode to be used or the current image block does not allow the fusion mode except the current fusion mode in the K alternative fusion modes to be used.

Based on the second aspect, in some possible embodiments, the apparatus further includes: and the derivation module is used for obtaining the value of the first identifier of the current fusion mode through derivation when the value of the first identifier of the current fusion mode cannot be obtained through analysis from the code stream.

Based on the second aspect, in some possible embodiments, the current fusion mode is a conventional fusion mode, and the derivation module is configured to set general _ merge _ flag to a value of regular _ merge _ flag; or, setting the value of regular _ merge _ flag to a first value; the general _ merge _ flag is used to indicate whether an inter prediction parameter of a current image block is obtained from an adjacent inter prediction block, and the regular _ merge _ flag is a first identifier of a conventional fusion mode.

Based on the second aspect, in some possible embodiments, the current fusion mode is an MMVD mode, and the derivation module is configured to set a value of a first flag MMVD _ merge _ flag of the MMVD mode to a first value if a first derivation condition is satisfied; wherein the first derivation condition includes: the current image block allows the MMVD mode to be used.

Based on the second aspect, in some possible embodiments, when the current fusion mode is the SBMM, the deriving module is configured to set a value of a first flag merge _ sublock _ flag of the SBMM to a first value if a second deriving condition is satisfied; wherein the second derivation condition includes: the current image block allows SBMM to be used.

Based on the second aspect, in some possible embodiments, the current merging mode is a CIIP mode, and the deriving module is configured to set a value of a first flag CIIP _ flag of the CIIP mode to a first value when a third deriving condition is satisfied; wherein the third derivation condition includes: the current tile allows the CIIP mode to be used.

Based on the second aspect, in some possible embodiments, the current fusion mode is a TPM mode, and the deriving module is configured to set a value of a first flag merge _ triangle _ flag of the TPM to be a first value if a fourth deriving condition is met; wherein the fourth derivation condition includes: the current image block allows the TPM to be used.

Based on the second aspect, in some possible embodiments, the K candidate fusion modes include a plurality of: traditional fusion mode, MMVD mode, SBMM, CIIP mode, TPM.

In a third aspect, the present application provides a video decoder for decoding image blocks from a bitstream, comprising: the entropy decoding module is used for decoding an index identifier from the code stream, and the index identifier is used for indicating target candidate motion information of the current decoded image block; the inter-frame prediction apparatus according to any one of the above second aspects, wherein the inter-frame prediction apparatus is configured to predict motion information of a currently decoded image block based on target candidate motion information indicated by the index identifier, and determine a predicted pixel value of the currently decoded image block based on the motion information of the currently decoded image block; a reconstruction module for reconstructing the current decoded image block based on the predicted pixel values.

In a fourth aspect, the present application provides an apparatus for decoding video data, the apparatus comprising: the memory is used for storing video data in a code stream form; and the video decoder is used for decoding the video data from the code stream.

In a fifth aspect, the present application provides a decoding device comprising: a non-volatile memory and a processor coupled to each other, the processor calling program code stored in the memory to perform part or all of the steps of any one of the methods of the first aspect.

In a sixth aspect, the present application provides a computer readable storage medium storing program code, wherein the program code comprises instructions for performing some or all of the steps of any one of the methods of the first aspect.

In a seventh aspect, the present application provides a computer program product for causing a computer to perform some or all of the steps of any one of the methods of the first aspect when the computer program product runs on the computer.

It should be understood that the second to seventh aspects of the present application are consistent with the technical solutions of the first aspect of the present application, and similar advantageous effects are obtained in all aspects and corresponding possible implementations, and thus, detailed descriptions are omitted.

Drawings

In order to more clearly illustrate the technical solutions in the embodiments or the background art of the present application, the drawings required to be used in the embodiments or the background art of the present application will be described below.

FIG. 1A is a block diagram of an example of a video encoding and decoding system 10 for implementing embodiments of the present application;

FIG. 1B is a block diagram of an example of a video coding system 40 for implementing embodiments of the present application;

FIG. 2 is a block diagram of an example structure of an encoder 20 for implementing embodiments of the present application;

FIG. 3 is a block diagram of an example structure of a decoder 30 for implementing embodiments of the present application;

FIG. 4 is a block diagram of an example of a video coding apparatus 400 for implementing an embodiment of the present application;

FIG. 5 is a block diagram of another example of an encoding device or a decoding device for implementing embodiments of the present application;

FIG. 6 is a schematic diagram of a spatial domain and a temporal domain of a current image block in an embodiment of the present application;

FIG. 7A is a diagram of an MMVD search point in an embodiment of the present application;

FIG. 7B is a schematic diagram of an MMVD search process in an embodiment of the present application;

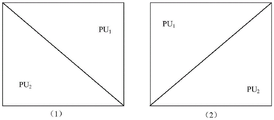

FIG. 8 is a schematic diagram illustrating a division of a current image block in an embodiment of the present application;

FIG. 9 is a first flowchart illustrating an inter-frame prediction method according to an embodiment of the present application;

FIG. 10 is a flowchart illustrating a second inter-frame prediction method according to an embodiment of the present application;

fig. 11 is a schematic structural diagram of an inter prediction apparatus in an embodiment of the present application.

Detailed Description

The embodiments of the present application will be described below with reference to the drawings. In the following description, reference is made to the accompanying drawings which form a part hereof and in which is shown by way of illustration specific aspects of embodiments of the application or in which specific aspects of embodiments of the application may be employed. It should be understood that embodiments of the present application may be used in other ways and may include structural or logical changes not depicted in the drawings. The following detailed description is, therefore, not to be taken in a limiting sense, and the scope of the present application is defined by the appended claims. For example, it should be understood that the disclosure in connection with the described methods may equally apply to the corresponding apparatus or system for performing the methods, and vice versa. For example, if one or more particular method steps are described, the corresponding apparatus may comprise one or more units, such as functional units, to perform the described one or more method steps (e.g., a unit performs one or more steps, or multiple units, each of which performs one or more of the multiple steps), even if such one or more units are not explicitly described or illustrated in the figures. On the other hand, for example, if a particular apparatus is described based on one or more units, such as functional units, the corresponding method may comprise one step to perform the functionality of the one or more units (e.g., one step performs the functionality of the one or more units, or multiple steps, each of which performs the functionality of one or more of the plurality of units), even if such one or more steps are not explicitly described or illustrated in the figures. Further, it is to be understood that features of the various exemplary embodiments and/or aspects described herein may be combined with each other, unless explicitly stated otherwise.

The technical scheme related to the embodiment of the application can be applied to the existing video coding standards (such as H.264, HEVC and the like), and can also be applied to the future video coding standards (such as H.266 standard). The terminology used in the description of the embodiments section of the present application is for the purpose of describing particular embodiments of the present application only and is not intended to be limiting of the present application. Some concepts that may be involved in embodiments of the present application are briefly described below.

Video coding generally refers to processing a sequence of pictures that form a video or video sequence. In the field of video coding, the terms "picture", "frame" or "image" may be used as synonyms. Video encoding as used herein means video encoding or video decoding. Video encoding is performed on the source side, typically including processing (e.g., by compressing) the original video picture to reduce the amount of data required to represent the video picture for more efficient storage and/or transmission. Video decoding is performed at the destination side, typically involving inverse processing with respect to the encoder, to reconstruct the video pictures. Embodiments are directed to video picture "encoding" to be understood as referring to "encoding" or "decoding" of a video sequence. The combination of the encoding part and the decoding part is also called codec (encoding and decoding).

A video sequence comprises a series of images (pictures) which are further divided into slices (slices) which are further divided into blocks (blocks). Video coding performs the coding process in units of blocks, and in some new video coding standards, the concept of blocks is further extended. For example, in the h.264 standard, there is a Macroblock (MB), which may be further divided into a plurality of prediction blocks (partitions) that can be used for predictive coding. In the High Efficiency Video Coding (HEVC) standard, basic concepts such as a Coding Unit (CU), a Prediction Unit (PU), and a Transform Unit (TU) are adopted, and various block units are functionally divided, and a brand new tree-based structure is adopted for description. For example, a video coding standard divides a frame of image into non-overlapping Coding Tree Units (CTUs), and then divides one CTU into a plurality of sub-nodes, which can be divided into smaller sub-nodes according to a Quadtree (QT), and the smaller sub-nodes can be further divided, thereby forming a quadtree structure. If the node is no longer partitioned, it is called a CU. A CU is a basic unit for dividing and encoding an encoded image. There is also a similar tree structure for PU and TU, and PU may correspond to a prediction block, which is the basic unit of predictive coding. The CU is further partitioned into PUs according to a partitioning pattern. A TU may correspond to a transform block, which is a basic unit for transforming a prediction residual. However, CU, PU and TU are basically concepts of blocks (or image blocks).

For example, in HEVC, a CTU is split into multiple CUs by using a quadtree structure represented as a coding tree. A decision is made at the CU level whether to encode a picture region using inter-picture (temporal) or intra-picture (spatial) prediction. Each CU may be further split into one, two, or four PUs according to the PU split type. The same prediction process is applied within one PU and the relevant information is transmitted to the decoder on a PU basis. After obtaining the residual block by applying a prediction process based on the PU split type, the CU may be partitioned into Transform Units (TUs) according to other quadtree structures similar to the coding tree used for the CU. In recent developments of video compression techniques, the coding blocks are partitioned using Quad-tree and binary tree (QTBT) partition frames. In the QTBT block structure, a CU may be square or rectangular in shape.

Herein, for convenience of description and understanding, an image block to be encoded in a currently encoded image may be referred to as a current block, e.g., in encoding, referring to a block currently being encoded; in decoding, refers to the block currently being decoded. A decoded image block in a reference picture used for predicting the current block is referred to as a reference block, i.e. a reference block is a block that provides a reference signal for the current block, wherein the reference signal represents pixel values within the image block. A block in the reference picture that provides a prediction signal for the current block may be a prediction block, wherein the prediction signal represents pixel values or sample values or a sampled signal within the prediction block. For example, after traversing multiple reference blocks, a best reference block is found that will provide prediction for the current block, which is called a prediction block.

In the case of lossless video coding, the original video picture can be reconstructed, i.e., the reconstructed video picture has the same quality as the original video picture (assuming no transmission loss or other data loss during storage or transmission). In the case of lossy video coding, the amount of data needed to represent the video picture is reduced by performing further compression, e.g., by quantization, while the decoder side cannot fully reconstruct the video picture, i.e., the quality of the reconstructed video picture is lower or worse than the quality of the original video picture.

Several video coding standards of h.261 belong to the "lossy hybrid video codec" (i.e., the combination of spatial and temporal prediction in the sample domain with 2D transform coding in the transform domain for applying quantization). Each picture of a video sequence is typically partitioned into non-overlapping sets of blocks, typically encoded at the block level. In other words, the encoder side typically processes, i.e., encodes, video at the block (video block) level, e.g., generates a prediction block by spatial (intra-picture) prediction and temporal (inter-picture) prediction, subtracts the prediction block from the current block (currently processed or block to be processed) to obtain a residual block, transforms the residual block and quantizes the residual block in the transform domain to reduce the amount of data to be transmitted (compressed), while the decoder side applies the inverse processing portion relative to the encoder to the encoded or compressed block to reconstruct the current image block for representation. In addition, the encoder replicates the decoder processing loop such that the encoder and decoder generate the same prediction (e.g., intra-prediction and inter-prediction) and/or reconstruction for processing, i.e., encoding, subsequent blocks.

The system architecture to which the embodiments of the present application apply is described below. Referring to fig. 1A, fig. 1A schematically shows a block diagram of a video encoding and decoding system 10 to which an embodiment of the present application is applied. As shown in fig. 1A, video encoding and decoding system 10 may include a source device 12 and a destination device 14, source device 12 generating encoded video data, and thus source device 12 may be referred to as a video encoding apparatus. Destination device 14 may decode the encoded video data generated by source device 12, and thus destination device 14 may be referred to as a video decoding apparatus. Various implementations of source apparatus 12, destination apparatus 14, or both may include one or more processors and memory coupled to the one or more processors. The memory can include, but is not limited to, RAM, ROM, EEPROM, flash memory, or any other medium that can be used to store desired program code in the form of instructions or data structures that can be accessed by a computer, as described herein. Source apparatus 12 and destination apparatus 14 may comprise a variety of devices, including desktop computers, mobile computing devices, notebook (e.g., laptop) computers, tablet computers, set-top boxes, telephone handsets such as so-called "smart" phones, televisions, cameras, display devices, digital media players, video game consoles, on-board computers, wireless communication devices, or the like.

Although fig. 1A depicts source apparatus 12 and destination apparatus 14 as separate apparatuses, an apparatus embodiment may also include the functionality of both source apparatus 12 and destination apparatus 14 or both, i.e., source apparatus 12 or corresponding functionality and destination apparatus 14 or corresponding functionality. In such embodiments, source device 12 or corresponding functionality and destination device 14 or corresponding functionality may be implemented using the same hardware and/or software, or using separate hardware and/or software, or any combination thereof.

A communication connection may be made between source device 12 and destination device 14 over link 13, and destination device 14 may receive encoded video data from source device 12 via link 13. Link 13 may comprise one or more media or devices capable of moving encoded video data from source apparatus 12 to destination apparatus 14. In one example, link 13 may include one or more communication media that enable source device 12 to transmit encoded video data directly to destination device 14 in real-time. In this example, source apparatus 12 may modulate the encoded video data according to a communication standard, such as a wireless communication protocol, and may transmit the modulated video data to destination apparatus 14. The one or more communication media may include wireless and/or wired communication media such as a Radio Frequency (RF) spectrum or one or more physical transmission lines. The one or more communication media may form part of a packet-based network, such as a local area network, a wide area network, or a global network (e.g., the internet). The one or more communication media may include routers, switches, base stations, or other apparatuses that facilitate communication from source apparatus 12 to destination apparatus 14.

the picture source 16, which may include or be any type of picture capturing device, may be used, for example, to capture real-world pictures, and/or any type of picture or comment generating device (for screen content encoding, some text on the screen is also considered part of the picture or image to be encoded), such as a computer graphics processor for generating computer animated pictures, or any type of device for obtaining and/or providing real-world pictures, computer animated pictures (e.g., screen content, Virtual Reality (VR) pictures), and/or any combination thereof (e.g., Augmented Reality (AR) pictures). The picture source 16 may be a camera for capturing pictures or a memory for storing pictures, and the picture source 16 may also include any kind of (internal or external) interface for storing previously captured or generated pictures and/or for obtaining or receiving pictures. When picture source 16 is a camera, picture source 16 may be, for example, an integrated camera local or integrated in the source device; when the picture source 16 is a memory, the picture source 16 may be an integrated memory local or integrated, for example, in the source device. When the picture source 16 comprises an interface, the interface may for example be an external interface receiving pictures from an external video source, for example an external picture capturing device such as a camera, an external memory or an external picture generating device, for example an external computer graphics processor, a computer or a server. The interface may be any kind of interface according to any proprietary or standardized interface protocol, e.g. a wired or wireless interface, an optical interface.

The picture can be regarded as a two-dimensional array or matrix of pixel elements (picture elements). The pixels in the array may also be referred to as sampling points. The number of sampling points of the array or picture in the horizontal and vertical directions (or axes) defines the size and/or resolution of the picture. To represent color, three color components are typically employed, i.e., a picture may be represented as or contain three sample arrays. For example, in RBG format or color space, a picture includes corresponding arrays of red, green, and blue samples. However, in video coding, each pixel is typically represented in a luminance/chrominance format or color space, e.g. for pictures in YUV format, comprising a luminance component (sometimes also indicated with L) indicated by Y and two chrominance components indicated by U and V. The luminance (luma) component Y represents luminance or gray level intensity (e.g., both are the same in a gray scale picture), while the two chrominance (chroma) components U and V represent chrominance or color information components. Accordingly, a picture in YUV format includes a luma sample array of luma sample values (Y), and two chroma sample arrays of chroma values (U and V). Pictures in RGB format can be converted or transformed into YUV format and vice versa, a process also known as color transformation or conversion. If the picture is black and white, the picture may include only an array of luminance samples. In the embodiment of the present application, the pictures transmitted from the picture source 16 to the picture processor may also be referred to as raw picture data 17.

An encoder 20 (or video encoder 20) for receiving the pre-processed picture data 19, processing the pre-processed picture data 19 with a relevant prediction mode (such as the prediction mode in various embodiments herein), thereby providing encoded picture data 21 (structural details of the encoder 20 will be described further below based on fig. 2 or fig. 4 or fig. 5). In some embodiments, the encoder 20 may be configured to perform various embodiments described hereinafter to implement the application of the chroma block prediction method described in the embodiments of the present application on the encoding side.

A communication interface 22, which may be used to receive encoded picture data 21 and may transmit encoded picture data 21 over link 13 to destination device 14 or any other device (e.g., memory) for storage or direct reconstruction, which may be any device for decoding or storage. Communication interface 22 may, for example, be used to encapsulate encoded picture data 21 into a suitable format, such as a data packet, for transmission over link 13.

Destination device 14 includes a decoder 30, and optionally destination device 14 may also include a communication interface 28, a picture post-processor 32, and a display device 34. Described below, respectively:

Both communication interface 28 and communication interface 22 may be configured as a one-way communication interface or a two-way communication interface, and may be used, for example, to send and receive messages to establish a connection, acknowledge and exchange any other information related to a communication link and/or data transfer, such as an encoded picture data transfer.

A decoder 30 (otherwise referred to as decoder 30) for receiving the encoded picture data 21 and providing decoded picture data 31 or decoded pictures 31 (structural details of the decoder 30 will be described further below based on fig. 3 or fig. 4 or fig. 5). In some embodiments, the decoder 30 may be configured to perform various embodiments described hereinafter to implement the application of the chroma block prediction method described in the embodiments of the present application on the decoding side.

A picture post-processor 32 for performing post-processing on the decoded picture data 31 (also referred to as reconstructed picture data) to obtain post-processed picture data 33. Post-processing performed by picture post-processor 32 may include: color format conversion (e.g., from YUV format to RGB format), toning, trimming or resampling, or any other process may also be used to transmit post-processed picture data 33 to display device 34.

A display device 34 for receiving the post-processed picture data 33 for displaying pictures to, for example, a user or viewer. Display device 34 may be or may include any type of display for presenting the reconstructed picture, such as an integrated or external display or monitor. For example, the display may include a Liquid Crystal Display (LCD), an Organic Light Emitting Diode (OLED) display, a plasma display, a projector, a micro LED display, a liquid crystal on silicon (LCoS), a Digital Light Processor (DLP), or any other display of any kind.

Although fig. 1A depicts source device 12 and destination device 14 as separate devices, device embodiments may also include the functionality of both source device 12 and destination device 14 or both, i.e., source device 12 or corresponding functionality and destination device 14 or corresponding functionality. In such embodiments, source device 12 or corresponding functionality and destination device 14 or corresponding functionality may be implemented using the same hardware and/or software, or using separate hardware and/or software, or any combination thereof.

It will be apparent to those skilled in the art from this description that the existence and (exact) division of the functionality of the different elements, or source device 12 and/or destination device 14 as shown in fig. 1A, may vary depending on the actual device and application. Source device 12 and destination device 14 may comprise any of a variety of devices, including any type of handheld or stationary device, such as a notebook or laptop computer, a mobile phone, a smartphone, a tablet or tablet computer, a camcorder, a desktop computer, a set-top box, a television, a camera, an in-vehicle device, a display device, a digital media player, a video game console, a video streaming device (e.g., a content service server or a content distribution server), a broadcast receiver device, a broadcast transmitter device, etc., and may not use or use any type of operating system.

Both encoder 20 and decoder 30 may be implemented as any of a variety of suitable circuits, such as one or more microprocessors, Digital Signal Processors (DSPs), application-specific integrated circuits (ASICs), field-programmable gate arrays (FPGAs), discrete logic, hardware, or any combinations thereof. If the techniques are implemented in part in software, an apparatus may store instructions of the software in a suitable non-transitory computer-readable storage medium and may execute the instructions in hardware using one or more processors to perform the techniques of this disclosure. Any of the foregoing, including hardware, software, a combination of hardware and software, etc., may be considered one or more processors.

In some cases, the video encoding and decoding system 10 shown in fig. 1A is merely an example, and the techniques of embodiments of the present application may be applied to video encoding settings (e.g., video encoding or video decoding) that do not necessarily involve any data communication between the encoding and decoding devices. In other examples, the data may be retrieved from local storage, streamed over a network, and so on. A video encoding device may encode and store data to a memory, and/or a video decoding device may retrieve and decode data from a memory. In some examples, the encoding and decoding are performed by devices that do not communicate with each other, but merely encode data to and/or retrieve data from memory and decode data.

Referring to fig. 1B, fig. 1B is an illustrative diagram of an example of a video coding system 40 including the encoder 20 of fig. 2 and/or the decoder 30 of fig. 3, according to an example embodiment. Video coding system 40 may implement a combination of the various techniques of the embodiments of the present application. In the illustrated embodiment, video coding system 40 may include an imaging device 41, an encoder 20, a decoder 30 (and/or a video codec implemented by logic 47 of a processing unit 46), an antenna 42, one or more processors 43, one or more memories 44, and/or a display device 45.

As shown in fig. 1B, the imaging device 41, the antenna 42, the processing unit 46, the logic circuit 47, the encoder 20, the decoder 30, the processor 43, the memory 44, and/or the display device 45 can communicate with each other. As discussed, although video coding system 40 is depicted with encoder 20 and decoder 30, in different examples video coding system 40 may include only encoder 20 or only decoder 30.

In some instances, antenna 42 may be used to transmit or receive an encoded bitstream of video data. Additionally, in some instances, display device 45 may be used to present video data. In some examples, logic 47 may be implemented by processing unit 46. The processing unit 46 may comprise application-specific integrated circuit (ASIC) logic, a graphics processor, a general-purpose processor, or the like. Video decoding system 40 may also include an optional processor 43, which optional processor 43 similarly may include application-specific integrated circuit (ASIC) logic, a graphics processor, a general-purpose processor, or the like. In some examples, the logic 47 may be implemented in hardware, such as video encoding specific hardware, and the processor 43 may be implemented in general purpose software, an operating system, and so on. In addition, the memory 44 may be any type of memory, such as a volatile memory (e.g., Static Random Access Memory (SRAM), Dynamic Random Access Memory (DRAM), etc.) or a non-volatile memory (e.g., flash memory, etc.), and so on. In a non-limiting example, storage 44 may be implemented by a speed cache memory. In some instances, logic circuitry 47 may access memory 44 (e.g., to implement an image buffer). In other examples, logic 47 and/or processing unit 46 may include memory (e.g., cache, etc.) for implementing image buffers, etc.

In some examples, encoder 20, implemented by logic circuitry, may include an image buffer (e.g., implemented by processing unit 46 or memory 44) and a graphics processing unit (e.g., implemented by processing unit 46). The graphics processing unit may be communicatively coupled to the image buffer. The graphics processing unit may include an encoder 20 implemented by logic circuitry 47 to implement the various modules discussed with reference to fig. 2 and/or any other encoder system or subsystem described herein. Logic circuitry may be used to perform various operations discussed herein.

In some examples, decoder 30 may be implemented by logic circuitry 47 in a similar manner to implement the various modules discussed with reference to decoder 30 of fig. 3 and/or any other decoder system or subsystem described herein. In some examples, logic circuit implemented decoder 30 may include an image buffer (implemented by processing unit 43 or memory 44) and a graphics processing unit (e.g., implemented by processing unit 46). The graphics processing unit may be communicatively coupled to the image buffer. The graphics processing unit may include a decoder 30 implemented by logic circuitry 47 to implement the various modules discussed with reference to fig. 3 and/or any other decoder system or subsystem described herein.

In some instances, antenna 42 may be used to receive an encoded bitstream of video data. As discussed, the encoded bitstream may include data related to the encoded video frame, indicators, index values, mode selection data, etc., discussed herein, such as data related to the encoding partition (e.g., transform coefficients or quantized transform coefficients, (as discussed) optional indicators, and/or data defining the encoding partition). Video coding system 40 may also include a decoder 30 coupled to antenna 42 and used to decode the encoded bitstream. The display device 45 is used to present video frames.

It should be understood that for the example described with reference to encoder 20 in the embodiments of the present application, decoder 30 may be used to perform the reverse process. With respect to signaling prediction parameters, decoder 30 may be configured to receive and parse such prediction parameters and decode the associated video data accordingly. In some examples, encoder 20 may entropy encode the prediction parameters into an encoded video bitstream. In such instances, decoder 30 may parse such prediction parameters and decode the relevant video data accordingly.

It should be noted that the optimization processing method for the fused motion vector difference technique described in the embodiment of the present application is mainly used for an inter-frame prediction process, which exists in both the encoder 20 and the decoder 30, and the encoder 20 and the decoder 30 in the embodiment of the present application may be a video standard protocol such as h.263, h.264, HEVV, MPEG-2, MPEG-4, VP8, VP9, or a codec corresponding to a next-generation video standard protocol (e.g., h.266).

Referring to fig. 2, fig. 2 shows a schematic/conceptual block diagram of an example of an encoder 20 for implementing embodiments of the present application. In the example of fig. 2, encoder 20 includes a residual calculation unit 204, a transform processing unit 206, a quantization unit 208, an inverse quantization unit 210, an inverse transform processing unit 212, a reconstruction unit 214, a buffer 216, a loop filter unit 220, a Decoded Picture Buffer (DPB) 230, a prediction processing unit 260, and an entropy encoding unit 270. Prediction processing unit 260 may include inter prediction unit 244, intra prediction unit 254, and mode selection unit 262. Inter prediction unit 244 may include a motion estimation unit and a motion compensation unit (not shown). The encoder 20 shown in fig. 2 may also be referred to as a hybrid video encoder or a video encoder according to a hybrid video codec.

For example, the residual calculation unit 204, the transform processing unit 206, the quantization unit 208, the prediction processing unit 260, and the entropy encoding unit 270 form a forward signal path of the encoder 20, and, for example, the inverse quantization unit 210, the inverse transform processing unit 212, the reconstruction unit 214, the buffer 216, the loop filter 220, the Decoded Picture Buffer (DPB) 230, the prediction processing unit 260 form a backward signal path of the encoder, wherein the backward signal path of the encoder corresponds to a signal path of a decoder (see the decoder 30 in fig. 3).

The encoder 20 receives, e.g., via an input 202, a picture 201 or an image block 203 of a picture 201, e.g., a picture in a sequence of pictures forming a video or a video sequence. Image block 203 may also be referred to as a current picture block or a picture block to be encoded, and picture 201 may be referred to as a current picture or a picture to be encoded (especially when the current picture is distinguished from other pictures in video encoding, such as previously encoded and/or decoded pictures in the same video sequence, i.e., a video sequence that also includes the current picture).

An embodiment of the encoder 20 may comprise a partitioning unit (not shown in fig. 2) for partitioning the picture 201 into a plurality of blocks, e.g. image blocks 203, typically into a plurality of non-overlapping blocks. The partitioning unit may be used to use the same block size for all pictures in a video sequence and a corresponding grid defining the block size, or to alter the block size between pictures or subsets or groups of pictures and partition each picture into corresponding blocks.

In one example, prediction processing unit 260 of encoder 20 may be used to perform any combination of the above-described segmentation techniques.

Like picture 201, image block 203 is also or can be considered as a two-dimensional array or matrix of sample points having sample values, although its size is smaller than picture 201. In other words, the image block 203 may comprise, for example, one sample array (e.g., a luma array in the case of a black and white picture 201) or three sample arrays (e.g., a luma array and two chroma arrays in the case of a color picture) or any other number and/or class of arrays depending on the color format applied. The number of sampling points in the horizontal and vertical directions (or axes) of the image block 203 defines the size of the image block 203.

The encoder 20 as shown in fig. 2 is used to encode a picture 201 block by block, e.g. performing encoding and prediction for each image block 203.

The residual calculation unit 204 is configured to calculate a residual block 205 based on the picture image block 203 and the prediction block 265 (further details of the prediction block 265 are provided below), e.g. by subtracting sample values of the prediction block 265 from sample values of the picture image block 203 sample by sample (pixel by pixel) to obtain the residual block 205 in the sample domain.

The transform processing unit 206 is configured to apply a transform, such as a Discrete Cosine Transform (DCT) or a Discrete Sine Transform (DST), on the sample values of the residual block 205 to obtain transform coefficients 207 in a transform domain. The transform coefficients 207 may also be referred to as transform residual coefficients and represent the residual block 205 in the transform domain.

The transform processing unit 206 may be used to apply integer approximations of DCT/DST, such as the transform specified for HEVC/h.265. Such integer approximations are typically scaled by some factor compared to the orthogonal DCT transform. To maintain the norm of the residual block processed by the forward transform and the inverse transform, an additional scaling factor is applied as part of the transform process. The scaling factor is typically selected based on certain constraints, e.g., the scaling factor is a power of 2 for a shift operation, a trade-off between bit depth of transform coefficients, accuracy and implementation cost, etc. For example, a specific scaling factor may be specified on the decoder 30 side for the inverse transform by, for example, inverse transform processing unit 212 (and on the encoder 20 side for the corresponding inverse transform by, for example, inverse transform processing unit 212), and correspondingly, a corresponding scaling factor may be specified on the encoder 20 side for the forward transform by transform processing unit 206.

The inverse quantization unit 210 is configured to apply inverse quantization of the quantization unit 208 on the quantized coefficients to obtain inverse quantized coefficients 211, e.g., to apply an inverse quantization scheme of the quantization scheme applied by the quantization unit 208 based on or using the same quantization step as the quantization unit 208. The dequantized coefficients 211 may also be referred to as dequantized residual coefficients 211, corresponding to transform coefficients 207, although the loss due to quantization is typically not the same as the transform coefficients.

The inverse transform processing unit 212 is configured to apply an inverse transform of the transform applied by the transform processing unit 206, for example, an inverse Discrete Cosine Transform (DCT) or an inverse Discrete Sine Transform (DST), to obtain an inverse transform block 213 in the sample domain. The inverse transform block 213 may also be referred to as an inverse transform dequantized block 213 or an inverse transform residual block 213.

The reconstruction unit 214 (e.g., summer 214) is used to add the inverse transform block 213 (i.e., the reconstructed residual block 213) to the prediction block 265 to obtain the reconstructed block 215 in the sample domain, e.g., to add sample values of the reconstructed residual block 213 to sample values of the prediction block 265.

Optionally, a buffer unit 216 (or simply "buffer" 216), such as a line buffer 216, is used to buffer or store the reconstructed block 215 and corresponding sample values, for example, for intra prediction. In other embodiments, the encoder may be used to use the unfiltered reconstructed block and/or corresponding sample values stored in buffer unit 216 for any class of estimation and/or prediction, such as intra prediction.

For example, an embodiment of encoder 20 may be configured such that buffer unit 216 is used not only to store reconstructed blocks 215 for intra prediction 254, but also for loop filter unit 220 (not shown in fig. 2), and/or such that buffer unit 216 and decoded picture buffer unit 230 form one buffer, for example. Other embodiments may be used to use filtered block 221 and/or blocks or samples from decoded picture buffer 230 (neither shown in fig. 2) as input or basis for intra prediction 254.

The loop filter unit 220 (or simply "loop filter" 220) is used to filter the reconstructed block 215 to obtain a filtered block 221, so as to facilitate pixel transition or improve video quality. Loop filter unit 220 is intended to represent one or more loop filters, such as a deblocking filter, a sample-adaptive offset (SAO) filter, or other filters, such as a bilateral filter, an Adaptive Loop Filter (ALF), or a sharpening or smoothing filter, or a collaborative filter. Although loop filter unit 220 is shown in fig. 2 as an in-loop filter, in other configurations, loop filter unit 220 may be implemented as a post-loop filter. The filtered block 221 may also be referred to as a filtered reconstructed block 221. The decoded picture buffer 230 may store the reconstructed encoded block after the loop filter unit 220 performs a filtering operation on the reconstructed encoded block.

Embodiments of encoder 20 (correspondingly, loop filter unit 220) may be configured to output loop filter parameters (e.g., sample adaptive offset information), e.g., directly or after entropy encoding by entropy encoding unit 270 or any other entropy encoding unit, e.g., such that decoder 30 may receive and apply the same loop filter parameters for decoding.

Decoded Picture Buffer (DPB) 230 may be a reference picture memory that stores reference picture data for use by encoder 20 in encoding video data. DPB 230 may be formed from any of a variety of memory devices, such as Dynamic Random Access Memory (DRAM) including Synchronous DRAM (SDRAM), Magnetoresistive RAM (MRAM), Resistive RAM (RRAM), or other types of memory devices. The DPB 230 and the buffer 216 may be provided by the same memory device or separate memory devices. In a certain example, a Decoded Picture Buffer (DPB) 230 is used to store filtered blocks 221. Decoded picture buffer 230 may further be used to store other previous filtered blocks, such as previous reconstructed and filtered blocks 221, of the same current picture or of a different picture, such as a previous reconstructed picture, and may provide the complete previous reconstructed, i.e., decoded picture (and corresponding reference blocks and samples) and/or the partially reconstructed current picture (and corresponding reference blocks and samples), e.g., for inter prediction. In a certain example, if reconstructed block 215 is reconstructed without in-loop filtering, Decoded Picture Buffer (DPB) 230 is used to store reconstructed block 215.

Prediction processing unit 260, also referred to as block prediction processing unit 260, is used to receive or obtain image block 203 (current image block 203 of current picture 201) and reconstructed picture data, e.g., reference samples of the same (current) picture from buffer 216 and/or reference picture data 231 of one or more previously decoded pictures from decoded picture buffer 230, and to process such data for prediction, i.e., to provide prediction block 265, which may be inter-predicted block 245 or intra-predicted block 255.

The mode selection unit 262 may be used to select a prediction mode (e.g., intra or inter prediction mode) and/or a corresponding prediction block 245 or 255 used as the prediction block 265 to calculate the residual block 205 and reconstruct the reconstructed block 215.

Embodiments of mode selection unit 262 may be used to select prediction modes (e.g., from those supported by prediction processing unit 260) that provide the best match or the smallest residual (smallest residual means better compression in transmission or storage), or that provide the smallest signaling overhead (smallest signaling overhead means better compression in transmission or storage), or both. The mode selection unit 262 may be configured to determine a prediction mode based on Rate Distortion Optimization (RDO), i.e., select a prediction mode that provides the minimum rate distortion optimization, or select a prediction mode in which the associated rate distortion at least meets the prediction mode selection criteria.

The prediction processing performed by the example of the encoder 20 (e.g., by the prediction processing unit 260) and the mode selection performed (e.g., by the mode selection unit 262) will be explained in detail below.

As described above, the encoder 20 is configured to determine or select the best or optimal prediction mode from a set of (predetermined) prediction modes. The prediction mode set may include, for example, intra prediction modes and/or inter prediction modes.

The intra prediction mode set may include 35 different intra prediction modes, for example, non-directional modes such as DC (or mean) mode and planar mode, or directional modes as defined in h.265, or may include 67 different intra prediction modes, for example, non-directional modes such as DC (or mean) mode and planar mode, or directional modes as defined in h.266 under development.

In possible implementations, the set of inter prediction modes may include, for example, an Advanced Motion Vector (AMVP) mode and a merge (merge) mode depending on available reference pictures (i.e., at least partially decoded pictures stored in the DBP230, for example, as described above) and other inter prediction parameters, for example, depending on whether the entire reference picture or only a portion of the reference picture, such as a search window region of a region surrounding the current block, is used to search for a best matching reference block, and/or depending on whether pixel interpolation, such as half-pixel and/or quarter-pixel interpolation, is applied, for example. In a specific implementation, the inter prediction mode set may include an improved control point-based AMVP mode and an improved control point-based merge mode according to an embodiment of the present application. In one example, intra-prediction unit 254 may be used to perform any combination of the inter-prediction techniques described below.

In addition to the above prediction mode, embodiments of the present application may also apply a skip (skip) mode and/or a direct mode.

The prediction processing unit 260 may further be configured to partition the image block 203 into smaller block partitions or sub-blocks, for example, by iteratively using quad-tree (QT) partitions, binary-tree (BT) partitions, or triple-tree (TT) partitions, or any combination thereof, and to perform prediction, for example, for each of the block partitions or sub-blocks, wherein mode selection includes selecting a tree structure of the partitioned image block 203 and selecting a prediction mode to apply to each of the block partitions or sub-blocks.

The inter prediction unit 244 may include a Motion Estimation (ME) unit (not shown in fig. 2) and a Motion Compensation (MC) unit (not shown in fig. 2). The motion estimation unit is used to receive or obtain a picture image block 203 (current picture image block 203 of current picture 201) and a decoded picture 231, or at least one or more previously reconstructed blocks, e.g., reconstructed blocks of one or more other/different previously decoded pictures 231, for motion estimation. For example, the video sequence may comprise a current picture and a previously decoded picture 31, or in other words, the current picture and the previously decoded picture 31 may be part of, or form, a sequence of pictures forming the video sequence.

For example, the encoder 20 may be configured to select a reference block from a plurality of reference blocks of the same or different one of a plurality of other pictures and provide the reference picture and/or an offset (spatial offset) between a position (X, Y coordinates) of the reference block and a position of the current block to a motion estimation unit (not shown in fig. 2) as an inter prediction parameter. This offset is also called a Motion Vector (MV).