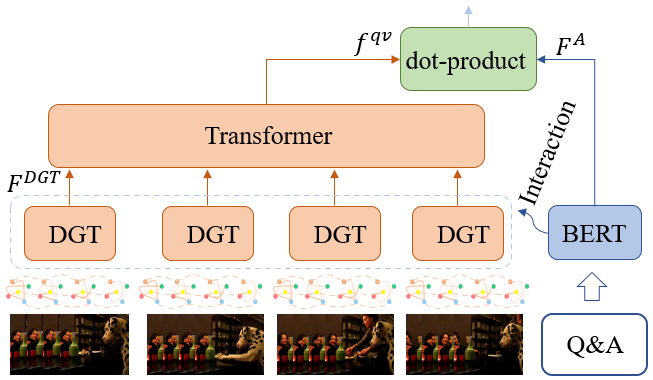

VGT: Video Graph Transformer for Video Question Answering

Introduction

Existing transformer-style models have only demonstrated success in answering questions that involve coarse recognition or scene-level description of video contents. Their performance remains either unknown or weak on questions that emphasize fine-grained visual relation reasoning, especially the causal and temporal relations that characterize video dynamics at the action and event level. In this paper, we propose the Video Graph Transformer (VGT) model to advance VideoQA from coarse recognition and scene-level description to fine-grained visual relation reasoning and cognition-level understanding of dynamic visual contents. Specifically, we make the following contributions:

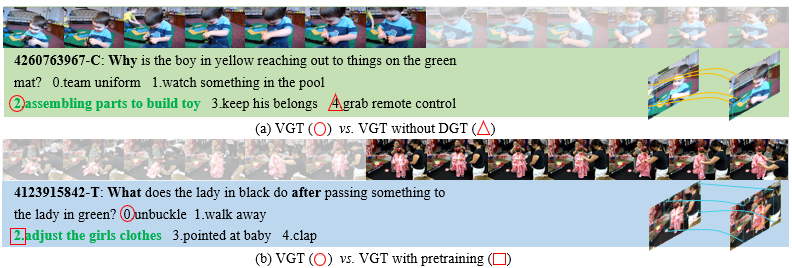

- We design a dynamic graph transformer (DGT) module to encode visual graph dynamics for relation reasoning in space-time.

- We demonstrate that supervised contrastive learning significantly outperforms classification for multi-choice cross-modal video understanding. Also, a fine-grained cross-modal interaction can help improve performance.

- We demonstrate that pretraining the visual graph transformer benefits video-language understanding, moving it in a more data-efficient and fine-grained direction.
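To make the contrast with classification concrete, here is a minimal sketch of how multi-choice answering can be cast as similarity comparison: each candidate is scored by its dot-product with a query representation, and the loss is a softmax cross-entropy over those scores. It assumes that the video side and every candidate are already embedded as vectors of the same size. The function names and the toy vectors are hypothetical and are not taken from this repo's code.

```python
import math


def dot(u, v):
    """Dot-product similarity between two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def contrastive_choice_loss(query_vec, candidate_vecs, correct_idx):
    """Score each candidate against the query; return (loss, predicted index).

    The loss pulls the correct candidate towards the query and pushes the
    other candidates away, instead of classifying into a fixed answer set.
    """
    scores = [dot(query_vec, c) for c in candidate_vecs]
    probs = softmax(scores)
    loss = -math.log(probs[correct_idx])
    predicted = max(range(len(scores)), key=scores.__getitem__)
    return loss, predicted


# Toy example (hypothetical 2-d embeddings): candidate 0 is the correct answer.
query = [1.0, 0.0]
candidates = [[0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]]
loss, predicted = contrastive_choice_loss(query, candidates, correct_idx=0)
```

Because the candidates are compared rather than classified, the same scoring works for any number of answer options per question.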

See our poster at ECCV'22 for a quick overview of the work.

- Released features for TGIF-QA and MSRVTT-QA [temporary access; email us if needed].

Assuming you have installed Anaconda, run the following commands to set up the environment:

>conda create -n videoqa python==3.8.8

>conda activate videoqa

>git clone https://github.com/sail-sg/VGT.git

>pip install -r requirements.txt

Please create a data folder outside this repo, so that your workspace contains two folders: 'workspace/data/' and 'workspace/VGT/'.

Below we use NExT-QA as an example to get you familiar with the code.

Please download the related video feature and QA annotations according to the links provided in the Results and Resources section. Extract QA annotations into workspace/data/datasets/nextqa/, video features into workspace/data/feats/nextqa/ and checkpoint files into workspace/data/save_models/nextqa/.
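The folder layout described above can be created and checked with a short script before extracting anything. This is only a sketch: the three paths come from the text above, while the helper names (`expected_dirs`, `create_layout`, `missing_dirs`) are hypothetical and not part of this repo.

```python
from pathlib import Path


def expected_dirs(workspace, dataset="nextqa"):
    """Return the three data folders named above, relative to `workspace`."""
    data = Path(workspace) / "data"
    return {
        "annotations": data / "datasets" / dataset,    # QA annotations
        "features": data / "feats" / dataset,          # video features
        "checkpoints": data / "save_models" / dataset, # checkpoint files
    }


def create_layout(workspace, dataset="nextqa"):
    """Create any missing folders and return the name -> path mapping."""
    dirs = expected_dirs(workspace, dataset)
    for path in dirs.values():
        path.mkdir(parents=True, exist_ok=True)
    return dirs


def missing_dirs(workspace, dataset="nextqa"):
    """List the names of expected folders that do not exist yet."""
    dirs = expected_dirs(workspace, dataset)
    return [name for name, path in dirs.items() if not path.is_dir()]
```

Calling `create_layout("workspace")` from the directory that contains your workspace prepares the folders, and `missing_dirs("workspace")` returns an empty list once everything is in place.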

./shell/next_test.sh 0

python eval_next.py --folder VGT --mode val

Table 1. VideoQA Accuracy (%).

| Cross-Modal Pretrain | NExT-QA | TGIF-QA (Action) | TGIF-QA (Trans) | TGIF-QA (FrameQA) | TGIF-QA-R* (Action) | TGIF-QA-R* (Trans) | MSRVTT-QA |
|---|---|---|---|---|---|---|---|
| - | 53.7 | 95.0 | 97.6 | 61.6 | 59.9 | 70.5 | 39.7 |
| WebVid0.18M | 55.7 | - | - | - | 60.5 | 71.5 | - |
| - | feats | feats | feats | feats | feats | feats | feats |
| - | train&val+test | videos | videos | videos | videos | videos | videos |
| - | Q&A | Q&A | Q&A | Q&A | Q&A | Q&A | Q&A |
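The accuracy figures in Table 1 are percentages of correctly answered questions. As a rough illustration of that metric, the sketch below compares predictions with ground-truth answers keyed by question ID. The dictionary format and the function name are assumptions made for this example; the output format of `eval_next.py` is not described here and may differ.

```python
def accuracy_percent(predictions, ground_truth):
    """Percentage of questions whose predicted answer matches the ground truth.

    Both arguments map a question ID to an answer (an option index for
    multi-choice QA, or an answer string for open-ended QA). Questions
    without a prediction are counted as wrong.
    """
    if not ground_truth:
        raise ValueError("ground_truth must contain at least one question")
    correct = sum(
        1 for qid, answer in ground_truth.items()
        if predictions.get(qid) == answer
    )
    return 100.0 * correct / len(ground_truth)


# Toy example with hypothetical question IDs: two of three answers match.
toy_predictions = {"q1": 0, "q2": 3, "q3": 1}
toy_ground_truth = {"q1": 0, "q2": 2, "q3": 1}
toy_accuracy = accuracy_percent(toy_predictions, toy_ground_truth)
```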

All training scripts are provided in the folder 'shells'. To start training, pass the GPU IDs as the argument after the script name. (With multiple GPUs, separate the IDs with commas: ./shell/nextqa_train.sh 0,1)

./shell/nextqa_train.sh 0

This trains the model and saves it to the folder 'save_models/nextqa/'.
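If you prefer to launch training from Python, the comma-separated GPU argument can be parsed and exposed through the standard `CUDA_VISIBLE_DEVICES` environment variable. This is a sketch under assumptions: whether the provided shell scripts handle the argument this way is not stated here, and both helper names are hypothetical.

```python
import os


def parse_gpu_ids(arg):
    """Parse a comma-separated GPU argument such as "0" or "0,1" into ints."""
    ids = [int(token) for token in arg.split(",") if token.strip()]
    if not ids:
        raise ValueError("at least one GPU ID is required")
    if len(set(ids)) != len(ids):
        raise ValueError("GPU IDs must be unique")
    return ids


def visible_devices_env(arg, base_env=None):
    """Return a copy of the environment with CUDA_VISIBLE_DEVICES set.

    The returned dict can be passed as `env=` to `subprocess.run` so that
    the training process only sees the requested GPUs.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in parse_gpu_ids(arg))
    return env
```

For example, `visible_devices_env("0,1")` restricts a child process to the first two GPUs while leaving the current process environment unchanged.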

@inproceedings{xiao2022video,
  title={Video Graph Transformer for Video Question Answering},
  author={Xiao, Junbin and Zhou, Pan and Chua, Tat-Seng and Yan, Shuicheng},
  booktitle={European Conference on Computer Vision},
  pages={39--58},
  year={2022},
  organization={Springer}
}

Some code is taken from VQA-T, and our video feature extraction is inspired by HQGA. We thank the authors for their great work and code.

If you use any resources (features, code, or models) from this repo, please kindly cite our paper and acknowledge the source.

This repository is released under the Apache 2.0 license as found in the LICENSE file.