Yuntong Ye, Changfeng Yu, Yi Chang, Lin Zhu, Xi-le Zhao, Luxin Yan, Yonghong Tian

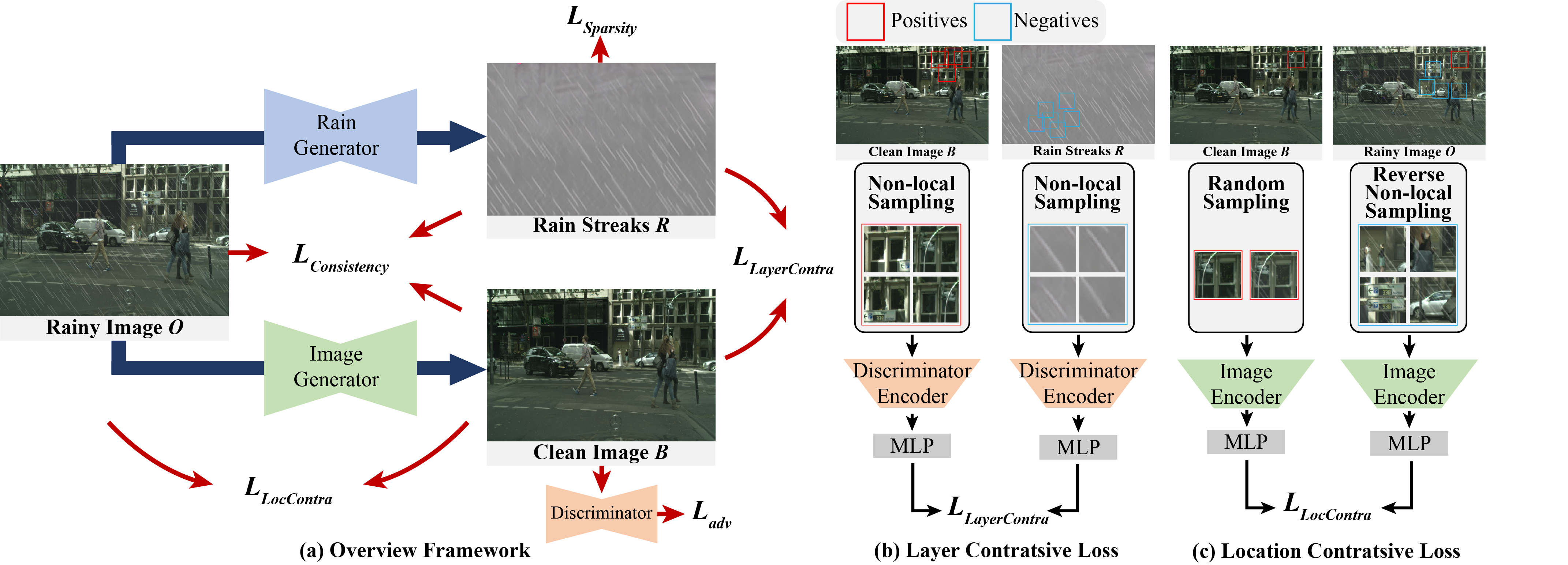

In this work, we propose a novel non-local contrastive learning (NLCL) method for unsupervised image deraining. We exploit not only the intrinsic self-similarity within samples but also the mutual exclusivity between the two layers, so as to better distinguish the rain layer from the clean image. Specifically, non-local self-similar image layer patches are treated as positives and pulled together, while similar rain layer patches are treated as negatives and pushed away. Because these positive and negative samples are close in the original space, they help the model learn a more discriminative representation. Apart from the self-similarity sampling strategy, we analyze how to choose an appropriate feature encoder in NLCL. Extensive experiments on different real rainy datasets demonstrate that the proposed method obtains state-of-the-art performance in deraining.
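The pull/push idea above can be illustrated with a generic InfoNCE-style contrastive loss. This is only a minimal sketch and not the loss implemented in this repository: the function names `cosine` and `info_nce`, the temperature value, and the toy 2-D "patch features" are all assumptions chosen for illustration, and plain Python is used instead of PyTorch so that the snippet runs without dependencies.

```python
import math

def cosine(u, v):
    """Cosine similarity between two feature vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)

def info_nce(query, positives, negatives, temperature=0.07):
    """Generic InfoNCE-style loss (illustrative, hypothetical helper).

    The loss is small when the query is similar to the positives
    (pulled together) and dissimilar to the negatives (pushed away).
    """
    pos_scores = [math.exp(cosine(query, p) / temperature) for p in positives]
    neg_sum = sum(math.exp(cosine(query, n) / temperature) for n in negatives)
    # Average over positives of -log( pos / (pos + sum of negatives) ).
    losses = [-math.log(s / (s + neg_sum)) for s in pos_scores]
    return sum(losses) / len(losses)

# Toy features: an image-layer query, a similar image-layer patch,
# and a rain-layer patch pointing in a different direction.
query = [1.0, 0.0]
image_patches = [[0.9, 0.1]]
rain_patches = [[0.0, 1.0]]

loss_good = info_nce(query, image_patches, rain_patches)  # correct assignment
loss_bad = info_nce(query, rain_patches, image_patches)   # roles swapped
```

With the roles assigned correctly the loss is close to zero, while swapping positives and negatives yields a much larger value, which is the behavior a contrastive objective is meant to reward.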

The project is built with PyTorch 1.6.0 and Python 3.6. Install the package dependencies with:

pip install -r requirements.txt

The pre-trained models of both the Rain and Background Generator Networks are provided in checkpoints/RealRain.

To train NLCL on a real rain dataset, run:

python train.py --dataroot DATASET_ROOT --model NLCL --name NAME --dataset_mode unaligned

An example DATASET_ROOT is provided in datasets/RealRain.
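Before launching training, it can help to sanity-check the dataset folder. The sketch below assumes the CycleGAN convention for unaligned datasets, where DATASET_ROOT contains `trainA` and `trainB` subfolders for the two image domains. That layout is an assumption here, not something this README states, so compare it against datasets/RealRain and adjust `EXPECTED_SUBDIRS` if the repository uses different names. The helper `missing_subdirs` is hypothetical and not part of the project.

```python
from pathlib import Path

# Assumption: CycleGAN-style unaligned layout with one folder per domain.
# Verify against datasets/RealRain and edit these names if they differ.
EXPECTED_SUBDIRS = ("trainA", "trainB")

def missing_subdirs(dataroot, expected=EXPECTED_SUBDIRS):
    """Return the expected subfolder names that are absent under dataroot."""
    root = Path(dataroot)
    return [name for name in expected if not (root / name).is_dir()]

if __name__ == "__main__":
    missing = missing_subdirs("datasets/RealRain")
    if missing:
        print("Missing subfolders:", ", ".join(missing))
    else:
        print("Dataset layout matches the assumed convention.")
```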

To evaluate NLCL, you can run:

python test.py --dataroot DATASET_ROOT --model NLCL --name NAME --dataset_mode single --preprocess None

If you find this project useful in your research, please consider citing:

@InProceedings{Ye_2022_CVPR,
    author = {Ye, Yuntong and Yu, Changfeng and Chang, Yi and Zhu, Lin and Zhao, Xi-Le and Yan, Luxin and Tian, Yonghong},
    title = {Unsupervised Deraining: Where Contrastive Learning Meets Self-Similarity},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month = {June},
    year = {2022},
    pages = {5821-5830}
}

This code is inspired by CycleGAN.

Please contact us if you have any questions or suggestions (Yun Guo [email protected], Yuntong Ye [email protected], Yi Chang [email protected]).