Audio detector of wildfire in open nature.

-

Labeled video dataset read from Audioset (https://research.google.com/audioset/dataset/index.html)

-

Nature-related sounds were extracted from the entire ontology. From those nature videos, sound was extracted in wav format. A new binary classification problem was generated by assigning class 1 to sounds where fire is present, whereas 0 is assigned to sounds without that sound.

-

From sounds, we create spectogram images which are saved and fed into a deep learning model.

-

A Convolutional Neural Network (CNN) was elected as the ML component for modeling, and ResNet34 as the pre-trained weight set for transfer learning.

-

Once model was trained, it was put into "production" by uploading the model to Google Cloud, and a tiny but functional web app to Render (https://render.com/)

-

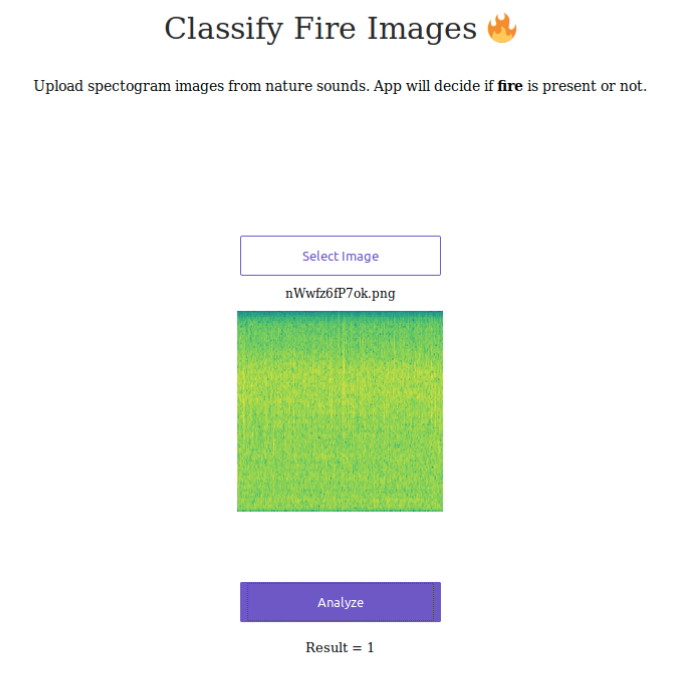

The app offers a frontpage that looks like the image below. This basically offers a starting point where users can upload an spectogram (224x224, PNG format) and predict if that sounds contains fire (1) or not (0).

- Web app is contained in /app

- Notebooks for extraction, data munging and transformation, and modeling are contained in /Notebooks