Started initially as a C# port of ConvNetJS. You can use ConvNetSharp to train and evaluate convolutional neural networks (CNNs).

Thank you very much to the original author of ConvNetJS (Andrej Karpathy) and to all the contributors!

ConvNetSharp relies on the ManagedCuda library to access NVIDIA's CUDA.

| Core.Layers | Flow.Layers | Computation graph |
|---|---|---|
| No computation graph | Layers that create a computation graph behind the scenes | 'Pure flow' |
| Network organised by stacking layers | Network organised by stacking layers | 'Ops' connected to each other. Can implement more complex networks |
| E.g. MnistDemo | E.g. MnistFlowGPUDemo or Flow version of Classify2DDemo | E.g. ExampleCpuSingle |
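To make the difference between "stacking layers" and "ops connected to each other" concrete, here is a minimal, self-contained sketch in plain C#. It does not use ConvNetSharp: the `Node`, `InputNode`, `ConstNode`, `AddNode`, `MulNode` and `ToyGraphDemo` types are hypothetical stand-ins, not the `ConvNetSharp.Flow` API. Each node computes its value from the nodes it is wired to, so one input can feed two branches, which a single linear stack of layers cannot express.

```csharp
using System.Collections.Generic;

// Hypothetical toy graph: NOT the ConvNetSharp.Flow API.
// A node evaluates itself by pulling values from the nodes it is connected to.
public abstract class Node
{
    public abstract double Evaluate(IReadOnlyDictionary<string, double> feed);
}

// Named input whose value is supplied at evaluation time.
public sealed class InputNode : Node
{
    private readonly string _name;
    public InputNode(string name) => _name = name;
    public override double Evaluate(IReadOnlyDictionary<string, double> feed) => feed[_name];
}

// Fixed value (stands in for a trained parameter).
public sealed class ConstNode : Node
{
    private readonly double _value;
    public ConstNode(double value) => _value = value;
    public override double Evaluate(IReadOnlyDictionary<string, double> feed) => _value;
}

// Sum of two upstream nodes.
public sealed class AddNode : Node
{
    private readonly Node _left, _right;
    public AddNode(Node left, Node right) { _left = left; _right = right; }
    public override double Evaluate(IReadOnlyDictionary<string, double> feed) =>
        _left.Evaluate(feed) + _right.Evaluate(feed);
}

// Product of two upstream nodes.
public sealed class MulNode : Node
{
    private readonly Node _left, _right;
    public MulNode(Node left, Node right) { _left = left; _right = right; }
    public override double Evaluate(IReadOnlyDictionary<string, double> feed) =>
        _left.Evaluate(feed) * _right.Evaluate(feed);
}

public static class ToyGraphDemo
{
    // Builds y = (x * w + b) + x: the input x is used by two branches
    // (a "skip connection"), so the ops form a graph rather than a stack.
    public static Node BuildSkipGraph()
    {
        var x = new InputNode("x");
        var w = new ConstNode(3.0);
        var b = new ConstNode(1.0);
        return new AddNode(new AddNode(new MulNode(x, w), b), x);
    }
}
```

For example, `ToyGraphDemo.BuildSkipGraph().Evaluate(new Dictionary<string, double> { ["x"] = 2.0 })` gives (2 * 3 + 1) + 2 = 9. Shared nodes are simply re-evaluated on every use here; a real framework would cache intermediate values and also compute gradients.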

Here's a minimal example of defining a 2-layer neural network and training it on a single data point:

```csharp
using System;
using ConvNetSharp.Core;
using ConvNetSharp.Core.Layers.Double;
using ConvNetSharp.Core.Training.Double;
using ConvNetSharp.Volume;
using ConvNetSharp.Volume.Double;

namespace MinimalExample
{
    internal class Program
    {
        private static void Main()
        {
            // specifies a 2-layer neural network with one hidden layer of 20 neurons
            var net = new Net<double>();

            // input layer declares size of input. here: 2-D data
            // volumes have a width, height and depth (plus a batch dimension), but if you're not dealing with images
            // then the first two dimensions (width, height) will always be kept at size 1
            net.AddLayer(new InputLayer(1, 1, 2));

            // declare 20 neurons
            net.AddLayer(new FullyConnLayer(20));

            // declare a ReLU (rectified linear unit non-linearity)
            net.AddLayer(new ReluLayer());

            // declare a fully connected layer that will be used by the softmax layer
            net.AddLayer(new FullyConnLayer(10));

            // declare the linear classifier on top of the previous hidden layer
            net.AddLayer(new SoftmaxLayer(10));

            // forward a random data point through the network
            var x = BuilderInstance.Volume.From(new[] { 0.3, -0.5 }, new Shape(2));

            var prob = net.Forward(x);

            // prob is a Volume; Get(i) returns its i-th value, here the probability of class i
            Console.WriteLine("probability that x is class 0: " + prob.Get(0)); // prints e.g. 0.50101

            var trainer = new SgdTrainer(net) { LearningRate = 0.01, L2Decay = 0.001 };

            // train the network, specifying that x is class zero (one-hot target over the 10 classes)
            trainer.Train(x, BuilderInstance.Volume.From(new[] { 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0 }, new Shape(1, 1, 10, 1)));

            var prob2 = net.Forward(x);
            Console.WriteLine("probability that x is class 0: " + prob2.Get(0));
            // now prints 0.50374, slightly higher than previous 0.50101: the network's
            // weights have been adjusted by the trainer to give a higher probability to
            // the class we trained the network with (zero)
        }
    }
}
```

Fluent API (see FluentMnistDemo):

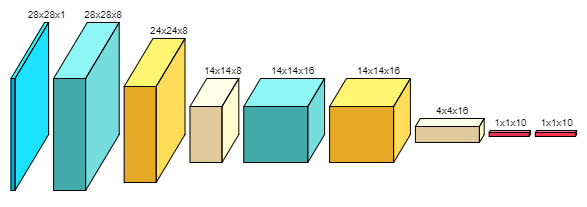

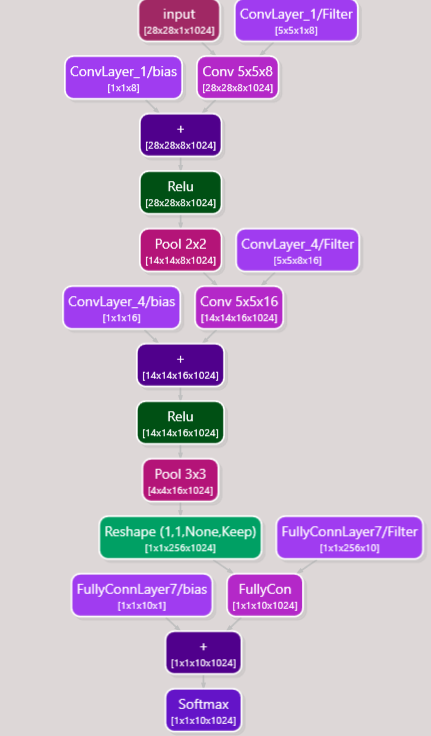

```csharp
var net = FluentNet<double>.Create(24, 24, 1)
                   .Conv(5, 5, 8).Stride(1).Pad(2)
                   .Relu()
                   .Pool(2, 2).Stride(2)
                   .Conv(5, 5, 16).Stride(1).Pad(2)
                   .Relu()
                   .Pool(3, 3).Stride(3)
                   .FullyConn(10)
                   .Softmax(10)
                   .Build();
```

To switch to GPU mode:

- add `GPU` to the namespace: `using ConvNetSharp.Volume.GPU.Single;` or `using ConvNetSharp.Volume.GPU.Double;`
- add `BuilderInstance<float>.Volume = new ConvNetSharp.Volume.GPU.Single.VolumeBuilder();` or `BuilderInstance<double>.Volume = new ConvNetSharp.Volume.GPU.Double.VolumeBuilder();` at the beginning of your code

You must have CUDA version 8 and cuDNN version 6.0 (April 27, 2017) installed. The cuDNN bin path must be included in the PATH environment variable.
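The PATH requirement can be checked at startup, before switching to the GPU volume builder. The sketch below is plain C# and independent of ConvNetSharp; the `CudnnPathCheck` class is hypothetical, and the file name `cudnn64_6.dll` is an assumption about how cuDNN 6.0 is packaged on Windows (adjust it for your version and platform).

```csharp
using System;
using System.IO;
using System.Linq;

// Hypothetical helper, not part of ConvNetSharp.
public static class CudnnPathCheck
{
    // Assumption: the cuDNN 6.0 runtime on Windows is named "cudnn64_6.dll".
    public const string DefaultCudnnDll = "cudnn64_6.dll";

    // Returns the first PATH directory containing fileName, or null if none does.
    // 'exists' is injectable so the lookup can be tested without touching the disk.
    public static string? FindOnPath(string? pathVariable, string fileName, Func<string, bool> exists)
    {
        if (string.IsNullOrEmpty(pathVariable))
        {
            return null;
        }

        return pathVariable
            .Split(Path.PathSeparator)              // ';' on Windows, ':' elsewhere
            .Select(dir => dir.Trim().Trim('"'))    // Windows PATH entries may be quoted
            .Where(dir => dir.Length > 0)
            .FirstOrDefault(dir => exists(Path.Combine(dir, fileName)));
    }

    // Checks the real PATH of the current process against the real file system.
    public static string? FindCudnn() =>
        FindOnPath(Environment.GetEnvironmentVariable("PATH"), DefaultCudnnDll, File.Exists);
}
```

A check such as `if (CudnnPathCheck.FindCudnn() == null) Console.WriteLine("cuDNN not found on PATH");` lets you fail early with a clear message and keep the default CPU builder.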

Mnist GPU demo here

Serialization in Core:

```csharp
using ConvNetSharp.Core.Serialization;

[...]

// Serialize to JSON
var json = net.ToJson();

// Deserialize from JSON
var deserialized = SerializationExtensions.FromJson<double>(json);
```

Serialization in Flow:

```csharp
using ConvNetSharp.Flow.Serialization;

[...]

// Serialize to two files: MyNetwork.graphml (graph structure) / MyNetwork.json (volume data)
net.Save("MyNetwork");

// Deserialize from files
var deserialized = SerializationExtensions.Load<double>("MyNetwork", false)[0]; // first element is the network (the second element is the cost, if it was saved along with it)
```
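Because the Flow `Save` call writes two files and `Load` reads them back, it can help to check that both are present before loading. A minimal sketch, assuming the `<name>.graphml` / `<name>.json` naming described in the comment above; `SavedFlowNetwork` is a hypothetical helper, not part of ConvNetSharp.

```csharp
using System.IO;
using System.Linq;

// Hypothetical helper, not part of ConvNetSharp. Assumes Flow's Save(name)
// produced "<name>.graphml" (graph structure) and "<name>.json" (volume data).
public static class SavedFlowNetwork
{
    // File names expected for a network saved under 'name'.
    public static string[] ExpectedFiles(string name) =>
        new[] { name + ".graphml", name + ".json" };

    // Returns the expected files that do not exist (empty when the save is complete).
    public static string[] MissingFiles(string name) =>
        ExpectedFiles(name).Where(file => !File.Exists(file)).ToArray();
}
```

For example, call `SavedFlowNetwork.MissingFiles("MyNetwork")` before `SerializationExtensions.Load<double>("MyNetwork", false)` and report the returned names if the array is not empty.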