Siddharth Mishra-Sharma, Yiding Song, and Jesse Thaler

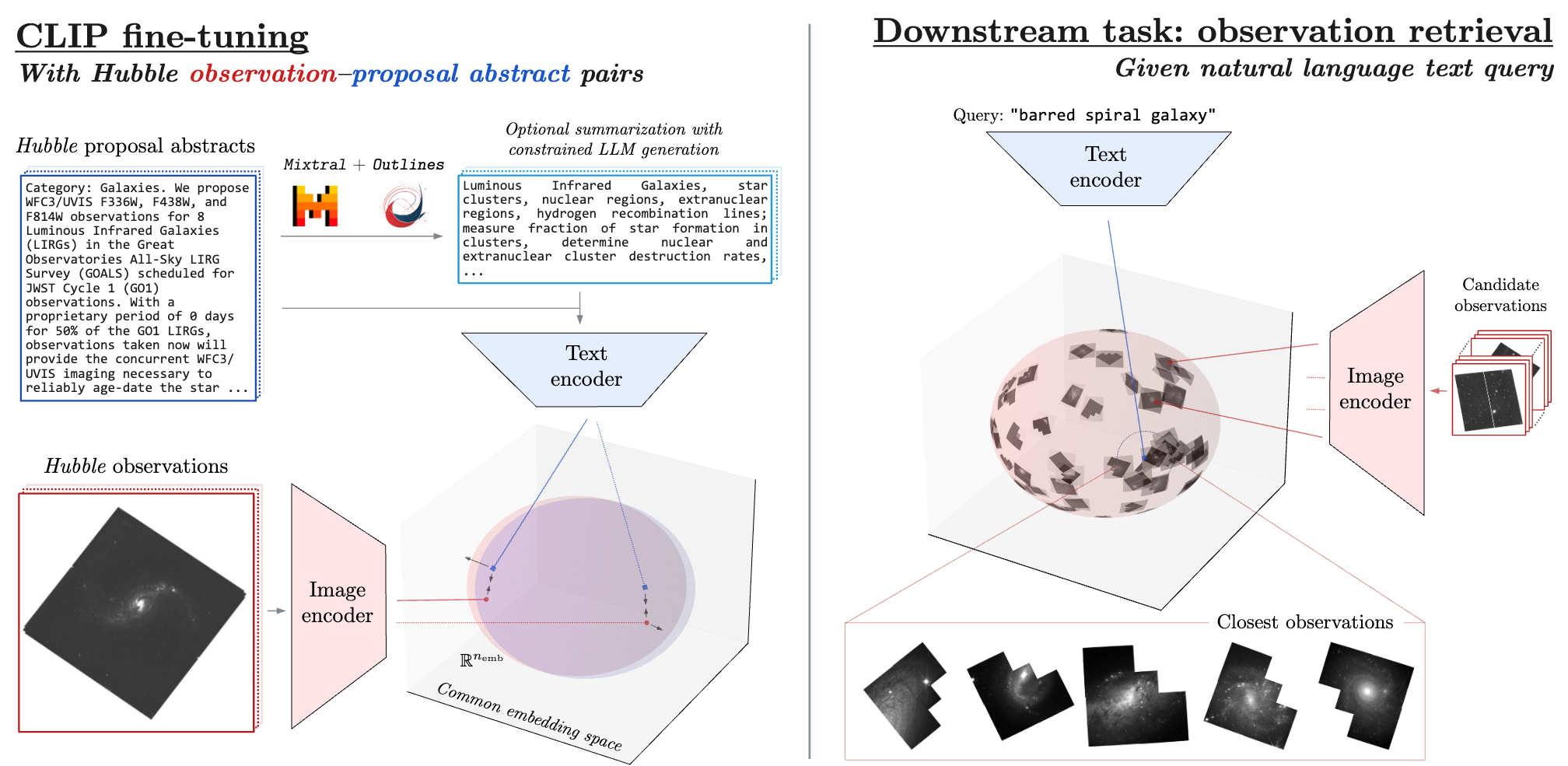

We present PAPERCLIP (Proposal Abstracts Provide an Effective Representation for Contrastive Language-Image Pre-training), a method which associates astronomical observations imaged by telescopes with natural language using a neural network model. The model is fine-tuned from a pre-trained Contrastive Language-Image Pre-training (CLIP) model using successful observing proposal abstracts and corresponding downstream observations, with the abstracts optionally summarized via guided generation using large language models (LLMs). Using observations from the Hubble Space Telescope (HST) as an example, we show that the fine-tuned model embodies a meaningful joint representation between observations and natural language through tests targeting image retrieval (i.e., finding the most relevant observations using natural language queries) and description retrieval (i.e., querying for astrophysical object classes and use cases most relevant to a given observation). Our study demonstrates the potential for using generalist foundation models rather than task-specific models for interacting with astronomical data by leveraging text as an interface.
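The two ideas in the abstract, contrastive fine-tuning on matched (observation, abstract) pairs and retrieval by similarity in the shared embedding space, can be illustrated with a minimal Jax sketch. It assumes the standard symmetric CLIP objective and is not taken from train.py. The function names, the fixed temperature of 0.07, and the random arrays standing in for encoder outputs are all hypothetical placeholders.

```python
import jax
import jax.numpy as jnp


def l2_normalize(x, axis=-1, eps=1e-8):
    """Scale vectors to unit length so dot products are cosine similarities."""
    return x / (jnp.linalg.norm(x, axis=axis, keepdims=True) + eps)


def clip_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric cross-entropy over an image-text similarity matrix.

    Row i of image_emb and row i of text_emb are assumed to be a matched
    (observation, abstract) pair; other rows in the batch act as negatives.
    The temperature is fixed here for simplicity (an assumption).
    """
    image_emb = l2_normalize(image_emb)
    text_emb = l2_normalize(text_emb)
    logits = image_emb @ text_emb.T / temperature  # shape (batch, batch)
    # Matched pairs sit on the diagonal of the similarity matrix.
    loss_i2t = -jnp.mean(jnp.diag(jax.nn.log_softmax(logits, axis=1)))
    loss_t2i = -jnp.mean(jnp.diag(jax.nn.log_softmax(logits, axis=0)))
    return 0.5 * (loss_i2t + loss_t2i)


def retrieve_top_k(query_emb, candidate_embs, k=3):
    """Return indices of the k candidates most similar to the query."""
    scores = l2_normalize(candidate_embs) @ l2_normalize(query_emb)
    return jnp.argsort(-scores)[:k]


# Toy stand-ins for encoder outputs (hypothetical; no real model is loaded).
key_img, key_noise = jax.random.split(jax.random.PRNGKey(0))
image_emb = jax.random.normal(key_img, (8, 16))
text_emb = image_emb + 0.01 * jax.random.normal(key_noise, (8, 16))

# Correctly paired embeddings should give a lower loss than mismatched ones.
aligned_loss = clip_contrastive_loss(image_emb, text_emb)
shuffled_loss = clip_contrastive_loss(image_emb, jnp.roll(text_emb, 1, axis=0))

# Image retrieval: rank all image embeddings against one text query.
top = retrieve_top_k(text_emb[2], image_emb, k=3)
```

Description retrieval works the same way with the roles swapped: an image embedding is the query and a set of candidate text embeddings is ranked against it.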

Link to paper draft. The PDF is compiled automatically from the main branch into the main-pdf branch on push.

Because PyTorch and Jax can be difficult to install in the same environment, two separate Python environments are defined: environment_outlines.yml, for downloading data and running guided LLM summarization with Outlines, and environment.yaml, for training and evaluating the CLIP model. To create an environment, run, e.g.,

mamba env create --file environment.yaml

The code primarily uses Jax. The main components are:

- The data download script is download_data.py, the summarization script is summarize.py, and the training script is train.py.

- notebooks/01_create_dataset.ipynb is used to create the tfrecords data used for training.

- notebooks/03_eval.ipynb creates the qualitative and quantitative evaluation plots.

- notebooks/09_dot_product_eval.ipynb generates additional quantitative evaluation.

Coming soon.

If you use this code, please cite our paper:

@misc{mishrasharma2024paperclip,
  title={PAPERCLIP: Associating Astronomical Observations and Natural Language with Multi-Modal Models},
  author={Siddharth Mishra-Sharma and Yiding Song and Jesse Thaler},
  year={2024},
  eprint={2403.08851},
  archivePrefix={arXiv},
  primaryClass={astro-ph.IM}
}