🙈 Vision utilities for web interaction agents 🙈

🔗 Main site • 🐦 Twitter • 📢 Discord

If you've tried using an LLM to automate web interactions, you've probably run into questions like:

- How should you feed the webpage to an LLM? (e.g. HTML, Accessibility Tree, Screenshot)

- How do you map LLM responses back to web elements?

- How can you inform a text-only LLM about the page's visual structure?

At Reworkd, we iterated on all these problems across tens of thousands of real web tasks to build a powerful perception system for web agents... Tarsier! In the video below, we use Tarsier to provide webpage perception for a minimalistic GPT-4 LangChain web agent.

tarsier.mp4

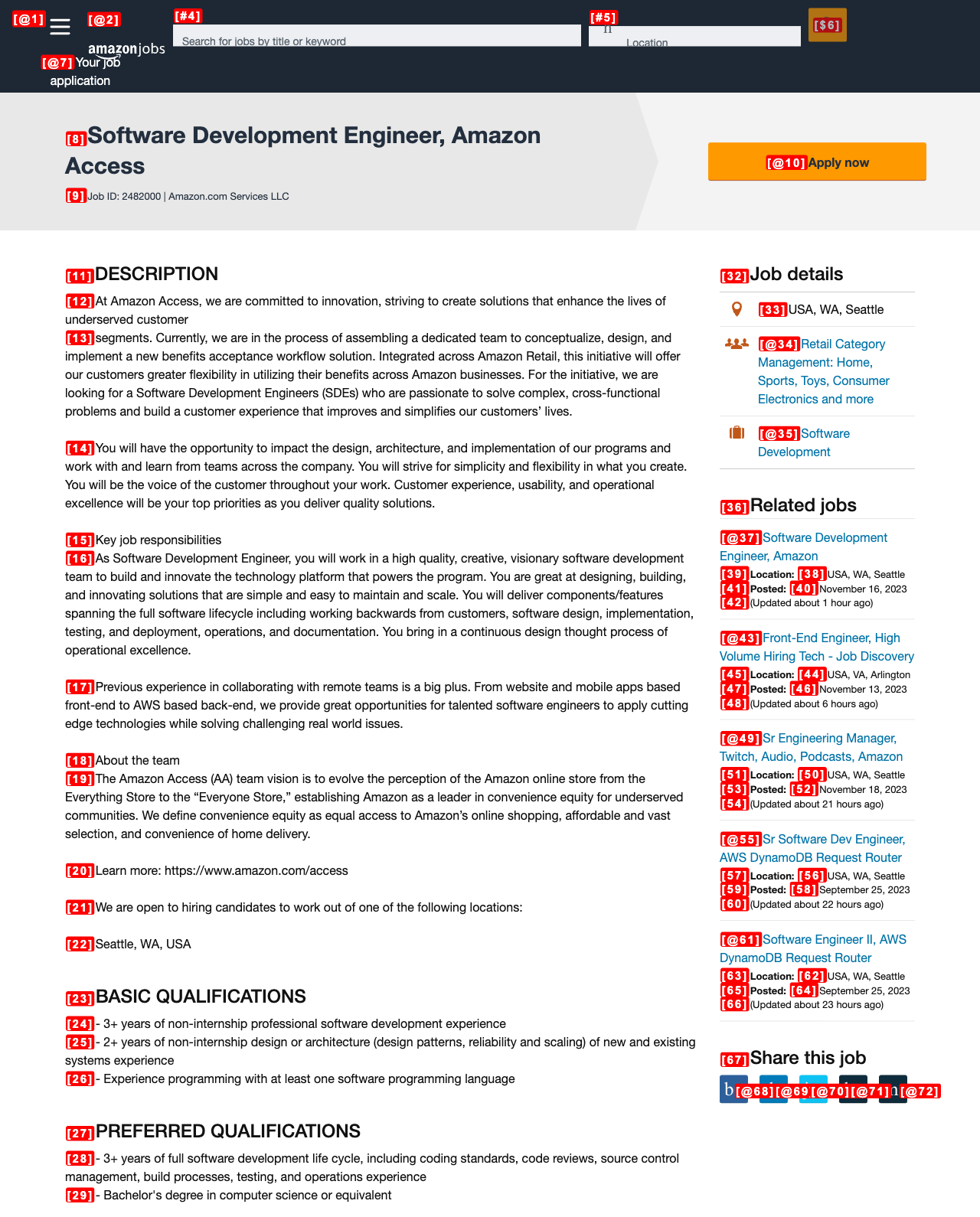

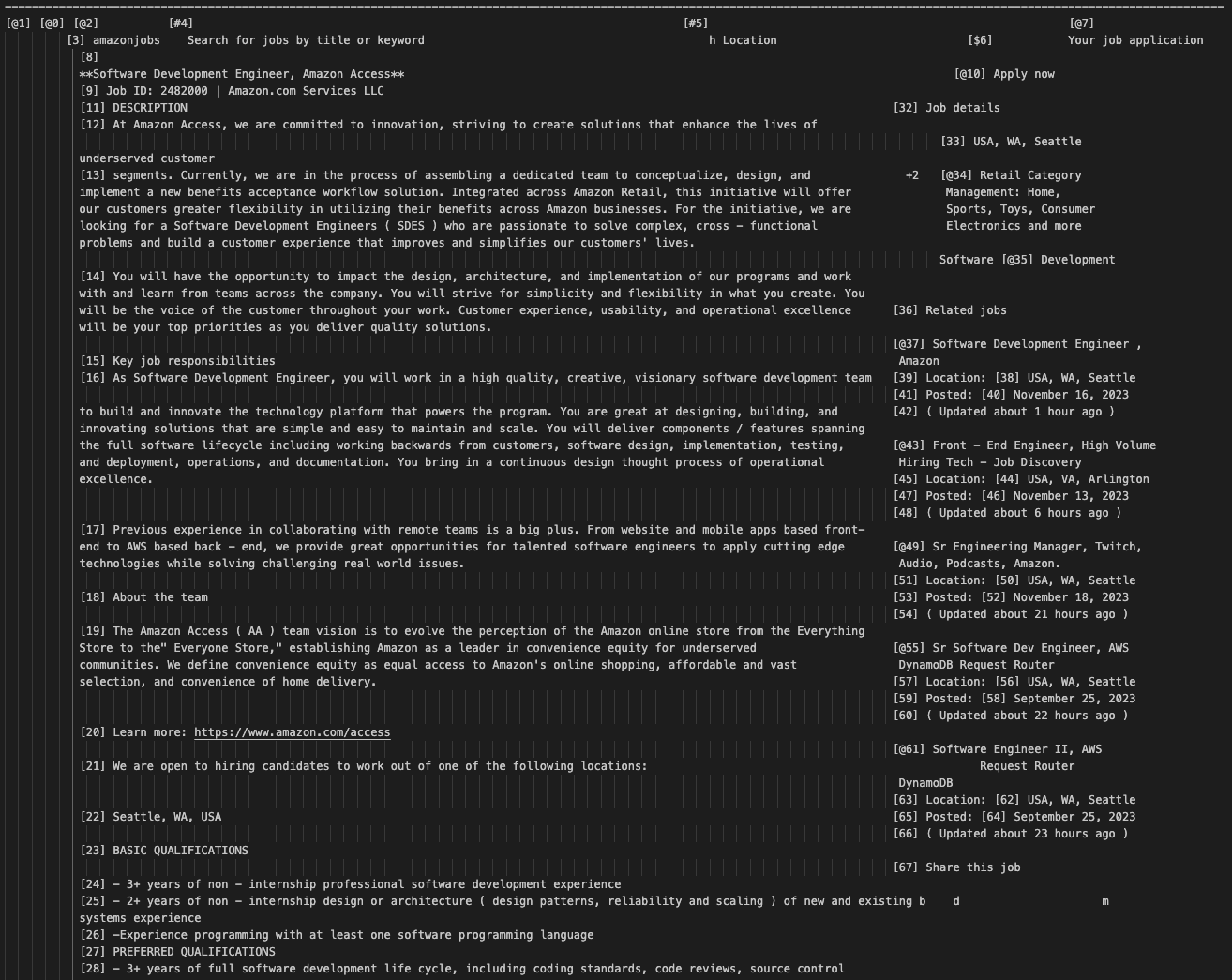

Tarsier visually tags interactable elements on a page with a bracketed ID, e.g. [23].

This gives the LLM a mapping between elements and IDs that it can act on (e.g. CLICK [23]).

We define interactable elements as buttons, links, or input fields that are visible on the page. Tarsier can also tag all textual elements if you pass `tag_text_elements=True`.
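To make the ID-to-element mapping concrete, here is a minimal sketch of how an agent might resolve an LLM response such as CLICK [23] back to an element. It does not call Tarsier itself: the `TAG_TO_XPATH` dictionary, the `parse_action` helper, and the `TYPE` verb are all hypothetical names chosen for illustration, and the XPath values are made up.

```python
import re

# Hypothetical mapping from tag ID to an element locator (XPath strings here).
# A tagging tool would normally produce this; these values are made up.
TAG_TO_XPATH = {
    23: "//button[@id='submit']",
    24: "//input[@name='q']",
}

# Assumed action grammar: a verb, a bracketed ID, and optional trailing text.
ACTION_RE = re.compile(r"^(CLICK|TYPE)\s+\[(\d+)\](?:\s+(.*))?$")


def parse_action(response, tag_to_xpath):
    """Return (verb, xpath, argument) for a response such as 'CLICK [23]'."""
    match = ACTION_RE.match(response.strip())
    if match is None:
        raise ValueError(f"unrecognized action: {response!r}")
    verb = match.group(1)
    tag_id = int(match.group(2))
    argument = match.group(3)  # None when the action has no trailing text
    if tag_id not in tag_to_xpath:
        raise KeyError(f"no element tagged [{tag_id}]")
    return verb, tag_to_xpath[tag_id], argument


if __name__ == "__main__":
    print(parse_action("CLICK [23]", TAG_TO_XPATH))
    print(parse_action("TYPE [24] hello world", TAG_TO_XPATH))
```

Rejecting unknown IDs up front is a deliberate choice in this sketch: it lets the agent catch a hallucinated tag before it tries to touch the page.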

Furthermore, we've developed an OCR algorithm that converts a page screenshot into a whitespace-structured string (almost like ASCII art) that even an LLM without vision can understand. This is critical because current vision-language models still lack the fine-grained representations needed for web interaction tasks. On our internal benchmarks, unimodal GPT-4 + Tarsier-Text beats GPT-4V + Tarsier-Screenshot by 10-20%!
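The idea of a whitespace-structured string can be illustrated with a small sketch. This is not Tarsier's actual algorithm, just a simplified stand-in: it assumes OCR has already produced words with pixel positions, and it places each word on a character grid so that horizontal and vertical gaps survive as spaces and blank lines. The `Word` class, `render_whitespace_text`, and the `char_width` and `line_height` defaults are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    x: float  # left edge in pixels (assumed OCR output)
    y: float  # vertical midpoint in pixels (assumed OCR output)


def render_whitespace_text(words, char_width=10.0, line_height=20.0):
    """Place each word on a character grid that approximates its pixel position."""
    rows = {}
    for word in words:
        row = int(word.y // line_height)
        rows.setdefault(row, []).append(word)
    if not rows:
        return ""

    lines = []
    for row in range(max(rows) + 1):
        line = ""
        for word in sorted(rows.get(row, []), key=lambda w: w.x):
            col = int(word.x // char_width)
            # Keep at least one space between neighbouring words on a line.
            if line:
                col = max(col, len(line) + 1)
            line = line.ljust(col) + word.text
        lines.append(line)  # rows with no words become blank lines
    return "\n".join(lines)


if __name__ == "__main__":
    page = [
        Word("[1]", 0, 5), Word("Search", 40, 5),
        Word("[2]", 200, 5), Word("Login", 240, 5),
        Word("Welcome", 0, 45),
    ]
    print(render_whitespace_text(page))
```

Because the tags such as [1] and [2] are part of the rendered text, a text-only model sees both the rough layout and the IDs it can act on.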

| Tagged Screenshot | Tagged Text Representation |
|---|---|
| | |