
Adding NVIDIA SE-ResNeXt #257


Hi @nv-kkudrynski! Thank you for your pull request and welcome to our community.

Action Required

In order to merge any pull request (code, docs, etc.), we require contributors to sign our Contributor License Agreement, and we don't seem to have one on file for you.

Process

In order for us to review and merge your suggested changes, please sign at https://code.facebook.com/cla. If you are contributing on behalf of someone else (e.g. your employer), the individual CLA may not be sufficient and your employer may need to sign the corporate CLA. Once the CLA is signed, our tooling will perform checks and validations. Afterwards, the pull request will be tagged with

If you have received this in error or have any questions, please contact us at [email protected]. Thanks!


✔️ Deploy Preview for pytorch-hub-preview ready! 🔨 Explore the source changes: e333d0d 🔍 Inspect the deploy log: https://app.netlify.com/sites/pytorch-hub-preview/deploys/619f67d0b965960007ff7982 😎 Browse the preview: https://deploy-preview-257--pytorch-hub-preview.netlify.app

Hi, I'm chasing them to see what's happening, sorry about that! I'll keep you posted.

    ).to(device)
    ```


Run inference. Use `pick_n_best(predictions=output, n=topN)` helepr function to pick N most probably hypothesis according to the model.


helepr -> helper?


also, N most probable?


Hypothesis -> Hypotheses


fixed
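The `pick_n_best` helper referenced in the diff ranks the model's class scores; its actual implementation lives in NVIDIA's repository and is not reproduced here. As a rough, dependency-free sketch of the same idea, assuming the model emits one raw score (logit) per class, the hypothetical `top_n_hypotheses` function below converts the scores to probabilities with a softmax and returns the N most probable labels. The function names and sample labels are illustrative only.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw class scores into probabilities that sum to 1."""
    # Subtract the max score for numerical stability before exponentiating.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_n_hypotheses(
    logits: Sequence[float], labels: Sequence[str], n: int
) -> List[Tuple[str, float]]:
    """Hypothetical helper: return the n most probable (label, probability) pairs."""
    if len(logits) != len(labels):
        raise ValueError("expected one label per score")
    probs = softmax(logits)
    # Sort classes by probability, highest first, and keep the first n.
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:n]


if __name__ == "__main__":
    scores = [2.0, 0.5, 1.0]
    names = ["tabby cat", "golden retriever", "red fox"]
    for label, prob in top_n_hypotheses(scores, names, n=2):
        print(f"{label}: {prob:.1%}")
```

Sorting the full list is cheap for ImageNet's 1000 classes; for much larger label sets, `heapq.nlargest` would avoid a full sort.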


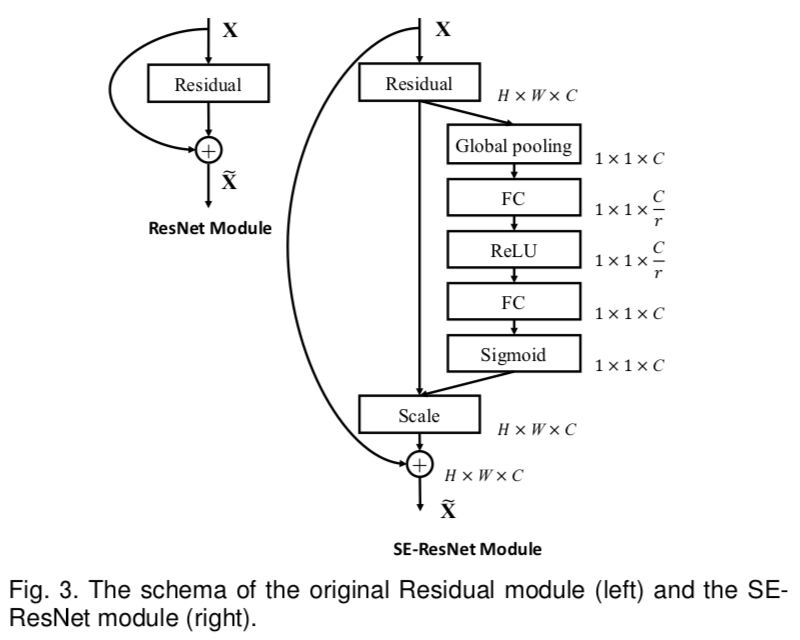

The ***SE-ResNeXt101-32x4d*** is a [ResNeXt101-32x4d](https://arxiv.org/pdf/1611.05431.pdf)

model with added Squeeze-and-Excitation module introduced

in [Squeeze-and-Excitation Networks](https://arxiv.org/pdf/1709.01507.pdf) paper.


in the XX paper IINW


fixed
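The "32x4d" suffix follows the ResNeXt paper's naming: cardinality 32 (the number of groups in the grouped 3×3 convolution) and a bottleneck width of 4 channels per group. A quick way to see why grouped convolutions are cheap is to count the weights of a 3×3 convolution with and without groups. The sketch below does that for the 128-channel (32 × 4) layer, ignoring biases; `conv_weight_count` is an illustrative helper, not part of any library.

```python
def conv_weight_count(c_in: int, c_out: int, kernel: int, groups: int = 1) -> int:
    """Number of weights in a 2D convolution (bias excluded)."""
    if c_in % groups or c_out % groups:
        raise ValueError("channels must be divisible by groups")
    # Each output channel only sees c_in / groups input channels.
    return c_out * (c_in // groups) * kernel * kernel


cardinality, width = 32, 4
channels = cardinality * width  # 128 channels in the grouped 3x3 conv

dense = conv_weight_count(channels, channels, kernel=3)
grouped = conv_weight_count(channels, channels, kernel=3, groups=cardinality)
print(dense, grouped, dense // grouped)  # 147456 4608 32
```

The reduction factor equals the cardinality, because each output channel connects to only 4 of the 128 input channels.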


#### Model architecture


image not displaying, why not relative import?


I found out this is where the image lands on your side after the merge. From there it is visible both in the Markdown on the hub and in Google Colab (where a relative path does not work).


OK, got it. It does not appear in Netlify though... I guess we can consider it a Netlify issue then!

The image shows the architecture of the SE block and where it is placed in the ResNet bottleneck block.
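A Squeeze-and-Excitation block, as described in the Squeeze-and-Excitation Networks paper, squeezes each channel to a single number by global average pooling, passes that vector through a small bottleneck (fully connected layer, ReLU, fully connected layer, sigmoid), and rescales each channel by the resulting gate. In an SE-ResNet-style bottleneck, the rescaled branch output is added to the shortcut before the final ReLU. The dependency-free sketch below illustrates that data flow on nested Python lists; the function names, the tiny weight matrices, and the omission of biases are assumptions for illustration, not NVIDIA's implementation.

```python
import math
from typing import List

Matrix = List[List[float]]
FeatureMap = List[Matrix]  # shape: channels x height x width


def matvec(weights: Matrix, vec: List[float]) -> List[float]:
    """Multiply a (rows x cols) weight matrix by a vector of length cols."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def se_block(x: FeatureMap, w_reduce: Matrix, w_expand: Matrix) -> FeatureMap:
    """Squeeze-and-Excitation on a C x H x W feature map (biases omitted)."""
    # Squeeze: global average pooling gives one descriptor per channel.
    squeezed = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in x]
    # Excitation: FC (C -> C/r), ReLU, FC (C/r -> C), sigmoid.
    hidden = [max(0.0, h) for h in matvec(w_reduce, squeezed)]
    gates = [1.0 / (1.0 + math.exp(-g)) for g in matvec(w_expand, hidden)]
    # Scale: multiply every value in channel c by its gate.
    return [[[v * gate for v in row] for row in ch] for ch, gate in zip(x, gates)]


def se_residual(branch_out: FeatureMap, shortcut: FeatureMap,
                w_reduce: Matrix, w_expand: Matrix) -> FeatureMap:
    """SE applied to the bottleneck branch, then added to the shortcut and ReLU'd."""
    scaled = se_block(branch_out, w_reduce, w_expand)
    return [[[max(0.0, a + b) for a, b in zip(ra, rb)] for ra, rb in zip(ca, cb)]
            for ca, cb in zip(scaled, shortcut)]


if __name__ == "__main__":
    # Two channels of 2x2 activations, reduction ratio r = 2 (C/r = 1).
    feat = [[[1.0, 1.0], [1.0, 1.0]], [[2.0, 2.0], [2.0, 2.0]]]
    w1 = [[0.5, 0.5]]      # reduce: 2 -> 1
    w2 = [[1.0], [-1.0]]   # expand: 1 -> 2
    print(se_residual(feat, feat, w1, w2))
```

Real implementations operate on tensors and include biases; the reduction ratio r sets the width of the bottleneck between the two fully connected layers, trading gate expressiveness for parameters.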


Note that the SE-ResNeXt101-32x4d model can be deployed for inference on the [NVIDIA Triton Inference Server](https://github.com/NVIDIA/trtis-inference-server) using TorchScript, ONNX Runtime or TensorRT as an execution backend. For details check [NGC](https://catalog.ngc.nvidia.com/orgs/nvidia/resources/se_resnext_for_triton_from_pytorch)


full stop missing.


fixed

### Details

For detailed information on model input and output, training recipes, inference and performance visit:

[github](https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/Classification/ConvNets/se-resnext101-32x4d)

and/or [NGC](https://catalog.ngc.nvidia.com/orgs/nvidia/resources/se_resnext_for_pytorch)


full stop missing


fixed

@vmoens @soumith Adding another model from NVIDIA.

In the meantime, can we fix my CLA? I completed all the formalities on my side and sent the email to [email protected], followed by confirmation from my officials. Thanks!