Giskard creates interfaces for humans to inspect & test AI models. It is open-source and self-hosted.

Giskard lets you instantly see your model's prediction for a given set of feature values. You can set the values directly in Giskard and see the prediction change.

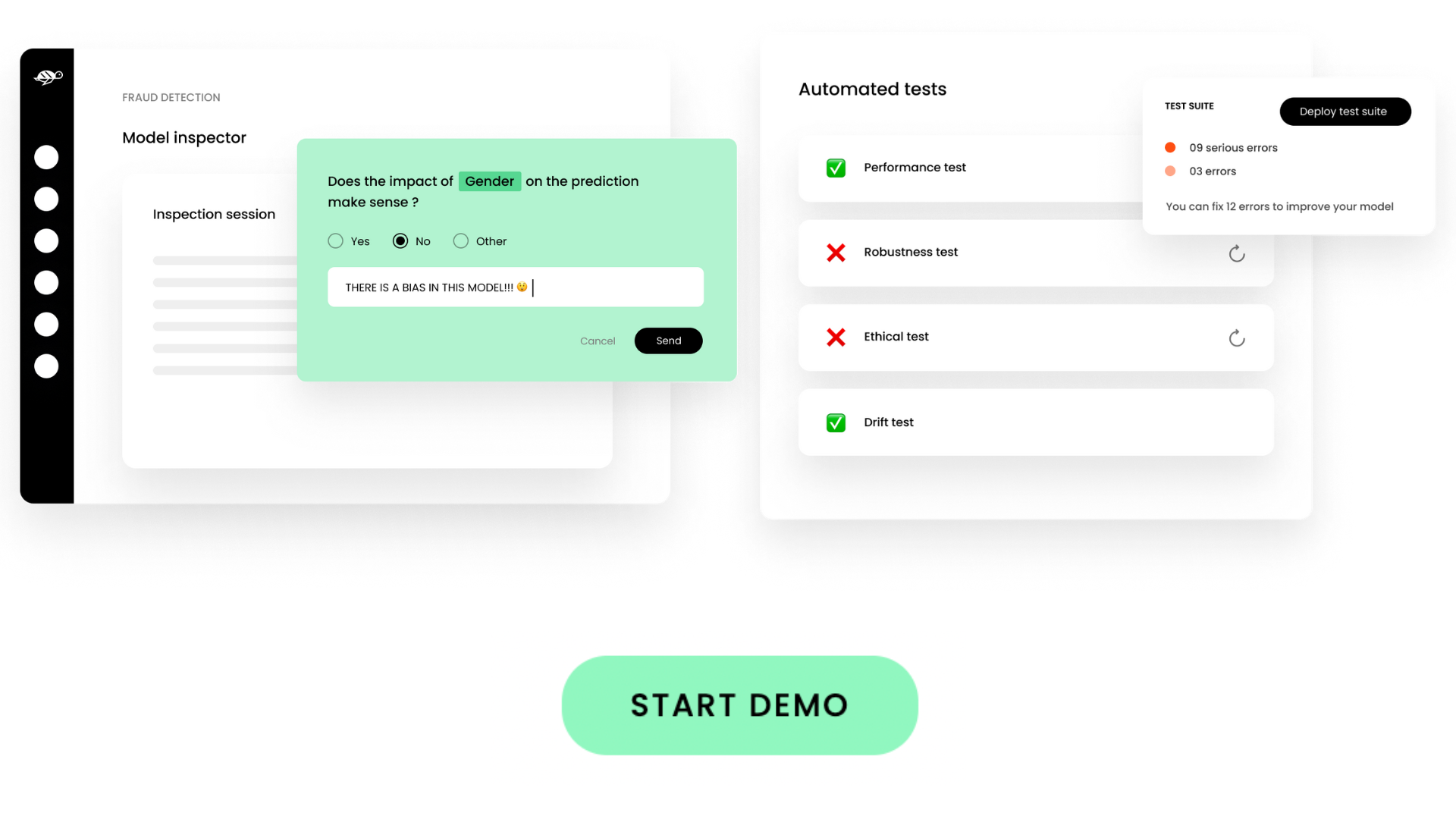

Spot anything strange? Leave feedback directly within Giskard so that your team can explore the query that generated the faulty result. Designed for both tech and business users, Giskard is super intuitive to use! 👌

And of course, Giskard works with any model and any environment, and integrates seamlessly with your favorite tools.

- instantly see the model's prediction for a given set of feature values

- change the values directly in Giskard and see the prediction change

- works with any type of model, dataset and environment

- leave notes and tag teammates directly within the Giskard interface

- use discussion threads to have all information centralized for easier follow-up and decision making

- enjoy Giskard's super-intuitive design, made with both tech and business users in mind

- turn the collected feedback into executable tests for safe deployment. Giskard provides presets of tests so that you design and execute your tests in no time

- receive actionable alerts on AI model bugs in production

- protect your ML models against the risk of regressions, drift and bias

- Explore your ML model: Easily upload any Python model: PyTorch, TensorFlow, 🤗 Transformers, Scikit-learn, etc. Play with the model to test its performance.

- Discuss and analyze feedback: Enter feedback directly within Giskard and discuss it with your team.

- Turn feedback into tests: Use Giskard test presets to design and execute your tests in no time.
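As a rough sketch of the last step, here is one way a piece of feedback could be captured as an executable test. This is plain Python, not Giskard's API: the `Feedback` record, `feedback_to_test`, `run_tests` and the toy model are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Features = Dict[str, float]
Model = Callable[[Features], int]  # hypothetical: maps feature values to a class label


@dataclass(frozen=True)
class Feedback:
    """Hypothetical record of a reviewer's note on one prediction."""
    features: Features
    expected_label: int
    comment: str


def feedback_to_test(feedback: Feedback) -> Callable[[Model], bool]:
    """Build a test that passes when the model predicts the label the reviewer expected."""
    def test(model: Model) -> bool:
        return model(feedback.features) == feedback.expected_label
    return test


def run_tests(model: Model, tests: List[Callable[[Model], bool]]) -> List[bool]:
    """Run every test against the model and return one pass/fail flag per test."""
    return [test(model) for test in tests]


def toy_model(row: Features) -> int:
    """Toy stand-in for a real model: approve (1) when income exceeds three times debt."""
    return int(row["income"] > 3 * row["debt"])


# One reviewer agrees with the model, another flags a result as faulty.
notes = [
    Feedback({"income": 50.0, "debt": 10.0}, expected_label=1, comment="looks right"),
    Feedback({"income": 20.0, "debt": 10.0}, expected_label=1, comment="should be approved"),
]

if __name__ == "__main__":
    print(run_tests(toy_model, [feedback_to_test(n) for n in notes]))
```

Keeping each note as data, rather than hard-coding the assertion, means the same tests can be rerun against every new model version.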

Giskard lets you automate tests in any of the categories below:

- Metamorphic testing: Test if your model outputs behave as expected before and after input perturbation

- Statistical testing: Test if your model output respects some business rules

- Performance testing: Test if your model performance is sufficiently high within some particular data slices

- Data drift testing: Test if your features don't drift between the reference and actual dataset

- Prediction drift testing: Test the absence of concept drift inside your model

Play with Giskard before installing! Click the image below to start the demo:

Are you a developer? Check our developer's readme
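To give a feel for two of the test categories listed above, here is a minimal sketch of a metamorphic invariance check and a data drift check based on the Population Stability Index (PSI). It is an illustration in plain Python under stated assumptions, not Giskard's own implementation or API: the function names, the toy model and the choice of PSI as the drift measure are all assumptions made for this example.

```python
import math
from typing import Callable, Dict, List, Sequence

Row = Dict[str, float]
Model = Callable[[Row], int]  # hypothetical: maps one row of features to a class label


def metamorphic_invariance_rate(model: Model, rows: Sequence[Row],
                                perturb: Callable[[Row], Row]) -> float:
    """Fraction of rows whose prediction is unchanged after the perturbation."""
    unchanged = sum(model(row) == model(perturb(row)) for row in rows)
    return unchanged / len(rows)


def population_stability_index(reference: Sequence[float], actual: Sequence[float],
                               bins: int = 5, eps: float = 1e-6) -> float:
    """PSI for one numeric feature, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # eps keeps empty bins from producing log(0) or a division by zero
        return [max(c / len(values), eps) for c in counts]

    ref, act = shares(reference), shares(actual)
    return sum((a - r) * math.log(a / r) for r, a in zip(ref, act))


def toy_model(row: Row) -> int:
    """Toy stand-in for a real model: ignores age, approves when income > 3 * debt."""
    return int(row["income"] > 3 * row["debt"])


def bump_age(row: Row) -> Row:
    """Perturbation that should not change the prediction of toy_model."""
    return dict(row, age=row["age"] + 1)


rows = [
    {"income": 50.0, "debt": 10.0, "age": 30.0},
    {"income": 20.0, "debt": 10.0, "age": 45.0},
]
reference_ages = [float(v) for v in range(100)]
shifted_ages = [v + 50.0 for v in reference_ages]

if __name__ == "__main__":
    print("invariance rate:", metamorphic_invariance_rate(toy_model, rows, bump_age))
    print("PSI, same data:", population_stability_index(reference_ages, reference_ages))
    print("PSI, shifted data:", population_stability_index(reference_ages, shifted_ages))
```

A test would then compare each number against a threshold of your choosing, for example requiring an invariance rate of 1.0, or a PSI below 0.25 (a commonly cited rule of thumb for significant drift).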

Requirements: git, docker and docker-compose

git clone https://github.com/Giskard-AI/giskard.git

cd giskard

docker-compose up -d

That's it. Access Giskard at http://localhost:19000 with login/password: admin/admin.
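The containers can take a moment to start. If you want to check from a script that something is answering on the port given above, a small standard-library sketch like the following may help. The function name `is_up` is hypothetical, and the check only confirms that an HTTP server responds at the address, not that Giskard is fully initialized.

```python
import http.client
import urllib.error
import urllib.request


def is_up(url: str = "http://localhost:19000", timeout: float = 3.0) -> bool:
    """Return True if an HTTP server answers at url, whatever the status code."""
    # Bypass any system proxy, since the target is a local address.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just not with a success status.
        return True
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, or a malformed response.
        return False


if __name__ == "__main__":
    print("Giskard reachable:", is_up())
```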

Follow our handy guides to get started on the basics as quickly as possible:

As our product is young, working in close collaboration with our first users is very important to identify what to improve, and how we can deliver value. It needs to be a Win-Win scenario!

If you are interested in joining our Design Partner program, drop us a line at [email protected].

❗️ The final entry date is September 2022 ❗️

We welcome contributions from the Machine Learning community!

Read this guide to get started.

🌟 Leave us a star! It helps the project get discovered by others and keeps us motivated to build awesome open-source tools! 🌟