- Distributed processing

- MongoDB storage

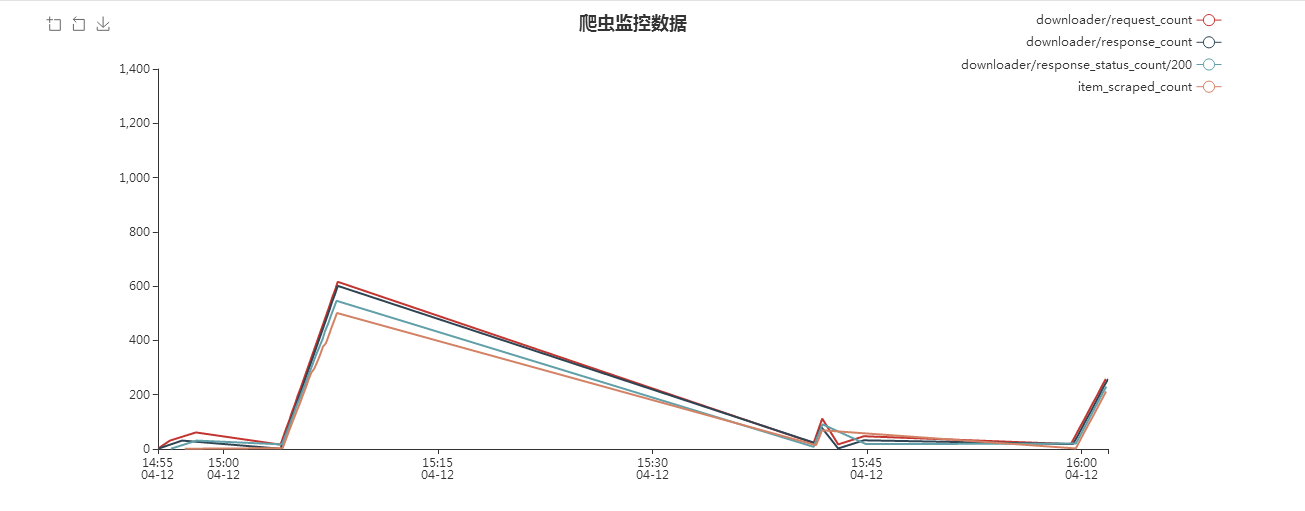

- Crawler monitoring

- Resumable crawling (picks up where the previous run stopped)

- scrapy

- redis

- MongoDB

- redis

- scrapy-redis

- flask

- If you have trouble installing scrapy, see: https://blog.csdn.net/junmoxi/article/details/70519598

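- Start the MongoDB service

- Start the Redis service

- Run app.py in the monitor directory to start the monitoring system

- Run lpush.py to push the start URL onto the queue

- Run start.py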
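The lpush.py step above seeds the Redis queue that the spiders read from. The project's own script is not reproduced here; the following is only a minimal sketch of what such a seeding script might look like with redis-py. The key `myspider:start_urls`, the example URL, the connection details, and the helper names are all assumptions (scrapy-redis reads from `<spider name>:start_urls` by default, so the real key depends on your spider's name).

```python
def push_start_urls(client, key, urls):
    """Push each URL onto the Redis list `key` and return how many were pushed.

    `client` is any object with an `lpush(key, value)` method, e.g. redis.Redis.
    """
    pushed = 0
    for url in urls:
        client.lpush(key, url)
        pushed += 1
    return pushed


def main():
    # Imported here so the helper above can be used without redis-py installed.
    import redis

    # Assumed connection details: a local Redis on the default port.
    client = redis.Redis(host="localhost", port=6379, db=0)
    # Hypothetical key and URL; replace with your spider's queue key and start page.
    count = push_start_urls(client, "myspider:start_urls", ["https://example.com/list"])
    print(f"pushed {count} start URL(s)")
```

Calling `main()` once Redis is running would enqueue the start URL, matching the order of the steps above.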

Clone the monitor directory so that it sits at the same level as the spiders directory, then add the following settings to Scrapy's settings.py:

- Add to DOWNLOADER_MIDDLEWARES

monitor.statscol.StatcollectorMiddleware

- Add to ITEM_PIPELINES

monitor.statscol.SpiderRunStatspipeline

- Add STATS_KEYS

STATS_KEYS = ['downloader/request_count', 'downloader/response_count', 'downloader/response_status_count/200', 'item_scraped_count']
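Put together, the three additions above might look like this in settings.py. The class paths and the `STATS_KEYS` values come from the instructions above; the priority numbers (`543`, `300`) are placeholder assumptions. If your project already defines these dicts, you would add the entries to the existing ones rather than redefine them.

```python
# settings.py (fragment): hooks for the monitor package

DOWNLOADER_MIDDLEWARES = {
    # Priority value is an assumption; choose one that fits your middleware order.
    "monitor.statscol.StatcollectorMiddleware": 543,
}

ITEM_PIPELINES = {
    # Priority value is an assumption as well.
    "monitor.statscol.SpiderRunStatspipeline": 300,
}

# Stat names taken from the instructions above.
STATS_KEYS = [
    "downloader/request_count",
    "downloader/response_count",
    "downloader/response_status_count/200",
    "item_scraped_count",
]
```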

- Install the modules:

- pip install bsddb3

- pip install scrapy-deltafetch

- pip install scrapy-magicfields

If installing bsddb3 fails, download a prebuilt bsddb3 package from https://www.lfd.uci.edu/~gohlke/pythonlibs/ and install it with pip install <package-name>.whl
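After installing, one quick way to confirm the modules can be found is to look them up on the import path. This is a small sketch and not part of the project: `missing_modules` is a hypothetical helper, and the import names `bsddb3`, `scrapy_deltafetch`, and `scrapy_magicfields` are assumed to correspond to the packages listed above.

```python
import importlib.util


def missing_modules(names):
    """Return the subset of top-level module `names` not found on the import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def main():
    # Assumed import names for the three packages installed above.
    required = ["bsddb3", "scrapy_deltafetch", "scrapy_magicfields"]
    missing = missing_modules(required)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All modules found.")


if __name__ == "__main__":
    main()
```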

- Import the files

Copy the scrapy_deltafetch and scrapy_magicfields folders into your project directory

- Add configuration: add the following to Scrapy's settings.py

    SPIDER_MIDDLEWARES = {
        'zufang.scrapy_deltafetch.middleware.DeltaFetch': 50,
        'scrapy_magicfields.middleware.MagicFieldsMiddleware': 51,
    }
    DELTAFETCH_ENABLED = True
    MAGICFIELDS_ENABLED = True
    MAGIC_FIELDS = {
        "url": "scraped from $response:url",
    }
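To make the idea behind resumable crawling concrete: a delta-fetch style middleware keeps a persistent record of pages it has already handled and skips them on later runs. The following is an illustrative sketch of that idea only, using the standard-library `dbm` module in place of Berkeley DB. It is not the code of scrapy-deltafetch, and `SeenStore`, `fingerprint`, `urls_to_fetch`, and `demo` are hypothetical names.

```python
import dbm
import hashlib
import os
import tempfile


def fingerprint(url):
    """Map a URL to a fixed-length bytes key."""
    return hashlib.sha1(url.encode("utf-8")).hexdigest().encode("ascii")


class SeenStore:
    """Persistent set of URL fingerprints backed by a dbm file."""

    def __init__(self, path):
        # "c" opens the database for reading and writing, creating it if needed.
        self._db = dbm.open(path, "c")

    def is_seen(self, url):
        return fingerprint(url) in self._db

    def mark_seen(self, url):
        self._db[fingerprint(url)] = b"1"

    def close(self):
        self._db.close()


def urls_to_fetch(store, urls):
    """Keep only the URLs that were not recorded in an earlier run."""
    return [url for url in urls if not store.is_seen(url)]


def demo():
    """Simulate two runs sharing one store; return what each run would fetch."""
    path = os.path.join(tempfile.mkdtemp(), "seen")
    urls = ["https://example.com/a", "https://example.com/b"]

    store = SeenStore(path)      # first run: nothing recorded yet
    first = urls_to_fetch(store, urls)
    store.mark_seen(urls[0])     # pretend page "a" yielded an item
    store.close()

    store = SeenStore(path)      # second run reopens the same file
    second = urls_to_fetch(store, urls)
    store.close()
    return first, second
```

In `demo()`, the first run would fetch both URLs, while the second run skips the one that was recorded, which is the behaviour that lets an interrupted crawl continue without redoing finished pages.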