Yining Hong, Haoyu Zhen, Peihao Chen, Shuhong Zheng, Yilun Du, Zhenfang Chen, Chuang Gan

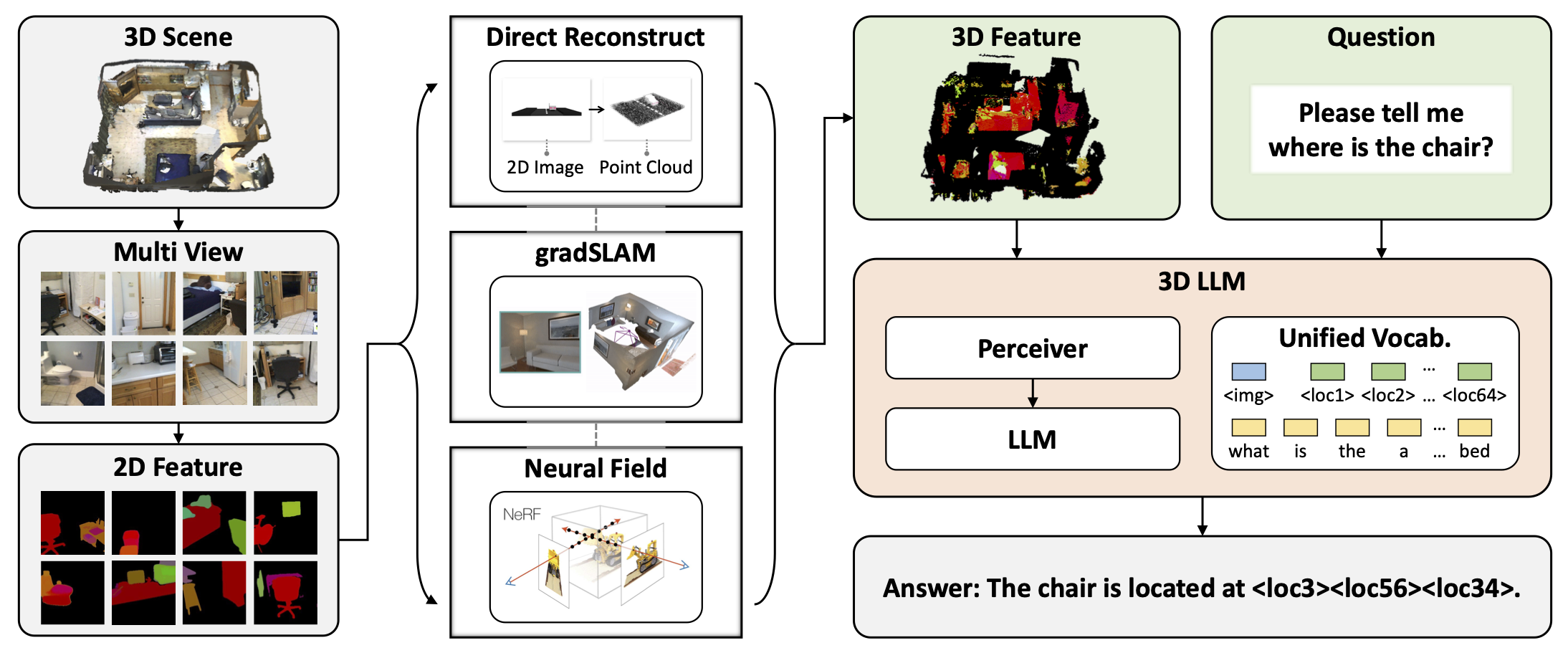

3D-LLM is the first Large Language Model that can take 3D representations as input. It handles both object data (e.g., Objaverse) and scene data (e.g., ScanNet and HM3D).

Install salesforce-lavis

$ conda create -n lavis python=3.8

$ conda activate lavis

$ git clone https://github.com/salesforce/LAVIS.git SalesForce-LAVIS

$ cd SalesForce-LAVIS

$ pip install -e .

$ pip install positional_encodings

Pretrained checkpoints are released (please use v2!).

Finetuning checkpoints for ScanQA, SQA3D, and 3DMV-VQA are released. The results are better than those in the preprint version of the paper. We will update the arXiv version to the camera-ready paper soon.

Download the objaverse subset features here. Download the pretrained checkpoints. For more details, please refer to 3DLLM_BLIP2-base/DEMO.md.

$ cd 3DLLM_BLIP2-base

$ conda activate lavis

$ python inference.py # for objects

$ python inference.py --mode room # for scenes

TODO: huggingface auto load checkpoint.

Finetuning config yaml files that need to be changed are in this directory

- Download the pretrained checkpoints. Modify the "resume_checkpoint_path" path in the yaml files

- Download the questions, modify the "annotations" path in the yaml files

- Download the scannet features or 3dmv-vqa features. Modify the path (both train and val) in lavis/datasets/datasets/threedvqa_datasets.py

$ cd 3DLLM_BLIP2-base

$ conda activate lavis

$ python -m torch.distributed.run --nproc_per_node=8 train.py --cfg-path lavis/projects/blip2/train/<finetune_yaml_file>

You can also load the finetuning checkpoints here.

Calculating scores

$ cd calculate_scores

$ python calculate_score_<task>.py --folder <your result dir> --epoch <your epoch>

Please also modify the feature and question paths in the scripts.

TODO: huggingface auto load checkpoint.

All data will be gradually released in Google Drive and Huggingface (All files are released in Google Drive first and then Huggingface. Please refer to the Google Drive for file structure)

We are still cleaning the grounding & navigation part. All other pre-training data are released.

Language annotations of object data are released here.

For downloading Objaverse data, please refer to Objaverse website.

To get 3D features and point clouds of the Objaverse data, please refer to Step1 and Step3 of 3DLanguage Data generation - ChatCaptioner based

A small set of objaverse features is released here.

TODO: We will probably release the whole set of Objaverse 3D features

3D features and point clouds (~250G) are released here. However, if you want to generate the features yourself, please refer to the Three-step 3D Feature Extraction part here. Please use v2 to be consistent with the checkpoints (it also gives better performance).

3D features and point clouds of ScanNet (used for finetuning ScanQA and SQA3D) are released here. 3D features and point clouds of 3DMV-VQA are released here (3DMV-VQA data will be further updated for a clearer structure).

All questions can be found here.

Follow the instructions in 3DLanguage_data/ChatCaptioner_based/objaverse_render/README.md for installation.

The following command renders images of an Objaverse scene (e.g., f6e9ec5953854dff94176c36b877c519). The rendered images are saved to 3DLanguage_data/ChatCaptioner_based/objaverse_render/output.

(Please refer to 3DLanguage_data/ChatCaptioner_based/objaverse_render/README.md for more details about the command.)

$ cd ./3DLanguage_data/ChatCaptioner_based/objaverse_render

$ {path/to/blender} -b -P render.py -noaudio --disable-crash-handler -- --uid f6e9ec5953854dff94176c36b877c519
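To render more than one uid, the command above can be generated in a loop. This is a minimal sketch, not a script from the repository: `build_render_command` is a hypothetical helper, `/path/to/blender` is a placeholder, and the flags are copied unchanged from the command above. The sketch only prints the commands; it does not launch Blender.

```python
import shlex
from typing import List


def build_render_command(blender_path: str, uid: str) -> List[str]:
    """Return the argv list for rendering one Objaverse uid.

    The flags mirror the command shown above; nothing is executed here.
    """
    return [
        blender_path, "-b", "-P", "render.py",
        "-noaudio", "--disable-crash-handler",
        "--", "--uid", uid,
    ]


uids = ["f6e9ec5953854dff94176c36b877c519"]  # extend with further uids
for uid in uids:
    cmd = build_render_command("/path/to/blender", uid)
    # To actually render, run this from the objaverse_render directory,
    # for example with subprocess.run(cmd, check=True).
    print(shlex.join(cmd))
```

Building the command as a list rather than a single string avoids shell quoting issues if the Blender path contains spaces.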

Installation:

Please follow ChatCaptioner to install the environment.

The following command reads the rendered images of an Objaverse scene (e.g., f6e9ec5953854dff94176c36b877c519) and generates a scene caption at 3DLanguage_data/ChatCaptioner_based/output.

$ cd ./3DLanguage_data/ChatCaptioner_based

$ python chatcaption.py --specific_scene f6e9ec5953854dff94176c36b877c519

Follow the instructions in 3DLanguage_data/ChatCaptioner_based/gen_features/README.md for extracting 3D features from rendered images.

$ cd ./3DLanguage_data/ChatCaptioner_based/gen_features

TODO

TODO

This section is for constructing 3D features for scene data. If you already downloaded our released scene data, please skip this section.

Installation:

Please follow Mask2Former to install the environment and download the pretrained weight to the current directory if extracting the masks with Mask2Former.

Please follow Segment Anything to install the environment and download the pretrained weight to the current directory if extracting the masks with SAM.

Extract masks with Mask2Former:

$ cd ./three_steps_3d_feature/first_step

$ python maskformer_mask.py --scene_dir_path DATA_DIR_WITH_RGB_IMAGES --save_dir_path DIR_YOU_WANT_TO_SAVE_THE_MASKS

Extract masks with Segment Anything:

$ cd ./three_steps_3d_feature/first_step

$ python sam_mask.py --scene_dir_path DATA_DIR_WITH_RGB_IMAGES --save_dir_path DIR_YOU_WANT_TO_SAVE_THE_MASKS

After the first step, you should have a directory of masks (specified by --save_dir_path) that contains the extracted masks for the multi-view images of the scenes.

Note: BLIP features are for LAVIS(BLIP2), CLIP features are for open-flamingo.

Installation: Install salesforce-lavis as described in the 3D-LLM_BLIP2-based section.

There are four options: (1) Extract CLIP feature with Mask2Former masks; (2) Extract CLIP feature with SAM masks; (3) Extract BLIP feature with Mask2Former masks; (4) Extract BLIP feature with SAM masks.

Extract 2D CLIP features with Mask2Former masks:

$ cd ./three_steps_3d_feature/second_step/

$ python clip_maskformer.py --scene_dir_path DATA_DIR_WITH_RGB_IMAGES --mask_dir_path MASK_DIR_FROM_1ST_STEP --save_dir_path DIR_YOU_WANT_TO_SAVE_THE_FEAT

For the other options, the scripts follow a similar format.

After the second step, you should have a directory of features (specified by --save_dir_path) that contains the 2D features for the multi-view images of the scenes.
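The first two steps are linked by one directory: the mask directory written by step one is the --mask_dir_path input of step two. The sketch below makes that wiring explicit. It is an illustration under stated assumptions, not repository code: the helper functions are hypothetical, the directory names are placeholders, and only the script names and flags that appear in this README are used. Nothing is executed; the functions only build argument lists.

```python
from typing import Dict, List

# Script names as they appear in the first-step commands of this README.
FIRST_STEP_SCRIPTS: Dict[str, str] = {
    "mask2former": "maskformer_mask.py",
    "sam": "sam_mask.py",
}


def first_step_command(mask_model: str, scene_dir: str, mask_dir: str) -> List[str]:
    """Build the mask-extraction command for the chosen mask model."""
    script = FIRST_STEP_SCRIPTS[mask_model]
    return ["python", script,
            "--scene_dir_path", scene_dir,
            "--save_dir_path", mask_dir]


def second_step_clip_maskformer_command(
    scene_dir: str, mask_dir: str, feat_dir: str
) -> List[str]:
    """Build the CLIP + Mask2Former feature command (option 1)."""
    return ["python", "clip_maskformer.py",
            "--scene_dir_path", scene_dir,
            "--mask_dir_path", mask_dir,   # output directory of step one
            "--save_dir_path", feat_dir]


# Placeholder directories, for illustration only.
scene_dir, mask_dir, feat_dir = "data/rgb", "out/masks", "out/feat_2d"
print(first_step_command("mask2former", scene_dir, mask_dir))
print(second_step_clip_maskformer_command(scene_dir, mask_dir, feat_dir))
```

Each command is meant to be run from its own step directory (first_step and second_step respectively), as in the commands above.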

Installation:

Please install the Habitat environment.

Reconstruct 3D feature from multi-view 2D features:

$ cd ./three_steps_3d_feature/third_step/

$ python sam_mask.py --data_dir_path DATA_DIR_WITH_RGB_IMAGES --depth_dir_path DATA_DIR_WITH_DEPTH_IMAGES --feat_dir_path FEATURE_DIR_FROM_2ND_STEP

After the third step, you should have two files (pcd_pos.pt and pcd_feat.pt) for each room inside the corresponding RGB directory.

pcd_pos.pt contains the point positions of the 3D point cloud (shape: N * 3). pcd_feat.pt contains the point features of the 3D point cloud (shape: N * n_dim).

N is the number of sampled points in the point cloud (default: 300000) and n_dim is the feature dimension (1024 for CLIP feature, 1408 for BLIP feature).
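A quick sanity check on the two output files can catch a mismatched feature type early. The sketch below is an illustration under stated assumptions rather than repository code: the function names are hypothetical, and `load_and_validate` assumes that both files were written with `torch.save` and hold array-like objects with a `.shape` attribute. The expected dimensions (3 for positions, 1024 for CLIP, 1408 for BLIP) are the ones stated above.

```python
from typing import Sequence

# Feature dimensions stated above.
FEATURE_DIMS = {"clip": 1024, "blip": 1408}


def validate_point_cloud_shapes(
    pos_shape: Sequence[int], feat_shape: Sequence[int], feature_type: str
) -> int:
    """Check (N, 3) positions against (N, n_dim) features and return N."""
    n_dim = FEATURE_DIMS[feature_type]
    if len(pos_shape) != 2 or pos_shape[1] != 3:
        raise ValueError(f"pcd_pos should be (N, 3), got {tuple(pos_shape)}")
    if len(feat_shape) != 2 or feat_shape[1] != n_dim:
        raise ValueError(
            f"pcd_feat should be (N, {n_dim}), got {tuple(feat_shape)}"
        )
    if pos_shape[0] != feat_shape[0]:
        raise ValueError("pcd_pos and pcd_feat disagree on the number of points")
    return pos_shape[0]


def load_and_validate(room_dir: str, feature_type: str) -> int:
    """Load both files from one room directory and validate their shapes."""
    import os
    import torch  # imported lazily so the shape check itself needs no PyTorch

    pos = torch.load(os.path.join(room_dir, "pcd_pos.pt"), map_location="cpu")
    feat = torch.load(os.path.join(room_dir, "pcd_feat.pt"), map_location="cpu")
    return validate_point_cloud_shapes(
        tuple(pos.shape), tuple(feat.shape), feature_type
    )


# Shape-only example using the default point count and the BLIP dimension.
print(validate_point_cloud_shapes((300000, 3), (300000, 1408), "blip"))
```

Calling `load_and_validate("path/to/room", "blip")` on a real room directory (placeholder path) would run the same check on the files produced by the third step.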

Refer to Concept Fusion.

We will also release our reproduced version of Concept Fusion for our feature generation (we reproduced the paper before their official release).

Please refer to 3D-CLR repository.

$ cd 3DLLM_BLIP2-base

$ conda activate lavis

# use facebook/opt-2.7b:

$ TODO

# use flant5

$ python -m torch.distributed.run --nproc_per_node=8 train.py --cfg-path lavis/projects/blip2/train/pretrain.yaml

TODO.

If you find our work useful, please consider citing:

@article{3dllm,

author = {Hong, Yining and Zhen, Haoyu and Chen, Peihao and Zheng, Shuhong and Du, Yilun and Chen, Zhenfang and Gan, Chuang},

title = {3D-LLM: Injecting the 3D World into Large Language Models},

journal = {NeurIPS},

year = {2023},

}

https://github.com/salesforce/LAVIS

https://github.com/facebookresearch/Mask2Former

https://github.com/facebookresearch/segment-anything

https://github.com/mlfoundations/open_flamingo