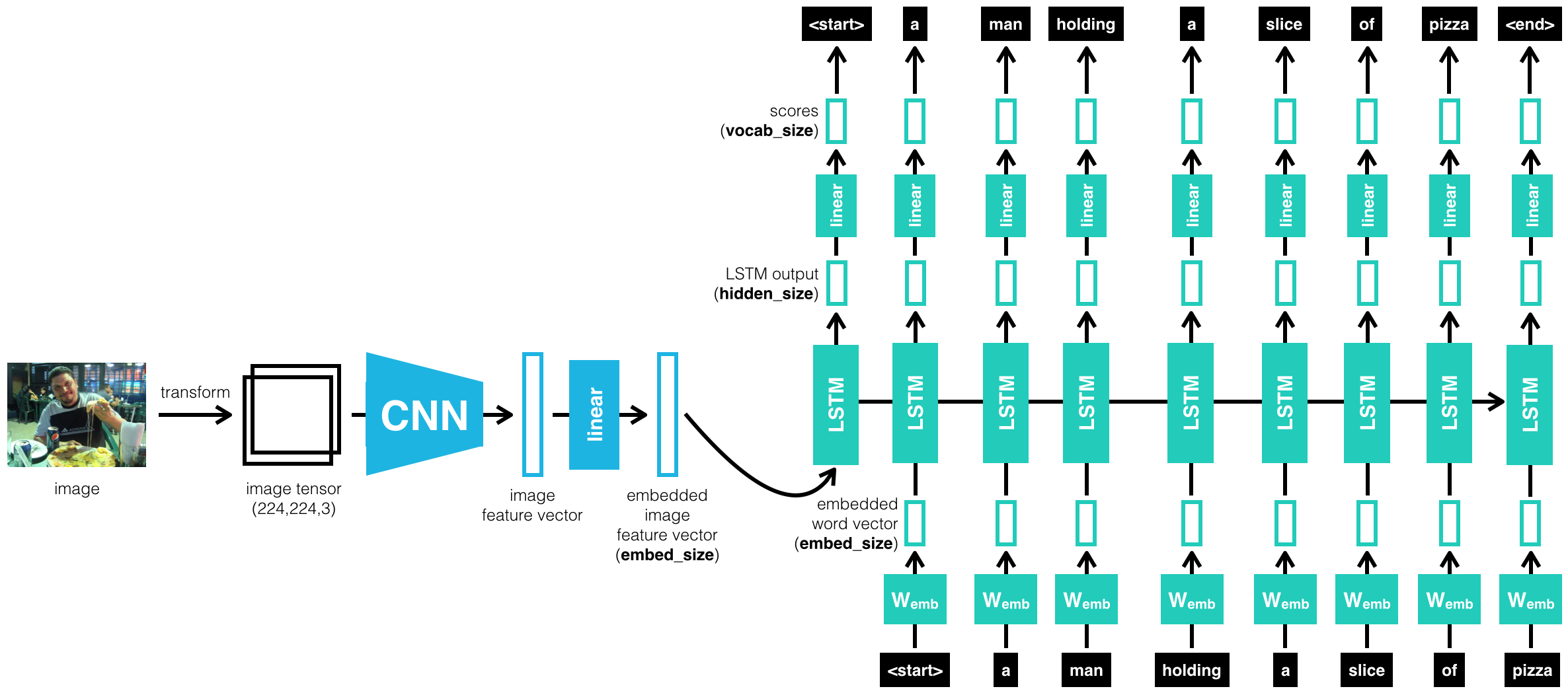

This project combines deep convolutional networks for image classification with recurrent networks for sequence modeling to create a single network that generates descriptions of images, using the COCO (Common Objects in Context) dataset.
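As a rough guide to what such a CNN-RNN network is trained to do, the objective can be written in the form used by the Show and Tell paper (arXiv:1411.4555, listed at the end of this README). Here $I$ is an image, $S = (S_0, \dots, S_N)$ is its caption as a word sequence, and $\theta$ are the model parameters. Whether the notebooks in this project use exactly this objective is an assumption.

$$
\theta^{*} = \arg\max_{\theta} \sum_{(I,S)} \log p(S \mid I; \theta),
\qquad
\log p(S \mid I; \theta) = \sum_{t=0}^{N} \log p(S_t \mid I, S_0, \dots, S_{t-1}; \theta)
$$

In this formulation the convolutional network supplies the image representation and the recurrent network models each conditional term $p(S_t \mid I, S_0, \dots, S_{t-1}; \theta)$.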

COCO is a large image dataset designed for object detection, segmentation, person keypoint detection, stuff segmentation, and caption generation. GPU-accelerated computing (CUDA) is necessary for this project.

- Clone this repo: https://github.com/cocodataset/cocoapi

git clone https://github.com/cocodataset/cocoapi.git

- Set up the COCO API (also described in that repo's readme)

cd cocoapi/PythonAPI

make

cd ..

- Download some specific data from here: https://cocodataset.org/#download (described below)

- Under Annotations, download:

  - 2017 Train/Val annotations [241MB] (extract captions_train2017.json and captions_val2017.json, and place them at cocoapi/annotations/captions_train2017.json and cocoapi/annotations/captions_val2017.json, respectively)

  - 2017 Testing Image info [1MB] (extract image_info_test2017.json and place it at cocoapi/annotations/image_info_test2017.json)

- Under Images, download:

  - 2017 Train images [118K/18GB] (extract the train2017 folder and place it at cocoapi/images/train2017/)

  - 2017 Val images [5K/1GB] (extract the val2017 folder and place it at cocoapi/images/val2017/)

  - 2017 Test images [41K/6GB] (extract the test2017 folder and place it at cocoapi/images/test2017/)
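Because the downloads are large and easy to misplace, it can help to check the layout before opening the notebooks. The following is a minimal sketch using only the Python standard library. The function name `missing_coco_paths` is hypothetical and not part of this project, and it assumes the script runs from the directory that contains the `cocoapi` checkout.

```python
from pathlib import Path

# Paths relative to the cocoapi checkout, as listed in the download steps.
EXPECTED_PATHS = [
    "annotations/captions_train2017.json",
    "annotations/captions_val2017.json",
    "annotations/image_info_test2017.json",
    "images/train2017",
    "images/val2017",
    "images/test2017",
]


def missing_coco_paths(cocoapi_root):
    """Return the expected paths that do not exist under cocoapi_root."""
    root = Path(cocoapi_root)
    return [rel for rel in EXPECTED_PATHS if not (root / rel).exists()]


if __name__ == "__main__":
    # Assumption: the cocoapi folder sits in the current working directory.
    missing = missing_coco_paths("cocoapi")
    if missing:
        print("Missing:", ", ".join(missing))
    else:
        print("All expected COCO files and folders are in place.")
```

The check only confirms that the files and folders exist. It does not verify that the archives were extracted completely.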

The project is structured as a series of Jupyter notebooks that are designed to be completed in sequential order:

Notebook 0 : Microsoft Common Objects in COntext (MS COCO) dataset;

Notebook 1 : Load and pre-process data from the COCO dataset;

Notebook 2 : Training the CNN-RNN Model;

Notebook 3 : Load trained model and generate predictions.
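To give a feel for the loading and pre-processing step, here is a minimal sketch in plain Python of turning COCO-style caption annotations into integer sequences that a recurrent network can consume. It is not the code from the notebooks. The special-token names, the tokenizer, and the helper names (`captions_by_image`, `build_vocab`, `encode`) are all illustrative assumptions. The only thing taken from COCO is that the captions JSON has an `annotations` list whose entries carry `image_id` and `caption`.

```python
import re
from collections import Counter, defaultdict

# Special tokens: illustrative names, not taken from the project.
PAD, START, END, UNK = "<pad>", "<start>", "<end>", "<unk>"


def captions_by_image(coco_captions):
    """Group caption strings by image id from a COCO captions dict."""
    grouped = defaultdict(list)
    for ann in coco_captions["annotations"]:
        grouped[ann["image_id"]].append(ann["caption"])
    return dict(grouped)


def tokenize(caption):
    """Lowercase the caption and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", caption.lower())


def build_vocab(captions, min_count=1):
    """Map each sufficiently frequent word to an integer id."""
    counts = Counter(tok for c in captions for tok in tokenize(c))
    words = sorted(w for w, n in counts.items() if n >= min_count)
    return {w: i for i, w in enumerate([PAD, START, END, UNK] + words)}


def encode(caption, vocab):
    """Convert a caption to ids, wrapped in start and end tokens."""
    ids = [vocab.get(tok, vocab[UNK]) for tok in tokenize(caption)]
    return [vocab[START]] + ids + [vocab[END]]


# Toy data in the same shape as the "annotations" list of a captions file.
toy = {"annotations": [
    {"image_id": 1, "caption": "A dog runs."},
    {"image_id": 1, "caption": "A brown dog."},
    {"image_id": 2, "caption": "Two cats sleep."},
]}
grouped = captions_by_image(toy)
all_captions = [c for caps in grouped.values() for c in caps]
vocab = build_vocab(all_captions)
print(encode("A dog jumps.", vocab))  # "jumps" is unseen, so it maps to <unk>
```

With the real data, the dict passed to `captions_by_image` would come from `json.load` on cocoapi/annotations/captions_train2017.json. Raising `min_count` keeps rare words out of the vocabulary so they fall back to the unknown token.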

$ git clone https://github.com/nalbert9/Image-Captioning.git

$ pip3 install -r requirements.txt

The following are a few results obtained after training the model for 3 epochs.

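- Microsoft COCO

- Show and Tell: A Neural Image Caption Generator, arXiv:1411.4555v2 [cs.CV], 20 Apr 2015

- Show, Attend and Tell: Neural Image Caption Generation with Visual Attention, arXiv:1502.03044v3 [cs.LG], 19 Apr 2016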