DanNet is a WordNet for the Danish language. The goal of this project is to represent DanNet in full using RDF as its native representation at the database level, in the application space, and as its primary serialisation format.

Special care has been taken to maximise the compatibility of this iteration of DanNet. Like the DanNet of yore, the base dataset of this iteration is published as both RDF (Turtle) and CSV. RDF is the native representation and can be loaded as-is inside a suitable RDF graph database, e.g. Apache Jena. The CSV files now more closely map the native RDF structure and are published along with column metadata as CSVW.

Apart from the base DanNet dataset, several companion datasets exist expanding it with additional data. The companion datasets collectively provide a broader view of the data with both implicit and explicit links to other data.

The COR companion dataset links DanNet resources to IDs from the COR project, while the DDS companion dataset decorates DanNet resources with sentiment data.

Additional data is also implicitly inferred from the base dataset, the aforementioned companion datasets, and any associated ontological metadata. Doing so can be both computationally expensive and mentally taxing for the consumer of the data, so having a fully realised complete dataset available for download is essential for some end users.

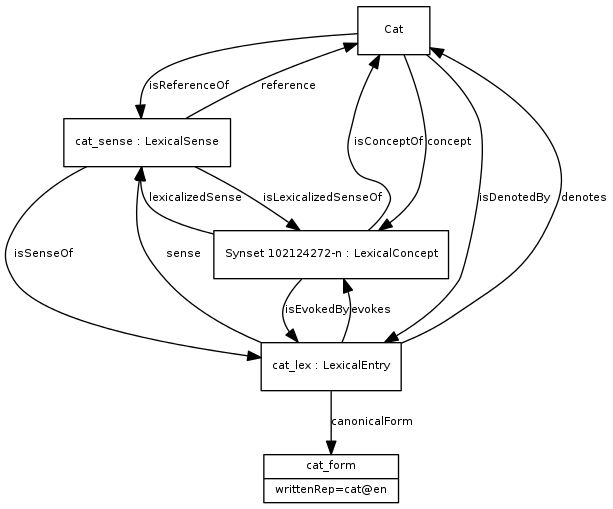

The old DanNet was modelled as tables inside a relational database. Two serialised representations also exist: RDF/XML 1.0 and a custom CSV format. The latter served as input for the new data model, remapping the relations described in these files onto a modern WordNet based on the Ontolex-lemon standard combined with the various relations defined by the Global Wordnet Association as used in the official GWA RDF standard.

In Ontolex-lemon...

- Synsets are analogous to ontolex:LexicalConcept.
- Wordsenses are analogous to ontolex:LexicalSense.
- Words are analogous to ontolex:LexicalEntry.
- Forms are analogous to ontolex:Form.
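The correspondence above can be written down as a small Clojure lookup table. This is only an illustrative sketch: the keywords on the left are hypothetical labels chosen for this example, while the class names on the right are the Ontolex-lemon terms listed above.

```clojure
;; Hypothetical keywords (left) mapped to the Ontolex-lemon classes named above.
(def dannet->ontolex
  {:synset    "ontolex:LexicalConcept"
   :wordsense "ontolex:LexicalSense"
   :word      "ontolex:LexicalEntry"
   :form      "ontolex:Form"})

(defn ontolex-class
  "Returns the Ontolex-lemon class for a DanNet entity kind, or nil if unknown."
  [kind]
  (get dannet->ontolex kind))

(ontolex-class :synset)
;; => "ontolex:LexicalConcept"
```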

By choosing these standards, we maximise DanNet's ability to integrate with other lexical resources, in particular with other WordNets.

In its native Clojure representation, DanNet can be queried in a variety of ways (described in queries.md). It is especially convenient to query data from within a Clojure REPL.

Support for Apache Jena transactions is built-in and enabled automatically when needed. This ensures support for persistence on disk through the TDB layer included with Apache Jena (mandatory for TDB 2). Both in-memory and persisted graphs can thus be queried using the same function calls. The DanNet website contains the complete dataset inside a TDB 2 graph.

Furthermore, DanNet query results are all decorated with support for the Clojure Navigable protocol. The entire RDF graph can therefore easily be navigated in tools such as Morse or Reveal from a single query result.
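To illustrate what Navigable support makes possible, here is a minimal, self-contained sketch that uses only clojure.datafy and clojure.core.protocols (Clojure 1.10 or later). The toy graph, the entity function, and the keywords are all hypothetical; this is not DanNet's actual implementation, which may differ.

```clojure
(require '[clojure.core.protocols :as p]
         '[clojure.datafy :as d])

;; Hypothetical toy graph: subject -> {predicate -> object}.
(def graph
  {:dog    {:label "hund" :hypernym :animal}
   :animal {:label "dyr"}})

(defn entity
  "Looks up a subject and attaches a nav implementation via metadata,
  so that values naming other subjects can be followed on demand."
  [id]
  (with-meta (get graph id)
    {`p/nav (fn [_coll _k v]
              (if (contains? graph v)
                (entity v)
                v))}))

;; Following :hypernym from :dog yields the :animal entity.
(d/nav (entity :dog) :hypernym :animal)
;; => {:label "dyr"}
```

Tools that understand nav can call it on every value they display, which is what lets a user walk from one result to its neighbours without issuing new queries by hand.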

DanNet uses a new schema, available in this repository and also at https://wordnet.dk/dannet/schema.

DanNet uses the following URI prefixes for the dataset instances, concepts (the range of dns:ontologicalFacet and dns:ontologicalType) and the schema itself:

- dn -> https://wordnet.dk/dannet/data/
- dnc -> https://wordnet.dk/dannet/concepts/
- dns -> https://wordnet.dk/dannet/schema/
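As a rough sketch of how these prefixes relate to full URIs, the following plain Clojure function expands a prefixed name. The names prefixes and expand are hypothetical helpers written for this example and are not part of the DanNet codebase.

```clojure
(require '[clojure.string :as str])

;; The three prefixes listed above.
(def prefixes
  {"dn"  "https://wordnet.dk/dannet/data/"
   "dnc" "https://wordnet.dk/dannet/concepts/"
   "dns" "https://wordnet.dk/dannet/schema/"})

(defn expand
  "Expands a prefixed name such as \"dns:ontologicalType\" into a full URI.
  Returns the input unchanged when the prefix is not registered."
  [qname]
  (let [[prefix local] (str/split qname #":" 2)]
    (if-let [base (and local (prefixes prefix))]
      (str base local)
      qname)))

(expand "dns:ontologicalType")
;; => "https://wordnet.dk/dannet/schema/ontologicalType"
```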

These new prefixes/URIs take over from the ones used for DanNet 2.2:

- dn -> http://www.wordnet.dk/owl/instance/2009/03/instances/
- dn_schema -> http://www.wordnet.dk/owl/instance/2009/03/schema/

All the new URIs resolve to HTTP resources, which is to say that accessing a resource with a GET request (e.g. through a web browser) returns data for the resource (or schema) in question.

Finally, the new DanNet schema is written in accordance with the RDF conventions listed by Philippe Martin.

The main database that the new tooling has been developed for is Apache Jena, which is a mature RDF triplestore that also supports OWL inferences. When represented inside Jena, the many relations of DanNet are turned into a queryable knowledge graph. The new DanNet is developed in the Clojure programming language (an alternative to Java on the JVM) which has multiple libraries for interacting with the Java-based Apache Jena, e.g. Aristotle and igraph-jena.

However, standardising on the basic RDF triple abstraction does open up a world of alternative data stores, query languages, and graph algorithms. See rationale.md for more.

Note: A more detailed explanation is available at doc/web.md.

The URIs of each of the resources in DanNet resolve to actual HTML pages with content relating to the resource at the IRI. The frontend is built using Rum and is served by Pedestal in the backend. If JavaScript is turned on, the initial HTML page becomes the entrypoint of a single-page app. If JavaScript is unavailable, this web app converts to a regular HTML website.

Every DanNet resource accessible through a web browser has both an HTML representation and several other representations which can be accessed via HTTP content negotiation. When JavaScript is disabled, usually only the HTML representation is used by the browser. However, when JavaScript is available, a frontend router (reitit) reroutes all navigation requests (e.g. clicking a hyperlink or submitting a form) towards fetching the application/transit+json representation instead. This data is used to refresh the Rum components, allowing them to update in place, while a "fake" browser history item is inserted by reitit. The very same Rum components are also used to render the static HTML webpages.
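Content negotiation can also be exercised outside the browser. The sketch below uses the JDK's built-in java.net.http client (Java 11 or later) to build a GET request with an Accept header asking for the application/transit+json representation. The function names and the example URL are hypothetical, and the response format is not decoded here.

```clojure
(import '(java.net URI)
        '(java.net.http HttpClient HttpRequest HttpResponse$BodyHandlers))

(defn transit-request
  "Builds (but does not send) a GET request asking for the
  application/transit+json representation of the given URL."
  [url]
  (-> (HttpRequest/newBuilder (URI/create url))
      (.header "Accept" "application/transit+json")
      (.GET)
      (.build)))

(defn fetch-body
  "Sends the request and returns the raw response body as a string.
  Requires network access; the body is returned undecoded."
  [^HttpRequest request]
  (.body (.send (HttpClient/newHttpClient)
                request
                (HttpResponse$BodyHandlers/ofString))))

;; Hypothetical resource URL, used only to show the call shape.
(def example-request
  (transit-request "https://wordnet.dk/dannet/data/example"))
```

Changing the Accept header is all that distinguishes this request from the one a browser sends for the HTML page at the same URL.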

Language negotiation is used to select the most suitable RDF data when multiple languages are available in the dataset.
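The idea can be sketched in a few lines of plain Clojure. This is an assumption-laden illustration rather than DanNet's actual algorithm: literals are represented as hypothetical maps with :value and :lang keys, and the function simply honours the caller's language preferences in order.

```clojure
(defn best-literal
  "Picks the literal whose language tag appears earliest in preferred-langs.
  Falls back to the first literal when no language matches."
  [preferred-langs literals]
  (or (some (fn [lang]
              (first (filter #(= lang (:lang %)) literals)))
            preferred-langs)
      (first literals)))

(best-literal ["en" "da"]
              [{:value "hund" :lang "da"}
               {:value "dog" :lang "en"}])
;; => {:value "dog", :lang "en"}
```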

The initial dataset was bootstrapped from the old DanNet 2.2 CSV files (technically: a slightly more recent, unpublished version) as well as several other input sources, e.g. the list of new adjectives produced by CST and DSL. This old CSV export mirrors the SQL tables of the old DanNet database.

This is a two-step process which happens in two separate namespaces:

- dk.cst.dannet.bootstrap: the raw data from the previous version of DanNet is loaded into memory, cleaned up, and converted into triple data structures using the new RDF schema structure.

- dk.cst.dannet.db: these triples are imported into an Apache Jena graph. Additional triples are either inferred through OWL schemas or added programmatically via queries.
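The two steps can be pictured with a toy example in plain Clojure. Everything here is hypothetical: the row keys, the keywords used as predicates, and both functions are invented for illustration, and the second step is only a stand-in for the OWL inference and query-based additions that DanNet performs inside Apache Jena.

```clojure
;; Step 1 (hypothetical): turn a cleaned-up row into
;; [subject predicate object] triples.
(defn row->triples
  [{:keys [id label hypernym]}]
  (cond-> [[id :label label]]
    hypernym (conj [id :hypernym hypernym])))

;; Step 2 (hypothetical): derive additional triples from existing ones,
;; here by adding an inverse :hyponym edge for every :hypernym edge.
(defn add-inverses
  [triples]
  (into (set triples)
        (for [[s p o] triples
              :when (= p :hypernym)]
          [o :hyponym s])))

;; Made-up input rows.
(def rows
  [{:id :synset-1 :label "dyr"}
   {:id :synset-2 :label "hund" :hypernym :synset-1}])

(add-inverses (mapcat row->triples rows))
;; => a set of four triples, including [:synset-1 :hyponym :synset-2]
```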

Finally, on the final run of this bootstrap process, the graph is exported into an RDF dataset. This dataset constitutes the new official version of DanNet.

NOTE: the data used for bootstrapping should be located inside the ./bootstrap subdirectory (relative to the execution directory).

This bootstrap process has been made obsolete now that the next version of DanNet is available. The canonical version of DanNet is now the RDF dataset published at wordnet.dk/dannet.

The code is all written in Clojure and it must be compiled to Java Bytecode and run inside a Java Virtual Machine (JVM). The primary means to do this is Clojure's official CLI tools which can both fetch dependencies and build/run Clojure code. The project dependencies are specified in the deps.edn file.

While developing, ideally you should be running code in a Clojure REPL.

However, when testing a release, you can either run the Docker Compose setup from inside the ./docker directory using the following command:

docker compose up --build

Usually, the Caddy container can keep running in between restarts, i.e. only the DanNet container should be rebuilt:

docker compose up -d dannet --build

NOTE: requires that the Docker daemon is installed and running!

Or you may build and run a new release manually from this directory:

shadow-cljs --aliases :frontend release app

clojure -T:build org.corfield.build/uber :lib dk.cst/dannet :main dk.cst.dannet.web.service :uber-file "\"dannet.jar\""

java -jar -Xmx4g dannet.jar

NOTE: requires that Java, Clojure, and shadow-cljs are all installed.

By default, the web service is accessed on localhost:3456. The data is loaded into a TDB2 database located in the ./db/tdb2 directory.

The current release workflow assumes that the database and the export files are created on a development machine and then transferred to the production server. During the transfer, the DanNet web service will momentarily be down, so keep this in mind!

To build the database, start a Clojure REPL and load the dk.cst.dannet.web.service namespace. From here, execute (restart) to get a service up and running. When the service is up, go to the dk.cst.dannet.db namespace and execute the following:

;; Note: exporting the complete dataset (including inferences) usually takes ~40-45 minutes

(export-rdf! @dk.cst.dannet.web.resources/db "export/rdf/" :complete true)

(export-csv! @dk.cst.dannet.web.resources/db)

Normally, the Caddy service can keep running, so only the DanNet service needs to be briefly stopped:

# from inside the docker/ directory on the production server

docker compose stop dannet

Once the service is down, the database and export files can be transferred using SFTP to the relevant directories on the server. The git commit on the production server should also match the uploaded data, of course!

The service is finally restarted with:

docker compose up -d dannet --build

Currently, the entire system, including the web service, uses ~1.4 GB of RAM when idle and ~3 GB when rebuilding the Apache Jena database. A server should therefore have roughly 4 GB of available RAM to run the full version of DanNet.

DanNet depends on React 17 since the React wrapper Rum depends on this version of React:

npm init -y

npm install react@17 react-dom@17 create-react-class@17

The easiest way to query DanNet currently is by compiling and running the Clojure code, then navigating to the dk.cst.dannet.db namespace in the Clojure REPL. From there, you can use a variety of query methods as described in queries.md.

For simple lemma searches, you can of course visit the official instance at wordnet.dk/dannet.