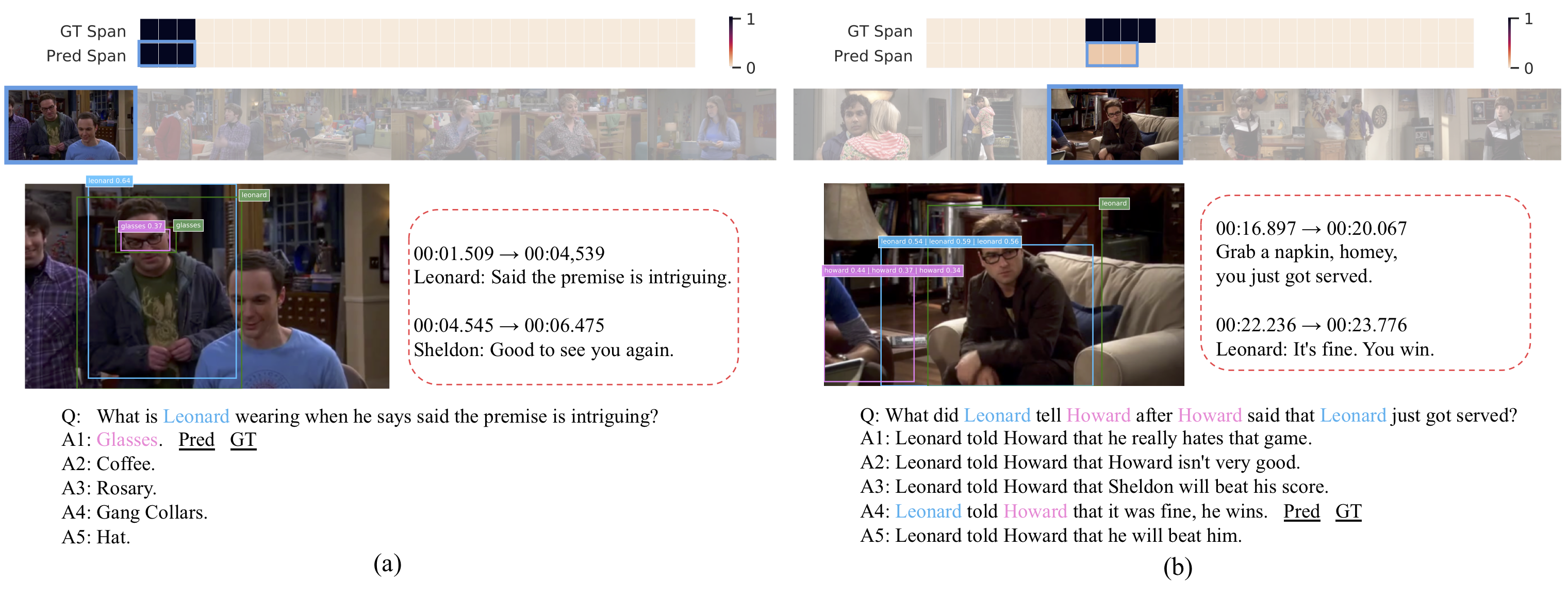

We present the task of Spatio-Temporal Video Question Answering, which requires intelligent systems to simultaneously retrieve relevant moments and detect referenced visual concepts (people and objects) to answer natural language questions about videos. We first augment the TVQA dataset with 310.8k bounding boxes, linking depicted objects to visual concepts in questions and answers. We name this augmented version as TVQA+. We then propose Spatio-Temporal Answerer with Grounded Evidence (STAGE), a unified framework that grounds evidence in both the spatial and temporal domains to answer questions about videos. Comprehensive experiments and analyses demonstrate the effectiveness of our framework and how the rich annotations in our TVQA+ dataset can contribute to the question answering task. As a side product, by performing this joint task, our model is able to produce more insightful intermediate results.

In this repository, we provide PyTorch Implementation of the STAGE model, along with basic preprocessing and evaluation code for TVQA+ dataset.

TVQA+: Spatio-Temporal Grounding for Video Question Answering

Jie Lei, Licheng Yu,

Tamara L. Berg, Mohit Bansal.

[PDF]

- Data: TVQA+ dataset, please use this new link

- Website: https://tvqa.cs.unc.edu

- Submission: codalab evaluation server

- Related works: TVR (Moment Retrieval), TVC (Video Captioning), TVQA (Localized VideoQA)

-

STAGE Overview. Spatio-Temporal Answerer with Grounded Evidence (STAGE), a unified framework that grounds evidence in both the spatial and temporal domains to answer questions about videos.

- Python 2.7

- PyTorch 1.1.0 (should work for 0.4.0 - 1.2.0)

- tensorboardX

- tqdm

- h5py

- numpy

1, Download and uncompress preprocessed features from Google Drive.

& uncompress the file into project root directory, you should get a dir `tvqa_plus_stage_features`

containing all the required feature files.

cd $PROJECT_ROOT; tar -xf tvqa_plus_stage_features_new.tar.gz

gdrive is a good tool to use for downloading the file. The features are changed, you have to re-download the features if you have our previous version

2, Run in debug mode to test your environment, path settings:

bash run_main.sh debug

3, Train the full STAGE model:

bash run_main.sh --add_local

note you will need around 30 GB of memory to load the data. Otherwise, you can additionally add --no_core_driver flag to stop loading

all the features into memory. After training, you should be able to get ~72.00% QA Acc, which is comparable to the reported number.

The trained model and config file are stored at ${$PROJECT_ROOT}/results/${MODEL_DIR}

4, Inference

bash run_inference.sh --model_dir ${MODEL_DIR} --mode ${MODE}

${MODE} could be valid or test. After inference, you will get a ${MODE}_inference_predictions.json

file in ${MODEL_DIR}, which is similar to the sample prediction file here eval/data/val_sample_prediction.json.

5, Evaluation

cd eval; python eval_tvqa_plus.py --pred_path ../results/${MODEL_DIR}/valid_inference_predictions.json --gt_path data/tvqa_plus_val.json

Note you can only evaluate val prediction here. To evaluate test set, please follow instructions here.

@inproceedings{lei2019tvqa,

title={TVQA+: Spatio-Temporal Grounding for Video Question Answering},

author={Lei, Jie and Yu, Licheng and Berg, Tamara L and Bansal, Mohit},

booktitle={Tech Report, arXiv},

year={2019}

}

- Add data preprocessing scripts (provided preprocessed features)

- Add model and training scripts

- Add inference and evaluation scripts

- Dataset: faq-tvqa-unc [at] googlegroups.com

- Model: Jie Lei, jielei [at] cs.unc.edu