[Project page] [paper]

An official implementation of "Focal Inverse Distance Transform Maps for Crowd Localization" (accepted by IEEE TMM).

We propose a novel label, the Focal Inverse Distance Transform (FIDT) map, which encodes the location of every head.

We also provide the predicted coordinates as txt files so that other researchers can use them to evaluate localization performance fairly.

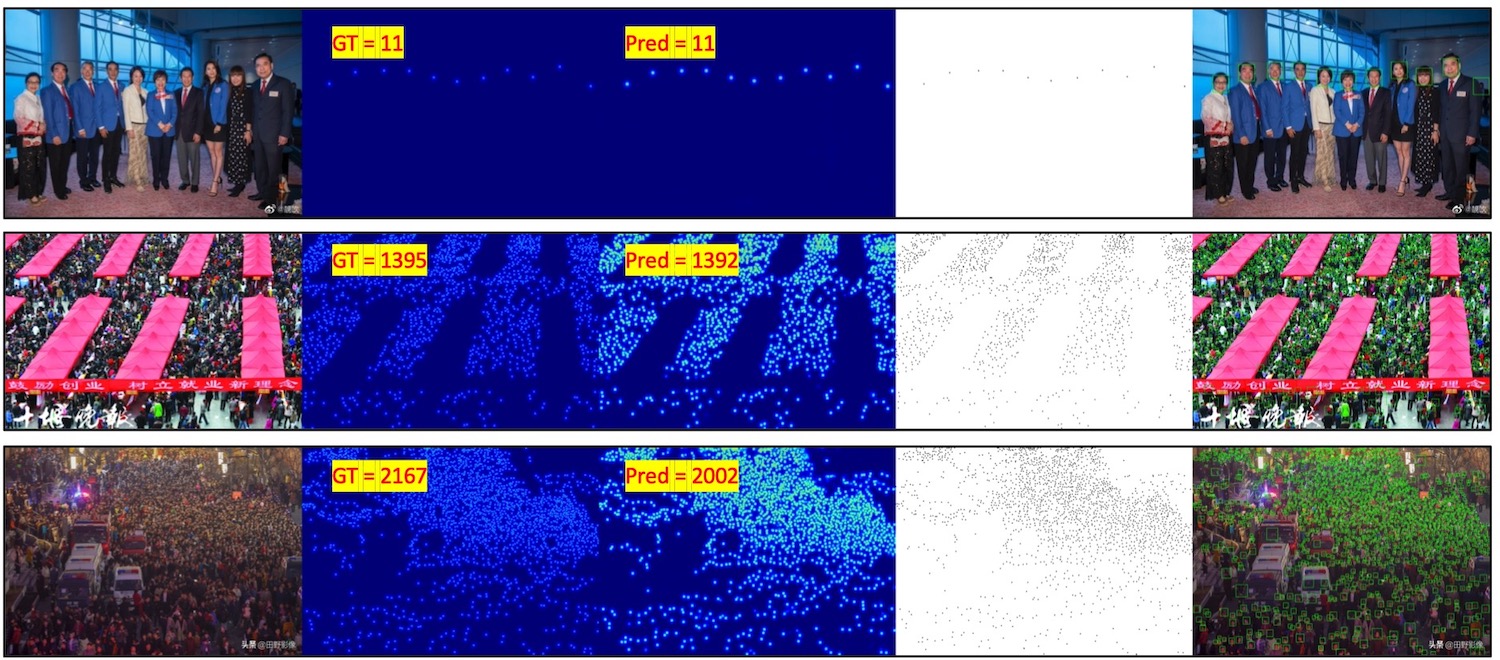

Visualizations for bounding boxes

- Testing code (2021.3.16)
- Training baseline code (2021.4.29)
- Pretrained models
  - ShanghaiA (2021.3.16)
  - ShanghaiB (2021.3.16)
  - UCF_QNRF (2021.4.29)
  - JHU-Crowd++ (2021.4.29)
  - NWPU-Crowd (2021.4.29)
- Bounding box visualizations (2021.3.24)
- Video demo (2021.3.29)
- Predicted coordinates txt files (2021.8.20)

Environment:

- python >= 3.6
- pytorch >= 1.4
- opencv-python >= 4.0
- scipy >= 1.4.0
- h5py >= 2.10
- pillow >= 7.0.0
- imageio >= 1.18
- nni >= 2.0 (python3 -m pip install --upgrade nni)

- Download the ShanghaiTech dataset from Baidu-Disk (password: cjnx) or Google-Drive
- Download the UCF-QNRF dataset from here
- Download the JHU-CROWD++ dataset from here
- Download the NWPU-CROWD dataset from Baidu-Disk (password: 3awa) or Google-Drive

Generate the FIDT map ground truth:

cd data

python fidt_generate_xx.py

Here “xx” is the dataset name: sh, jhu, qnrf, or nwpu. Before running the script, change the dataset path in it to point to your local copy.
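For readers who want to see what the generated ground truth contains, here is a minimal NumPy/SciPy sketch of an FIDT map. It assumes the formula I = 1 / (P^(αP+β) + C), where P is the Euclidean distance from each pixel to its nearest head, with α = 0.02, β = 0.75 and C = 1. These constants are our reading of the paper, so check fidt_generate_xx.py for the values it actually uses; the function name fidt_map is hypothetical, and SciPy's distance_transform_edt stands in for whatever distance transform the scripts call.

```python
import numpy as np
from scipy.ndimage import distance_transform_edt


def fidt_map(points, height, width, alpha=0.02, beta=0.75, c=1.0):
    """Build an FIDT map from (x, y) head coordinates (assumed formula)."""
    # distance_transform_edt measures the distance to the nearest zero pixel,
    # so heads are marked with 0 and every other pixel with 1.
    mask = np.ones((height, width), dtype=np.uint8)
    for x, y in points:
        col, row = int(x), int(y)
        if 0 <= row < height and 0 <= col < width:
            mask[row, col] = 0
    if mask.all():
        # No heads inside the image: return an all-zero map, which is the
        # limit of the formula far away from any head.
        return np.zeros((height, width), dtype=np.float32)
    dist = distance_transform_edt(mask)
    # I = 1 / (P^(alpha * P + beta) + C): equals 1/C at a head, decays with P.
    fidt = 1.0 / (np.power(dist, alpha * dist + beta) + c)
    return fidt.astype(np.float32)
```

Unlike a density map, every head becomes a peak of the same height (1/C), no matter how crowded its neighbourhood is, which is what makes the map convenient for localization.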

Download the pretrained models from Baidu-Disk (password: gqqm) or OneDrive.

Quick test:

- git clone https://github.com/dk-liang/FIDTM.git
- Download the dataset and the pretrained model
- Generate the FIDT map ground truth
- Generate the image file list: python make_npydata.py
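The image file list is a small index of image paths that the test and training scripts read. A minimal sketch, assuming the list is simply a sorted array of image paths saved with np.save; the helper make_image_list, the *.jpg pattern and the output path are hypothetical, and make_npydata.py may organize its lists differently.

```python
import glob
import os

import numpy as np


def make_image_list(image_dir, out_path, pattern="*.jpg"):
    """Save a sorted array of image paths to a .npy file (hypothetical helper)."""
    paths = sorted(glob.glob(os.path.join(image_dir, pattern)))
    out_dir = os.path.dirname(out_path)
    if out_dir:
        os.makedirs(out_dir, exist_ok=True)
    # np.save adds the ".npy" suffix if out_path does not already end with it.
    np.save(out_path, np.array(paths))
    return paths
```

A list saved this way can be read back with np.load(out_path).tolist().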

Test example:

python test.py --dataset ShanghaiA --pre ./model/ShanghaiA/model_best.pth --gpu_id 0

python test.py --dataset ShanghaiB --pre ./model/ShanghaiB/model_best.pth --gpu_id 1

python test.py --dataset UCF_QNRF --pre ./model/UCF_QNRF/model_best.pth --gpu_id 2

python test.py --dataset JHU --pre ./model/JHU/model_best.pth --gpu_id 3
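Testing turns each predicted FIDT map into head coordinates. A minimal sketch of the local-maximum idea, assuming a 3x3 neighbourhood, a relative threshold of 100/255 of the map's maximum, and an empty result when that maximum falls below 0.1. These values are assumptions to verify against test.py, and local_maxima_points is a hypothetical name.

```python
import numpy as np
from scipy.ndimage import maximum_filter


def local_maxima_points(fidt_pred, rel_threshold=100.0 / 255.0, min_peak=0.1):
    """Return (x, y) integer coordinates of local maxima in a predicted FIDT map.

    Assumed rule: a pixel is kept if it equals the maximum of its 3x3
    neighbourhood and is at least rel_threshold * (global maximum).
    """
    fidt_pred = np.asarray(fidt_pred, dtype=float)
    if fidt_pred.size == 0 or fidt_pred.max() < min_peak:
        # Treat a weak or empty map as "no heads".
        return np.empty((0, 2), dtype=int)
    peak = fidt_pred.max()
    # Pad with -inf so border pixels are compared only with real neighbours.
    local_max = maximum_filter(fidt_pred, size=3, mode="constant", cval=-np.inf)
    keep = (fidt_pred == local_max) & (fidt_pred >= rel_threshold * peak)
    rows, cols = np.nonzero(keep)
    return np.stack([cols, rows], axis=1)
```

The predicted count is then just the number of returned points. Note that this simple version keeps both pixels of a flat two-pixel plateau.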

To generate bounding boxes:

python test.py --test_dataset ShanghaiA --pre model_best.pth --visual True

(Remember to change the dataset path in test.py.)
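Since the model predicts points rather than boxes, box sizes have to be inferred. One common approach, shown here as a sketch and not necessarily the rule test.py uses, sizes each box by the mean distance to its k nearest neighbouring heads using SciPy's cKDTree; k, scale and default_size are hypothetical parameters.

```python
import numpy as np
from scipy.spatial import cKDTree


def knn_boxes(points, k=3, scale=0.5, default_size=8.0):
    """Square boxes (x1, y1, x2, y2) sized by nearest-neighbour distances."""
    pts = np.asarray(points, dtype=float).reshape(-1, 2)
    n = len(pts)
    if n == 0:
        return np.empty((0, 4))
    if n == 1:
        # No neighbours to measure against: fall back to a fixed size.
        sizes = np.array([default_size])
    else:
        kk = min(k, n - 1)
        dists, _ = cKDTree(pts).query(pts, k=kk + 1)
        # Column 0 is each point's distance to itself (0), so skip it.
        sizes = scale * dists[:, 1:].mean(axis=1)
    half = sizes / 2.0
    return np.stack([pts[:, 0] - half, pts[:, 1] - half,
                     pts[:, 0] + half, pts[:, 1] + half], axis=1)
```

In dense regions heads are close together and usually small in the image, so neighbour distance is a rough proxy for head size.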

To test on a video:

python video_demo.py --pre model_best.pth --video_path demo.mp4

(The output video is saved to ./demo.avi. By default, each frame is downscaled by a factor of two for inference; you can change the input size in video_demo.py.)

Visit bilibili or YouTube to watch the video demonstration. The original demo video can be downloaded from Baidu-Disk (password: cebh).

More configuration options are listed in config.py.

Localization performance:

| ShanghaiTech Part A | Precision | Recall | F1-measure |
|---|---|---|---|
| σ=4 | 59.1% | 58.2% | 58.6% |
| σ=8 | 78.1% | 77.0% | 77.6% |

| ShanghaiTech Part B | Precision | Recall | F1-measure |
|---|---|---|---|
| σ=4 | 64.9% | 64.5% | 64.7% |
| σ=8 | 83.9% | 83.2% | 83.5% |

| JHU-Crowd++ (test set) | Precision | Recall | F1-measure |
|---|---|---|---|
| σ=4 | 38.9% | 38.7% | 38.8% |
| σ=8 | 62.5% | 62.4% | 62.4% |

| UCF-QNRF | Av. Precision | Av. Recall | Av. F1-measure |
|---|---|---|---|
| σ=1...100 | 84.49% | 80.10% | 82.23% |

| NWPU-Crowd (val set) | Precision | Recall | F1-measure |
|---|---|---|---|
| σ=σ_l | 82.2% | 75.9% | 78.9% |
| σ=σ_s | 76.7% | 70.9% | 73.7% |
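The σ values in these tables are distance thresholds: a prediction counts as a true positive if it can be matched one-to-one to a ground-truth head within σ pixels. Below is a minimal per-image sketch, assuming one-to-one matching with SciPy's Hungarian solver (linear_sum_assignment); the evaluation code in ./local_eval may differ, for instance by summing true positives over all images before computing precision and recall. average_prf mimics the σ=1...100 averaging reported for UCF-QNRF; all function names are hypothetical.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def count_true_positives(pred, gt, sigma):
    """Number of one-to-one pred/GT pairs no more than sigma pixels apart."""
    if len(pred) == 0 or len(gt) == 0:
        return 0
    dist = np.linalg.norm(pred[:, None, :] - gt[None, :, :], axis=-1)
    valid = dist <= sigma
    # Invalid pairs cost more than all valid distances combined, so the solver
    # maximises the number of valid pairs before minimising their distance.
    penalty = dist[valid].sum() + 1.0
    rows, cols = linear_sum_assignment(np.where(valid, dist, penalty))
    return int(valid[rows, cols].sum())


def precision_recall_f1(pred, gt, sigma):
    """Precision, recall and F1 for (x, y) point lists at one threshold."""
    pred = np.asarray(pred, dtype=float).reshape(-1, 2)
    gt = np.asarray(gt, dtype=float).reshape(-1, 2)
    tp = count_true_positives(pred, gt, sigma)
    precision = tp / len(pred) if len(pred) else 0.0
    recall = tp / len(gt) if len(gt) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


def average_prf(pred, gt, sigmas=range(1, 101)):
    """Mean precision, recall and F1 over a range of thresholds."""
    scores = np.array([precision_recall_f1(pred, gt, s) for s in sigmas])
    return tuple(float(v) for v in scores.mean(axis=0))
```

For example, predictions at (10, 10), (50, 50) and (100, 100) against heads at (12, 10), (52, 53) and (200, 200) give two true positives at σ=4, so precision, recall and F1 are all 2/3.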

Evaluation example:

For ShanghaiTech, JHU-Crowd++ (test set), and NWPU-Crowd (val set):

cd ./local_eval

python eval.py ShanghaiA

python eval.py ShanghaiB

python eval.py JHU

python eval.py NWPU

For UCF-QNRF dataset:

python eval_qnrf.py --data_path path/to/UCF-QNRF_ECCV18

For NWPU-Crowd (test set), please submit nwpu_pred_fidt.txt to the NWPU-Crowd benchmark website.

We also provide the predicted coordinates txt files in './local_eval/point_files/', which you can use to fairly evaluate other localization metrics.

(We hope the community will also release predicted coordinate files to help other researchers evaluate localization performance fairly.)

Tips:

The GT format is:

1 total_count x1 y1 4 8 x2 y2 4 8 .....

2 total_count x1 y1 4 8 x2 y2 4 8 .....

The predicted format is:

1 total_count x1 y1 x2 y2.....

2 total_count x1 y1 x2 y2.....
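A minimal parser and writer for these two line formats. It assumes whitespace-separated fields, and that each GT point carries two extra numbers after its coordinates (the 4 and 8 above, which we read as the two distance thresholds σ=4 and σ=8). The function names are hypothetical.

```python
def parse_gt_line(line):
    """Parse 'img_id count x1 y1 a1 b1 x2 y2 a2 b2 ...' into 4-tuples per point."""
    fields = line.split()
    img_id, count = int(fields[0]), int(fields[1])
    values = [float(v) for v in fields[2:2 + 4 * count]]
    points = [tuple(values[i:i + 4]) for i in range(0, len(values), 4)]
    return img_id, count, points


def parse_pred_line(line):
    """Parse 'img_id count x1 y1 x2 y2 ...' into (x, y) tuples."""
    fields = line.split()
    img_id, count = int(fields[0]), int(fields[1])
    values = [float(v) for v in fields[2:2 + 2 * count]]
    points = [(values[i], values[i + 1]) for i in range(0, len(values), 2)]
    return img_id, count, points


def format_pred_line(img_id, points):
    """Write (x, y) points back out in the predicted format."""
    coords = " ".join(f"{x:g} {y:g}" for x, y in points)
    return f"{img_id} {len(points)} {coords}".rstrip()
```

For example, format_pred_line(3, [(5, 6), (7.5, 8)]) produces the line "3 2 5 6 7.5 8".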

The evaluation code is adapted from the NWPU-Crowd evaluation code.

The training strategy is very simple: in any density-map regressor, you can replace the density map with the FIDT map as the regression target.

If you want to train with HRNet (borrowed from the IIM code), first download the ImageNet-pretrained models from the official link, then set the pretrained model path in HRNET/congfig.py (__C.PRE_HR_WEIGHTS).

Here, we provide the training baseline code:

Training baseline example:

python train_baseline.py --dataset ShanghaiA --crop_size 256 --save_path ./save_file/ShanghaiA

python train_baseline.py --dataset ShanghaiB --crop_size 256 --save_path ./save_file/ShanghaiB

python train_baseline.py --dataset UCF_QNRF --crop_size 512 --save_path ./save_file/QNRF

python train_baseline.py --dataset JHU --crop_size 512 --save_path ./save_file/JHU
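The --crop_size flag sets the training patch size. A minimal sketch of the two pieces that the target swap touches, assuming the image and its FIDT map are cropped with the same random window and compared with a summed squared-error loss, as is common for density-map regressors. random_crop_pair and mse_sum are hypothetical names, and train_baseline.py may crop, augment and weight the loss differently.

```python
import numpy as np


def random_crop_pair(image, target, crop_size, rng):
    """Crop the same window from an HxWxC image and its HxW target map."""
    h, w = target.shape[:2]
    if image.shape[:2] != (h, w):
        raise ValueError("image and target must share height and width")
    # Images smaller than crop_size are kept at their full size.
    ch, cw = min(crop_size, h), min(crop_size, w)
    top = int(rng.integers(0, h - ch + 1))
    left = int(rng.integers(0, w - cw + 1))
    return (image[top:top + ch, left:left + cw],
            target[top:top + ch, left:left + cw])


def mse_sum(pred_map, target_map):
    """Summed squared error between a predicted map and its target map."""
    diff = np.asarray(pred_map, dtype=float) - np.asarray(target_map, dtype=float)
    return float(np.sum(diff ** 2))
```

Because the FIDT map is a per-pixel target just like a density map, the same crop and loss code works for either; only the map passed as target changes.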

For ShanghaiTech, you can train on a single GPU with 8 GB of memory. For the other datasets, use a single GPU with 24 GB of memory or multiple GPUs.

Improvements: We have not studied the effect of several hyper-parameters, so the results may be improved further with tricks such as tuning the learning rate, batch size, crop size, and data augmentation.

If you find this project useful for your research, please cite:

@article{liang2022focal,

title={Focal inverse distance transform maps for crowd localization},

author={Liang, Dingkang and Xu, Wei and Zhu, Yingying and Zhou, Yu},

journal={IEEE Transactions on Multimedia},

year={2022},

publisher={IEEE}

}