TaskingAI is an AI tool with integrated OpenAI models, intended to let users in China use it easily without the hassle of a VPN.

TaskingAI has OPENAI_BASE_URL built in, so you only need to use the following address:

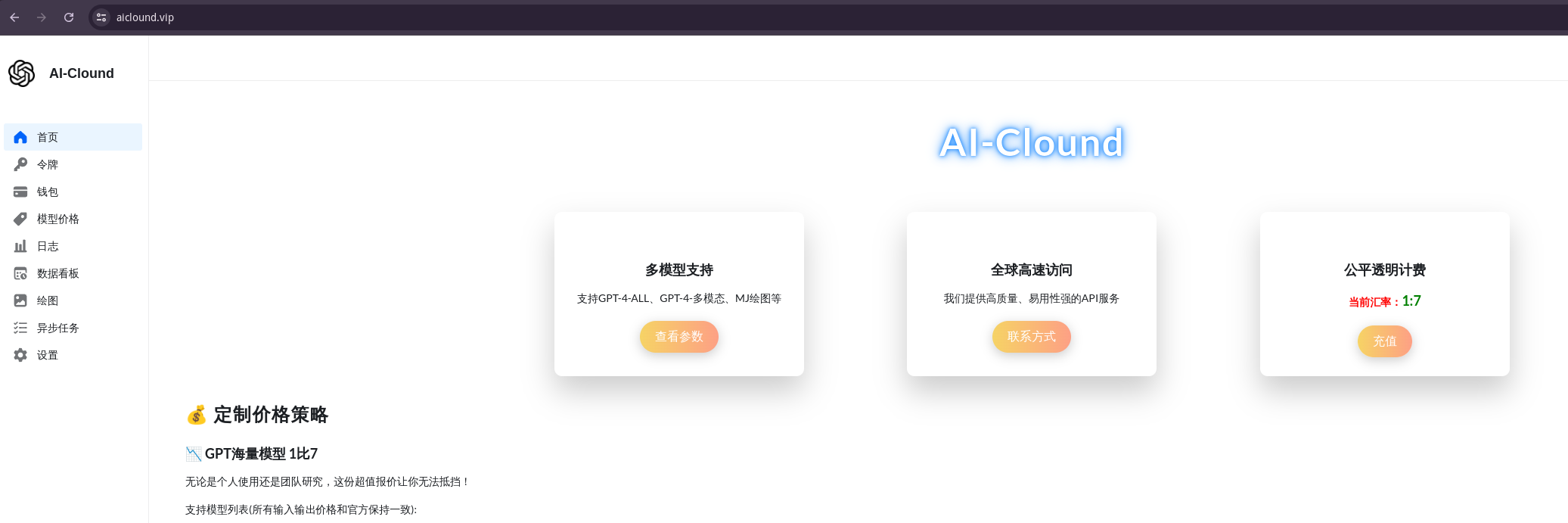

https://aiclound.vip

Then go to https://aiclound.vip to obtain an API key and enter it to start using the service. Pricing is affordable, with discounts for larger volumes!

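- Clone the repository:

      git clone https://github.com/cppcloud/TaskingAI.git

- Enter the docker directory:

      cd TaskingAI/docker

- Copy the environment variable configuration file:

      cp .env.example .env

- Start the services:

      docker-compose -p taskingai --env-file .env up -d

Once these steps are complete, you can start using TaskingAI!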

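- Make sure Docker and Docker Compose are installed on your system.

- Make sure your network connection is stable so the required images can be pulled.

- Enter the API key obtained from aiclound.vip in the .env file.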
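Before starting the containers, it can help to confirm that the key was actually saved in .env. The following is a minimal sketch and not part of TaskingAI: it parses simple KEY=VALUE lines and reports which of the requested keys are missing or empty. The variable name `OPENAI_API_KEY` is an assumption used for illustration; check `.env.example` for the name the project actually expects.

```python
from pathlib import Path


def parse_env(text: str) -> dict:
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def missing_keys(text: str, required: list) -> list:
    """Return the required keys that are absent or have an empty value."""
    values = parse_env(text)
    return [key for key in required if not values.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    # OPENAI_API_KEY is a hypothetical name; use the one from .env.example.
    required = ["OPENAI_API_KEY"]
    if not env_path.exists():
        print("No .env file found; run `cp .env.example .env` first.")
    else:
        problems = missing_keys(env_path.read_text(encoding="utf-8"), required)
        print("Missing or empty:", problems if problems else "none")
```

Run it from the TaskingAI/docker directory after copying and editing .env. It only handles plain KEY=VALUE lines, which is usually enough for a quick sanity check.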

TaskingAI is a BaaS (Backend as a Service) platform for LLM-based Agent Development and Deployment. It unifies the integration of hundreds of LLM models, and provides an intuitive user interface for managing your LLM application's functional modules, including tools, RAG systems, assistants, conversation history, and more.

- All-In-One LLM Platform: Access hundreds of AI models with unified APIs.

- Abundant Enhancement: Enhance LLM agent performance with hundreds of customizable built-in tools and an advanced Retrieval-Augmented Generation (RAG) system.

- BaaS-Inspired Workflow: Separate AI logic (server-side) from product development (client-side), offering a clear pathway from console-based prototyping to scalable solutions using RESTful APIs and client SDKs.

- One-Click to Production: Deploy your AI agents to production with a single click, and scale them with ease. Let TaskingAI handle the rest.

- Asynchronous Efficiency: Harness Python FastAPI's asynchronous features for high-performance, concurrent computation, enhancing the responsiveness and scalability of the applications.

- Intuitive UI Console: Simplifies project management and allows in-console workflow testing.

Models: TaskingAI connects with hundreds of LLMs from various providers, including OpenAI, Anthropic, and more. Users can also integrate locally hosted models through Ollama, LM Studio and Local AI.

Plugins: TaskingAI supports a wide range of built-in plugins to empower your AI agents, including Google search, website reader, stock market retrieval, and more. Users can also create custom tools to meet their specific needs.

LangChain is a tool framework for LLM application development, but it faces practical limitations:

- Statelessness: Relies on client-side or external services for data management.

- Scalability Challenges: Statelessness impacts consistent data handling across sessions.

- External Dependencies: Depends on outside resources like model SDKs and vector storage.

OpenAI's Assistant API excels in delivering GPTs-like functionalities but comes with its own constraints:

- Tied Functionalities: Integrations like tools and retrievals are tied to each assistant, not suitable for multi-tenant applications.

- Proprietary Limitations: Restricted to OpenAI models, unsuitable for diverse needs.

- Customization Limits: Users cannot customize agent configuration such as memory and retrieval system.

- Supports both stateful and stateless usage: Whether you need to track and manage message histories and agent conversation sessions, or just make stateless chat completion requests, TaskingAI covers both.

- Decoupled modular management: The management of tools, RAG systems, and language models is decoupled from the agent, so these modules can be freely combined to build a powerful AI agent.

- Multi-tenant support: TaskingAI supports fast deployment after development, and can be used in multi-tenant scenarios. No need to worry about the cloud services, just focus on the AI agent development.

- Unified API: TaskingAI provides unified APIs for all the modules, including tools, RAG systems, language models, and more. Super easy to manage and change the AI agent's configurations.
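The stateful and stateless styles mentioned in the list above can be illustrated with a toy sketch. It is not TaskingAI's implementation and makes no network calls: `fake_model` is a hypothetical stand-in for a chat completion model, and `ChatSession` is a hypothetical in-memory session. In the stateless style the caller sends the full history on every request; in the stateful style the session stores it.

```python
from dataclasses import dataclass, field
from typing import Dict, List

Message = Dict[str, str]


def fake_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a model: reports how many messages it saw."""
    return f"seen {len(messages)} message(s)"


def stateless_completion(messages: List[Message]) -> str:
    """Stateless style: the caller supplies the whole history each time."""
    return fake_model(messages)


@dataclass
class ChatSession:
    """Stateful style: the session keeps the history between calls."""
    history: List[Message] = field(default_factory=list)

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = fake_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


# Stateless: nothing is remembered unless the caller resends it.
first = stateless_completion([{"role": "user", "content": "Hello!"}])
second = stateless_completion([{"role": "user", "content": "Still there?"}])

# Stateful: the second call sees the earlier exchange as context.
session = ChatSession()
session_first = session.send("Hello!")
session_second = session.send("Still there?")
```

The trade-off is where the history lives: stateless calls keep the server simple but push bookkeeping to the client, while a stateful session centralizes it.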

- Interactive Application Demos

- AI Agents for Enterprise Productivity

- Multi-Tenant AI-Native Applications for Business

Please give us a FREE STAR 🌟 if you find it helpful 😇

Click the image above to view the TaskingAI Console Demo Video.

Once the console is up, you can programmatically interact with the TaskingAI server using the TaskingAI client SDK.

Ensure you have Python 3.8 or above installed, and set up a virtual environment (optional but recommended). Install the TaskingAI Python client SDK using pip.

    pip install taskingai

Here is a client code example:

    import taskingai

    taskingai.init(api_key='YOUR_API_KEY', host='https://localhost:8080')

    # Create a new assistant
    assistant = taskingai.assistant.create_assistant(
        model_id="YOUR_MODEL_ID",
        memory="naive",
    )

    # Create a new chat
    chat = taskingai.assistant.create_chat(
        assistant_id=assistant.assistant_id,
    )

    # Send a user message
    taskingai.assistant.create_message(
        assistant_id=assistant.assistant_id,
        chat_id=chat.chat_id,
        text="Hello!",
    )

    # Generate the assistant response
    assistant_message = taskingai.assistant.generate_message(
        assistant_id=assistant.assistant_id,
        chat_id=chat.chat_id,
    )

    print(assistant_message)

Note that YOUR_API_KEY and YOUR_MODEL_ID should be replaced with the actual API key and chat completion model ID you created in the console.
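To avoid committing credentials, the placeholders can be read from environment variables instead of being hard-coded. This is a minimal sketch: the variable names `TASKINGAI_API_KEY` and `TASKINGAI_HOST` and the helper `load_client_settings` are assumptions chosen for illustration, and the default host simply repeats the one used in the client example.

```python
import os
from typing import Mapping, Optional


def load_client_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Build keyword arguments for taskingai.init() from environment variables.

    TASKINGAI_API_KEY and TASKINGAI_HOST are hypothetical names for this sketch.
    """
    env = os.environ if env is None else env
    api_key = env.get("TASKINGAI_API_KEY", "").strip()
    if not api_key:
        raise ValueError("TASKINGAI_API_KEY is not set")
    # Default repeats the host used in the client example.
    host = env.get("TASKINGAI_HOST", "https://localhost:8080").rstrip("/")
    return {"api_key": api_key, "host": host}


# Usage (requires the taskingai package and a running server):
#   import taskingai
#   taskingai.init(**load_client_settings())
```

Passing a plain dictionary as `env` makes the helper easy to exercise without touching the real environment.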

You can learn more in the documentation.

Please see our contribution guidelines for how to contribute to the project.

TaskingAI is released under a specific TaskingAI Open Source License. By contributing to this project, you agree to abide by its terms.

For support, please refer to our documentation or contact us at [email protected].