The HIS-ELK project is designed to automatically monitor Hospital Information System (HIS) services and server statuses. By integrating the ELK (Elasticsearch, Logstash, Kibana) stack, this system streamlines data retrieval and visualization, facilitating efficient monitoring and analysis of HIS performance and issues.

- ELK Introduction

- HIS-ELK System Structure

- Features

- Install ELK Master

- Deploy ELK Client to VM

- Install Java Agent

- Configuration

- Usage

- Contributing

- License

The ELK stack, consisting of Elasticsearch, Logstash, and Kibana, is a powerful solution for real-time data analysis and visualization.

- Elasticsearch: A distributed search and analytics engine for storing and indexing data.

- Logstash: A data processing pipeline that ingests, transforms, and sends data to Elasticsearch.

- Kibana: A visualization tool for exploring and interacting with data stored in Elasticsearch.

Together, they make it easier to analyze logs and events, monitor applications, and troubleshoot issues. The stack scales out by adding Elasticsearch nodes, and it is widely used for large volumes of log and metric data.

This is a short introductory presentation (PPT).

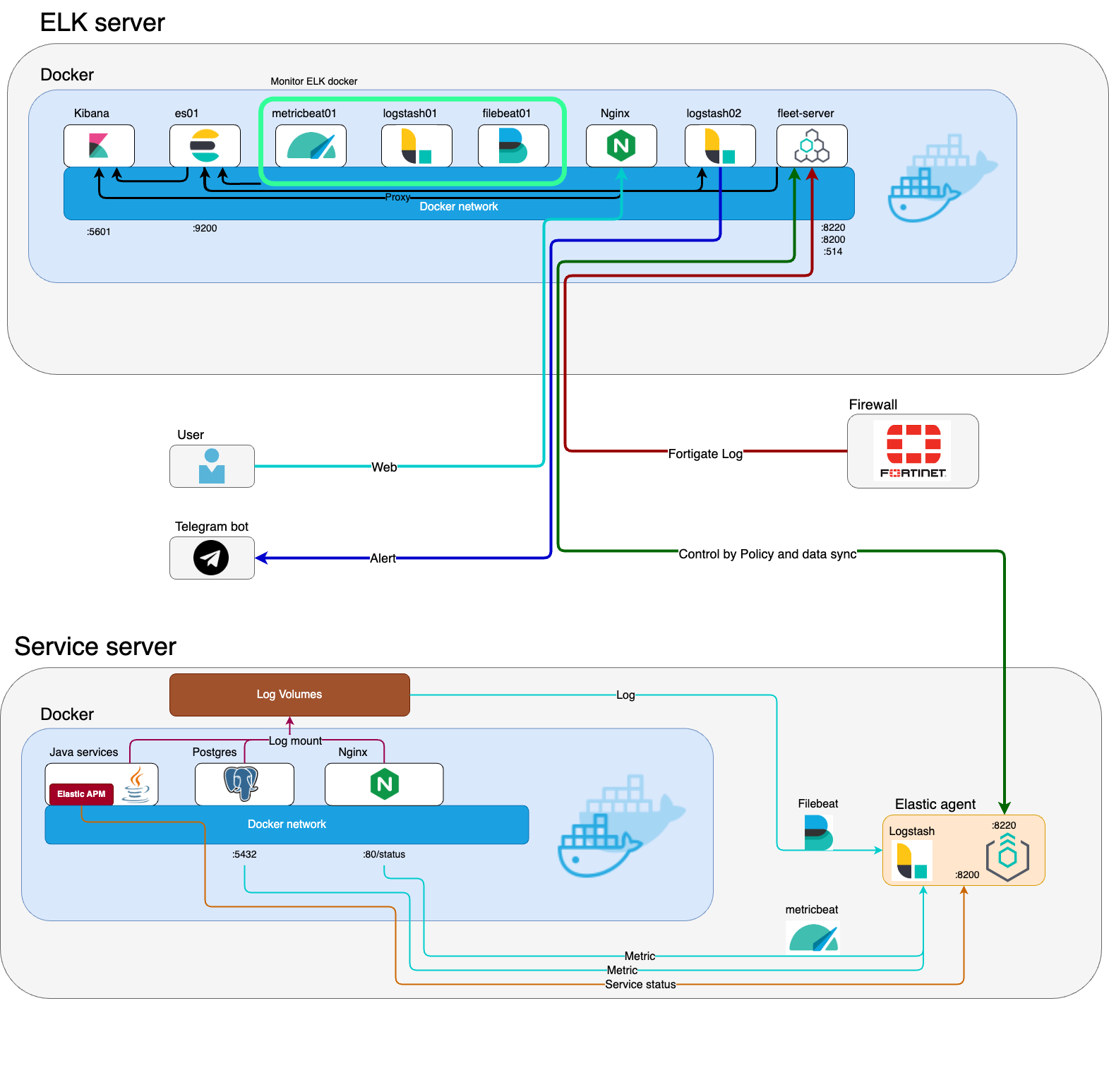

The diagram above illustrates the system architecture of the HIS-ELK integration, showcasing how data flows from the Hospital Information System through Logstash, is indexed in Elasticsearch, and finally visualized in Kibana.
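The sketch below makes the Elasticsearch step of this flow more concrete. It builds a request body for Elasticsearch's `_bulk` API, which takes newline-delimited JSON (NDJSON): one action line followed by one document line. The index name `his-logs` and the event fields are hypothetical. In this project, data actually arrives through the pipeline shown in the diagram, so the sketch only illustrates the format.

```bash
#!/usr/bin/env bash
# Sketch: build a request body for the Elasticsearch _bulk API.
# Assumption: "his-logs" and the event fields are hypothetical examples.

# bulk_line INDEX DOC_JSON
# Prints one "index" action line followed by the document line (NDJSON).
bulk_line() {
  local index="$1" doc="$2"
  printf '{"index":{"_index":"%s"}}\n%s\n' "$index" "$doc"
}

# sample_bulk_body
# Emits two hypothetical HIS service events. Every line, including the last,
# ends with a newline, as the _bulk API requires.
sample_bulk_body() {
  bulk_line his-logs '{"service":"registration","status":"up"}'
  bulk_line his-logs '{"service":"pharmacy","status":"down"}'
}
```

This example rests on two assumptions: the CA certificate has been copied to `/tmp/ca.crt` as in the installation steps, and the `elastic` password is stored in `$ELASTIC_PASSWORD`. Under those assumptions, the body could be sent with `sample_bulk_body | curl -s --cacert /tmp/ca.crt -u "elastic:$ELASTIC_PASSWORD" -H 'Content-Type: application/x-ndjson' --data-binary @- https://localhost:9200/_bulk`. Use `--data-binary` rather than `-d`, because `-d @-` strips the newlines that NDJSON depends on.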

- Automated Data Requests: Periodically requests data from the ELK stack.

- Data Processing: Cleans and processes the data for analysis.

- Visualization: Generates visual reports and dashboards using Kibana.

- Scheduling: Automates the report generation process on a weekly basis.
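The automated request and weekly scheduling features might look roughly like the sketch below. This is an assumption about the approach, not the project's actual script. `weekly_query` builds a search body that uses Elasticsearch date math to cover the previous seven full days. `weekly_cron_line` prints a crontab entry that runs a hypothetical `/opt/his-elk/weekly_report.sh` every Monday at 07:00.

```bash
#!/usr/bin/env bash
# Sketch of the weekly data request; the script path below is hypothetical.

# weekly_query [FIELD]
# Prints a search body matching documents from the last 7 full days
# (today excluded). "now-7d/d" and "now/d" are Elasticsearch date-math
# expressions rounded down to the start of the day.
weekly_query() {
  local field="${1:-@timestamp}"
  printf '{"query":{"range":{"%s":{"gte":"now-7d/d","lt":"now/d"}}}}\n' "$field"
}

# weekly_cron_line [SCRIPT]
# Prints a crontab entry that runs SCRIPT at 07:00 every Monday
# (fields: minute hour day-of-month month day-of-week).
weekly_cron_line() {
  local script="${1:-/opt/his-elk/weekly_report.sh}"
  printf '0 7 * * 1 %s\n' "$script"
}
```

The query could be sent with `curl -s --cacert /tmp/ca.crt -u "elastic:$ELASTIC_PASSWORD" -H 'Content-Type: application/json' 'https://localhost:9200/metrics-*/_search' -d "$(weekly_query)"`, where `$ELASTIC_PASSWORD` is assumed to hold the `elastic` user's password. The cron entry could be installed with `(crontab -l 2>/dev/null; weekly_cron_line) | crontab -`.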

- Prerequisites: Docker and Docker Compose installed

- Clone the repository:

```bash
git clone https://github.com/aston668334/HIS-ELK.git
```

This project is based on this Elastic blog post.

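```bash
cd HIS-ELK
docker-compose up --build -d
```

Copy the CA certificate out of the Elasticsearch container. Then print its SHA-256 fingerprint with the colons removed, followed by the certificate itself:

```bash
docker cp es-cluster-es01-1:/usr/share/elasticsearch/config/certs/ca/ca.crt /tmp/.
openssl x509 -fingerprint -sha256 -noout -in /tmp/ca.crt | awk -F"=" '{print $2}' | sed 's/://g'
cat /tmp/ca.crt
```

- Access Kibana and navigate to Fleet settings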

- Add the Elasticsearch host and certificate fingerprint

- Save and deploy the settings
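The two values entered in Fleet can also be produced by small helper functions. The sketch below assumes the OpenSSL output format `sha256 Fingerprint=AB:CD:...`. It also assumes the certificate is pasted as a YAML block, with the PEM text indented under `ssl.certificate_authorities` and `- |`. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Helpers for the Fleet output settings; function names are hypothetical.

# fingerprint_from_openssl
# Reads a line such as "sha256 Fingerprint=AB:CD:..." on stdin and prints the
# hex digest without colons, the form used in the steps above.
fingerprint_from_openssl() {
  awk -F'=' '{print $2}' | tr -d ':'
}

# fleet_ssl_yaml CA_FILE
# Prints the ssl block with every line of the PEM certificate indented
# under "- |" so that YAML reads it as one multi-line string.
fleet_ssl_yaml() {
  local ca_file="$1"
  [ -r "$ca_file" ] || { echo "cannot read $ca_file" >&2; return 1; }
  printf 'ssl:\n  certificate_authorities:\n    - |\n'
  sed 's/^/      /' "$ca_file"
}
```

For example, `openssl x509 -fingerprint -sha256 -noout -in /tmp/ca.crt | fingerprint_from_openssl` prints the fingerprint, and `fleet_ssl_yaml /tmp/ca.crt` prints the block ready to paste.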

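When adding the certificate (the output of `cat /tmp/ca.crt`) in the Fleet output settings, it must start with the following block, with the certificate text indented beneath `- |`:

```yaml
ssl:
  certificate_authorities:
    - |
      <contents of /tmp/ca.crt>
```

Open your browser and go to http://localhost:9200.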

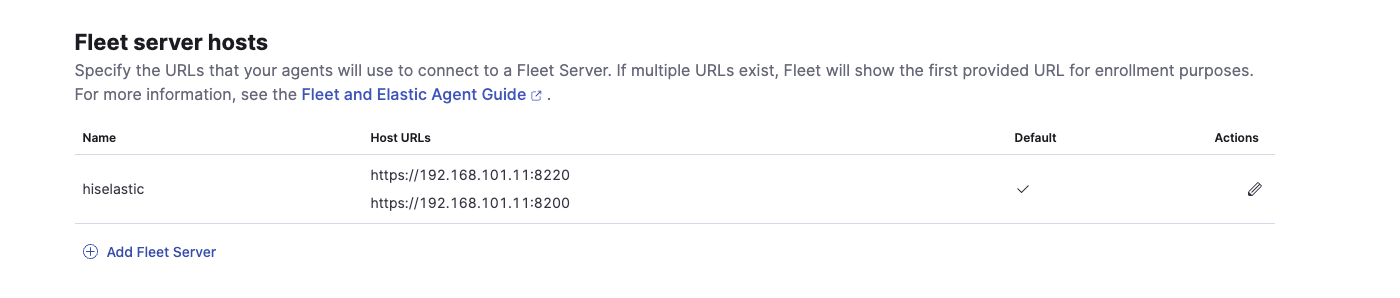

Click Action, set Name (any value you like), and add the URL (e.g. https://192.168.101.11:8220).

Ensure the VM can connect to the ELK master (192.168.101.11).
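Connectivity can be checked from the VM before enrolling. The sketch below uses bash's `/dev/tcp` redirection and the coreutils `timeout` command to test whether a TCP connection succeeds. It assumes both commands are available on the VM. The function name `can_reach` is hypothetical. Checking both 8220 (the Fleet Server URL above) and 9200 (Elasticsearch) is a reasonable assumption, because the agent talks to Fleet Server and ships data to Elasticsearch.

```bash
#!/usr/bin/env bash
# Check that a TCP port on the ELK master is reachable from the VM.
# Relies on bash's /dev/tcp redirection and the coreutils `timeout` command.

# can_reach HOST PORT [SECONDS]
# Returns 0 if a TCP connection succeeds, 1 if it fails or times out,
# and 2 if HOST or PORT is missing.
can_reach() {
  local host="$1" port="$2" secs="${3:-3}"
  if [ -z "$host" ] || [ -z "$port" ]; then
    echo "usage: can_reach HOST PORT [SECONDS]" >&2
    return 2
  fi
  # The child bash opens fd 3 to the target; a refused or timed-out
  # connection makes it exit non-zero. HOST is trusted input here.
  if timeout "$secs" bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null; then
    return 0
  fi
  return 1
}
```

Example: `for p in 8220 9200; do can_reach 192.168.101.11 "$p" && echo "$p OK" || echo "$p unreachable"; done`.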

- Add Agent Policies (optional): Follow the link to add agent policies.

- Enroll Agent:

- Select policies and choose [Enroll in Fleet (recommended)].

- Copy the command from this link and run it on your VM.

Add the `--insecure` flag to the command:

```bash
sudo ./elastic-agent install --url=https://192.168.101.11:8220 --enrollment-token=your_token --insecure
```

Set the username and password in Agent policies:

Navigate to your policy.

- Go to PostgreSQL -> Collect PostgreSQL metrics -> Settings.

- Set the username and password.
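The `--insecure` enrollment command from the steps above can be wrapped in a small function so it can be reviewed before it runs. This is a sketch under two assumptions: it is run from the extracted `elastic-agent` directory, as the original command is, and the `DRY_RUN` switch and `enroll_agent` name are hypothetical additions. The flags themselves are the ones used above.

```bash
#!/usr/bin/env bash
# Sketch: wrap the Elastic Agent enrollment command shown above.
# Run from the extracted elastic-agent directory. DRY_RUN is a hypothetical
# switch: when set to 1 the command is printed instead of executed.

# enroll_agent URL TOKEN
enroll_agent() {
  local url="$1" token="$2"
  if [ -z "$url" ] || [ -z "$token" ]; then
    echo "usage: enroll_agent URL TOKEN" >&2
    return 2
  fi
  # --insecure lets the agent connect to Fleet Server even when the
  # server's certificate chain cannot be verified.
  local -a cmd=(sudo ./elastic-agent install "--url=$url" "--enrollment-token=$token" --insecure)
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

For example, `DRY_RUN=1 enroll_agent https://192.168.101.11:8220 "$TOKEN"` prints the command, and dropping `DRY_RUN=1` runs it. Here `$TOKEN` stands for the enrollment token copied from Kibana.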

- Download the elastic-apm-agent:

```bash
curl --create-dirs "https://oss.sonatype.org/service/local/repositories/snapshots/content/co/elastic/apm/elastic-apm-agent/1.49.1-SNAPSHOT/elastic-apm-agent-1.49.1-20240429.100006-21.jar" -o /usr/local/apm/elastic-apm-agent.jar
```

- Add the following to your Java startup options:

```
-javaagent:/usr/local/apm/elastic-apm-agent.jar
```

- Set the following environment variable in your ENV file:

```
ELASTIC_APM_SERVER_URL=http://localhost:8200
```

We welcome contributions to improve this project! Please fork the repository and create a pull request with your changes.

This project is licensed under the Apache License. See the LICENSE file for details.