This project was created to allow us to parse and analyze log files in order to gather relevant data. It can be used as is, or as an SDK in which you define your own parsing.

The basic way to use this library is to create a definition for your parsing. This definition allows you to parse a set of log files and extract all entries that match its pattern.

- Installation

- Parse Definitions

- Extracting Data from Logs

- Code Structure

- Searching and organizing log data

- Assertions and LogDataAssertions

- Exporting Results to a CSV File

- Release Notes

For now we use this library with Maven; in later iterations we will publish examples for other build systems.

The following dependency needs to be added to your pom file:

    <dependency>
        <groupId>com.adobe.campaign.tests</groupId>
        <artifactId>log-parser</artifactId>
        <version>1.0.10</version>
    </dependency>

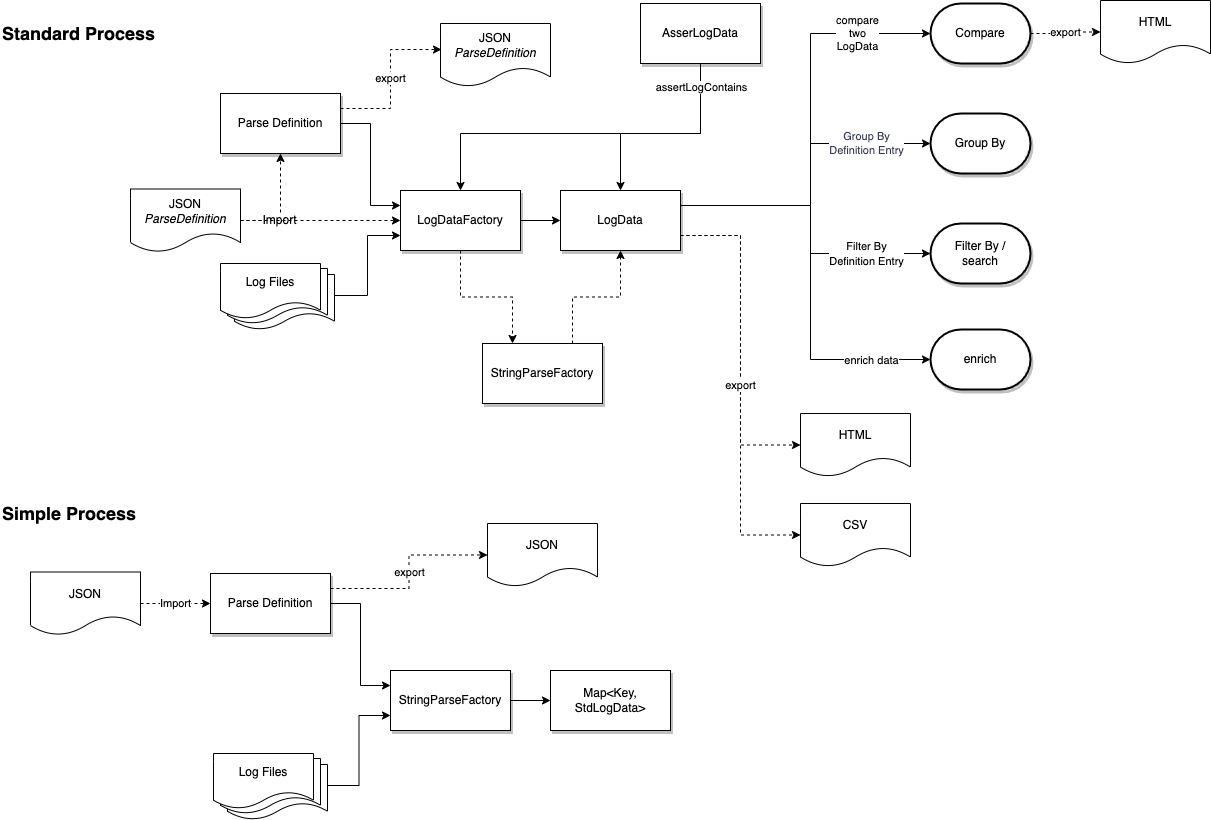

We have two ways of running the log parser:

- Programmatically: as a library, you can use the log-parser in your tests to analyse your log files.

- Command line: as of version 1.11.0, you can run your log parsing from the command line. Further details can be found in the section Command-line Execution of the Log-Parser.

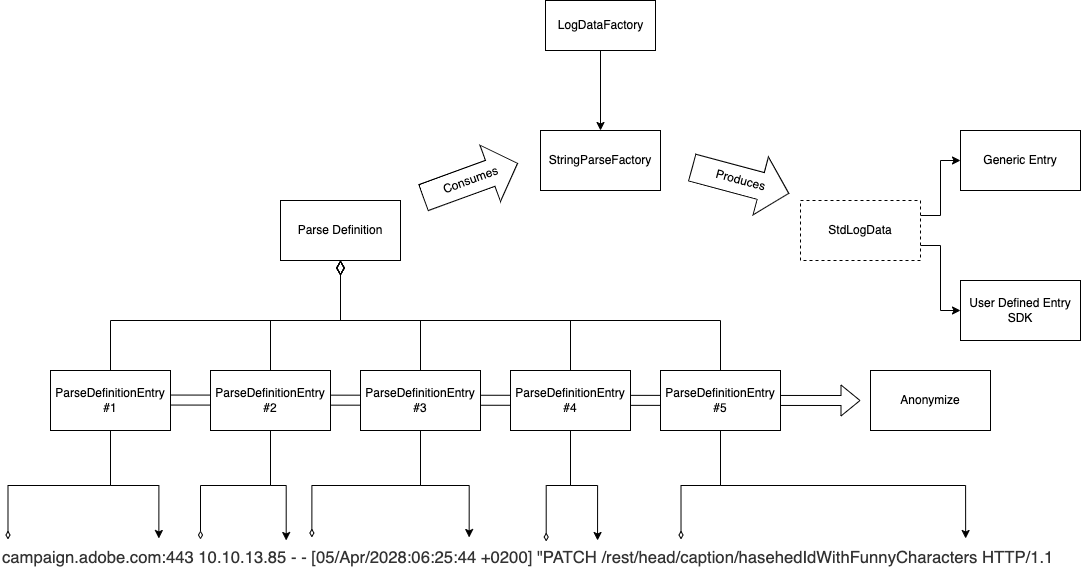

In order to parse logs you need to define a ParseDefinition. A ParseDefinition contains a set of ordered ParseDefinition Entries. While parsing a log line, the LogParser checks whether all entries can be found in that line. If that is the case, the line is stored according to the definitions.

Each Parse Definition consists of:

- A title

- A set of entries

- A padding, allowing us to create a legible key

- A key order, which is used for defining the key

- Whether the result should include the log file name and path

Each entry for a Parse Definition allows us to define:

- A title for the value which will be found.

- The start pattern of the string that will contain the value (null if at the start of a line)

- The end pattern of the string that will contain the value (null if at the end of a line)

- Whether the search is case sensitive

- Whether the value is to be kept. In some cases we just need to find a line with certain particularities, but we don't actually want to store the value.

- Anonymizers: a set of anonymizers can be provided so that some values are skipped when parsing a line.
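To make the start and end patterns concrete, here is a minimal standalone sketch of extracting values between ordered markers. It does not use the log-parser classes: the class `MarkerExtractionSketch`, its nested `Entry` type and the `parse` method are hypothetical names, and the rule of resuming the search at the end of the previous value is an assumption for illustration, not a description of the library's internals.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class MarkerExtractionSketch {

    /** Hypothetical stand-in for one entry: a title plus optional start/end markers. */
    static final class Entry {
        final String title;
        final String start; // null: the value begins at the current position
        final String end;   // null: the value runs to the end of the line

        Entry(String title, String start, String end) {
            this.title = title;
            this.start = start;
            this.end = end;
        }
    }

    /** Returns title -> value, or null when any entry cannot be found in order. */
    static Map<String, String> parse(String line, List<Entry> entries) {
        Map<String, String> result = new LinkedHashMap<>();
        int cursor = 0;
        for (Entry entry : entries) {
            int valueStart = cursor;
            if (entry.start != null) {
                int startIdx = line.indexOf(entry.start, cursor);
                if (startIdx < 0) {
                    return null; // start marker missing: the line does not match
                }
                valueStart = startIdx + entry.start.length();
            }
            int valueEnd = line.length();
            if (entry.end != null) {
                valueEnd = line.indexOf(entry.end, valueStart);
                if (valueEnd < 0) {
                    return null; // end marker missing: the line does not match
                }
            }
            result.put(entry.title, line.substring(valueStart, valueEnd));
            // Assumption: resume at the end of the value, so that an end marker
            // can also serve as the start marker of the next entry.
            cursor = valueEnd;
        }
        return result;
    }
}
```

A line is accepted only if every entry is found in order; otherwise the sketch returns null, mirroring the idea that a line is stored only when all entries can be found in it.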

Once you have defined your parsing, you use the LogDataFactory by passing it:

- The log files it should parse

- The ParseDefinition

By using the StringParseFactory we get a LogData object which allows us to manage the log data that was found.

We have found it useful to anonymize data. This allows you to group log data that contains variables. Anonymization has two features:

- Replacing data using {}

- Ignoring data using []

For example if you store an anonymizer with the value:

Storing key '{}' in the system

the log-parser will merge all lines that contain the same text, but with different values for the key. For example:

- Storing key 'F' in the system

- Storing key 'B' in the system

- Storing key 'G' in the system

will all be stored as Storing key '{}' in the system.

Sometimes we only want to anonymize part of a line. This is useful if you want to do post-treatment. In our previous example, Storing key 'G' in the system would be merged, but NEO-1234 : Storing key 'G' in the system would not. In this case we can do a partial anonymization using the [] notation. For example, if we enrich our original template:

[]Storing key '{}' in the system

In this case the lines:

- NEO-1234 : Storing key 'G' in the system will be stored as NEO-1234 : Storing key '{}' in the system

- NEO-1234 : Storing key 'H' in the system will be stored as NEO-1234 : Storing key '{}' in the system

- EXA-1234 : Storing key 'Z' in the system will be stored as EXA-1234 : Storing key '{}' in the system

- EXA-1234 : Storing key 'X' in the system will be stored as EXA-1234 : Storing key '{}' in the system
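The two notations can be illustrated with a small standalone sketch. It is not the library's implementation: `AnonymizerSketch` and `anonymize` are hypothetical names, and translating the template into a regular expression is simply one way to reproduce the behaviour described above, where {} replaces a value and [] keeps the original text.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class AnonymizerSketch {
    // Matches either placeholder: "{}" (replace the value) or "[]" (keep the value).
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\}|\\[\\]");

    /** Returns the anonymized line, or the line unchanged if the template does not match. */
    static String anonymize(String line, String template) {
        // Build a regex from the template: literal parts are quoted,
        // "[]" becomes a capturing group, "{}" a non-capturing wildcard.
        StringBuilder regex = new StringBuilder();
        Matcher placeholder = PLACEHOLDER.matcher(template);
        int last = 0;
        while (placeholder.find()) {
            regex.append(Pattern.quote(template.substring(last, placeholder.start())));
            regex.append(placeholder.group().equals("[]") ? "(.*?)" : ".*?");
            last = placeholder.end();
        }
        regex.append(Pattern.quote(template.substring(last)));

        Matcher lineMatcher = Pattern.compile(regex.toString()).matcher(line);
        if (!lineMatcher.matches()) {
            return line;
        }

        // Rebuild the line: keep the text captured by "[]", write "{}" for replaced values.
        StringBuilder out = new StringBuilder();
        placeholder.reset();
        last = 0;
        int group = 1;
        while (placeholder.find()) {
            out.append(template, last, placeholder.start());
            out.append(placeholder.group().equals("[]") ? lineMatcher.group(group++) : "{}");
            last = placeholder.end();
        }
        out.append(template.substring(last));
        return out.toString();
    }
}
```

With the template []Storing key '{}' in the system, the prefix NEO-1234 : is preserved while the key is replaced, so lines with different prefixes remain distinct groups.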

Here is an example of how we can parse a string. The method is leveraged to perform the same parsing in one or many files.

    @Test
    public void parseAStringDemo() throws StringParseException {
        String logString = "afthostXX.qa.campaign.adobe.com:443 - - [02/Apr/2022:08:08:28 +0200] \"GET /rest/head/workflow/WKF193 HTTP/1.1\" 200 ";

        //Create a parse definition
        ParseDefinitionEntry verbDefinition = new ParseDefinitionEntry();
        verbDefinition.setTitle("verb");
        verbDefinition.setStart("\"");
        verbDefinition.setEnd(" /");

        ParseDefinitionEntry apiDefinition = new ParseDefinitionEntry();
        apiDefinition.setTitle("path");
        apiDefinition.setStart(" /");
        apiDefinition.setEnd(" ");

        List<ParseDefinitionEntry> definitionList = Arrays.asList(verbDefinition, apiDefinition);

        //Perform Parsing
        Map<String, String> parseResult = StringParseFactory.parseString(logString, definitionList);

        //Check Results
        assertThat("We should have an entry for verb", parseResult.containsKey("verb"));
        assertThat("We should have the correct value for verb", parseResult.get("verb"), is(equalTo("GET")));
        assertThat("We should have an entry for the API", parseResult.containsKey("path"));
        assertThat("We should have the correct value for path", parseResult.get("path"),
                is(equalTo("rest/head/workflow/WKF193")));
    }

In the code above we parse the log line below. We want to find the REST call "GET /rest/head/workflow/WKF193", and to extract the verb "GET" and the API path "rest/head/workflow/WKF193" (the leading slash belongs to the start marker, so it is not part of the extracted value).

    afthostXX.qa.campaign.adobe.com:443 - - [02/Apr/2022:08:08:28 +0200] "GET /rest/head/workflow/WKF193 HTTP/1.1" 200

The code starts by creating a parse definition with two parse definition entries, which tell us between which markers each value should be extracted. The parse definition is then handed to the StringParseFactory so that the data can be extracted. At the end we can see that each value is stored in a map with the parse definition entry title as its key.

You can import a Parse Definition from, or store it to, a JSON file.

A Parse Definition defined in a JSON file can be imported and used for parsing with the method ParseDefinitionFactory.importParseDefinition. Here is a small example of what the JSON looks like:

{

"title": "Anonymization",

"storeFileName": false,

"storeFilePath": false,

"storePathFrom": "",

"keyPadding": "#",

"keyOrder": [],

"definitionEntries": [

{

"title": "path",

"start": "HTTP/1.1|",

"end": "|Content-Length",

"caseSensitive": false,

"trimQuotes": false,

"toPreserve": true,

"anonymizers": [

"X-Security-Token:{}|SOAPAction:[]"

]

}

]

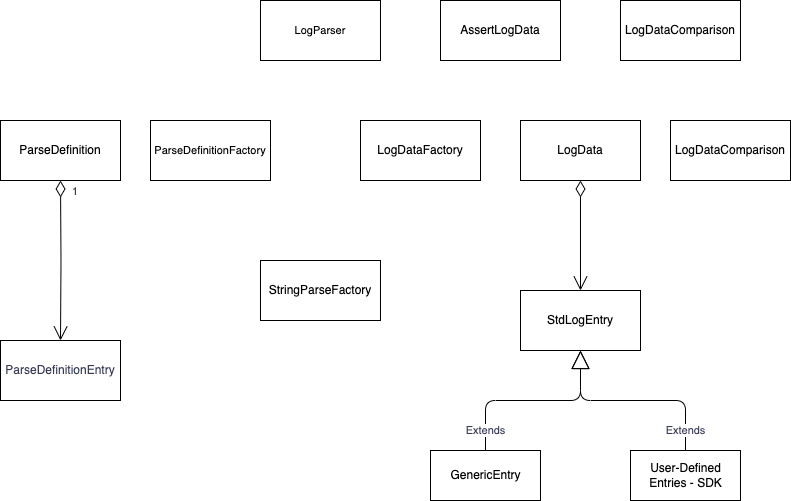

}By default each entry for your lag parsing will be stored as a Generic entry. This means that all values will be stored as Strings. Each entry will have a :

- A key

- A set of values

- The frequency of the key as found in the logs

Using the log parser as an SDK allows you to define your own transformations and also to override many of the behaviors.

In order to use this feature you need to define a class that extends the class StdLogEntry.

You will often want to transform the parsed information into a more manageable object by defining your own fields in the SDK class.

You will need to declare a default constructor and a copy constructor. The copy constructor will allow you to copy the values from one object to another.

You will need to declare how the parsed variables are transformed into your SDK class. This is done in the method setValuesFromMap().

In there you can define a fine-grained extraction of the variables. This could be extracting data hidden in the extracted strings, or simple data transformations such as conversions to integers or dates.

You will need to define what a unique line looks like. Although this is already done in the Definition Rules, you may want to be more precise. This is done in the method makeKey().

Depending on the fields you have defined, you will want to define how the results are represented when they are stored in your system.

You will need to give names to the headers, and provide a map that extracts the values.
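The steps above can be sketched with a standalone class. This is only an illustration under stated assumptions: in a real project the class would extend StdLogEntry, which is omitted here so that the example compiles on its own, and the exact signatures of the library may differ. The class name `WorkflowCallEntrySketch`, its fields and the `fetchHeaders` method are hypothetical; setValuesFromMap, makeKey and fetchValueMap are the method names mentioned in this document.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical entry type. In the real SDK this class would extend StdLogEntry.
final class WorkflowCallEntrySketch {
    private String verb;
    private String workflowId;
    private int frequence = 1;

    // Default constructor.
    WorkflowCallEntrySketch() {
    }

    // Copy constructor: copies the values from one object to another.
    WorkflowCallEntrySketch(WorkflowCallEntrySketch other) {
        this.verb = other.verb;
        this.workflowId = other.workflowId;
        this.frequence = other.frequence;
    }

    // Transforms the parsed strings into typed fields. The workflow id is
    // "hidden" in the path, so it is extracted from the last path segment.
    void setValuesFromMap(Map<String, String> parsedValues) {
        verb = parsedValues.get("verb");
        String path = parsedValues.get("path");
        workflowId = path.substring(path.lastIndexOf('/') + 1);
    }

    // Defines what makes a line unique.
    String makeKey() {
        return verb + "#" + workflowId;
    }

    // Names of the headers used when the results are represented.
    List<String> fetchHeaders() {
        return Arrays.asList("verb", "workflowId", "frequence");
    }

    // Maps each header to its value.
    Map<String, Object> fetchValueMap() {
        Map<String, Object> values = new LinkedHashMap<>();
        values.put("verb", verb);
        values.put("workflowId", workflowId);
        values.put("frequence", frequence);
        return values;
    }
}
```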

Below is a diagram representing the class structure:

As of versions 1.0.4 & 1.0.5 we provide a series of methods for searching and organizing the log data.

We have introduced the filter and search mechanisms. These allow you to search the LogData for values for a given ParseDefinitionEntry. For this we have introduced the following methods:

- isEntryPresent

- searchEntries

- filterBy

We currently have the following signatures:

    public boolean isEntryPresent(String in_parseDefinitionName, String in_searchValue)
    public boolean isEntryPresent(Map<String, Object> in_searchKeyValues)
    public LogData<T> searchEntries(String in_parseDefinitionName, String in_searchValue)
    public LogData<T> searchEntries(Map<String, Object> in_searchKeyValues)
    public LogData<T> filterBy(Map<String, Object> in_filterKeyValues)

In the cases where the method accepts a map, the user can search by a series of search terms. Example:

    Map<String, Object> l_filterProperties = new HashMap<>();
    l_filterProperties.put("Definition 1", "14");
    l_filterProperties.put("Definition 2", "13");

    LogData<GenericEntry> l_foundEntries = l_logData.searchEntries(l_filterProperties);

We have introduced the groupBy mechanism. This functionality allows you to organize your results in more detail. Given a LogData object and a list of ParseDefinitionEntry names, we generate a new LogData object containing the groups formed by the passed ParseDefinitionEntries and the number of entries in each group.

Let's take the following case:

| Definition 1 | Definition 2 | Definition 3 | Definition 4 |

|---|---|---|---|

| 12 | 14 | 13 | AA |

| 112 | 114 | 113 | AAA |

| 120 | 14 | 13 | AA |

If we perform groupBy with the ParseDefinitionEntry Definition 2, we get a new LogData object with two entries:

| Definition 2 | Frequence |

|---|---|

| 14 | 2 |

| 114 | 1 |

We can also pass a list of groupBy items, or even chain groupBy calls.

We can create a subgroup of the LogData by calling groupBy:

    LogData<GenericEntry> l_myGroupedData = logData.groupBy(Arrays.asList("Definition 1", "Definition 4"));

    //or

    LogData<MyImplementationOfStdLogEntry> l_myGroupedData = logData.groupBy(Arrays.asList("Definition 1", "Definition 4"), MyImplementationOfStdLogEntry.class);

In this case we get:

| Definition 1 | Definition 4 | Frequence |

|---|---|---|

| 12 | AA | 1 |

| 112 | AAA | 1 |

| 120 | AA | 1 |

The GroupBy can also be chained. Example:

    LogData<GenericEntry> l_myGroupedData = logData.groupBy(Arrays.asList("Definition 1", "Definition 4")).groupBy("Definition 4");

In this case we get:

| Definition 4 | Frequence |

|---|---|

| AA | 2 |

| AAA | 1 |
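The grouping shown in the tables above can be reproduced with a few lines of plain Java. This is a sketch of the idea only, not the LogData implementation: `GroupBySketch`, `row` and `groupBy` are hypothetical names, and each log entry is modelled as a simple map from entry title to value.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class GroupBySketch {

    /** Builds one row of the example table (hypothetical helper). */
    static Map<String, String> row(String d1, String d2, String d3, String d4) {
        Map<String, String> row = new LinkedHashMap<>();
        row.put("Definition 1", d1);
        row.put("Definition 2", d2);
        row.put("Definition 3", d3);
        row.put("Definition 4", d4);
        return row;
    }

    /** Groups rows by the values of the given titles and counts the rows in each group. */
    static Map<List<String>, Integer> groupBy(List<Map<String, String>> rows, List<String> titles) {
        Map<List<String>, Integer> frequencies = new LinkedHashMap<>();
        for (Map<String, String> row : rows) {
            // The group key is the list of values for the requested titles.
            List<String> key = new ArrayList<>();
            for (String title : titles) {
                key.add(row.get(title));
            }
            frequencies.merge(key, 1, Integer::sum);
        }
        return frequencies;
    }
}
```

Grouping the three example rows by Definition 2 yields the groups [14] with frequency 2 and [114] with frequency 1, matching the first result table.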

As of version 1.11.0 we have introduced the possibility to compare two LogData objects. This is a light comparison that checks, for a given key, whether it is absent, added, or has changed in frequency. The method compare returns a LogDataComparison object that contains the results of the comparison. A comparison can be of three types:

- NEW : The entry has been added

- Removed : The entry has been removed

- Changed : The entry has changed in frequency

Apart from this we return:

- delta : The difference in frequency

- deltaRatio : The difference in frequency as a ratio in %

These values are negative if the values have decreased.
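As an illustration of how delta and deltaRatio relate to the two frequencies, here is a standalone sketch. It does not use LogDataComparison: the names `FrequencyDiffSketch`, `Diff` and `ChangeType` are hypothetical, the ratio is assumed to be relative to the reference frequency, and reporting 100 % for a new entry is an assumption made here to avoid dividing by zero.

```java
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

final class FrequencyDiffSketch {
    enum ChangeType { NEW, REMOVED, CHANGED }

    static final class Diff {
        final ChangeType type;
        final int delta;         // target frequency minus reference frequency
        final double deltaRatio; // delta as a percentage of the reference frequency

        Diff(ChangeType type, int delta, double deltaRatio) {
            this.type = type;
            this.delta = delta;
            this.deltaRatio = deltaRatio;
        }
    }

    /** Compares key -> frequency maps; keys with unchanged frequency are omitted. */
    static Map<String, Diff> compare(Map<String, Integer> reference, Map<String, Integer> target) {
        Set<String> keys = new TreeSet<>(reference.keySet());
        keys.addAll(target.keySet());

        Map<String, Diff> result = new TreeMap<>();
        for (String key : keys) {
            int before = reference.getOrDefault(key, 0);
            int after = target.getOrDefault(key, 0);
            if (before == after) {
                continue;
            }
            ChangeType type = !reference.containsKey(key) ? ChangeType.NEW
                    : !target.containsKey(key) ? ChangeType.REMOVED
                    : ChangeType.CHANGED;
            int delta = after - before;
            // Assumption: a new entry has no reference frequency, so it is reported as 100 %.
            double ratio = before == 0 ? 100.0 : 100.0 * delta / before;
            result.put(key, new Diff(type, delta, ratio));
        }
        return result;
    }
}
```

A key whose frequency drops from 4 to 3 gives a delta of -1 and a deltaRatio of -25, which shows why both values are negative when the frequency has decreased.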

Creating a differentiation report is done with the method LogData.compare(LogData<T> in_logData). This method returns a LogDataComparison object that contains the results of the comparison.

We can generate an HTML report in which the differences are highlighted. This is done with the method LogDataFactory.generateComparisonReport(LogData reference, LogData target, String filename), which generates an HTML report detailing the differences found.

As of version 1.0.5 we have introduced the notion of assertions. Assertions can either take a LogData object or a set of files as input.

We currently have the following assertions:

    AssertLogData.assertLogContains(LogData<T> in_logData, String in_entryTitle, String in_expectedValue)
    AssertLogData.assertLogContains(List<String> in_filePathList, ParseDefinition in_parseDefinition, String in_entryTitle, String in_expectedValue)

AssertLogData.assertLogContains(LogData<T>, String, String) allows you to perform an assertion on an existing LogData object.

AssertLogData.assertLogContains(List<String>, ParseDefinition, String, String) allows you to perform an assertion directly on a file.

We now have the possibility to export the log data results into a CSV file. The file name is derived from the Parse Definition name, suffixed with "-export.csv".
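To show what such an export amounts to, here is a minimal standalone sketch that turns headers and rows into CSV text. It is not the library's export code: `CsvExportSketch`, `escape` and `toCsv` are hypothetical names, and the quoting rules follow the common CSV convention of wrapping values that contain commas, quotes or line breaks in double quotes.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

final class CsvExportSketch {

    /** Quotes a value when it contains a comma, a double quote or a line break. */
    static String escape(String value) {
        if (value.contains(",") || value.contains("\"") || value.contains("\n")) {
            return "\"" + value.replace("\"", "\"\"") + "\"";
        }
        return value;
    }

    /** Writes one header line followed by one line per row, in header order. */
    static String toCsv(List<String> headers, List<Map<String, String>> rows) {
        StringBuilder csv = new StringBuilder();
        csv.append(joinEscaped(headers)).append('\n');
        for (Map<String, String> row : rows) {
            List<String> cells = new ArrayList<>();
            for (String header : headers) {
                // Missing values are exported as empty cells.
                cells.add(row.getOrDefault(header, ""));
            }
            csv.append(joinEscaped(cells)).append('\n');
        }
        return csv.toString();
    }

    private static String joinEscaped(List<String> cells) {
        List<String> escaped = new ArrayList<>();
        for (String cell : cells) {
            escaped.add(escape(cell));
        }
        return String.join(",", escaped);
    }
}
```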

As of version 1.11.0 we have introduced the possibility of running the log-parser from the command line. This is done by using the executable jar file or executing the main method in maven.

The results will currently be stored as a CSV or HTML file.

The command line requires you to provide at least the following information:

- --startDir: The root path from which the logs should be searched.

- --parseDefinition: The path to the parse definition file.

The typical command line would look like this:

mvn exec:java -Dexec.args="--startDir=src/test/resources/nestedDirs/ --parseDefinition=src/test/resources/parseDefinition.json"

or

java -jar log-parser-1.11.0.jar --startDir=/path/to/logs --parseDefinition=/path/to/parseDefinition.json

You can provide additional information such as:

- --fileFilter: The wildcard used for selecting the log files. The default value is *.log

- --reportType: The format of the report. The allowed values are currently HTML & CSV. The default value is HTML

- --reportFileName: The name of the report file. By default, this is the Parse Definition name suffixed with '-export'

- --reportName: The report title as shown in an HTML report. By default the title includes the Parse Definition name

You can get a print out of the command line options by running the command with the --help flag.

All reports are stored in the directory ./log-parser-reports/export/.
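The combination of --startDir and --fileFilter amounts to a recursive search for files whose names match a wildcard. The sketch below illustrates that idea with the JDK's own glob matcher from java.nio.file; this is a swapped-in technique for illustration, not the mechanism the log-parser itself uses, and `LogFileFinderSketch` and `findLogFiles` are hypothetical names.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.FileSystems;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.PathMatcher;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

final class LogFileFinderSketch {

    /** Returns all regular files under startDir whose file name matches the wildcard. */
    static List<Path> findLogFiles(Path startDir, String wildcard) {
        PathMatcher matcher = FileSystems.getDefault().getPathMatcher("glob:" + wildcard);
        // Files.walk visits startDir and all of its sub-directories.
        try (Stream<Path> paths = Files.walk(startDir)) {
            return paths
                    .filter(Files::isRegularFile)
                    // Match on the file name only, so "*.log" also finds nested files.
                    .filter(path -> matcher.matches(path.getFileName()))
                    .sorted()
                    .collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```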

- (new feature) #10 We now have an executable for the log-parser. You can perform a log parsing using the command line. For more information please read the section on Command-line Execution of the Log-Parser.

- (new feature) #127 You can now compare two LogData objects. This is a light comparison that checks, for a given key, whether it is absent, added, or has changed in frequency.

- (new feature) #137 We can now generate an HTML report for the differences in log data.

- (new feature) #138 We now have the possibility of anonymizing log data during parsing. For more information please read the section on Anonymizing Data.

- (new feature) #117 You can now include the file name in the result of the analysis.

- (new feature) #141 You can now export a LogData as a table in an HTML file.

- (new feature) #123 We now log the total number and size of the parsed files.

- #110 Moved to Java 11

- #112 Updating License Headers

- #119 Cleanup of deprecated methods, and the consequences thereof.

- Moved main code and tests to the package "core"

- #67 We can now select the files using a wildcard. Given a directory, we can now look for files in its sub-directories using a wildcard. The wildcards are implemented using Apache Commons IO. You can read more on this in the WildcardFilter JavaDoc

- #68 We now present a report of the findings at the end of the analysis.

- #55 We can now export the log parsing results into a CSV file.

- #102 Corrected bug where Log parser could silently stop with no error when confronted with CharSet incompatibilities.

- #120 Corrected the export system as it did not work well with SDK defined entries.

- #148 The LogData#groupBy method did not work well when it was based on an SDK. We now look at the headers and values of the SDK. Also, the target for a groupBy will have to be a GenericEntry, as we cannot guarantee that the target class can support a groupBy.

- Removed ambiguities in the methods for StdLogEntry. For example "fetchValueMap" is no longer abstract, but it can be overridden.

- Building with java8.

- Upgraded Jackson XML to remove critical version

- Setting system to work in both java8 and java11. (Java11 used for sonar)

- Moving back to Java 8 as our clients are still using Java8

- #39 updated the log4J library to 2.17.1 to avoid the PSIRT vulnerability

- #38 Resolved some issues with HashCode

- #37 Upgraded the build to Java11

- #34 Activated sonar in the build process

- #23 Added the searchEntries, and the isEntryPresent methods.

- #20 Adding log data assertions

- keyOrder is now a List

- #32 we have solved an issue with exporting and importing the key orders

- #30 Allowing for the LogDataFactory to accept a JSON file as input for the ParseDefinitions

- #31 Solved bug with importing the JSON file

- #6 We can now import a definition from a JSON file. You can also export a ParseDefinition into a JSON file.

- #8 & #18 Added the filter function.

- #13 Added copy constructors.

- #13 Added a copy method in the StdLogEntry.

- #14 Added a set method to LogData. This allows you to change a LogData entry given a key value and ParseDefinition entry title

- Renamed exception IncorrectParseDefinitionTitleException to IncorrectParseDefinitionException.

- Introduced the LogData Top Class. This encapsulates all results.

- Introduced the LogDataFactory

- Added the groupBy method to extract data from the results

- Open source release.