Links: Paper | PDF with Appendix | Video | arXiv | Zhihu/知乎

MESA is a meta-learning-based ensemble learning framework for solving class-imbalanced learning problems. It is a task-agnostic, general-purpose solution that can boost the performance of most existing machine learning models on imbalanced data.

If you find this repository helpful in your work or research, we would greatly appreciate citations to the following paper:

    @inproceedings{liu2020mesa,
      title={MESA: Boost Ensemble Imbalanced Learning with MEta-SAmpler},
      author={Liu, Zhining and Wei, Pengfei and Jiang, Jing and Cao, Wei and Bian, Jiang and Chang, Yi},
      booktitle={Conference on Neural Information Processing Systems},
      year={2020},
    }

- Cite Us

- Table of Contents

- Background

- Requirements

- Usage

- Visualization and Results

- Miscellaneous

- References

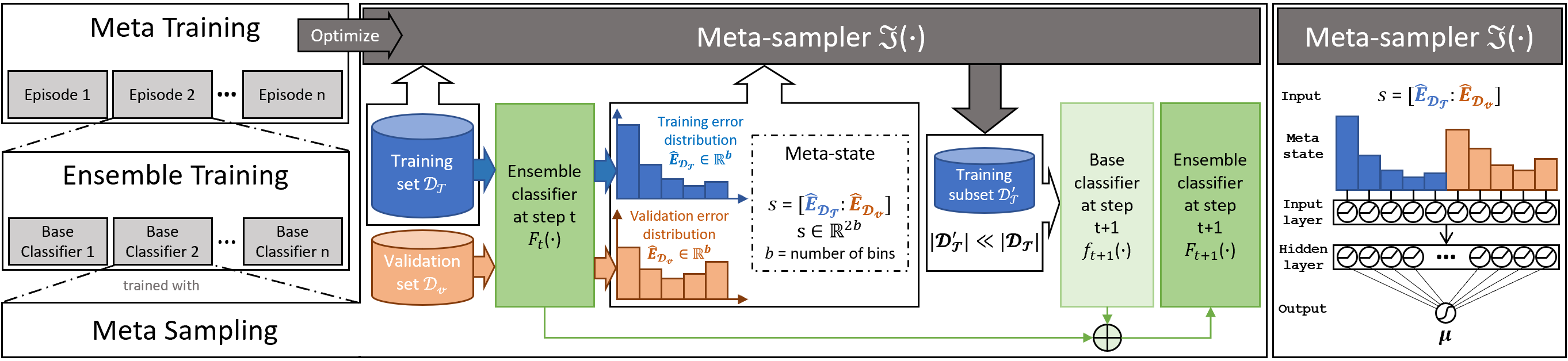

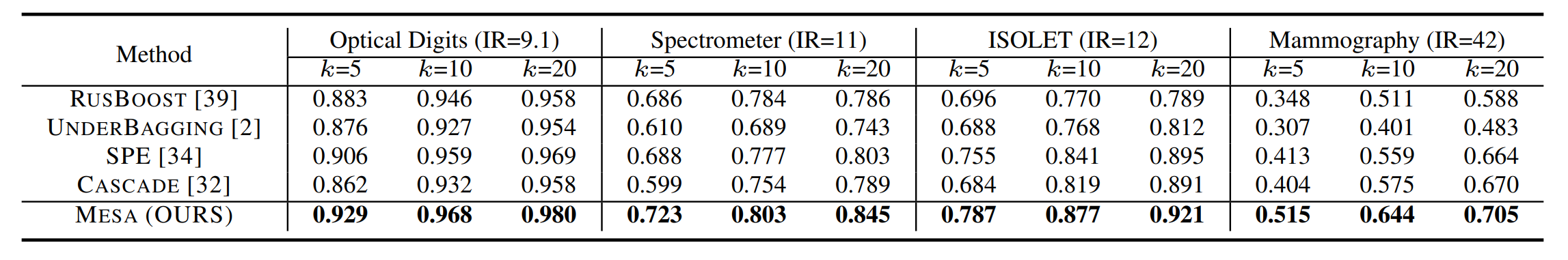

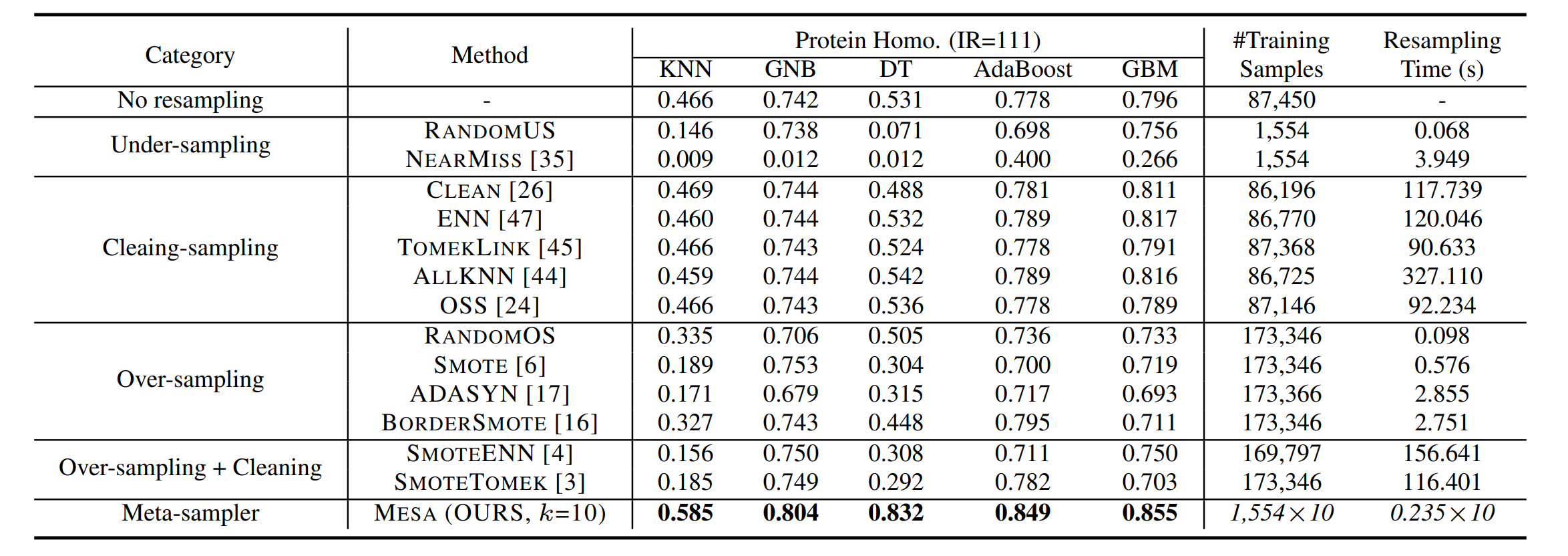

We introduce a novel ensemble imbalanced learning (EIL) framework named MESA. It adaptively resamples the training set over multiple iterations to train a sequence of classifiers, which together form a cascade ensemble model. Rather than following random heuristics, MESA learns a parameterized sampling strategy (the meta-sampler) directly from data to optimize the final metric. It consists of three parts: meta-sampling and ensemble training, which build the ensemble classifiers, and meta-training, which optimizes the meta-sampler.
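To make the resampling step more concrete, here is a minimal sketch of one balanced under-sampling draw. It assumes that each majority-class instance is weighted by a Gaussian of its current prediction error, centred at a value `mu` that would come from the meta-sampler; the function name, its signature, and the toy data are illustrative and are not taken from this repository.

```python
import numpy as np


def gaussian_balanced_undersample(y, errors, mu, sigma=0.2, seed=None):
    """Return indices of a class-balanced subset of the training data.

    y      : binary labels, 1 = minority class, 0 = majority class
    errors : per-instance error in [0, 1], e.g. |y - predicted probability|
    mu     : centre of the Gaussian weight (assumed output of the meta-sampler)
    sigma  : width of the Gaussian weight
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(y)
    errors = np.asarray(errors, dtype=float)
    min_idx = np.flatnonzero(y == 1)
    maj_idx = np.flatnonzero(y == 0)

    # Weight each majority instance by how close its error is to mu.
    # The small constant keeps every probability strictly positive.
    weights = np.exp(-0.5 * ((errors[maj_idx] - mu) / sigma) ** 2) + 1e-12
    probs = weights / weights.sum()

    # Draw as many majority instances as there are minority instances.
    picked = rng.choice(maj_idx, size=len(min_idx), replace=False, p=probs)
    return np.concatenate([picked, min_idx])


# Toy data: 1000 majority and 50 minority instances with random errors.
rng = np.random.default_rng(0)
y = np.array([0] * 1000 + [1] * 50)
errors = rng.uniform(0.0, 1.0, size=y.size)

subset = gaussian_balanced_undersample(y, errors, mu=0.8, sigma=0.2, seed=0)
print(len(subset), int(y[subset].sum()))  # 100 instances, 50 of them minority
```

In this sketch a larger `mu` favours majority instances that the current ensemble gets wrong, while a smaller `mu` favours easy ones; in MESA this choice is learned rather than fixed by hand.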

The figure below gives an overview of the MESA framework.

Here are some personal thoughts on the advantages and disadvantages of MESA. More discussions are welcome!

Pros:

- 🍎 Wide compatibility.
  We decoupled the model-training and meta-training processes in MESA, making it compatible with most existing machine learning models.

- 🍎 High data efficiency.
  MESA performs strictly balanced under-sampling to train each base learner in the ensemble. This makes it more data-efficient than other methods, especially on highly skewed datasets.

- 🍎 Good performance.
  The sampling strategy is optimized for better final generalization performance, so we expect it to yield a better ensemble model.

- 🍎 Transferability.
  We use only task-agnostic meta-information during meta-training, which means that a meta-sampler can be applied directly to unseen tasks, greatly reducing the computational cost of meta-training.

Cons:

- 🍏 Meta-training cost.
  Meta-training repeats the ensemble training process multiple times, which can be costly in practice. Shrinking the dataset used in meta-training reduces the computational cost at the price of a minor performance loss.

- 🍏 Need to set aside a separate validation set for training.
  The meta-state is formed by computing the error distribution on both the training and validation sets.

- 🍏 Possibly unstable performance on small datasets.
  Small datasets may make the obtained error distribution statistics inaccurate or unstable, which interferes with the meta-training process.
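As an illustration of the meta-state mentioned above, the sketch below builds a state vector from the error distributions on the training and validation sets. It assumes the error is the absolute difference between the label and the predicted probability, and that each distribution is a normalised histogram with `num_bins` bins, which gives a state of size `2 * num_bins`. The helper names are hypothetical and the repository's implementation may differ.

```python
import numpy as np


def error_histogram(y_true, y_prob, num_bins=5):
    """Normalised histogram of absolute errors |y_true - y_prob| over [0, 1]."""
    errors = np.abs(np.asarray(y_true, dtype=float) - np.asarray(y_prob, dtype=float))
    counts, _ = np.histogram(errors, bins=num_bins, range=(0.0, 1.0))
    return counts / counts.sum()


def meta_state(y_train, p_train, y_valid, p_valid, num_bins=5):
    """Concatenate train and validation error histograms (size 2 * num_bins)."""
    return np.concatenate([
        error_histogram(y_train, p_train, num_bins),
        error_histogram(y_valid, p_valid, num_bins),
    ])


# Tiny hand-made example: labels and predicted minority-class probabilities.
y_train = np.array([0, 0, 0, 1, 1])
p_train = np.array([0.1, 0.1, 0.3, 0.9, 0.7])
y_valid = np.array([0, 1, 0, 1])
p_valid = np.array([0.5, 0.5, 0.1, 0.9])

state = meta_state(y_train, p_train, y_valid, p_valid, num_bins=5)
print(state.shape)  # (10,)
```

With only a handful of instances per histogram, a single changed prediction moves a bin by a large fraction, which is one way to see why small datasets can make the state noisy.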

Main dependencies:

- Python (>=3.5)

- PyTorch (=1.0.0)

- Gym (>=0.17.3)

- pandas (>=0.23.4)

- numpy (>=1.11)

- scikit-learn (>=0.20.1)

- imbalanced-learn (=0.5.0, optional, for baseline methods)

To install requirements, run:

    pip install -r requirements.txt

NOTE: this implementation requires an old version of PyTorch (v1.0.0). You may want to create a new conda environment to run our code. A step-by-step guide follows, using the CPU build of PyTorch as an example:

    conda create --name mesa python=3.7.11
    conda activate mesa
    conda install pytorch-cpu==1.0.0 torchvision-cpu==0.2.1 cpuonly -c pytorch
    pip install -r requirements.txt

These commands should get you ready to run MESA. If you have any further questions, please feel free to open an issue or drop me an email.

A typical usage example:

    # Mesa, parser, Rater and load_dataset are provided by this repository
    from sklearn.tree import DecisionTreeClassifier

    # load dataset & prepare environment
    args = parser.parse_args()
    rater = Rater(args.metric)
    X_train, y_train, X_valid, y_valid, X_test, y_test = load_dataset(args.dataset)
    base_estimator = DecisionTreeClassifier()

    # meta-training
    mesa = Mesa(
        args=args,
        base_estimator=base_estimator,
        n_estimators=10)
    mesa.meta_fit(X_train, y_train, X_valid, y_valid, X_test, y_test)

    # ensemble training
    mesa.fit(X_train, y_train, X_valid, y_valid)

    # evaluate
    y_pred_test = mesa.predict_proba(X_test)[:, 1]
    score = rater.score(y_test, y_pred_test)

Running main.py

Here is an example:

    python main.py --dataset Mammo --meta_verbose 10 --update_steps 1000

You can get help with arguments by running:

    python main.py --help

    optional arguments:
      # Soft Actor-critic Arguments
      -h, --help            show this help message and exit
      --env-name ENV_NAME
      --policy POLICY       Policy Type: Gaussian | Deterministic (default:
                            Gaussian)
      --eval EVAL           Evaluates a policy every 10 episode (default:
                            True)
      --gamma G             discount factor for reward (default: 0.99)
      --tau G               target smoothing coefficient(τ) (default: 0.01)
      --lr G                learning rate (default: 0.001)
      --lr_decay_steps N    step_size of StepLR learning rate decay scheduler
                            (default: 10)
      --lr_decay_gamma N    gamma of StepLR learning rate decay scheduler
                            (default: 0.99)
      --alpha G             Temperature parameter α determines the relative
                            importance of the entropy term against the reward
                            (default: 0.1)
      --automatic_entropy_tuning G
                            Automaically adjust α (default: False)
      --seed N              random seed (default: None)
      --batch_size N        batch size (default: 64)
      --hidden_size N       hidden size (default: 50)
      --updates_per_step N  model updates per simulator step (default: 1)
      --update_steps N      maximum number of steps (default: 1000)
      --start_steps N       Steps sampling random actions (default: 500)
      --target_update_interval N
                            Value target update per no. of updates per step
                            (default: 1)
      --replay_size N       size of replay buffer (default: 1000)

      # Mesa Arguments
      --cuda                run on CUDA (default: False)
      --dataset N           the dataset used for meta-training (default: Mammo)
      --metric N            the metric used for evaluate (default: aucprc)
      --reward_coefficient N
      --num_bins N          number of bins (default: 5). state-size = 2 *
                            num_bins.
      --sigma N             sigma of the Gaussian function used in meta-sampling
                            (default: 0.2)
      --max_estimators N    maximum number of base estimators in each meta-
                            training episode (default: 10)
      --meta_verbose N      number of episodes between verbose outputs. If 'full'
                            print log for each base estimator (default: 10)
      --meta_verbose_mean_episodes N
                            number of episodes used for compute latest mean score
                            in verbose outputs.
      --verbose N           enable verbose when ensemble fit (default: False)
      --random_state N      random_state (default: None)
      --train_ir N          imbalance ratio of the training set after meta-
                            sampling (default: 1)
      --train_ratio N       the ratio of the data used in meta-training. set
                            train_ratio<1 to use a random subset for meta-training
                            (default: 1)
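When experimenting outside the shell, for example in a notebook, it can help to build the same kind of argument namespace in code. The sketch below is a hypothetical stand-in parser that mirrors only a subset of the flags and defaults listed above. It is not the repository's own `parser`, and the argument types are assumptions.

```python
import argparse


def build_parser():
    """Hypothetical parser mirroring a subset of the flags listed above."""
    p = argparse.ArgumentParser(description="MESA-style options (illustrative subset)")
    p.add_argument("--dataset", type=str, default="Mammo")
    p.add_argument("--metric", type=str, default="aucprc")
    p.add_argument("--num_bins", type=int, default=5)
    p.add_argument("--sigma", type=float, default=0.2)
    p.add_argument("--max_estimators", type=int, default=10)
    p.add_argument("--update_steps", type=int, default=1000)
    p.add_argument("--train_ratio", type=float, default=1.0)
    return p


# Passing an explicit list avoids reading sys.argv, which notebooks populate
# with their own arguments.
args = build_parser().parse_args(["--train_ratio", "0.5", "--update_steps", "200"])

# The help text states that the state size is twice the number of bins.
state_size = 2 * args.num_bins
print(args.dataset, args.train_ratio, args.update_steps, state_size)
# Mammo 0.5 200 10
```

Setting `--train_ratio` below 1 and lowering `--update_steps` is the kind of change the help text suggests for cheaper meta-training runs.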

Running mesa-example.ipynb

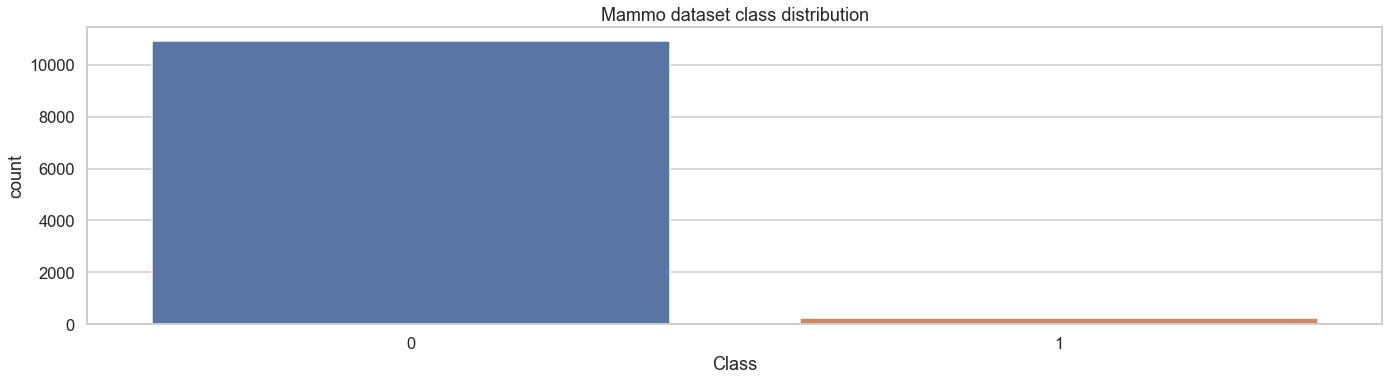

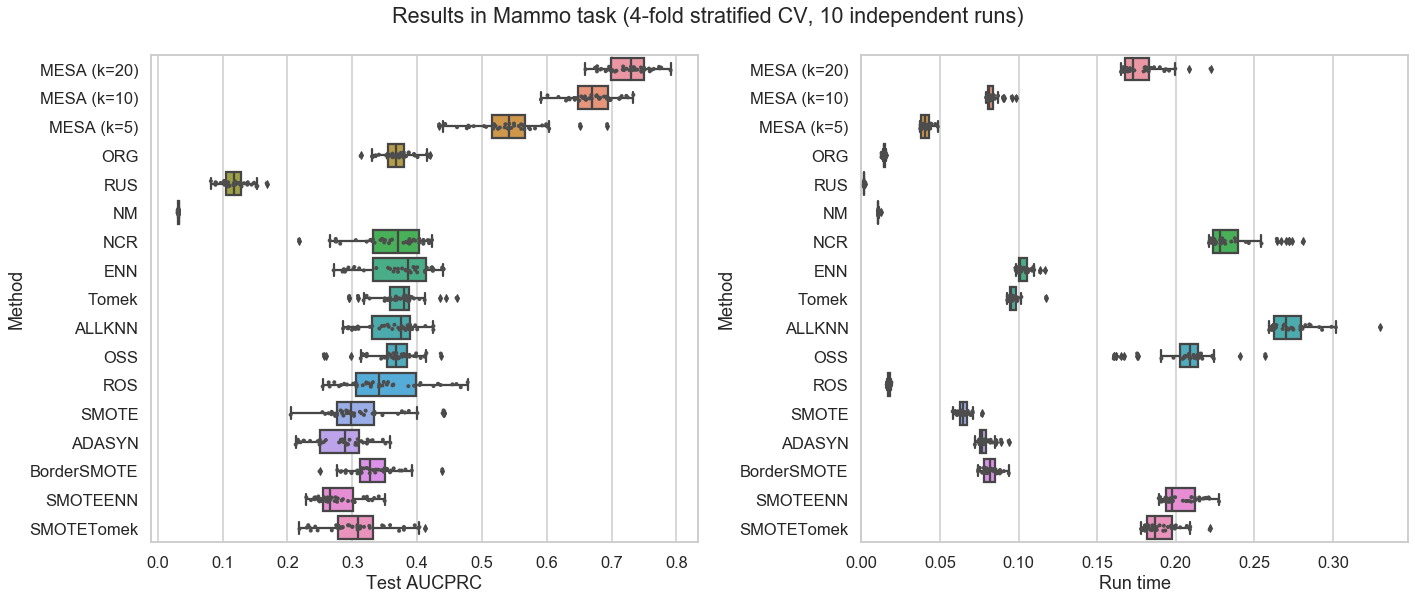

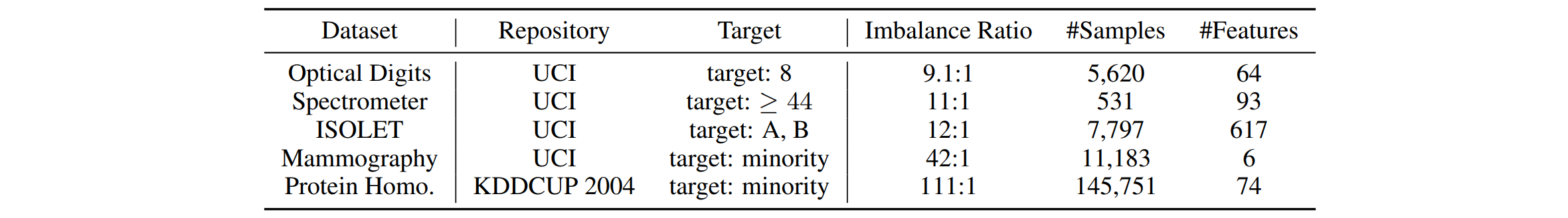

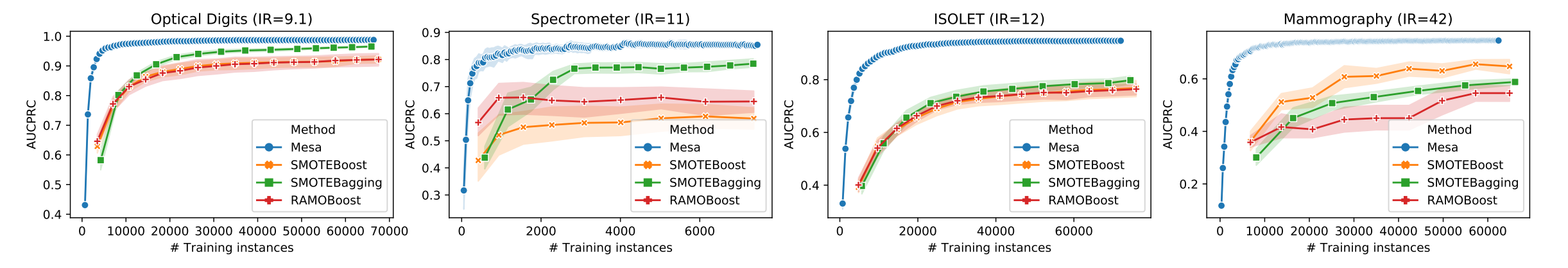

We include a highly imbalanced dataset Mammography (#majority class instances = 10,923, #minority class instances = 260, imbalance ratio = 42.012) and its variants with flip label noise for quick testing and visualization of MESA and other baselines. You can use mesa-example.ipynb to quickly:

- conduct a comparative experiment

- visualize the meta-training process of MESA

- visualize the experimental results of MESA and other baselines

Please check mesa-example.ipynb for more details.
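The class counts quoted for Mammography can be checked with a few lines of arithmetic. The sketch below also estimates how much data one strictly balanced under-sample would contain, assuming it is drawn from the full dataset and ignoring the train/validation/test split, so the figures are only indicative.

```python
# Class counts quoted above for the Mammography dataset.
n_majority = 10923
n_minority = 260

# Imbalance ratio = majority count / minority count.
imbalance_ratio = n_majority / n_minority

# A strictly balanced under-sample keeps every minority instance and an
# equal number of majority instances (assumption: drawn from the full data).
balanced_subset_size = 2 * n_minority
fraction_used = balanced_subset_size / (n_majority + n_minority)

print(round(imbalance_ratio, 3))      # 42.012
print(balanced_subset_size)           # 520
print(round(100 * fraction_used, 2))  # 4.65 (percent of all instances)
```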

From mesa-example.ipynb

Check out our previous work Self-paced Ensemble (ICDE 2020).

It is a simple heuristic-based method, but it is very fast and works reasonably well.

This repository contains:

- Implementation of MESA

- Implementation of 7 ensemble imbalanced learning baselines

  - SMOTEBoost [1]
  - SMOTEBagging [2]
  - RAMOBoost [3]
  - RUSBoost [4]
  - UnderBagging [5]
  - BalanceCascade [6]
  - SelfPacedEnsemble [7]

- Implementation of 11 resampling imbalanced learning baselines [8]

NOTE: The implementations of the above baseline methods are based on imbalanced-algorithms and imbalanced-learn.

| # | Reference |
|---|---|
| [1] | N. V. Chawla, A. Lazarevic, L. O. Hall, and K. W. Bowyer, SMOTEBoost: Improving prediction of the minority class in boosting. In European Conference on Principles of Data Mining and Knowledge Discovery. Springer, 2003, pp. 107–119. |
| [2] | S. Wang and X. Yao, Diversity analysis on imbalanced data sets by using ensemble models. In 2009 IEEE Symposium on Computational Intelligence and Data Mining. IEEE, 2009, pp. 324–331. |
| [3] | Sheng Chen, Haibo He, and Edwardo A. Garcia. 2010. RAMOBoost: Ranked minority oversampling in boosting. IEEE Transactions on Neural Networks 21, 10 (2010), 1624–1642. |
| [4] | C. Seiffert, T. M. Khoshgoftaar, J. Van Hulse, and A. Napolitano, RUSBoost: A hybrid approach to alleviating class imbalance. IEEE Transactions on Systems, Man, and Cybernetics-Part A: Systems and Humans, vol. 40, no. 1, pp. 185–197, 2010. |
| [5] | R. Barandela, R. M. Valdovinos, and J. S. Sanchez, New applications of ensembles of classifiers. Pattern Analysis & Applications, vol. 6, no. 3, pp. 245–256, 2003. |
| [6] | X.-Y. Liu, J. Wu, and Z.-H. Zhou, Exploratory undersampling for class-imbalance learning. IEEE Transactions on Systems, Man, and Cybernetics, Part B (Cybernetics), vol. 39, no. 2, pp. 539–550, 2009. |
| [7] | Zhining Liu, Wei Cao, Zhifeng Gao, Jiang Bian, Hechang Chen, Yi Chang, and Tie-Yan Liu. 2019. Self-paced Ensemble for Highly Imbalanced Massive Data Classification. 2020 IEEE 36th International Conference on Data Engineering (ICDE). IEEE, 2020, pp. 841–852. |
| [8] | Guillaume Lemaître, Fernando Nogueira, and Christos K. Aridas. Imbalanced-learn: A Python toolbox to tackle the curse of imbalanced datasets in machine learning. Journal of Machine Learning Research, 18(17):1–5, 2017. |

Thanks go to these wonderful people (emoji key):

Zhining Liu 🤔 💻

This project follows the all-contributors specification. Contributions of any kind welcome!