A General Regret Bound of Preconditioned Gradient Method for DNN Training

Hongwei Yong, Ying Sun and Lei Zhang

While adaptive learning rate methods, such as Adam, have achieved remarkable improvement in optimizing Deep Neural Networks (DNNs), they consider only the diagonal elements of the full preconditioning matrix. Though full-matrix preconditioned gradient methods theoretically have a lower regret bound, they are impractical for training DNNs because of their high complexity. In this paper, we present a general regret bound with a constrained full-matrix preconditioned gradient, and show that the updating formula of the preconditioner can be derived by solving a cone-constrained optimization problem. With the block-diagonal and Kronecker-factorized constraints, a specific guide function can be obtained. By minimizing the upper bound of the guide function, we develop a new DNN optimizer, termed AdaBK. A series of techniques, including statistics updating, dampening, efficient matrix inverse root computation, and gradient amplitude preservation, are developed to make AdaBK effective and efficient to implement. The proposed AdaBK can be readily embedded into many existing DNN optimizers, e.g., SGDM and AdamW, and the corresponding SGDM_BK and AdamW_BK algorithms demonstrate significant improvements over existing DNN optimizers on benchmark vision tasks, including image classification, object detection and segmentation.

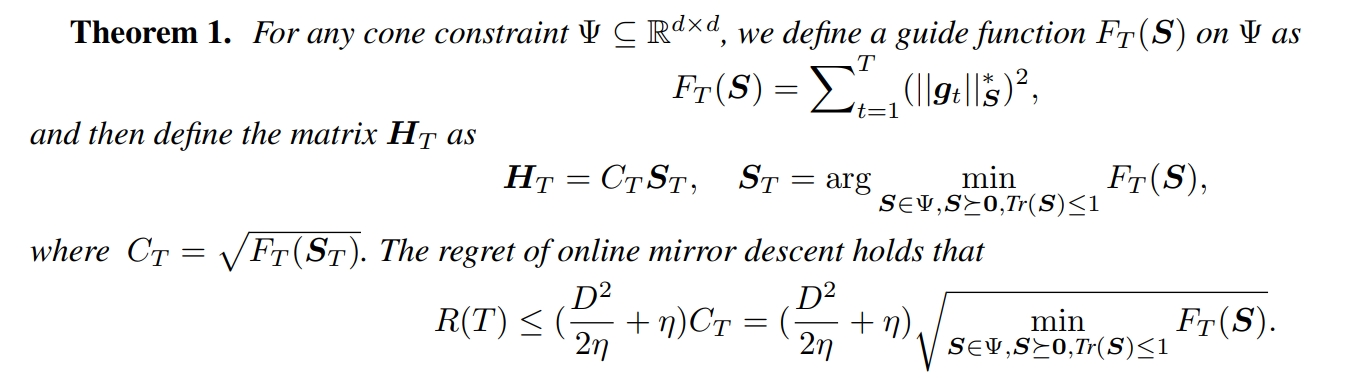

We propose a general regret bound theorem for general constrained preconditioned gradient descent methods:

- AdaBK algorithm: According to the proposed general regret bound theorem, a specific guide function can be obtained under the block-diagonal and Kronecker-factorized constraints for training DNNs. We then obtain the AdaBK algorithm by minimizing the upper bound of the guide function, as follows:

- SGDM_BK and AdamW_BK algorithms: The proposed AdaBK can be embedded into many existing DNN optimizers, e.g., SGDM and AdamW, yielding the corresponding SGDM_BK and AdamW_BK algorithms. We also develop a series of techniques, including statistics updating, dampening, efficient matrix inverse root computation, and gradient amplitude preservation, to make AdaBK effective and efficient in training DNNs. SGDM_BK and AdamW_BK are shown as follows:
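To make the ideas in the two items above more concrete, here is a minimal NumPy sketch of a Kronecker-factorized preconditioning step for a single layer. It is not the released AdaBK implementation. The function names (`inv_root`, `damp`, `bk_precondition`), the gradient-based statistics `L` and `R`, the exponential moving average, the eigendecomposition-based inverse root, and the default values of `beta`, `damping` and `power` are all assumptions chosen for illustration; the paper's exact statistics, exponents and inverse-root routine may differ.

```python
import numpy as np

def inv_root(mat, power):
    """mat^(-power) for a symmetric positive-definite matrix (eigendecomposition)."""
    w, v = np.linalg.eigh(mat)
    return (v * w ** (-power)) @ v.T

def damp(mat, damping):
    """Dampening (assumed form): add a multiple of the largest eigenvalue to the diagonal."""
    lam_max = max(np.linalg.eigvalsh(mat)[-1], 1e-12)
    return mat + damping * lam_max * np.eye(mat.shape[0])

def bk_precondition(grad, state, beta=0.9, damping=1e-3, power=0.25):
    """One hypothetical preconditioning step for a (c_out, c_in) weight gradient."""
    # Statistics updating: moving averages of the two Kronecker factors.
    state["L"] = beta * state["L"] + (1 - beta) * grad @ grad.T
    state["R"] = beta * state["R"] + (1 - beta) * grad.T @ grad
    # Dampening, then matrix inverse roots of each small factor.
    l_inv = inv_root(damp(state["L"], damping), power)
    r_inv = inv_root(damp(state["R"], damping), power)
    pre = l_inv @ grad @ r_inv
    # Gradient amplitude preservation: rescale to the norm of the raw gradient.
    scale = np.linalg.norm(grad) / (np.linalg.norm(pre) + 1e-12)
    return pre * scale, l_inv, r_inv

rng = np.random.default_rng(0)
c_out, c_in = 3, 4
state = {"L": np.zeros((c_out, c_out)), "R": np.zeros((c_in, c_in))}
grad = rng.standard_normal((c_out, c_in))
out, l_inv, r_inv = bk_precondition(grad, state)

# The two small factors act like one (c_out*c_in) x (c_out*c_in) preconditioner:
# vec(A X B) = (B^T kron A) vec(X), with column-major vec.
full = np.kron(r_inv.T, l_inv) @ grad.flatten(order="F")
factored = (l_inv @ grad @ r_inv).flatten(order="F")
print(np.allclose(full, factored))
```

The final check illustrates why the Kronecker-factorized constraint is cheap: only a `c_out x c_out` and a `c_in x c_in` matrix are stored and inverted, yet they act on the gradient like a full block preconditioner. In an SGDM_BK or AdamW_BK style optimizer, `out` would take the place of the raw gradient before the usual momentum or Adam update.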

@inproceedings{Hongwei2023AdaBK,

title={A General Regret Bound of Preconditioned Gradient Method for DNN Training},

author={Hongwei Yong and Ying Sun and Lei Zhang},

booktitle={IEEE conference on computer vision and pattern recognition},

year={2023}

}