The repository is:

- A PyTorch library that provides continual learning baseline algorithms on P9D, a benchmark dataset for vision-language continual pretraining.

- The PyTorch implementation of the ICCV 2023 paper "CTP: Towards Vision-Language Continual Pretraining via Compatible Momentum Contrast and Topology Preservation".

Vision-Language Pretraining (VLP) has shown impressive results on diverse downstream tasks by offline training on large-scale datasets. Given the growing nature of real-world data, such an offline training paradigm on ever-expanding data is unsustainable, because models lack the continual learning ability to keep accumulating knowledge. However, most continual learning studies are limited to uni-modal classification, and existing multi-modal datasets cannot simulate continual non-stationary data streams.

To support the study of Vision-Language Continual Pretraining (VLCP), we first contribute a comprehensive and unified benchmark dataset P9D which contains over one million product image-text pairs from 9 industries. The data from each industry as an independent task supports continual learning and conforms to the real-world long-tail nature to simulate pretraining on web data.

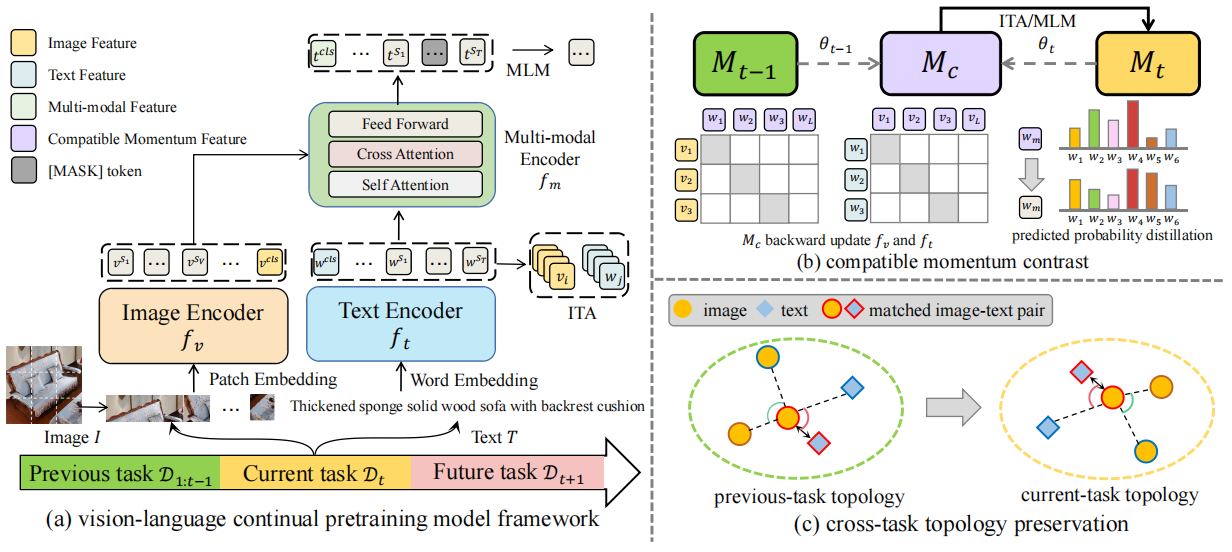

We comprehensively study the characteristics and challenges of VLCP, and propose a new algorithm: Compatible momentum contrast with Topology Preservation, dubbed CTP. The compatible momentum model absorbs the knowledge of the current and previous-task models to flexibly update the modal feature. Moreover, Topology Preservation transfers the knowledge of embedding across tasks while preserving the flexibility of feature adjustment.
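As a rough illustration of the "absorbs the knowledge of the current and previous-task models" idea, the sketch below applies an exponential moving average toward a blend of the two models' parameters. This is not the update rule from the paper: the blending weight `alpha`, the momentum value `m`, and the function name are assumptions made for illustration, and parameters are plain floats rather than tensors.

```python
from typing import Dict

Params = Dict[str, float]


def compatible_momentum_update(
    momentum: Params,
    current: Params,
    previous: Params,
    m: float = 0.995,      # assumed EMA momentum coefficient
    alpha: float = 0.5,    # assumed (hypothetical) weight on the current-task model
) -> Params:
    """Move the momentum parameters toward a blend of current and previous-task parameters."""
    if not 0.0 <= m <= 1.0 or not 0.0 <= alpha <= 1.0:
        raise ValueError("m and alpha must lie in [0, 1]")
    updated: Params = {}
    for name, value in momentum.items():
        # Blend the two sources of knowledge into a single update target.
        target = alpha * current[name] + (1.0 - alpha) * previous[name]
        # Standard exponential moving average toward that target.
        updated[name] = m * value + (1.0 - m) * target
    return updated
```

With `alpha=1.0` this reduces to the usual momentum-encoder update that tracks only the current model; smaller values pull the momentum model toward the previous-task model as well.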

- Python=3.7.15

- PyTorch=1.11.0

- Nvidia Driver Version=470.82.01

- CUDA Version=11.4

See requirements.txt for the full list of dependencies, which can be installed with:

pip install -r requirements.txt

For a detailed introduction to each baseline method, see the appendix of our paper or the corresponding original paper.

The train folder provides our reimplementations of these methods for the vision-language continual pretraining task. We also provide the training logs of all methods as supplementary material.

- SeqF: Learning approach which learns each task incrementally while not using any data or knowledge from previous tasks.

- JointT: Learning approach which has access to all data from previous tasks and serves as an upper bound baseline.

- SI: Continual learning through synaptic intelligence. arxiv | ICML 2017 | code

- MAS: Memory aware synapses: Learning what (not) to forget. arxiv | ECCV 2018 | code

- EWC: Overcoming catastrophic forgetting in neural networks. arxiv | PNAS 2017

- LWF: Learning without Forgetting. arxiv | TPAMI 2017

- AFEC: AFEC: Active Forgetting of Negative Transfer in Continual Learning. arxiv | NeurIPS 2021 | code

- RWalk: Riemannian Walk for Incremental Learning: Understanding Forgetting and Intransigence. arxiv | ECCV 2018 | code

- ER: Continual learning with tiny episodic memories. arxiv | code

- MoF: (Mean-of-Feature). The exemplar sampling method used in ICARL.

- Kmeans: Use online k-Means to estimate the k centroids in feature space and update the exemplar buffer. It is documented in arxiv as a comparative sampling method.

- ICARL: Incremental Classifier and Representation Learning. arxiv | CVPR 2017 | code

- LUCIR: Learning a Unified Classifier Incrementally via Rebalancing. CVPR 2019 | code
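To make the MoF (Mean-of-Feature) entry concrete, here is a minimal sketch of herding-style exemplar selection as commonly described for ICARL: exemplars are picked greedily so that the mean of the selected features stays close to the mean of all features. It is not the code from the train folder; the function name is hypothetical, features are plain Python lists, and the feature normalization usually applied beforehand is omitted.

```python
from typing import List, Sequence


def herding_select(features: Sequence[Sequence[float]], k: int) -> List[int]:
    """Greedily pick up to k indices whose running feature mean tracks the overall mean."""
    n = len(features)
    if n == 0 or k <= 0:
        return []
    dim = len(features[0])
    mean = [sum(f[d] for f in features) / n for d in range(dim)]

    selected: List[int] = []
    running_sum = [0.0] * dim
    for step in range(1, min(k, n) + 1):
        best_idx, best_dist = -1, float("inf")
        for i, f in enumerate(features):
            if i in selected:
                continue
            # Squared distance between the overall mean and the mean of the
            # exemplar set if candidate i were added at this step.
            dist = sum(
                (mean[d] - (running_sum[d] + f[d]) / step) ** 2 for d in range(dim)
            )
            if dist < best_dist:
                best_idx, best_dist = i, dist
        selected.append(best_idx)
        running_sum = [running_sum[d] + features[best_idx][d] for d in range(dim)]
    return selected
```

For example, `herding_select([[0.0], [1.0], [2.0], [10.0]], 2)` first picks the feature closest to the overall mean and then the one that keeps the two-exemplar mean closest to it.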

Details of the P9D dataset can be found in this repository.

The model weights of each method are too large to host in full. For example, each baseline method has model weights obtained from 8 tasks (2.3 GB × 8 = 18.4 GB). As an alternative, we provide the training logs of all baseline methods in the default and reversed task orders.

We also provide download links for the CTP and CTP_ER model weights, which were trained in the default task order. The Google Drive link contains only the model weights of the final task, while the Baidu Netdisk link contains the model weights of every task.

| | training log | model weights |
|---|---|---|
| Google Drive | Here | Here |
| Baidu Netdisk | Here | Here |

- Modify the file paths of the dataset in `configs/base_seqF.yaml` to your path.

- Modify the LOG_NAME and OUT_DIR in `shell/seq_xxx.sh` to your storage path. `xxx` represents the name of the method.

- Change the current path to the `shell` folder, and run the corresponding script `seq_xxx.sh`: `cd /shell/`, then `sh seq_xxx.sh`.

- The corresponding training log will be written in the `logger` folder.
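When training several methods in a row, the launch step above can be scripted. The helper below is only a sketch: it assumes the scripts follow the `seq_xxx.sh` naming inside the `shell` folder, and both the function name and the method name `ewc` used in the usage note are hypothetical placeholders rather than names confirmed by this repository.

```python
from pathlib import Path
from typing import List, Tuple


def training_command(repo_root: str, method: str) -> Tuple[Path, List[str]]:
    """Return (working directory, argv) for launching one method's training script."""
    if not method or "/" in method:
        raise ValueError("method must be a plain script suffix such as 'xxx'")
    # Scripts are run from inside the shell folder, mirroring the manual steps.
    shell_dir = Path(repo_root) / "shell"
    return shell_dir, ["sh", f"seq_{method}.sh"]
```

A call such as `cwd, argv = training_command(".", "ewc")` followed by `subprocess.run(argv, cwd=cwd, check=True)` would then run the script from the `shell` folder, provided a script with that suffix exists.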

- Modify the LOG_NAME and OUT_DIR in `eval.sh` to the storage path of the trained model.

- Run the evaluation script `eval.sh`: `sh eval.sh`

If this codebase is useful to you, please cite our work:

    @article{zhu2023ctp,
      title={CTP: Towards Vision-Language Continual Pretraining via Compatible Momentum Contrast and Topology Preservation},
      author={Hongguang Zhu and Yunchao Wei and Xiaodan Liang and Chunjie Zhang and Yao Zhao},
      journal={Proceedings of the IEEE International Conference on Computer Vision},
      year={2023},
    }

If you have any questions, please feel free to contact me: [email protected] or [email protected].

- Li, Junnan, et al. "Align before Fuse: Vision and Language Representation Learning with Momentum Distillation." NeurIPS. 2021.

- Masana, Marc, et al. "Class-Incremental Learning: Survey and Performance Evaluation on Image Classification." TPAMI. 2023.

- Zhou, Dawei, et al. "PyCIL: a Python toolbox for class-incremental learning." SCIENCE CHINA Information Sciences. 2023.

- Hong, Xiaopeng, et al. "An Incremental Learning, Continual Learning, and Life-Long Learning Repository." GitHub repository.

- Wang, Liyuan, et al. "A Comprehensive Survey of Continual Learning: Theory, Method and Application." arXiv. 2023.