ArcNerf is a framework that brings together many state-of-the-art NeRF-based methods, with useful functionality for novel view rendering and object extraction.

The framework is highly modular, which allows you to modify any component in the pipeline and develop your own algorithm easily.

It is developed by TencentARC Lab.

[2023.02] We provide an HDR-NeRF implementation and a benchmark on the HDR-Real dataset. We also provide a simple guide for adding new algorithms/datasets to the pipeline. See guidance.

In recent months, many frameworks built around a common NeRF-based pipeline have been proposed:

(And a very nice start-up company, Luma.ai, is working on NeRF-based real-time rendering of 3D scenes.)

All these amazing works are trying to bring state-of-the-art NeRF-based methods together into a complete, modular framework in which it is easy to change any component and run experiments quickly.

Toward the same goal, we are working on the following areas that could make this project helpful to the community:

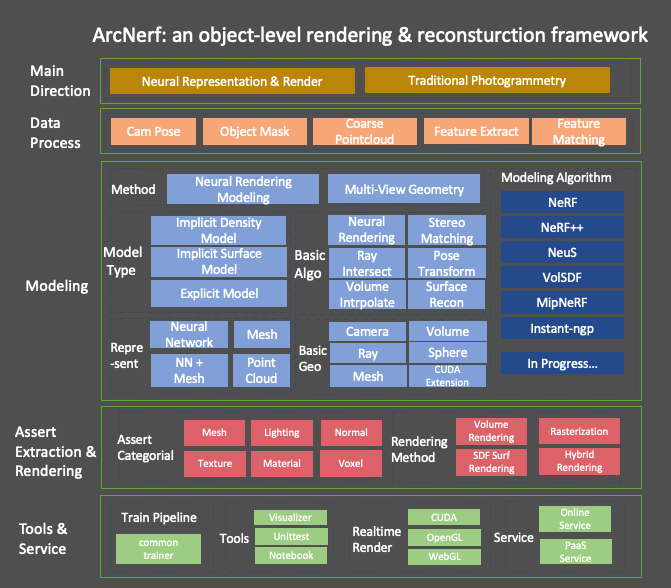

- Here is the framework overview. Note that not all of the designed features have been implemented in this framework (e.g. the Traditional MVS Branch). We are working on extending it in the near future.

- Highly modular design of pipeline:

- Every field is separated, and you can plug in any newly developed module under the framework. These fields can be easily controlled by the config files and modified without affecting the others.

- We provide both an sdf model and a background model, for modeling the object and the background respectively; these are not commonly provided in other repos.

- Based on this pipeline, we can easily extend the original nerf/neus model for:

- NeRF with pruning volume, with freq embed

- NeRF with hashGrid embed

- NeRF with hashGrid embed + volume pruning -> NGP model

- NeuS with pruning volume, with freq embed

- NeuS with hashGrid embed

- NeuS with hashGrid embed + volume pruning -> NeuS-NGP model

- Plugging in modules such as volume pruning improves speed and produces better results. See expr for more detail.

- foreground_model and background_model are well separated. Each bounds its modeling to the inner or outer area using a sphere, volume, or other geometric structure. This suits commonly captured everyday video, providing a high-quality object mesh and background rendering at the same time.

- Unified dataset and benchmark:

- We split the datasets following the official repos, and all methods run under the same settings for fair comparison.

- We also provide unittests for the datasets, so you can easily check whether the data configs are correct.

- Many useful functionalities are provided:

- Mesh extraction on Density Model or SDF Model. (We are still working on incorporating better extraction functions to collect assets for modern graphics engines.)

- Colmap preparation on your own captured data.

- Surface rendering on the sdf model.

- Plentiful geometry functions implemented with a torch backend.

- For other functions on the trainer and logging, please refer to doc.

- Docs and Code:

- All functions have detailed docs on their usage, and operations are commented with their tensor sizes, which makes it easy to follow how each component changes the data.

- We have implemented helpful geometry functions on mesh/rays/sphere/volume/cam_pose in torch (some as CUDA extensions). They could be useful in your other 3D-related projects as well.

- We also provide experiment notes on our trials.

- Tests and Visual helpers:

- We have developed an interactive visualizer to easily test the correctness of our geometry functions. It is compatible with torch/np arrays.

- We have written a lot of unittests for the geometry and modelling functions. Take a look, and you will easily understand how to use the visualizer to check your own implementation.
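One of the pluggable components named in the list above is the frequency ("freq") embedding. The sketch below assumes this refers to the sinusoidal positional encoding introduced with the original NeRF; the function name `freq_embed` and its parameters are illustrative and are not ArcNerf's API. It uses NumPy rather than torch to stay dependency-light, and, like many implementations, it omits the factor of pi from the paper's formula.

```python
import numpy as np


def freq_embed(x: np.ndarray, n_freqs: int = 10, include_input: bool = True) -> np.ndarray:
    """Sinusoidal embedding of a batch of points.

    x: (B, D) coordinates.
    Returns (B, D * 2 * n_freqs), plus D extra columns if include_input is True.
    """
    freqs = 2.0 ** np.arange(n_freqs)                       # (F,)
    scaled = x[:, None, :] * freqs[None, :, None]           # (B, F, D)
    parts = [np.sin(scaled), np.cos(scaled)]                # each (B, F, D)
    out = np.stack(parts, axis=2).reshape(x.shape[0], -1)   # (B, F * 2 * D)
    if include_input:
        out = np.concatenate([x, out], axis=-1)             # (B, D + F * 2 * D)
    return out


pts = np.zeros((4, 3))
emb = freq_embed(pts, n_freqs=10)
print(emb.shape)  # (4, 63): 3 raw coordinates + 3 * 2 * 10 sinusoids
```

A hash-grid embedding would replace this function with a learned lookup table, which is why keeping the embedder behind a common interface makes the combinations in the list straightforward to assemble.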

We are still working on many other helpful functions for better rendering and extraction; please refer to todo for more details.

Bring issues to us if you have any suggestions!

Get the repo by `git clone https://github.com/TencentARC/ArcNerf --recursive`.

- Install libs by `pip install -r requirements.txt`.

- Install the customized ops by `sh scripts/install_ops.sh`. (Only for volume sampling/pruning, etc.)

- Install tiny-cuda-nn modules by `sh scripts/install_tinycudann.sh`. (Only for fusemlp/hashgrid encode, etc.)

We tested in an environment with:

- GPU: NVIDIA-A100 with CUDA 11.1 (a lower version may harm the `tinycudann` module).

- cmake: 3.21.3 (>=3.21)

- gcc: 8.3.1 (>=5.4)

- python: 3.8.5 (>=3.7)

- torch: 1.9.1
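Before building the custom ops it can help to compare your machine against the list above. The following is a minimal sketch using only the standard library plus an optional torch import; the function name `check_env` and the report keys are made up for illustration and are not part of ArcNerf.

```python
import importlib.util
import shutil
import sys


def check_env(min_python=(3, 7)):
    """Collect a few facts about the local environment as a dict."""
    report = {
        "python": ".".join(map(str, sys.version_info[:3])),
        "python_ok": sys.version_info[:2] >= min_python,
        # shutil.which returns None when the tool is not on PATH.
        "cmake_found": shutil.which("cmake") is not None,
        "gcc_found": shutil.which("gcc") is not None,
        "torch_installed": importlib.util.find_spec("torch") is not None,
    }
    if report["torch_installed"]:
        import torch

        report["torch"] = torch.__version__
        report["cuda_available"] = torch.cuda.is_available()
        report["torch_cuda"] = torch.version.cuda  # None for CPU-only builds
    return report


if __name__ == "__main__":
    for key, value in check_env().items():
        print(f"{key}: {value}")
```

The sketch only reports whether `cmake` and `gcc` are on the PATH; checking their versions against the minimums listed above is left to the reader.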

Colmap is used to estimate camera poses and a sparse point cloud, which lets you run the algorithms on your own data.

Install it following their instructions.

The two branches simplenerf/simplengp contain only the vanilla nerf and instant-ngp models, with a less complicated model design.

- Download and prepare public datasets by referring to instruction.

- If you use your own captured data, `scripts/data_process.sh` will help you extract the frames and estimate the cameras.

Train by `python train.py --configs configs/default.yaml --gpu_ids 0`.

- `--gpu_ids -1` will use `cpu`, which is good for debugging the code line by line in a local IDE like pycharm without a GPU device.

- For more details on the config, go to default.yaml.

- Adding `--resume path/to/model` resumes training from a checkpoint. The model is saved periodically to guard against unpredictable errors.

- For more detail on the training pipeline, visit common_trainer and trainer.

Eval by `python evaluate.py --configs configs/eval.yaml --gpu_ids 0`. You can set your target model by `--model_pt path/to/model`.

Inference renders customized videos and extracts mesh output.

Run by `python inference.py --configs configs/eval.yaml --gpu_ids 0`. You can set your target model by `--model_pt path/to/model`.
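The train, eval, and inference commands above share the same flag pattern, so they are easy to script. The helper below is a hypothetical convenience wrapper (not shipped with the repo) that only assembles the argument list from the flags quoted in this README; paths such as `path/to/model` are placeholders.

```python
import sys


def build_command(script, configs, gpu_ids=0, model_pt=None, resume=None):
    """Return the argv list for train.py, evaluate.py or inference.py."""
    cmd = [sys.executable, script, "--configs", configs, "--gpu_ids", str(gpu_ids)]
    if model_pt is not None:   # used by evaluate.py / inference.py
        cmd += ["--model_pt", model_pt]
    if resume is not None:     # used by train.py
        cmd += ["--resume", resume]
    return cmd


train_cmd = build_command("train.py", "configs/default.yaml", gpu_ids=0)
eval_cmd = build_command("evaluate.py", "configs/eval.yaml", model_pt="path/to/model")
cpu_cmd = build_command("train.py", "configs/default.yaml", gpu_ids=-1)

for cmd in (train_cmd, eval_cmd, cpu_cmd):
    print(" ".join(cmd[1:]))  # drop the interpreter path for display
# To actually launch a run: subprocess.run(train_cmd, check=True)
```

Passing the command as a list rather than a single string avoids shell quoting problems when a config or checkpoint path contains spaces.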

Some notebooks are provided to help you understand what happens during inference and how to use our visualizer. Go to notebook for more details.

All the datasets inherit the same data class for ray generation and img/mask preparation. All you need to do in a new class is read the images/camera poses for each mode split. The details are here.
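The inheritance pattern described above can be sketched as follows. All names here (`BaseRayDataset`, `read_split`, `get_rays`, `ToyDataset`) are hypothetical and do not mirror ArcNerf's actual classes; the camera convention (looking down -z with y up) is also an assumption. The point is only that a subclass supplies images and poses while ray generation lives in the shared base class.

```python
from typing import Tuple

import numpy as np


class BaseRayDataset:
    """Hypothetical base class: subclasses only load images and poses."""

    def __init__(self, mode: str = "train"):
        self.mode = mode
        # images: (N, H, W, 3); c2w: (N, 4, 4) cam-to-world; focal in pixels
        self.images, self.c2w, self.focal = self.read_split(mode)

    def read_split(self, mode: str) -> Tuple[np.ndarray, np.ndarray, float]:
        raise NotImplementedError

    def get_rays(self, idx: int) -> Tuple[np.ndarray, np.ndarray]:
        """Pinhole rays for image idx: origins and unit directions, each (H*W, 3)."""
        h, w = self.images.shape[1:3]
        u, v = np.meshgrid(np.arange(w) + 0.5, np.arange(h) + 0.5)  # pixel centres, (H, W)
        dirs = np.stack(
            [(u - w / 2) / self.focal, -(v - h / 2) / self.focal, -np.ones_like(u)],
            axis=-1,
        ).reshape(-1, 3)                                            # camera-space directions
        rot, trans = self.c2w[idx, :3, :3], self.c2w[idx, :3, 3]
        rays_d = dirs @ rot.T                                       # rotate into world space
        rays_d /= np.linalg.norm(rays_d, axis=-1, keepdims=True)
        rays_o = np.broadcast_to(trans, rays_d.shape).copy()
        return rays_o, rays_d


class ToyDataset(BaseRayDataset):
    """Stand-in for a real dataset: two blank 4x6 images at identity pose."""

    def read_split(self, mode):
        images = np.ones((2, 4, 6, 3), dtype=np.float32)
        c2w = np.tile(np.eye(4), (2, 1, 1))
        return images, c2w, 5.0


rays_o, rays_d = ToyDataset("train").get_rays(0)
print(rays_o.shape, rays_d.shape)  # (24, 3) (24, 3)
```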

We support customized data through the Capture dataset. You can record a video clip around the object and run the pre-process script under `scripts/data_process.sh`. Note that colmap results depend heavily on how the video is filmed. A clear, stable video covering the object from all angles gives more accurate results.

We put all the dataset configs under conf, and you can check them by running the unittest `tests/tests_arcnerf/tests_dataset/tests_any_dataset.py` and inspecting the results.

For any dataset that we provide, you should check the configs this way to ensure the settings are correct.

See benchmark for details, and expr for some trials we have conducted and some problems we have met.

All images use a white background, the same as the eval in vanilla NeRF.

| method | chair | drums | ficus | hotdog | lego | materials | mic | ship | avg |
|---|---|---|---|---|---|---|---|---|---|
| nerf | 33.30 | 25.11 | 30.47 | 36.73 | 32.86 | 29.87 | 33.24 | 28.70 | 31.285 |
| nerf(paper) | 33.00 | 25.01 | 30.13 | 36.18 | 32.54 | 29.62 | 32.91 | 28.65 | 31.043 |
| ngp | 34.88 | 25.50 | 30.55 | 36.92 | 35.38 | 29.12 | 34.80 | 28.39 | 31.942 |
| ngp(paper) | 34.28 | 25.70 | 33.13 | 36.99 | 36.12 | 29.35 | 35.67 | 30.61 | 32.731 |

- The ngp paper reports PSNR with a black background, which is higher than with a white background.
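To make the background remark concrete, the sketch below composites the same RGBA prediction and ground truth over white and over black and computes PSNR for each. The images are synthetic random data, so the numbers say nothing about real models; the function names are illustrative, and straight (non-premultiplied) alpha is assumed. It only shows that the background choice changes the score for an otherwise identical prediction.

```python
import numpy as np


def composite(rgba: np.ndarray, bkg: float) -> np.ndarray:
    """Alpha-composite an (H, W, 4) image in [0, 1] over a constant background."""
    rgb, alpha = rgba[..., :3], rgba[..., 3:4]
    return rgb * alpha + bkg * (1.0 - alpha)


def psnr(pred: np.ndarray, gt: np.ndarray) -> float:
    """PSNR in dB for images with values in [0, 1]."""
    mse = float(np.mean((pred - gt) ** 2))
    return float(-10.0 * np.log10(mse))


rng = np.random.default_rng(0)
gt = rng.uniform(0.0, 1.0, size=(8, 8, 4))                       # synthetic RGBA "ground truth"
pred = np.clip(gt + rng.normal(0.0, 0.02, size=gt.shape), 0.0, 1.0)

psnr_white = psnr(composite(pred, 1.0), composite(gt, 1.0))
psnr_black = psnr(composite(pred, 0.0), composite(gt, 0.0))
print(f"white: {psnr_white:.2f} dB, black: {psnr_black:.2f} dB")
```

When comparing against published numbers, it is therefore worth confirming which background the reference evaluation used.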

The models are highly modular and organized in a level structure. You are free to modify components at each level through configs, or to develop a new algorithm and plug it in at the desired place.

For more detail on the structure of the model class, visit model and read about each of its components.

We implement plentiful geometry functions in torch under geometry. The operations are batch-based, and their correctness is checked by unittests.

See the doc for more details on what is supported.
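As an illustration of what a batch-based geometry function looks like, here is a minimal ray/sphere intersection, the kind of primitive used to bound a foreground model by a sphere. It is written in NumPy for portability and is a sketch under the assumption of an origin-centred sphere and unit-length ray directions; it is not the repo's implementation, and the function name is hypothetical.

```python
import numpy as np


def ray_sphere_intersect(rays_o, rays_d, radius=1.0):
    """Intersect a batch of rays with an origin-centred sphere.

    rays_o, rays_d: (B, 3), with rays_d of unit length.
    Returns near, far: (B,) distances along each ray (NaN on a miss),
    and hit: (B,) boolean mask.
    """
    # Solve |o + t d|^2 = r^2, i.e. t^2 + 2 b t + c = 0 with |d| = 1.
    b = np.sum(rays_o * rays_d, axis=-1)                  # (B,)
    c = np.sum(rays_o * rays_o, axis=-1) - radius ** 2    # (B,)
    disc = b ** 2 - c                                     # (B,)
    hit = disc >= 0
    sqrt_disc = np.sqrt(np.where(hit, disc, np.nan))      # NaN where the ray misses
    return -b - sqrt_disc, -b + sqrt_disc, hit


origins = np.array([[0.0, 0.0, -3.0], [0.0, 2.0, -3.0]])
directions = np.array([[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]])
near, far, hit = ray_sphere_intersect(origins, directions, radius=1.0)
print(near, far, hit)  # first ray enters at t=2 and exits at t=4; second ray misses
```

Note that `near` is negative when a ray starts inside the sphere, so callers that sample only in front of the camera would clamp it to zero.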

We made an offline interactive 3D visualizer with a plotly backend. All geometry components stored as numpy arrays can be easily plugged into the visualizer. It is compatible with torch-template 3D projects and helps you debug your implementation of geometric functions.

We provide a notebook showing example usage. You can refer to the doc for more details.

This visualizer also lives in a separate repo. Please go to ArcVis if you find it helpful.

We have written many unittests for checking the geometry functions and models. See doc to learn how to run the tests and get visual results.

We suggest you write your own unittests when developing new algorithms to ensure correctness.
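A unittest for a new geometry helper can be as small as the sketch below. The `normalize` function is a hypothetical stand-in for whatever you are developing, and the test layout is a generic `unittest` pattern rather than the repo's own test structure. It checks the two things the docs emphasise: output tensor shape and numerical correctness.

```python
import unittest

import numpy as np


def normalize(vec: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Hypothetical geometry helper: scale each row of a (B, 3) array to unit length."""
    return vec / (np.linalg.norm(vec, axis=-1, keepdims=True) + eps)


class TestNormalize(unittest.TestCase):
    def setUp(self):
        self.batch_size = 16
        self.vec = np.random.default_rng(0).normal(size=(self.batch_size, 3))

    def test_shape_is_preserved(self):
        self.assertEqual(normalize(self.vec).shape, (self.batch_size, 3))

    def test_rows_have_unit_norm(self):
        norms = np.linalg.norm(normalize(self.vec), axis=-1)
        np.testing.assert_allclose(norms, np.ones(self.batch_size), atol=1e-6)

    def test_zero_vector_stays_finite(self):
        out = normalize(np.zeros((1, 3)))
        self.assertTrue(np.isfinite(out).all())


# Run the suite programmatically so the snippet also works inside a notebook.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestNormalize)
result = unittest.TextTestRunner(verbosity=2).run(suite)
```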

Comments in the code also help you learn how to use each function and how tensor sizes change.

We use our own training pipeline, which provides many customized functionalities. It is modular, and it is easy to add or modify any part of the training pipeline.

It lives in another repo, common_trainer. You can also refer to the doc for more information.

Thanks to the very powerful nerfstudio viewer, we have adapted arcnerf to it, and it can easily show the training and eval results of models from this project.

To use it in training, you can follow train_cfgs to add the viewer confs in viewer_cfg. You can then start training as usual by `python train.py --configs configs/path_to_config`.

After training, you can use the online viewer to visualize the result. You can call `python tools/vis_ns_viewer.py --configs configs/path_to_config --model_pt path_to_model` to visualize the dataset and rendering outputs. You can also visualize only the fg_model by setting `--viewer.show_fg_only True`.

For how to use the viewer, please visit their website at doc.

You can also add inference cameras using their render panel, which exports a json file. You can then set `inference.render.json_path` to the file location, and our pipeline will render the video along that customized camera path.
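Options such as `viewer.show_fg_only` and `inference.render.json_path` are dotted keys into a nested config. How ArcNerf parses them is not shown here, so the following is only a generic sketch of how such a key can be mapped onto a nested dict; the function name `set_by_dotted_key` and the path `path/to/camera_path.json` are placeholders.

```python
import ast


def set_by_dotted_key(cfg: dict, dotted_key: str, raw_value: str) -> dict:
    """Write raw_value into a nested dict at e.g. 'inference.render.json_path'."""
    try:
        value = ast.literal_eval(raw_value)   # 'True' -> True, '0.5' -> 0.5
    except (ValueError, SyntaxError):
        value = raw_value                     # keep plain strings such as paths
    node = cfg
    *parents, leaf = dotted_key.split(".")
    for key in parents:
        node = node.setdefault(key, {})       # create intermediate levels on demand
    node[leaf] = value
    return cfg


cfg = {"viewer": {"show_fg_only": False}}
set_by_dotted_key(cfg, "viewer.show_fg_only", "True")
set_by_dotted_key(cfg, "inference.render.json_path", "path/to/camera_path.json")
print(cfg)
```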

We may make the viewer more compatible with this project in the future, for example by adding more functions for object-level rendering or manipulation. Thanks again to the authors of nerfstudio!

Check LICENSE.

Please see Citation. Thanks to those amazing projects.

If you find this project useful, please consider citing:

@misc{arcnerf,
  author={Yue Luo and Yan-Pei Cao},
  title={arcnerf: nerf-based object/scene rendering and extraction framework},
  url={https://github.com/TencentARC/arcnerf/},
  year={2022},
}

You can contact the author by [email protected] if you need any help.