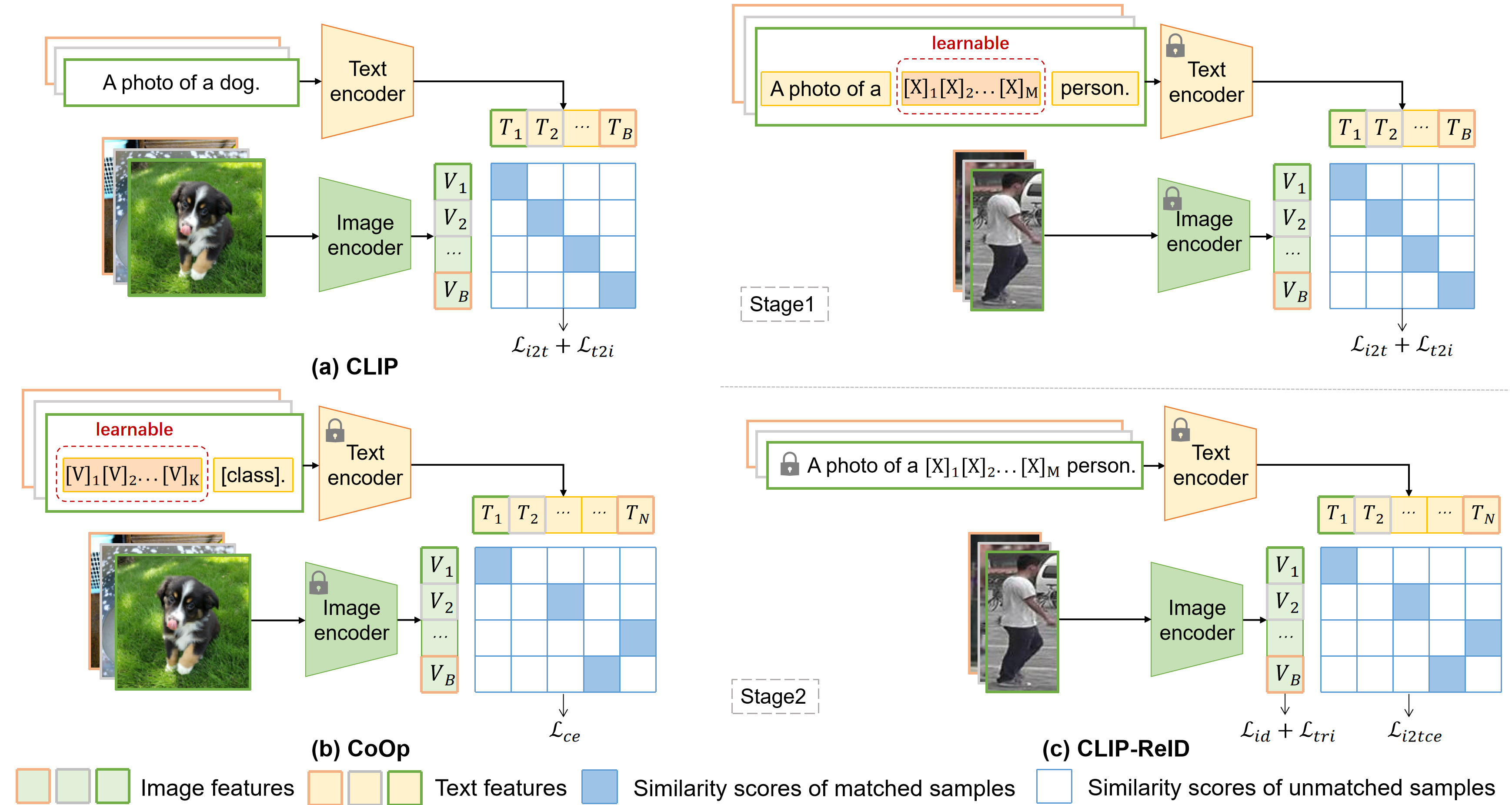

CLIP-ReID: Exploiting Vision-Language Model for Image Re-Identification without Concrete Text Labels [pdf]

conda create -n clipreid python=3.8

conda activate clipreid

conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=10.2 -c pytorch

pip install yacs

pip install timm

pip install scikit-image

pip install tqdm

pip install ftfy

pip install regex
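After installation, a quick import check can confirm that nothing is missing. The sketch below is not part of the repository: `missing_modules` is a hypothetical helper, and the import names (for example `skimage` for scikit-image) are assumptions about how the packages above are imported.

```python
import importlib.util

# Import names assumed for the packages installed above
# (scikit-image is assumed to be imported as `skimage`).
REQUIRED = ["torch", "torchvision", "yacs", "timm", "skimage", "tqdm", "ftfy", "regex"]


def missing_modules(names):
    """Return the top-level module names that cannot be found on the import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED)
    print("missing:", ", ".join(missing) if missing else "none")
```

Run it inside the activated `clipreid` environment; any name it reports can be installed with the matching command above.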

Download the datasets (Market-1501, MSMT17, DukeMTMC-reID, Occluded-Duke, VehicleID, VeRi-776), and then unzip them to your_dataset_dir.

For example, to run the CNN-based CLIP-ReID baseline on Market-1501, modify the bottom of configs/person/cnn_base.yml to:

DATASETS:
  NAMES: ('market1501')
  ROOT_DIR: ('your_dataset_dir')
OUTPUT_DIR: 'your_output_dir'

Then run:

CUDA_VISIBLE_DEVICES=0 python train.py --config_file configs/person/cnn_base.yml

To run the ViT-based CLIP-ReID on MSMT17, modify the bottom of configs/person/vit_clipreid.yml to:

DATASETS:
  NAMES: ('msmt17')
  ROOT_DIR: ('your_dataset_dir')
OUTPUT_DIR: 'your_output_dir'

Then run:

CUDA_VISIBLE_DEVICES=0 python train_clipreid.py --config_file configs/person/vit_clipreid.yml

To run the ViT-based CLIP-ReID+SIE+OLP on MSMT17, pass the extra options on the command line:

CUDA_VISIBLE_DEVICES=0 python train_clipreid.py --config_file configs/person/vit_clipreid.yml MODEL.SIE_CAMERA True MODEL.SIE_COE 1.0 MODEL.STRIDE_SIZE '[12, 12]'
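When launching several runs with different options, it can help to assemble the command programmatically. The following is a minimal sketch, not part of the repository: `build_train_command` is a hypothetical helper that only reproduces the `KEY VALUE` override pattern shown in the command above.

```python
import shlex


def build_train_command(script, config_file, overrides=None, gpu="0"):
    """Build (env, argv) for a run, appending overrides as KEY VALUE pairs."""
    argv = ["python", script, "--config_file", config_file]
    for key, value in (overrides or {}).items():
        argv += [key, str(value)]
    env = {"CUDA_VISIBLE_DEVICES": gpu}
    return env, argv


env, argv = build_train_command(
    "train_clipreid.py",
    "configs/person/vit_clipreid.yml",
    {
        "MODEL.SIE_CAMERA": True,
        "MODEL.SIE_COE": 1.0,
        "MODEL.STRIDE_SIZE": "[12, 12]",
    },
)
# Print a shell-quoted form of the command for inspection.
print(" ".join(f"{k}={v}" for k, v in env.items()), shlex.join(argv))
```

To actually launch the run, the list can be passed to `subprocess.run(argv, env={**os.environ, **env})`; passing a list avoids shell quoting problems with values such as `[12, 12]`.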

For example, to test the ViT-based CLIP-ReID on MSMT17, run:

CUDA_VISIBLE_DEVICES=0 python test_clipreid.py --config_file configs/person/vit_clipreid.yml TEST.WEIGHT 'your_trained_checkpoints_path/ViT-B-16_60.pth'
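If an output directory holds checkpoints from several epochs, a small helper can pick the one to pass as `TEST.WEIGHT`. This is only a sketch: `latest_checkpoint` is hypothetical, and the `<name>_<epoch>.pth` naming pattern is an assumption inferred from the example file name `ViT-B-16_60.pth` above.

```python
import re
from pathlib import Path

# Assumed naming pattern, inferred from 'ViT-B-16_60.pth': <name>_<epoch>.pth
CKPT_PATTERN = re.compile(r"^(?P<name>.+)_(?P<epoch>\d+)\.pth$")


def latest_checkpoint(filenames):
    """Return the entry with the highest epoch suffix, or None if none match."""
    best, best_epoch = None, -1
    for filename in filenames:
        match = CKPT_PATTERN.match(Path(filename).name)
        if match and int(match.group("epoch")) > best_epoch:
            best, best_epoch = filename, int(match.group("epoch"))
    return best
```

For instance, `latest_checkpoint(str(p) for p in Path("your_trained_checkpoints_path").iterdir())` would return the path with the largest epoch number under that assumption.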

The codebase builds on TransReID, CLIP, and CoOp.

The VeRi-776 viewpoint labels are from https://github.com/Zhongdao/VehicleReIDKeyPointData.

| Datasets | MSMT17 | Market | Duke | Occ-Duke | VeRi | VehicleID |
|---|---|---|---|---|---|---|
| CNN-baseline | model \| test | model \| test | model \| test | model \| test | model \| test | model \| test |
| CNN-CLIP-ReID | model \| test | model \| test | model \| test | model \| test | model \| test | model \| test |
| ViT-baseline | model \| test | model \| test | model \| test | model \| test | model \| test | model \| test |
| ViT-CLIP-ReID | model \| test | model \| test | model \| test | model \| test | model \| test | model \| test |
| ViT-CLIP-ReID-SIE-OLP | model \| test | model \| test | model \| test | model \| test | model \| test | model \| test |

Note that all results listed above are without re-ranking.

With re-ranking, ViT-CLIP-ReID-SIE-OLP achieves 86.7% mAP and 91.1% R1 on MSMT17.
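For readers unfamiliar with the two metrics quoted here, the sketch below shows how mAP and Rank-1 (R1) are commonly computed from ranked retrieval results. It is a simplified illustration, not the repository's evaluation code: the function names are hypothetical, and the usual ReID step of discarding gallery images taken by the same camera as the query is omitted.

```python
def average_precision(matches):
    """AP for one query; `matches` is the ranked gallery as booleans (True = same ID)."""
    hits, precisions = 0, []
    for rank, is_match in enumerate(matches, start=1):
        if is_match:
            hits += 1
            precisions.append(hits / rank)  # precision at each correct hit
    return sum(precisions) / len(precisions) if precisions else 0.0


def evaluate(all_matches):
    """Return (mAP, Rank-1) over a non-empty list of per-query ranked match lists."""
    aps = [average_precision(m) for m in all_matches]
    rank1 = [1.0 if m and m[0] else 0.0 for m in all_matches]
    return sum(aps) / len(aps), sum(rank1) / len(rank1)


# Two toy queries: the first has its correct matches at ranks 1 and 3,
# the second has its only correct match at rank 2.
mean_ap, r1 = evaluate([[True, False, True], [False, True]])
print(f"mAP={mean_ap:.4f} R1={r1:.4f}")
```

Re-ranking changes the order of each ranked list before these metrics are computed, which is why the numbers with and without it differ.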

If you use this code for your research, please cite:

@article{li2022clip,
  title={CLIP-ReID: Exploiting Vision-Language Model for Image Re-Identification without Concrete Text Labels},
  author={Li, Siyuan and Sun, Li and Li, Qingli},
  journal={arXiv preprint arXiv:2211.13977},
  year={2022}
}