Your intelligent ally for effortless data retrieval across documents and seamless browsing of the web.

Connects to large language models via the Ollama server.
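As a rough illustration of what connecting through the Ollama server involves, the sketch below posts a prompt to Ollama's `/api/generate` endpoint using only the Python standard library. It assumes a local server at the default address `http://localhost:11434` and a model that has already been pulled (`llama3` here). The helper names `build_generate_request` and `generate` are hypothetical and are not taken from the Wiz Search code.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: default local Ollama address


def build_generate_request(model: str, prompt: str) -> dict:
    """Build the JSON body for Ollama's /api/generate endpoint."""
    # stream=False asks for one JSON response instead of a stream of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def generate(model: str, prompt: str, base_url: str = OLLAMA_URL) -> str:
    """Send a prompt to a running Ollama server and return the generated text."""
    body = json.dumps(build_generate_request(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        # The non-streaming reply carries the generated text under "response".
        return json.loads(response.read())["response"]
```

With the server running, `generate("llama3", "Summarize what a vector database does.")` would return the model's answer as a string. Keeping the payload builder separate from the network call makes the request shape easy to inspect without a live server.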

| Platform | Demo Link | Code Link |
|---|---|---|
| Replicate 🔄 | 🔗 Demo | 💻 Code |
| OpenAI 🧠 | 🔗 Demo | 💻 Code |

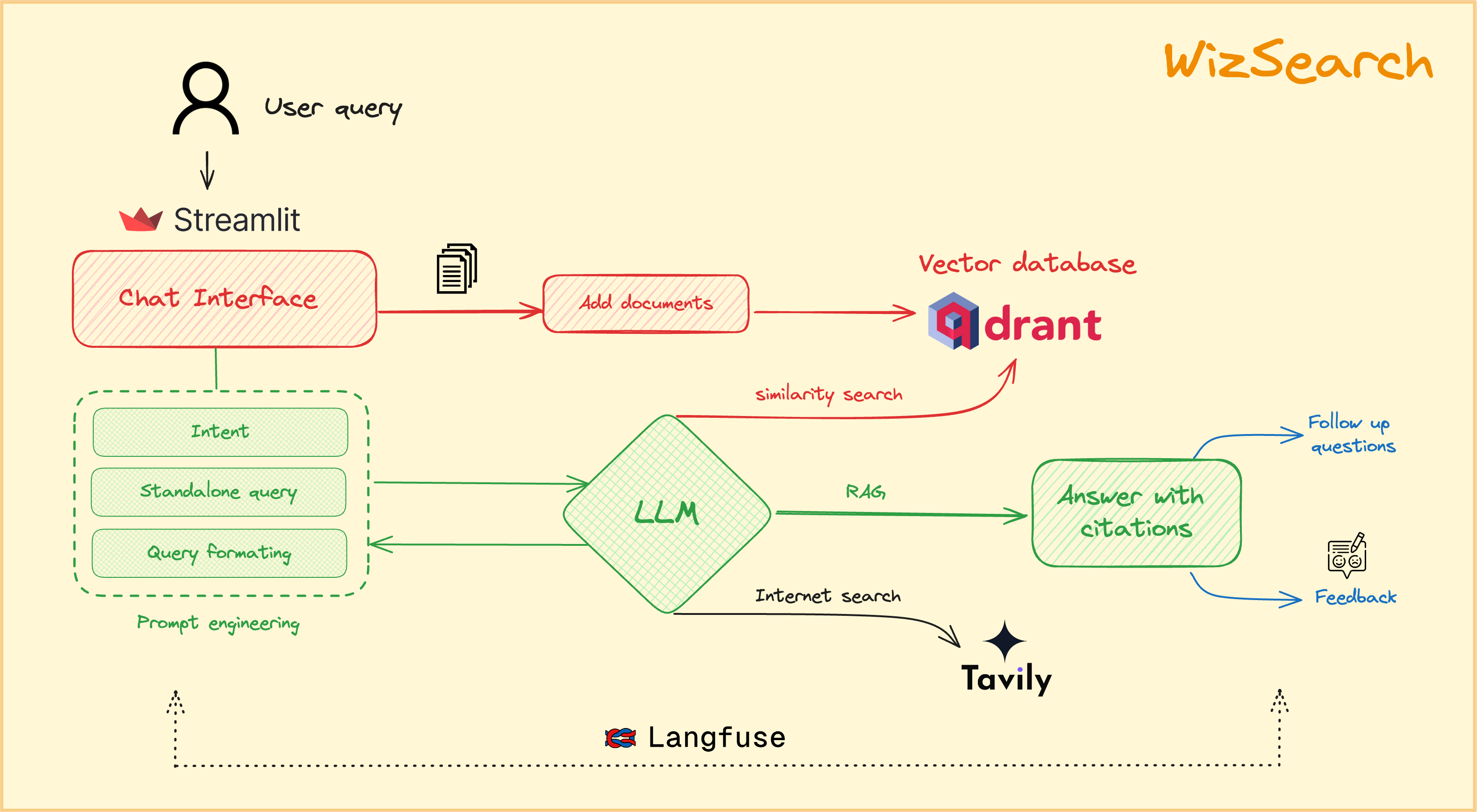

We built Wiz Search using the following components:

- LLM: Open-source models such as llama3, mistral, and LLaVA, served through Ollama, for natural language understanding and generation.

- Embeddings: BAAI/bge-small-en-v1.5 to enhance search relevance.

- Intelligent Search: Tavily for advanced search capabilities.

- Vector Databases: Qdrant for efficient data storage and retrieval.

- Observability: Langfuse for monitoring and observability.

- UI: Streamlit for creating an interactive and user-friendly interface.
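To make the retrieval part of this stack more concrete, here is a toy sketch of what embedding-based search does: documents and the query are represented as vectors, and the documents closest to the query by cosine similarity are returned. The three-dimensional vectors, the file names, and the `top_k` helper are illustrative assumptions. In Wiz Search the vectors would come from BAAI/bge-small-en-v1.5 and the nearest-neighbor lookup would be handled by Qdrant rather than a Python loop.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(
    query: list[float], documents: dict[str, list[float]], k: int = 2
) -> list[tuple[str, float]]:
    """Return the k documents most similar to the query, best match first."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in documents.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical, hand-written "embeddings" standing in for real model output.
DOCS = {
    "ollama-setup.md": [0.9, 0.1, 0.0],
    "qdrant-notes.md": [0.1, 0.9, 0.1],
    "streamlit-ui.md": [0.0, 0.2, 0.9],
}
QUERY = [0.8, 0.2, 0.1]

results = top_k(QUERY, DOCS, k=2)
```

Here `results` ranks `ollama-setup.md` first and `qdrant-notes.md` second, because the query vector points mostly along the first axis. A vector database performs the same ranking, but with an index that avoids comparing the query against every stored vector.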

- Clone the repo

  `git clone https://github.com/SSK-14/WizSearch.git`

- Install required libraries

  - Create virtual environment

    `pip3 install virtualenv`

    `python3 -m venv {your-venvname}`

    `source {your-venvname}/bin/activate`

  - Install required libraries

    `pip3 install -r requirements.txt`

  - Activate your virtual environment (needed again in each new terminal session)

    `source {your-venvname}/bin/activate`

- Set up your `secrets.toml` file

  - Copy `example.secrets.toml` into `secrets.toml` and replace the keys

- Run the app

  `streamlit run app.py`

Contributions to this project are welcome! If you find any issues or have suggestions for improvement, please open an issue or submit a pull request on the project's GitHub repository.

This project is licensed under the MIT License. Feel free to use, modify, and distribute the code as per the terms of the license.