This repository contains the code for PANet and PA-EDSR, introduced in the following paper:

Yiqun Mei, Yuchen Fan, Yulun Zhang, Jiahui Yu, Yuqian Zhou, Ding Liu, Yun Fu, Thomas S. Huang, and Humphrey Shi, "Pyramid Attention Networks for Image Restoration", [arXiv]

The code is built on EDSR (PyTorch) and RNAN, and was tested on Ubuntu 18.04 (Python 3.6, PyTorch 1.1) with Titan X, 1080Ti, and V100 GPUs.

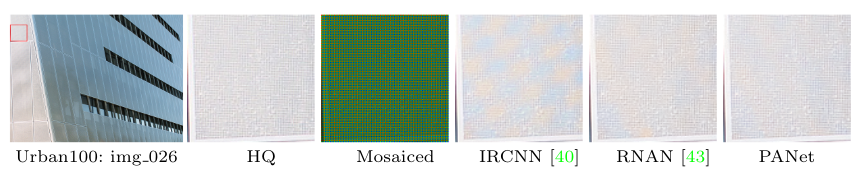

Self-similarity refers to the image prior widely used in image restoration algorithms that small but similar patterns tend to occur at different locations and scales. However, recent advanced deep convolutional neural network based methods for image restoration do not take full advantage of self-similarities by relying on self-attention neural modules that only process information at the same scale. To solve this problem, we present a novel Pyramid Attention module for image restoration, which captures long-range feature correspondences from a multi-scale feature pyramid. Inspired by the fact that corruptions, such as noise or compression artifacts, drop drastically at coarser image scales, our attention module is designed to be able to borrow clean signals from their "clean" correspondences at the coarser levels. The proposed pyramid attention module is a generic building block that can be flexibly integrated into various neural architectures. Its effectiveness is validated through extensive experiments on multiple image restoration tasks: image denoising, demosaicing, compression artifact reduction, and super resolution. Without any bells and whistles, our PANet (pyramid attention module with simple network backbones) can produce state-of-the-art results with superior accuracy and visual quality.
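The idea of attending across a multi-scale feature pyramid can be illustrated with a minimal NumPy sketch. It is not the implementation in this repository. It assumes per-pixel features instead of patches, average pooling for downscaling, integer scale factors, and a residual connection. The names `downscale`, `pyramid_attention`, and `factors` are hypothetical and chosen only for illustration.

```python
import numpy as np


def downscale(feat, factor):
    """Average-pool a (C, H, W) feature map by an integer factor (assumed downscaling)."""
    c, h, w = feat.shape
    h2, w2 = h // factor, w // factor
    feat = feat[:, :h2 * factor, :w2 * factor]
    return feat.reshape(c, h2, factor, w2, factor).mean(axis=(2, 4))


def pyramid_attention(feat, factors=(1, 2, 4)):
    """Let every pixel of `feat` attend to all pixels of a multi-scale pyramid."""
    c, h, w = feat.shape
    queries = feat.reshape(c, h * w).T                # (H*W, C)

    # Keys and values: pixels from every pyramid level, concatenated.
    keys = np.concatenate(
        [downscale(feat, f).reshape(c, -1).T for f in factors], axis=0
    )                                                 # (N, C)

    scores = queries @ keys.T / np.sqrt(c)            # (H*W, N) similarities
    scores -= scores.max(axis=1, keepdims=True)       # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)     # softmax over the whole pyramid

    out = weights @ keys                              # (H*W, C) aggregated features
    return feat + out.T.reshape(c, h, w)              # residual connection (assumption)


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 16, 16))
y = pyramid_attention(x)
print(y.shape)
```

Because the keys include coarser levels, each position can draw on down-scaled (and therefore less corrupted) counterparts of similar features, which is the intuition described above. The output keeps the input shape, so a block like this could in principle be dropped between layers of a backbone.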

For image denoising, see DN_RGB.

For demosaicing, see Demosaic.

For compression artifact reduction, see CAR.

For super resolution, see SR.

If you find the code helpful in your research or work, please cite the following papers.

@article{mei2020pyramid,
  title={Pyramid Attention Networks for Image Restoration},
  author={Mei, Yiqun and Fan, Yuchen and Zhang, Yulun and Yu, Jiahui and Zhou, Yuqian and Liu, Ding and Fu, Yun and Huang, Thomas S and Shi, Honghui},
  journal={arXiv preprint arXiv:2004.13824},
  year={2020}
}

@InProceedings{Lim_2017_CVPR_Workshops,
  author = {Lim, Bee and Son, Sanghyun and Kim, Heewon and Nah, Seungjun and Lee, Kyoung Mu},
  title = {Enhanced Deep Residual Networks for Single Image Super-Resolution},
  booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
  month = {July},
  year = {2017}
}

This code is built on EDSR (PyTorch), RNAN, and generative-inpainting-pytorch. We thank the authors for sharing their code.