This is the source code for our paper "Spatial Structure Constraints for Weakly Supervised Semantic Segmentation".

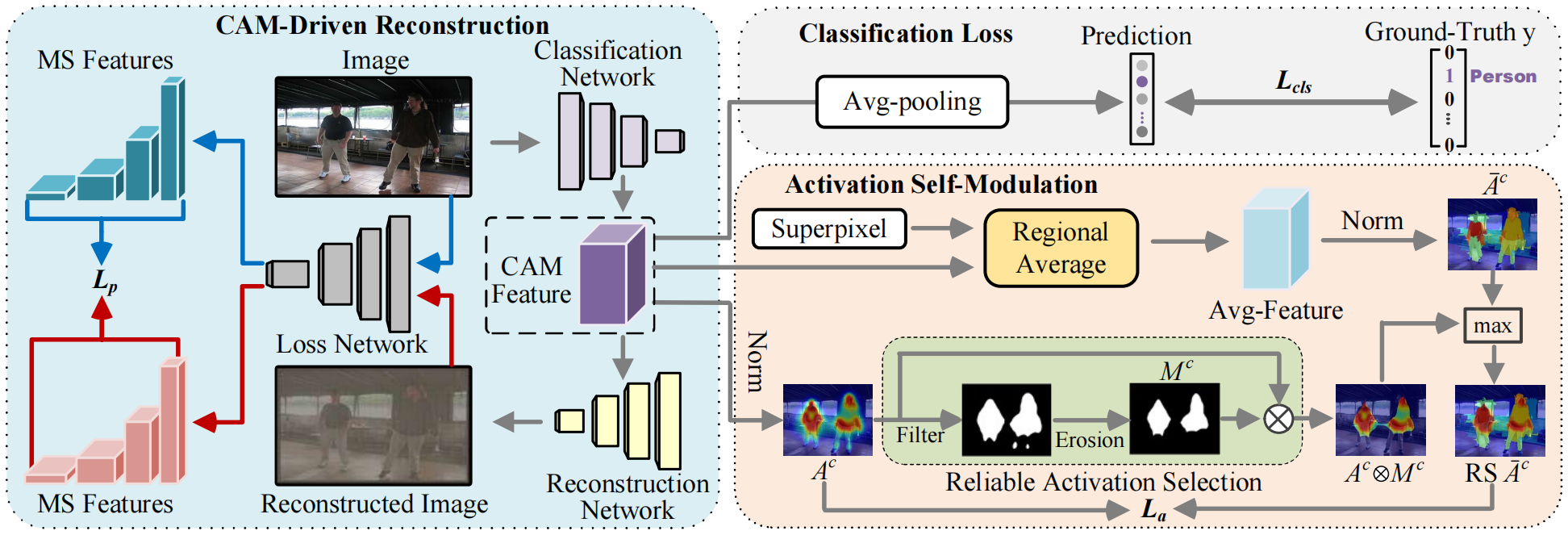

The architecture of our proposed approach is as follows

- Install PyTorch 1.7 with Python 3 and CUDA 11.3

- Clone this repo

git clone https://github.com/NUST-Machine-Intelligence-Laboratory/SSC.git

- Download PASCAL VOC 2012

- Download Superpixel

- Install Python 3.8, PyTorch 1.11.0, and the other packages listed in requirements.txt
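Because the steps in this README name specific Python and PyTorch versions, it can help to print what is actually installed before going further. The following is a minimal sketch, not part of the repository; the helper name `environment_report` is hypothetical, and it only reads package metadata without importing PyTorch.

```python
import sys
from importlib import metadata


def environment_report(packages=("torch",)):
    """Return the Python version and the installed version of each package (None if absent)."""
    report = {"python": "{}.{}.{}".format(*sys.version_info[:3])}
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # The package is not installed in this environment.
            report[name] = None
    return report


if __name__ == "__main__":
    for name, version in environment_report().items():
        print(name, version if version is not None else "not installed")
```

Compare the printed versions with the ones listed in the step you are following.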

- Download the ImageNet-pretrained model of DeepLabV2 from PyTorch. Rename the downloaded .pth file to "resnet-101_v2.pth" and put it into the directory './data/model_zoo/'. (This step only avoids directory-related errors.)
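The rename-and-move step can also be scripted. This is a small sketch under stated assumptions: the helper name `install_backbone` and the source path in the usage line are hypothetical, while the target file name and directory are the ones given in the step above.

```python
import shutil
from pathlib import Path


def install_backbone(downloaded, model_zoo="./data/model_zoo"):
    """Move a downloaded checkpoint to <model_zoo>/resnet-101_v2.pth and return the new path."""
    target_dir = Path(model_zoo)
    target_dir.mkdir(parents=True, exist_ok=True)  # create the directory if it is absent
    target = target_dir / "resnet-101_v2.pth"
    shutil.move(str(downloaded), str(target))
    return target
```

For example, `install_backbone("path/to/downloaded.pth")`, where the argument is wherever your browser or download tool saved the file.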

- Download our generated pseudo-labels sem_seg and put them into the directory './data/'. (This step only avoids directory-related errors.)

- Download our pretrained checkpoint best_ckpt.pth and put it into the directory './segmentation/'. Then test the segmentation network. To test with CRF post-processing, you need to install the CRF Python library (pydensecrf).

cd segmentation

pip install -r requirements.txt

python main.py --test --logging_tag seg_result --ckpt best_ckpt.pth

python test.py --crf --logits_dir ./data/logging/seg_result/logits_msc --mode "val"
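Since the CRF test command depends on pydensecrf, a quick availability check before running it may save a failed run. The sketch below is not part of the repository, and the helper name `crf_available` is hypothetical; it only asks Python whether the `pydensecrf` package can be found.

```python
import importlib.util


def crf_available():
    """Return True if the pydensecrf package can be located in this environment."""
    return importlib.util.find_spec("pydensecrf") is not None


if __name__ == "__main__":
    if crf_available():
        print("pydensecrf found: the --crf test step can be run.")
    else:
        print("pydensecrf not found: install it before running test.py with --crf.")
```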

- Run run_sample.py (you can either manually edit the file or specify command-line arguments) and gen_mask.py to obtain the pseudo-labels and confidence masks, and put them into the directory './segmentation/data/'. Our generated ones can also be downloaded from sem_seg and mask_irn.

python run_sample.py

python gen_mask.py
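After both scripts finish, it may be worth confirming that every pseudo-label has a confidence mask. The sketch below assumes that a pseudo-label and its mask share the same file name in the sem_seg and mask_irn directories; this is an assumption about the output naming, not something this README states, and the helper name `unmatched_labels` is hypothetical.

```python
from pathlib import Path


def unmatched_labels(sem_seg_dir, mask_dir):
    """Return sorted PNG file names found in sem_seg_dir that have no same-named file in mask_dir."""
    labels = {p.name for p in Path(sem_seg_dir).glob("*.png")}
    masks = {p.name for p in Path(mask_dir).glob("*.png")}
    return sorted(labels - masks)


if __name__ == "__main__":
    missing = unmatched_labels("./segmentation/data/sem_seg", "./segmentation/data/mask_irn")
    print(len(missing), "pseudo-labels without a confidence mask")
```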

- Put the data and pretrained model in the corresponding directories like:

    data/
        --- VOC2012/
            --- Annotations/
            --- ImageSet/
            --- JPEGImages/
            --- SegmentationClass/
            --- ...
        --- sem_seg/
            --- ****.png
            --- ****.png
        --- mask_irn/
            --- ****.png
            --- ****.png
        --- model_zoo/
            --- resnet-101_v2.pth
        --- logging/
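The layout above can be verified with a short script before starting a run. This is a minimal sketch rather than part of the repository: the entries in `EXPECTED` are taken from the tree above, while the helper name `missing_entries` and the default root `./data` are assumptions to adjust to your setup.

```python
from pathlib import Path

# Entries copied from the directory layout above, relative to the data root.
EXPECTED = [
    "VOC2012/JPEGImages",
    "VOC2012/SegmentationClass",
    "sem_seg",
    "mask_irn",
    "model_zoo/resnet-101_v2.pth",
    "logging",
]


def missing_entries(data_root, expected=EXPECTED):
    """Return the expected entries that do not exist under data_root, in listed order."""
    root = Path(data_root)
    return [rel for rel in expected if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_entries("./data")
    if missing:
        print("Missing under ./data:", ", ".join(missing))
    else:
        print("Data layout looks complete.")
```

Run it from the directory that contains `data/`; an empty result means every listed entry was found.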

- Train the segmentation network

cd segmentation

python main.py -dist --logging_tag seg_result --amp