hipSYCL is a modern SYCL implementation targeting CPUs and GPUs, with a focus on leveraging existing toolchains such as CUDA or HIP. hipSYCL currently targets the following devices:

- Any CPU via OpenMP

- NVIDIA GPUs via CUDA

  - using clang's CUDA toolchain

  - as a library for NVIDIA's nvc++ compiler (experimental)

- AMD GPUs via HIP/ROCm

- Intel GPUs via oneAPI Level Zero and SPIR-V (highly experimental and WIP!)

hipSYCL supports compiling source files into a single binary that can run on all these backends when building against appropriate clang distributions. More information about the compilation flow can be found in the documentation on the hipSYCL compilation model.

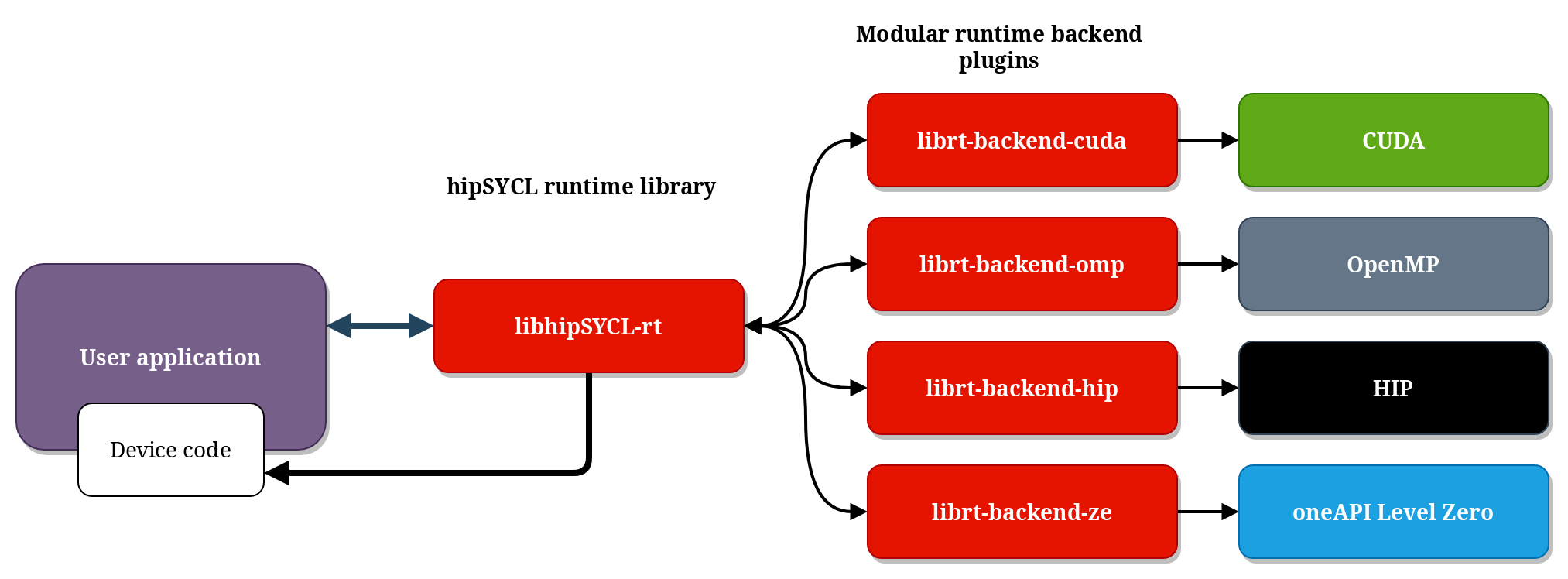

The runtime architecture of hipSYCL consists of the main library hipSYCL-rt, as well as independent, modular plugin libraries for the individual backends:

hipSYCL's compilation and runtime design allows hipSYCL to effectively aggregate multiple toolchains that are otherwise incompatible, making them accessible with a single SYCL interface.

The philosophy behind hipSYCL is to leverage such existing toolchains as much as possible. This not only brings maintenance and stability advantages, but also enables performance on par with those established toolchains by design and allows for maximum interoperability with existing compute platforms. For example, the hipSYCL CUDA and ROCm backends rely on the clang CUDA/HIP frontends, which hipSYCL augments to also understand SYCL code. This means that the hipSYCL compiler can compile not only SYCL code but also CUDA/HIP code, even if they are mixed in the same source file, making all CUDA/HIP features, such as the latest device intrinsics, available from SYCL code (see the documentation on using raw HIP/CUDA inside hipSYCL code). Additionally, vendor-optimized template libraries such as rocPRIM or CUB can be used with hipSYCL. Consequently, hipSYCL allows for highly optimized code paths in SYCL code for specific devices.

Because a SYCL program compiled with hipSYCL looks just like any other CUDA or HIP program to vendor-provided software, vendor tools such as profilers or debuggers also work well with hipSYCL.

The following image illustrates how hipSYCL fits into the wider SYCL implementation ecosystem:

While hipSYCL started its life as a hobby project, development is now led and funded by Heidelberg University. hipSYCL not only serves as a research platform, but is also a solution used in production on machines of all scales, including some of the most powerful supercomputers.

We encourage contributions and are looking forward to your pull request! Please have a look at CONTRIBUTING.md. If you need any guidance, please just open an issue and we will get back to you shortly.

If you are a student at Heidelberg University and wish to work on hipSYCL, please get in touch with us. There are various options possible and we are happy to include you in the project :-)

hipSYCL is a research project. As such, if you use hipSYCL in your research, we kindly request that you cite:

Aksel Alpay, Bálint Soproni, Holger Wünsche, and Vincent Heuveline. 2022. Exploring the possibility of a hipSYCL-based implementation of oneAPI. In International Workshop on OpenCL (IWOCL'22). Association for Computing Machinery, New York, NY, USA, Article 10, 1–12. https://doi.org/10.1145/3529538.3530005

or, depending on your focus,

Aksel Alpay and Vincent Heuveline. 2020. SYCL beyond OpenCL: The architecture, current state and future direction of hipSYCL. In Proceedings of the International Workshop on OpenCL (IWOCL ’20). Association for Computing Machinery, New York, NY, USA, Article 8, 1. DOI:https://doi.org/10.1145/3388333.3388658

(The latter is a talk and is available online. Note that some of the content in this talk is outdated by now.)

We gratefully acknowledge contributions from the community.

hipSYCL has been repeatedly shown to deliver very competitive performance compared to other SYCL implementations or proprietary solutions like CUDA. See for example:

- Sohan Lal, Aksel Alpay, Philip Salzmann, Biagio Cosenza, Nicolai Stawinoga, Peter Thoman, Thomas Fahringer, and Vincent Heuveline. 2020. SYCL-Bench: A Versatile Single-Source Benchmark Suite for Heterogeneous Computing. In Proceedings of the International Workshop on OpenCL (IWOCL ’20). Association for Computing Machinery, New York, NY, USA, Article 10, 1. DOI:https://doi.org/10.1145/3388333.3388669

- Brian Homerding and John Tramm. 2020. Evaluating the Performance of the hipSYCL Toolchain for HPC Kernels on NVIDIA V100 GPUs. In Proceedings of the International Workshop on OpenCL (IWOCL ’20). Association for Computing Machinery, New York, NY, USA, Article 16, 1–7. DOI:https://doi.org/10.1145/3388333.3388660

- Tom Deakin and Simon McIntosh-Smith. 2020. Evaluating the performance of HPC-style SYCL applications. In Proceedings of the International Workshop on OpenCL (IWOCL ’20). Association for Computing Machinery, New York, NY, USA, Article 12, 1–11. DOI:https://doi.org/10.1145/3388333.3388643

- Building hipSYCL against newer LLVM generally results in better performance for backends that are relying on LLVM.

- Unlike other SYCL implementations that may rely on kernel compilation at runtime, hipSYCL relies heavily on ahead-of-time compilation. So make sure to use appropriate optimization flags when compiling.

- For the CPU backend:

  - Don't forget that, due to hipSYCL's ahead-of-time compilation nature, you may also want to enable latest vectorization instruction sets when compiling, e.g. using `-march=native`.

  - Enable OpenMP thread pinning (e.g. `OMP_PROC_BIND=true`). hipSYCL uses asynchronous worker threads for some light-weight tasks such as garbage collection, and these additional threads can interfere with kernel execution if OpenMP threads are not bound to cores.

  - Don't use `nd_range` parallel for unless you absolutely have to, as it is difficult to map efficiently to CPUs.

    - If you don't need barriers or local memory, use `parallel_for` with `range` argument.

    - If you need local memory or barriers, scoped parallelism or hierarchical parallelism models may perform better on CPU than `parallel_for` kernels using `nd_range` argument and should be preferred. Especially scoped parallelism also works well on GPUs.

    - If you have to use `nd_range parallel_for` with barriers on CPU, the `omp.accelerated` compilation flow will most likely provide substantially better performance than the `omp.library-only` compilation target. See the documentation on compilation flows for details.
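To illustrate why kernels with barriers are harder to map to CPUs than barrier-free kernels, here is a conceptual sketch in standard C++ that needs no SYCL headers. It is not hipSYCL's implementation and uses no hipSYCL API; the function names `scale_flat` and `group_sums` are made up for this example. A barrier-free kernel becomes one flat loop over work items, whereas a kernel with local memory and a barrier has to be split at the barrier into two loops over each work group, which is one way a CPU implementation can honor the barrier.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical names throughout; this only models execution order on a
// CPU and is not how hipSYCL itself is implemented.

// Barrier-free kernel (comparable to parallel_for over a plain range):
// a single flat loop that a compiler can vectorize easily.
std::vector<int> scale_flat(const std::vector<int>& in, int factor) {
  std::vector<int> out(in.size());
  for (std::size_t i = 0; i < in.size(); ++i)
    out[i] = in[i] * factor;
  return out;
}

// Kernel with local memory and one barrier (comparable to an nd_range
// group reduction). The barrier is modeled by splitting the kernel body
// into two separate loops over the work items of each group.
std::vector<int> group_sums(const std::vector<int>& in,
                            std::size_t group_size) {
  std::vector<int> sums;
  for (std::size_t base = 0; base < in.size(); base += group_size) {
    const std::size_t n = std::min(group_size, in.size() - base);
    std::vector<int> local(n);  // stands in for local memory

    // Phase 1: every work item stores its element to local memory.
    for (std::size_t lid = 0; lid < n; ++lid)
      local[lid] = in[base + lid];

    // Barrier: phase 1 has finished for all work items of this group.

    // Phase 2: only work item 0 adds up the values in local memory.
    for (std::size_t lid = 0; lid < n; ++lid)
      if (lid == 0)
        sums.push_back(std::accumulate(local.begin(), local.end(), 0));
  }
  return sums;
}
```

The second function shows the extra structure a barrier forces onto the loop nest: the work-item loop can no longer be a single pass over the kernel body.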

When targeting the CUDA or HIP backends, hipSYCL just massages the AST slightly to get `clang -x cuda` and `clang -x hip` to accept SYCL code. hipSYCL is not involved in the actual code generation. Therefore any significant deviation in kernel performance compared to clang-compiled CUDA or clang-compiled HIP is unexpected.

As a consequence, if you compare hipSYCL to other LLVM-based compilers, please make sure to compile hipSYCL against the same LLVM version. Otherwise you would effectively be comparing the performance of two different LLVM versions. This is particularly true when comparing it to clang CUDA or clang HIP.

hipSYCL is not yet a fully conformant SYCL implementation, although many SYCL programs already work with hipSYCL.

- SYCL 2020 feature support matrix

- A (likely incomplete) list of limitations for older SYCL 1.2.1 features

- A (also incomplete) timeline showing development history

Supported hardware:

- Any CPU for which a C++17 OpenMP compiler exists

- NVIDIA CUDA GPUs. Note that clang, which hipSYCL relies on, may not always support the very latest CUDA version, which may sometimes impact support for very new hardware. See the clang documentation for more details.

- AMD GPUs that are supported by ROCm

Operating system support currently strongly focuses on Linux. On Mac, only the CPU backend is expected to work. Windows support with CPU and CUDA backends is experimental, see Using hipSYCL on Windows.

In order to compile software with hipSYCL, use `syclcc`, which automatically adds all required compiler arguments to the CUDA/HIP compiler. `syclcc` can be used like a regular compiler, i.e. you can use `syclcc -o test test.cpp` to compile your SYCL application called `test.cpp` with hipSYCL.

`syclcc` accepts both command line arguments and environment variables to configure its behavior (e.g., to select the target platform CUDA/ROCm/CPU to compile for). See `syclcc --help` for a comprehensive list of options.

When compiling with hipSYCL, you will need to specify the targets you wish to compile for using the `--hipsycl-targets="backend1:target1,target2,...;backend2:..."` command line argument, the `HIPSYCL_TARGETS` environment variable, or the corresponding CMake argument. See the documentation on using hipSYCL for details.
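To make the shape of the target specification concrete, the following sketch splits such a string into backends and their targets. `parse_targets` is a hypothetical helper written for this README, not part of hipSYCL or `syclcc`. It assumes that backends are separated by `;`, that targets are separated by `,`, and that a backend may appear without a `:` and target list.

```cpp
#include <map>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper, not part of hipSYCL: splits a specification of
// the form "backend1:target1,target2;backend2:..." into a map from
// backend name to its list of targets.
std::map<std::string, std::vector<std::string>>
parse_targets(const std::string& spec) {
  std::map<std::string, std::vector<std::string>> result;
  std::istringstream backends(spec);
  std::string entry;
  while (std::getline(backends, entry, ';')) {
    if (entry.empty()) continue;  // tolerate a stray ';'
    const auto colon = entry.find(':');
    // substr(0, npos) yields the whole entry if there is no ':'.
    auto& targets = result[entry.substr(0, colon)];
    if (colon == std::string::npos)
      continue;  // assumption: a backend may come without targets
    std::istringstream list(entry.substr(colon + 1));
    std::string target;
    while (std::getline(list, target, ','))
      if (!target.empty()) targets.push_back(target);
  }
  return result;
}
```

For instance, `parse_targets("cuda:sm_70,sm_80;hip:gfx906")` yields two entries: `cuda` with the targets `sm_70` and `sm_80`, and `hip` with the target `gfx906`. The architecture names here are only illustrative.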

Instructions for using hipSYCL in CMake projects can also be found in the documentation on using hipSYCL.

- hipSYCL design and architecture

- hipSYCL runtime specification

- hipSYCL compilation model

- How to use raw HIP/CUDA inside hipSYCL code to create optimized code paths

- A simple SYCL example code for testing purposes can be found here.

- SYCL Extensions implemented in hipSYCL

- Macros used by hipSYCL

- Environment variables supported by hipSYCL