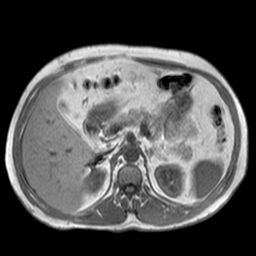

PRDNet: Medical image segmentation based on parallel residual and dilated network.

The code in train.py is borrowed from Multi-Scale-Attention; our contributions are prdnet.py, test.py, and inference.py. Visdom is used to visualize the training process.

Our model is tested in the following environment:

- Python 3.5 (Anaconda3)

- PyTorch 1.2.0

- torchvision 0.4.0

- CUDA 10.0

- GPU: NVIDIA GTX 1080 Ti (11 GB)

- Visdom
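A small check script can confirm that an installation matches the environment listed above. This is a minimal sketch, not part of the repository. The `EXPECTED` table simply mirrors the list, versions are read from each package's `__version__` attribute (an assumption that holds for PyTorch and torchvision), and a prefix match lets local build suffixes such as `+cu100` pass. It avoids f-strings so it also runs on Python 3.5.

```python
import importlib
import sys

# Versions from this README's test environment; None means "any version".
EXPECTED = {"torch": "1.2.0", "torchvision": "0.4.0", "visdom": None}


def installed_version(name):
    """Return a module's __version__ ('unknown' if absent), or None if it cannot be imported."""
    try:
        module = importlib.import_module(name)
    except ImportError:
        return None
    return str(getattr(module, "__version__", "unknown"))


def check_environment(expected):
    """Map each package name to (installed, expected, ok)."""
    report = {}
    for name, want in expected.items():
        have = installed_version(name)
        # Prefix match so local build suffixes (e.g. "1.2.0+cu100") still pass.
        ok = have is not None and (want is None or have.startswith(want))
        report[name] = (have, want, ok)
    return report


if __name__ == "__main__":
    print("Python {}.{}.{}".format(*sys.version_info[:3]))
    for name, (have, want, ok) in sorted(check_environment(EXPECTED).items()):
        print("{:<12} installed={} expected={} -> {}".format(
            name, have or "missing", want or "any", "OK" if ok else "MISMATCH"))
    try:
        import torch
        print("CUDA available: {} (built for CUDA {})".format(
            torch.cuda.is_available(), torch.version.cuda))
    except ImportError:
        print("PyTorch is not installed, skipping the CUDA check")
```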

- You can download our pretrained model `Best_MR2.pth` from BaiduNetDisk. The extraction code is `PRDN`.

- Put the downloaded pretrained model in the `./model` folder.

- Run `python inference.py` for a quick demo.
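Before running the demo, it can help to confirm that the checkpoint is where the steps above put it. The helper below is hypothetical and not part of the repository. It assumes `inference.py` is launched from the repository root, so that `./model/Best_MR2.pth` resolves, and it only calls `torch.load` (with `map_location="cpu"`) once the file is present. The README does not say whether the file stores a `state_dict` or a whole pickled model; in the latter case, `prdnet.py` must be importable for loading to succeed.

```python
from pathlib import Path

# Assumed location: the README asks for the checkpoint in ./model, relative to
# the directory from which inference.py is run (assumed to be the repo root).
CHECKPOINT = Path("model") / "Best_MR2.pth"


def checkpoint_ready(path):
    """Return True if the checkpoint file exists and is non-empty."""
    path = Path(path)
    return path.is_file() and path.stat().st_size > 0


def load_checkpoint(path):
    """Deserialize the checkpoint on the CPU (requires PyTorch)."""
    import torch  # imported lazily so checkpoint_ready() works without PyTorch
    return torch.load(str(path), map_location="cpu")


if __name__ == "__main__":
    if checkpoint_ready(CHECKPOINT):
        obj = load_checkpoint(CHECKPOINT)
        print("Loaded {} ({})".format(CHECKPOINT, type(obj).__name__))
    else:
        print("Missing {}: download Best_MR2.pth and put it in ./model".format(CHECKPOINT))
```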

- The CHAOS dataset can be downloaded from its official website.

- For preprocessing the original dataset, refer to "prepare your data".

- Once you have split the data into training, validation, and test sets, place the ground truth of the validation set and its corresponding DCM-format images in the corresponding folders.

- Our own split (ground truth and the corresponding DCM-format images) is provided in the "Data_3D" folder. You can replace its contents with your own.

- Run `python train.py`.
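The README leaves the division into training, validation, and test sets to you. One reasonable approach is to split at the patient level, so all slices of one scan land in a single subset and validation scores are not inflated by near-duplicate neighbouring slices seen during training. This is a minimal sketch under stated assumptions, not the authors' procedure. It assumes each patient's data sits in its own folder whose name can serve as an ID, and the default fractions and seed are hypothetical.

```python
import random


def split_patients(patient_ids, val_frac=0.2, test_frac=0.2, seed=0):
    """Split patient IDs into disjoint (train, val, test) lists, reproducibly."""
    if val_frac < 0 or test_frac < 0 or val_frac + test_frac >= 1:
        raise ValueError("fractions must be non-negative and sum to less than 1")
    ids = sorted(patient_ids)         # fixed starting order, independent of input order
    random.Random(seed).shuffle(ids)  # seeded shuffle, same result on every run
    n_val = int(round(len(ids) * val_frac))
    n_test = int(round(len(ids) * test_frac))
    val = ids[:n_val]
    test = ids[n_val:n_val + n_test]
    train = ids[n_val + n_test:]
    return train, val, test


if __name__ == "__main__":
    # Hypothetical patient folder names.
    ids = ["patient_{:02d}".format(i) for i in range(20)]
    train, val, test = split_patients(ids)
    print("train:", train)
    print("val:  ", val)
    print("test: ", test)
```

Splitting IDs rather than individual files keeps the sketch independent of the folder layout inside each patient directory.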

- Prepare the test set as described in the training steps above.

- Run `python test.py`.
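This README does not document which metrics `test.py` reports. For readers who want to sanity-check predictions independently, the Dice similarity coefficient, 2|P ∩ T| / (|P| + |T|), is a standard overlap measure for segmentation. The sketch below is illustrative only and is not the repository's evaluation code. It takes predicted and ground-truth label maps flattened into equal-length sequences of integer labels, and the label IDs in the usage lines are hypothetical.

```python
def dice_coefficient(pred, target):
    """Dice = 2*|P and T| / (|P| + |T|) for two binary masks of equal length."""
    if len(pred) != len(target):
        raise ValueError("pred and target must contain the same number of pixels")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        # Both masks are empty: count this as perfect agreement (a common convention).
        return 1.0
    return 2.0 * intersection / total


def per_class_dice(pred_labels, target_labels, classes):
    """Binarize each label map per class ID and return {class_id: Dice}."""
    return {
        c: dice_coefficient([p == c for p in pred_labels], [t == c for t in target_labels])
        for c in classes
    }


if __name__ == "__main__":
    # Hypothetical 2x2 label maps, flattened row by row; 0 is background.
    pred = [0, 1, 2, 2]
    target = [0, 1, 2, 1]
    print(per_class_dice(pred, target, classes=[1, 2]))
```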

If our work is useful for your research, please consider citing it:

@article{PRDNet,
  title = "{PRDN}et: {M}edical image segmentation based on parallel residual and dilated network",
  journal = "Measurement",
  year = "2020",
  issn = "0263-2241",
  doi = "10.1016/j.measurement.2020.108661",
  url = "https://www.sciencedirect.com/science/article/pii/S0263224120311738",
  author = "Haojie Guo and Dedong Yang",
}