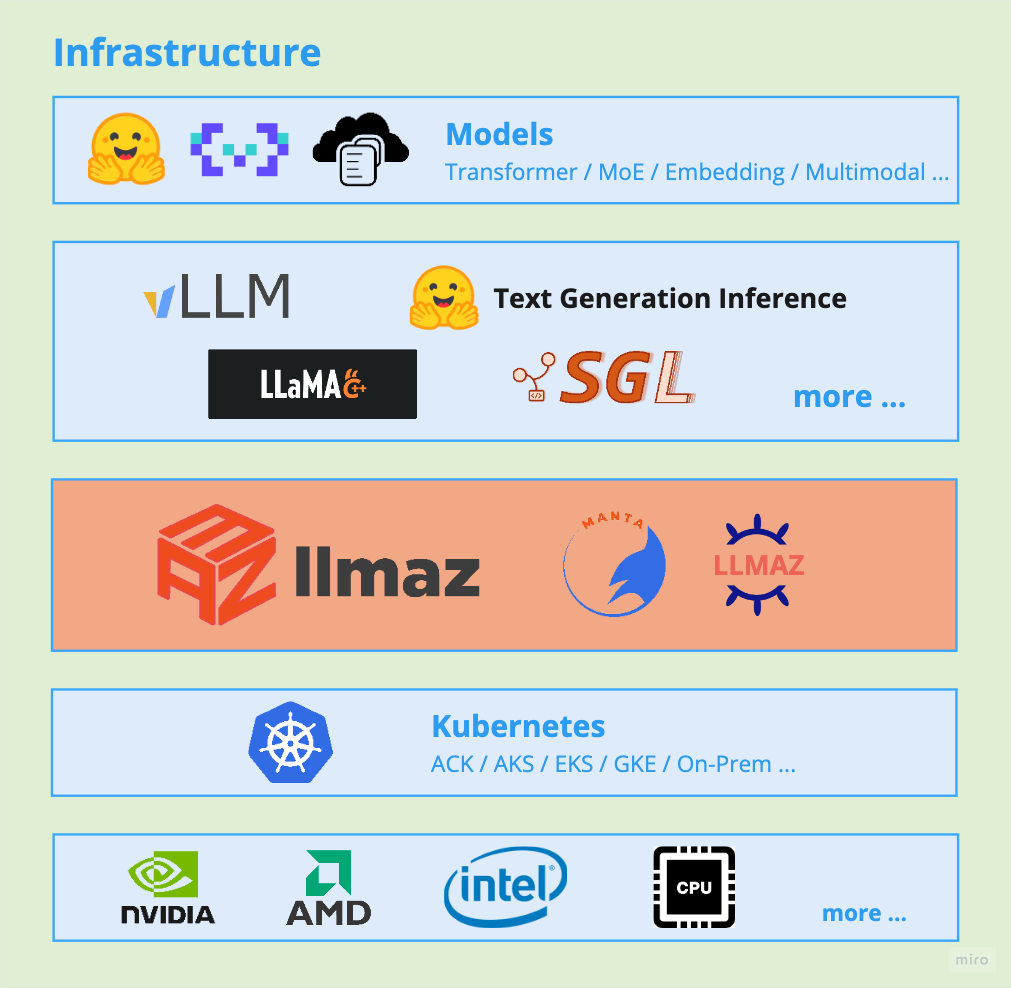

llmaz (pronounced /lima:z/), aims to provide a Production-Ready inference platform for large language models on Kubernetes. It closely integrates with the state-of-the-art inference backends to bring the leading-edge researches to cloud.

🌱 llmaz is alpha now, so API may change before graduating to Beta.

- Easy of Use: People can quick deploy a LLM service with minimal configurations.

- Broad Backends Support: llmaz supports a wide range of advanced inference backends for different scenarios, like vLLM, Text-Generation-Inference, SGLang, llama.cpp. Find the full list of supported backends here.

- Model Distribution: Out-of-the-box model cache system with Manta.

- Accelerator Fungibility: llmaz supports serving the same LLM with various accelerators to optimize cost and performance.

- SOTA Inference: llmaz supports the latest cutting-edge researches like Speculative Decoding or Splitwise(WIP) to run on Kubernetes.

- Various Model Providers: llmaz supports a wide range of model providers, such as HuggingFace, ModelScope, ObjectStores. llmaz will automatically handle the model loading, requiring no effort from users.

- Multi-hosts Support: llmaz supports both single-host and multi-hosts scenarios with LWS from day 0.

- Scaling Efficiency (WIP): llmaz works smoothly with autoscaling components like Cluster-Autoscaler or Karpenter to meet elastic demands.

Read the Installation for guidance.

Here's a toy example for deploying facebook/opt-125m, all you need to do

is to apply a Model and a Playground.

If you're running on CPUs, you can refer to llama.cpp, or more examples here.

Note: if your model needs Huggingface token for weight downloads, please run

kubectl create secret generic modelhub-secret --from-literal=HF_TOKEN=<your token>ahead.

apiVersion: llmaz.io/v1alpha1

kind: OpenModel

metadata:

name: opt-125m

spec:

familyName: opt

source:

modelHub:

modelID: facebook/opt-125m

inferenceFlavors:

- name: t4 # GPU type

requests:

nvidia.com/gpu: 1apiVersion: inference.llmaz.io/v1alpha1

kind: Playground

metadata:

name: opt-125m

spec:

replicas: 1

modelClaim:

modelName: opt-125mkubectl port-forward pod/opt-125m-0 8080:8080curl https://localhost:8080/v1/modelscurl https://localhost:8080/v1/completions \

-H "Content-Type: application/json" \

-d '{

"model": "opt-125m",

"prompt": "San Francisco is a",

"max_tokens": 10,

"temperature": 0

}'If you want to learn more about this project, please refer to develop.md.

- Gateway support for traffic routing

- Metrics support

- Serverless support for cloud-agnostic users

- CLI tool support

- Model training, fine tuning in the long-term

Join us for more discussions:

- Slack Channel: #llmaz

All kinds of contributions are welcomed ! Please following CONTRIBUTING.md.