This example provides the supporting files for the blog post Integrating the Elastic Stack with ArcSight SIEM - Part 3.

This provides a working example of scaling an ArcSight-Elasticsearch architecture. A full architectural description can be found in the above blog post.

This example has been tested with the following versions:

- Elasticsearch 5.6

- Logstash 5.6

- Kibana 5.6

- Kafka 0.10.1.0

- Follow the Installation & Setup Guide to install and test the Elastic Stack (you can skip this step if you already have a working installation of the Elastic Stack).

- Run Elasticsearch and Kibana:

  `<path_to_elasticsearch_root_dir>/bin/elasticsearch`

  `<path_to_kibana_root_dir>/bin/kibana`

- Check that Elasticsearch and Kibana are up and running:

  - Open `localhost:9200` in a web browser -- it should return status code 200.

  - Open `localhost:5601` in a web browser -- it should display the Kibana UI.

  Note: By default, Elasticsearch runs on port 9200 and Kibana runs on port 5601. If you changed the default ports, change the above URLs to use the appropriate ports.

- Download and install Logstash as described here. Do not start Logstash.
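The check that Elasticsearch and Kibana are up can also be scripted. The following is a minimal sketch, assuming `curl` is installed and the default ports are in use. The function names `service_url` and `check_status` are illustrative and not part of this example's files.

```shell
# Hypothetical helpers for checking that Elasticsearch and Kibana respond.

# Build an HTTP URL from a host ($1) and a port ($2).
service_url() {
  printf 'http://%s:%s' "$1" "$2"
}

# Print the HTTP status code returned by the URL in $1.
# curl prints 000 when the service cannot be reached.
check_status() {
  curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$1"
}

# Usage (run manually once both services are started):
#   check_status "$(service_url localhost 9200)"   # Elasticsearch
#   check_status "$(service_url localhost 5601)"   # Kibana
```

Elasticsearch should report 200. Kibana may answer the root URL with a redirect status rather than 200, so any response other than 000 indicates that it is listening.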

A docker-compose.yml file is provided to create an instance of the Elastic Stack (Elasticsearch and Kibana). This assumes:

- You have Docker Engine installed.

- Your host meets the prerequisites.

- If you are on Linux, docker-compose is installed.
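Under the assumptions above, bringing the stack up might look like the following sketch. It assumes the commands are run from the directory that contains the provided docker-compose.yml. The helper names `require_cmd` and `start_stack` are hypothetical.

```shell
# Return non-zero (with a message on stderr) if the command $1 is not on PATH.
require_cmd() {
  command -v "$1" >/dev/null 2>&1 || { echo "missing: $1" >&2; return 1; }
}

# Check the Docker prerequisites, then start the services in the background.
# Assumption: the current directory contains docker-compose.yml.
start_stack() {
  require_cmd docker && require_cmd docker-compose && docker-compose up -d
}

# Usage:
#   start_stack
```

Checking for the tools first gives a clearer error than a failed `docker-compose` call when a prerequisite is missing.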

Additionally, a Docker file is provided for ingesting CEF data with Logstash. This Logstash instance reads data from Kafka.

The following assumes you have a working Kafka installation. For those using Docker, a Dockerfile for Kafka is provided as part of the example here.

If you are using the Docker file described above, the following steps can be ignored.

Download the following files to the root installation directory of Logstash:

- `logstash/pipeline/logstash.conf` - Logstash config for ingesting CEF data into Elasticsearch

- `logstash/pipeline/cef_template.json` - CEF template for custom mapping of fields

Unfortunately, GitHub does not provide a convenient one-click option to download the entire contents of a subfolder in a repo. Use the sample commands below to download the required files to a local directory:

wget https://raw.githubusercontent.com/elastic/examples/master/Security%20Analytics/cef_with_kafka/logstash/pipeline/logstash.conf

wget https://raw.githubusercontent.com/elastic/examples/master/Security%20Analytics/cef_with_kafka/logstash/pipeline/cef_template.json

Start Logstash from the command line as described here, using the configuration file downloaded above.
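As a rough sketch of that step, the function below starts Logstash with the downloaded pipeline file using the `-f` option. The function name `start_logstash` and the example paths are assumptions for illustration only.

```shell
# Start Logstash with a given pipeline config.
# $1: Logstash root installation directory
# $2: path to the downloaded logstash.conf
start_logstash() {
  if [ ! -f "$2" ]; then
    echo "config file not found: $2" >&2
    return 1
  fi
  "$1/bin/logstash" -f "$2"
}

# Usage (paths are placeholders):
#   start_logstash /path/to/logstash /path/to/logstash/logstash.conf
```

Failing early when the config file is missing avoids waiting for Logstash to start only to report the same problem.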

- Run the command `<installdir>\current\bin\arcsight agentsetup`

- Select `Yes` to start the wizard mode

- Select `I want to add/remove/modify ArcSight Manager destinations`

- Select `add new destination`

- Select `Event Broker (CEF Kafka)`

- Add the information of the Kafka server and port you used in the docker-compose file for the environment variables `KAFKA_ADVERTISED_HOST_NAME` and `KAFKA_ADVERTISED_PORT`

- Add the topic name `cef`
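To sanity-check the path from Kafka to Logstash independently of the connector, one option is to publish a hand-written CEF event to the `cef` topic. The sketch below assumes the standard `kafka-console-producer.sh` script shipped with Kafka is available and that the Logstash pipeline decodes CEF from this topic. The vendor, product, and field values are made up, and the function names are hypothetical.

```shell
# Format a CEF version 0 line from seven positional arguments:
# vendor, product, version, signature id, name, severity, extension.
cef_event() {
  printf 'CEF:0|%s|%s|%s|%s|%s|%s|%s\n' "$1" "$2" "$3" "$4" "$5" "$6" "$7"
}

# Publish one made-up test event to the cef topic.
# $1: Kafka bin directory, $2: broker address as host:port
send_test_event() {
  cef_event 'Example' 'TestFW' '1.0' '100' 'Test event' '5' \
    'src=10.0.0.1 dst=10.0.0.2 spt=1232 dpt=80' \
    | "$1/kafka-console-producer.sh" --broker-list "$2" --topic cef
}

# Usage (placeholders for your own installation):
#   send_test_event /path/to/kafka/bin localhost:9092
```

The broker address should match the host and port configured for the connector destination above.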

Download the provided dashboard.json file, e.g.:

wget https://raw.githubusercontent.com/elastic/examples/master/Security%20Analytics/cef_with_kafka/dashboard.json

- Access Kibana by going to `https://localhost:5601` in a web browser

- Connect Kibana to the `cef-*` index in Elasticsearch

  - Click the Management tab >> Index Patterns tab >> Add New. Specify `cef-*` as the index pattern name and click Create to define the index pattern with the field @timestamp

- Load the sample dashboard into Kibana

  - Click the Management tab >> Saved Objects tab >> Import, and select `dashboard.json`

- Open the dashboard

  - Click on the Dashboard tab and open the `FW-Dashboard` dashboard
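If the index pattern cannot be created or the dashboard stays empty, it may help to confirm that events have reached Elasticsearch. The following sketch lists indices matching `cef-*` through the `_cat/indices` API. It assumes `curl` is installed and Elasticsearch is reachable without authentication. The function names are illustrative.

```shell
# Build the _cat/indices URL for an Elasticsearch address ($1, host:port)
# and an index pattern ($2).
cat_indices_url() {
  printf 'http://%s/_cat/indices/%s?v' "$1" "$2"
}

# List the indices matching cef-* (defaults to localhost:9200).
list_cef_indices() {
  curl -s "$(cat_indices_url "${1:-localhost:9200}" 'cef-*')"
}

# Usage:
#   list_cef_indices
#   list_cef_indices my-es-host:9200
```

An empty listing suggests that no CEF events have been indexed yet, in which case the Kafka and Logstash steps are worth rechecking first.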

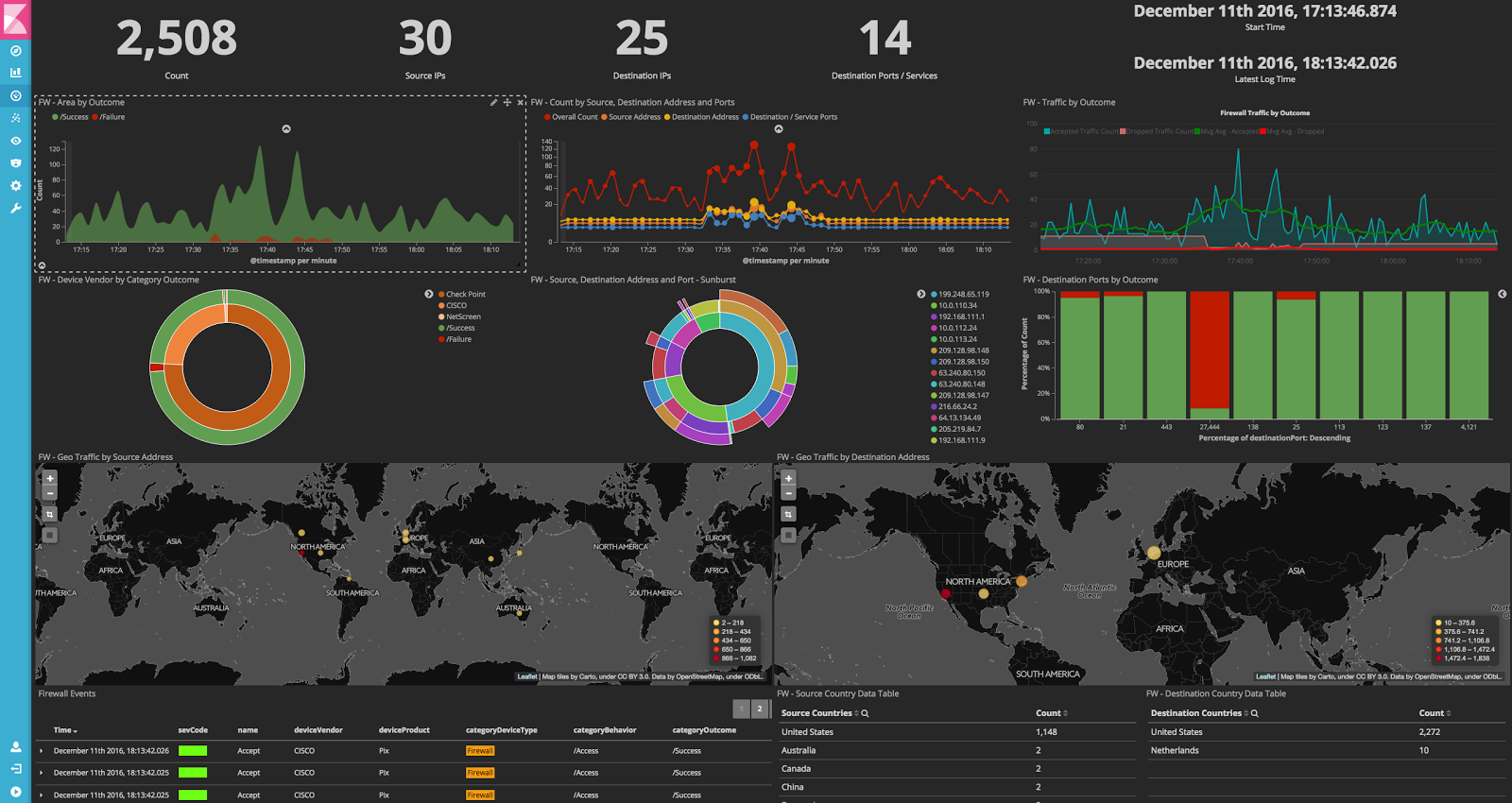

Voila! You should see the following dashboards. Enjoy!

If you found this example helpful and would like to see more such Getting Started examples for other standard formats, we would love to hear from you. If you would like to contribute examples to this repo, we'd love that too!