🔥 Nov 24: pyautogen v0.2 is released with many updates and new features compared to v0.1.1. It switches to using openai-python v1. Please read the migration guide.

🔥 Nov 11: OpenAI's Assistants are available in AutoGen and interoperable with other AutoGen agents! Check out our blogpost for details and examples.

🔥 Nov 8: AutoGen is selected into Open100: Top 100 Open Source achievements 35 days after spinoff.

🔥 Nov 6: AutoGen is mentioned by Satya Nadella in a fireside chat around 13:20.

🔥 Nov 1: AutoGen is the top trending repo on GitHub in October 2023.

🎉 Oct 03: AutoGen spins off from FLAML on GitHub and has a major paper update.

🎉 Aug 16: Paper about AutoGen on arXiv. 📚 Cite paper.

🎉 Mar 29: AutoGen is first created in FLAML.

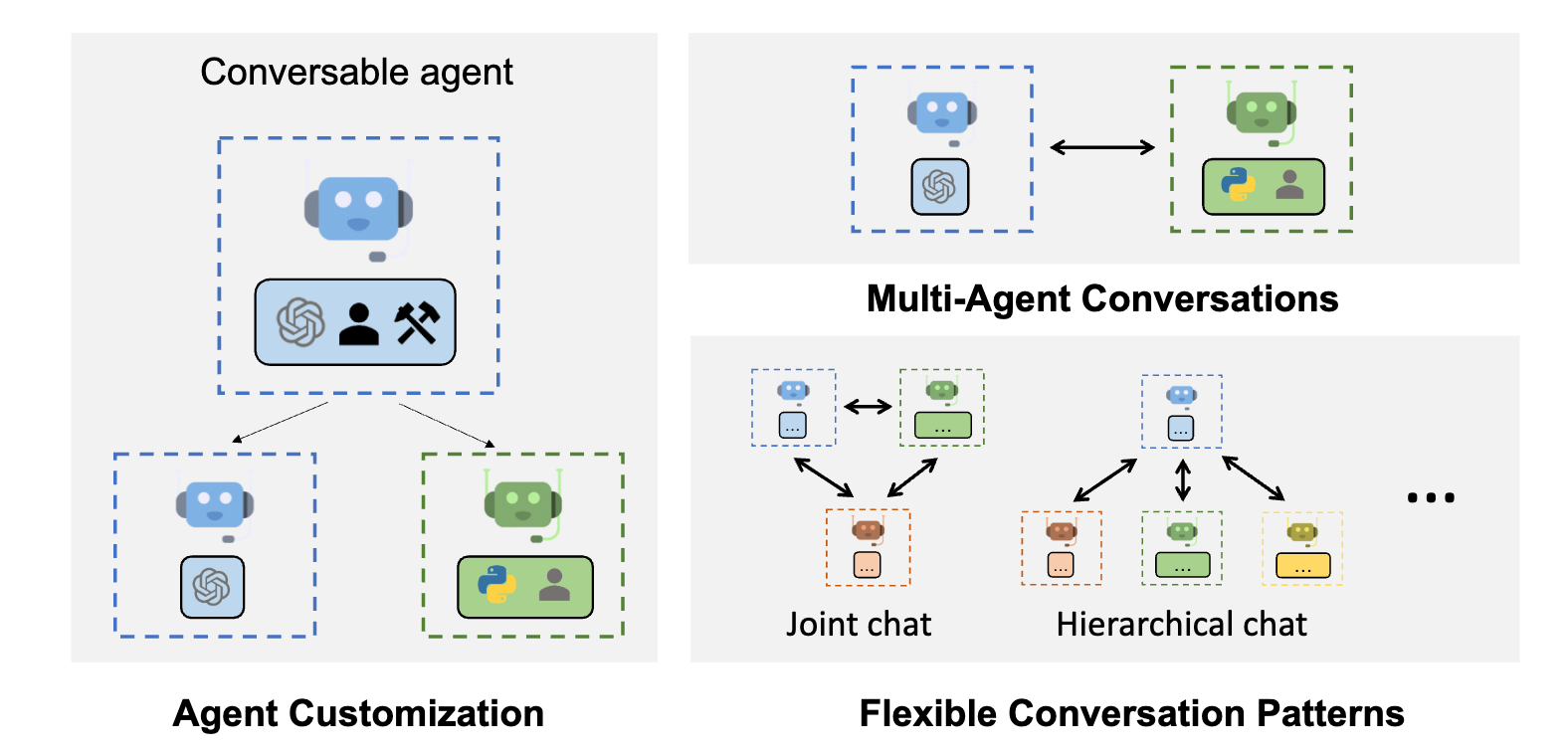

AutoGen is a framework that enables the development of LLM applications using multiple agents that can converse with each other to solve tasks. AutoGen agents are customizable, conversable, and seamlessly allow human participation. They can operate in various modes that employ combinations of LLMs, human inputs, and tools.

- AutoGen enables building next-gen LLM applications based on multi-agent conversations with minimal effort. It simplifies the orchestration, automation, and optimization of a complex LLM workflow. It helps maximize the performance of LLMs and overcome their weaknesses.

- It supports diverse conversation patterns for complex workflows. With customizable and conversable agents, developers can use AutoGen to build a wide range of conversation patterns concerning conversation autonomy, the number of agents, and agent conversation topology.

- It provides a collection of working systems of varying complexity. These systems span a wide range of applications from various domains, demonstrating how AutoGen can support diverse conversation patterns.

- AutoGen provides enhanced LLM inference. It offers utilities like API unification and caching, and advanced usage patterns such as error handling, multi-config inference, and context programming.

AutoGen is powered by collaborative research studies from Microsoft, Penn State University, and the University of Washington.

The easiest way to start playing is:

- Click below to use the GitHub Codespace.

- Copy OAI_CONFIG_LIST_sample to the ./notebook folder, rename it to OAI_CONFIG_LIST, and set the correct configuration.

- Start playing with the notebooks!
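As a rough illustration of the configuration step above, the sketch below writes a file named OAI_CONFIG_LIST containing a JSON list of endpoint entries and reads it back. The `load_config_list` helper and its `environ` parameter are hypothetical stand-ins written for this example, not AutoGen's own loader; the entry fields (`model`, `api_key`) follow the inline example in this README, and the API key is a placeholder.

```python
import json
import os
import tempfile


def load_config_list(env_or_file, file_location=".", environ=None):
    """Hypothetical helper (not part of AutoGen): read a JSON config list
    from an environment variable, falling back to a file of the same name."""
    environ = os.environ if environ is None else environ
    raw = environ.get(env_or_file)
    if raw is not None:
        return json.loads(raw)
    path = os.path.join(file_location, env_or_file)
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Example content for OAI_CONFIG_LIST: a JSON list of endpoint entries.
sample = [{"model": "gpt-4", "api_key": "<your OpenAI API key here>"}]

with tempfile.TemporaryDirectory() as notebook_dir:
    config_path = os.path.join(notebook_dir, "OAI_CONFIG_LIST")
    with open(config_path, "w", encoding="utf-8") as f:
        json.dump(sample, f, indent=2)
    # An empty environ forces the file fallback in this demo.
    config_list = load_config_list(
        "OAI_CONFIG_LIST", file_location=notebook_dir, environ={}
    )

print(config_list[0]["model"])  # prints: gpt-4
```

Keeping credentials in a separate file (or an environment variable) keeps them out of the notebooks themselves.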

AutoGen requires Python version >= 3.8, < 3.12. It can be installed from pip:

    pip install pyautogen

Minimal dependencies are installed without extra options. You can install extra options based on the features you need.

Find more options in Installation.
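To confirm that an interpreter falls inside the supported range stated above (>= 3.8, < 3.12) before installing, a small check like the following can help. This is only a sketch; `is_supported` is a name made up for this example.

```python
import sys

MIN_VERSION = (3, 8)   # inclusive lower bound
MAX_VERSION = (3, 12)  # exclusive upper bound


def is_supported(version_info):
    """Return True if (major, minor, ...) satisfies >= 3.8 and < 3.12."""
    return MIN_VERSION <= tuple(version_info[:2]) < MAX_VERSION


current = sys.version_info
print(f"Python {current.major}.{current.minor} supported: {is_supported(current)}")
```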

For code execution, we strongly recommend installing the Python docker package and running the code in Docker.

For LLM inference configurations, check the FAQs.

For GUI configurations, check the GUI docs.
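Following the Docker recommendation above, one possible way to build a code execution configuration is sketched below. The `use_docker` key is an assumption about the configuration schema and should be checked against the code execution documentation; `work_dir` is taken from the example in this README. Note that `find_spec` only tells you whether the Python docker package is importable, not whether a Docker daemon is actually running.

```python
import importlib.util


def docker_sdk_installed():
    """Return True if the Python `docker` package can be imported."""
    return importlib.util.find_spec("docker") is not None


# Assumed configuration shape: `use_docker` is not confirmed by this README.
code_execution_config = {
    "work_dir": "coding",
    "use_docker": docker_sdk_installed(),
}

print(code_execution_config)
```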

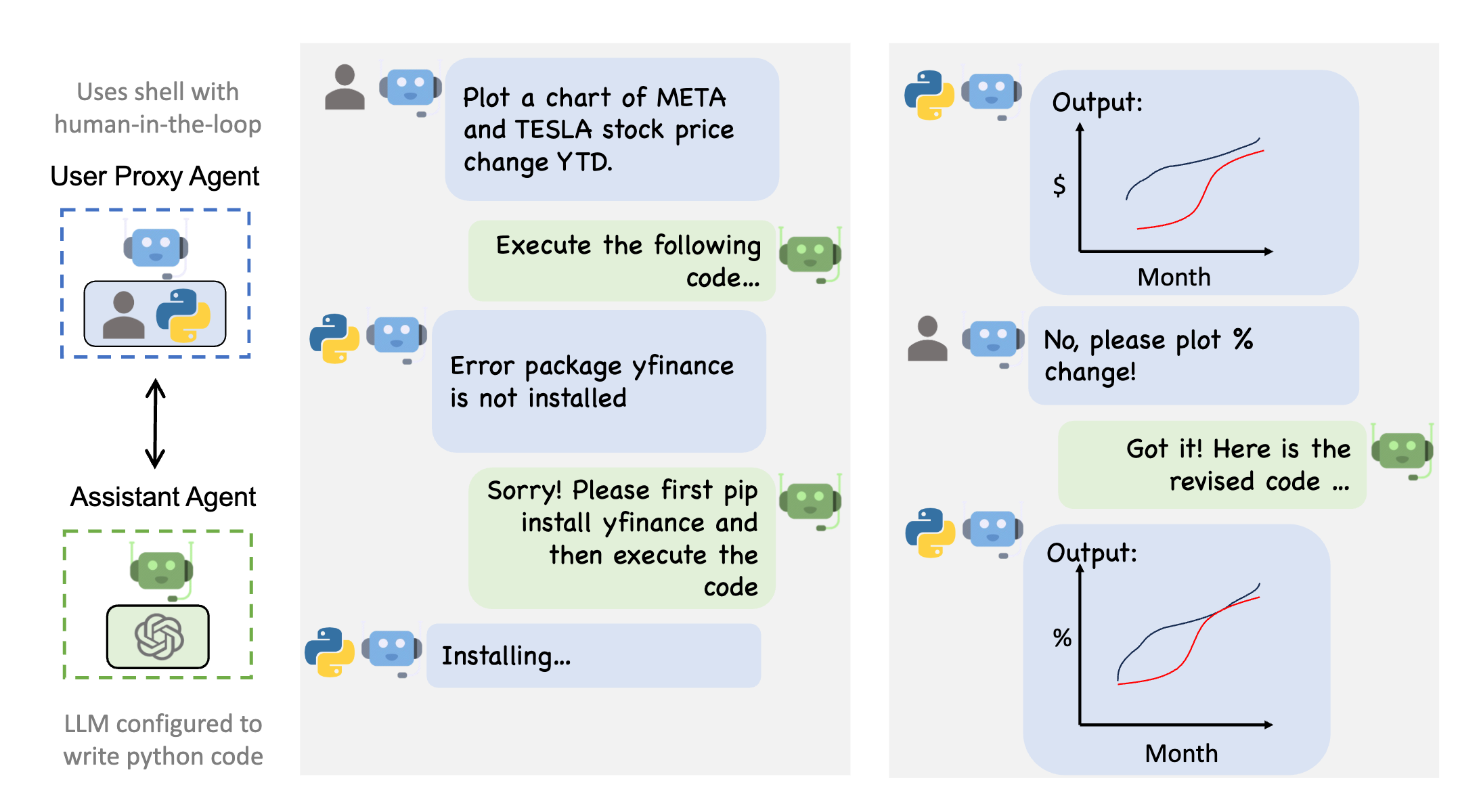

AutoGen enables next-gen LLM applications with a generic multi-agent conversation framework. It offers customizable and conversable agents that integrate LLMs, tools, and humans. By automating chat among multiple capable agents, one can easily make them collectively perform tasks autonomously or with human feedback, including tasks that require using tools via code.

Features of this use case include:

- Multi-agent conversations: AutoGen agents can communicate with each other to solve tasks. This allows for more complex and sophisticated applications than would be possible with a single LLM.

- Customization: AutoGen agents can be customized to meet the specific needs of an application. This includes the ability to choose the LLMs to use, the types of human input to allow, and the tools to employ.

- Human participation: AutoGen seamlessly allows human participation. This means that humans can provide input and feedback to the agents as needed.

For example,

    from autogen import AssistantAgent, UserProxyAgent, config_list_from_json

    # Load LLM inference endpoints from an env variable or a file
    # See https://microsoft.github.io/autogen/docs/FAQ#set-your-api-endpoints
    # and OAI_CONFIG_LIST_sample
    config_list = config_list_from_json(env_or_file="OAI_CONFIG_LIST")
    # You can also set config_list directly as a list, for example, config_list = [{'model': 'gpt-4', 'api_key': '<your OpenAI API key here>'},]
    assistant = AssistantAgent("assistant", llm_config={"config_list": config_list})
    user_proxy = UserProxyAgent("user_proxy", code_execution_config={"work_dir": "coding"})
    user_proxy.initiate_chat(assistant, message="Plot a chart of NVDA and TESLA stock price change YTD.")
    # This initiates an automated chat between the two agents to solve the task

This example can be run with

    python test/twoagent.py

after the repo is cloned.
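To make the idea of an automated chat between two agents more concrete, here is a conceptual sketch in plain Python with no LLM involved. It is not AutoGen's implementation: `ScriptedAssistant`, `EchoUserProxy`, and `run_chat` are hypothetical names, the assistant replies from a fixed script, and ending the chat on a `TERMINATE` message is an assumption of this sketch.

```python
class ScriptedAssistant:
    """Stand-in for an LLM-backed assistant: replies from a fixed script."""

    def __init__(self, replies):
        self._replies = list(replies)

    def reply(self, message):
        return self._replies.pop(0) if self._replies else "TERMINATE"


class EchoUserProxy:
    """Stand-in for a user proxy: reports back a result for each proposal."""

    def reply(self, message):
        return f"Executed: {message}"


def run_chat(user_proxy, assistant, task, max_turns=10):
    """Alternate messages between the agents until TERMINATE or max_turns."""
    transcript = [("user_proxy", task)]
    message = task
    for _ in range(max_turns):
        answer = assistant.reply(message)
        transcript.append(("assistant", answer))
        if answer.strip().endswith("TERMINATE"):
            break
        message = user_proxy.reply(answer)
        transcript.append(("user_proxy", message))
    return transcript


scripted = ScriptedAssistant(
    ["Step 1: download prices", "Step 2: plot the chart", "TERMINATE"]
)
transcript = run_chat(
    EchoUserProxy(),
    scripted,
    "Plot a chart of NVDA and TESLA stock price change YTD.",
)
for speaker, text in transcript:
    print(f"{speaker}: {text}")
```

The `max_turns` bound is a simple guard against a conversation that never terminates.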

The figure below shows an example conversation flow with AutoGen.

Please find more code examples for this feature.

AutoGen also helps get the most out of expensive LLMs such as ChatGPT and GPT-4. It offers enhanced LLM inference with powerful functionalities like caching, error handling, multi-config inference, and templating.
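The combination of caching, error handling, and multi-config inference mentioned above can be pictured with the following sketch. It is a simplified illustration under stated assumptions, not AutoGen's inference code: `CachedMultiConfigClient` and `fake_backend` are hypothetical, the cache is keyed on the prompt alone, and the backend is a stub that simulates a failing first endpoint.

```python
class CachedMultiConfigClient:
    """Try each config in order; cache the first successful answer per prompt."""

    def __init__(self, config_list, backend):
        self.config_list = config_list
        self.backend = backend  # callable(config, prompt) -> str
        self.cache = {}

    def create(self, prompt):
        if prompt in self.cache:  # cache hit: no backend call at all
            return self.cache[prompt]
        errors = []
        for config in self.config_list:
            try:
                result = self.backend(config, prompt)
            except RuntimeError as exc:  # fall through to the next config
                errors.append(f"{config['model']}: {exc}")
                continue
            self.cache[prompt] = result
            return result
        raise RuntimeError("all configs failed: " + "; ".join(errors))


calls = []


def fake_backend(config, prompt):
    """Stub endpoint: the first model always fails, the second answers."""
    calls.append(config["model"])
    if config["model"] == "gpt-4":
        raise RuntimeError("rate limited")
    return f"[{config['model']}] answer to: {prompt}"


client = CachedMultiConfigClient(
    [{"model": "gpt-4"}, {"model": "gpt-3.5-turbo"}], fake_backend
)
first = client.create("What is 2 + 2?")
second = client.create("What is 2 + 2?")  # served from the cache
print(first)
print(calls)
```

Because the second request is answered from the cache, the stub backend is only called during the first request.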

You can find detailed documentation about AutoGen here.

In addition, you can find:

- Research, blog posts around AutoGen, and Transparency FAQs

@inproceedings{wu2023autogen,
  title={AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation Framework},
  author={Qingyun Wu and Gagan Bansal and Jieyu Zhang and Yiran Wu and Beibin Li and Erkang Zhu and Li Jiang and Xiaoyun Zhang and Shaokun Zhang and Jiale Liu and Ahmed Hassan Awadallah and Ryen W White and Doug Burger and Chi Wang},
  year={2023},
  eprint={2308.08155},
  archivePrefix={arXiv},
  primaryClass={cs.AI}
}

@inproceedings{wang2023EcoOptiGen,
  title={Cost-Effective Hyperparameter Optimization for Large Language Model Generation Inference},
  author={Chi Wang and Susan Xueqing Liu and Ahmed H. Awadallah},
  year={2023},
  booktitle={AutoML'23},
}

@inproceedings{wu2023empirical,
  title={An Empirical Study on Challenging Math Problem Solving with GPT-4},
  author={Yiran Wu and Feiran Jia and Shaokun Zhang and Hangyu Li and Erkang Zhu and Yue Wang and Yin Tat Lee and Richard Peng and Qingyun Wu and Chi Wang},
  year={2023},
  booktitle={ArXiv preprint arXiv:2306.01337},
}

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.opensource.microsoft.com.

If you are new to GitHub, here is a detailed help source on getting involved with development on GitHub.

When you submit a pull request, a CLA bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., status check, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repos using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information, see the Code of Conduct FAQ or contact [email protected] with any additional questions or comments.

Microsoft and any contributors grant you a license to the Microsoft documentation and other content in this repository under the Creative Commons Attribution 4.0 International Public License, see the LICENSE file, and grant you a license to any code in the repository under the MIT License, see the LICENSE-CODE file.

Microsoft, Windows, Microsoft Azure, and/or other Microsoft products and services referenced in the documentation may be either trademarks or registered trademarks of Microsoft in the United States and/or other countries. The licenses for this project do not grant you rights to use any Microsoft names, logos, or trademarks. Microsoft's general trademark guidelines can be found at https://go.microsoft.com/fwlink/?LinkID=254653.

Privacy information can be found at https://privacy.microsoft.com/en-us/

Microsoft and any contributors reserve all other rights, whether under their respective copyrights, patents, or trademarks, whether by implication, estoppel, or otherwise.