XuanPolicy is an open-source ensemble of Deep Reinforcement Learning (DRL) algorithm implementations.

We call it Xuan-Ce (玄策) in Chinese. "Xuan" means incredible, like a magic box, and "Ce" means policy.

DRL algorithms are sensitive to hyperparameter tuning, vary in performance depending on the implementation tricks used, and suffer from unstable training, so they can sometimes seem elusive and "Xuan". This project provides thorough, high-quality, and easy-to-understand implementations of DRL algorithms, and we hope they shed some light on the magic of reinforcement learning.

We expect it to be compatible with multiple deep learning toolboxes (PyTorch, TensorFlow, and MindSpore), and hope it can become a zoo full of DRL algorithms.

This project is supported by Peng Cheng Laboratory.

- Vanilla Policy Gradient - PG [Paper]

- Phasic Policy Gradient - PPG [Paper] [Code]

- Advantage Actor Critic - A2C [Paper] [Code]

- Soft actor-critic based on maximum entropy - SAC [Paper] [Code]

- Soft actor-critic for discrete actions - SAC-Discrete [Paper] [Code]

- Proximal Policy Optimization with clipped objective - PPO-Clip [Paper] [Code]

- Proximal Policy Optimization with KL divergence - PPO-KL [Paper] [Code]

- Deep Q Network - DQN [Paper]

- DQN with Double Q-learning - Double DQN [Paper]

- DQN with Dueling network - Dueling DQN [Paper]

- DQN with Prioritized Experience Replay - PER [Paper]

- DQN with Parameter Space Noise for Exploration - NoisyNet [Paper]

- DQN with Convolutional Neural Network - C-DQN [Paper]

- DQN with Long Short-term Memory - L-DQN [Paper]

- DQN with CNN and Long Short-term Memory - CL-DQN [Paper]

- DQN with Quantile Regression - QRDQN [Paper]

- Distributional Reinforcement Learning - C51 [Paper]

- Deep Deterministic Policy Gradient - DDPG [Paper] [Code]

- Twin Delayed Deep Deterministic Policy Gradient - TD3 [Paper] [Code]

- Parameterised deep Q network - P-DQN [Paper]

- Multi-pass parameterised deep Q network - MP-DQN [Paper] [Code]

- Split parameterised deep Q network - SP-DQN [Paper]

- Independent Q-learning - IQL [Paper] [Code]

- Value Decomposition Networks - VDN [Paper] [Code]

- Q-mixing networks - QMIX [Paper] [Code]

- Weighted Q-mixing networks - WQMIX [Paper] [Code]

- Q-transformation - QTRAN [Paper] [Code]

- Deep Coordination Graphs - DCG [Paper] [Code]

- Independent Deep Deterministic Policy Gradient - IDDPG [Paper]

- Multi-agent Deep Deterministic Policy Gradient - MADDPG [Paper] [Code]

- Counterfactual Multi-agent Policy Gradient - COMA [Paper] [Code]

- Multi-agent Proximal Policy Optimization - MAPPO [Paper] [Code]

- Mean-Field Q-learning - MFQ [Paper] [Code]

- Mean-Field Actor-Critic - MFAC [Paper] [Code]

- Independent Soft Actor-Critic - ISAC

- Multi-agent Soft Actor-Critic - MASAC [Paper]

- Multi-agent Twin Delayed Deep Deterministic Policy Gradient - MATD3 [Paper]

The library runs on Linux, Windows, macOS, EulerOS, and other operating systems.

Before installing XuanPolicy, install Anaconda to prepare a Python environment.

After that, open a terminal and install XuanPolicy with the following steps.

Step 1: Create and activate a new conda environment (python>=3.7 is suggested):

conda create -n xuanpolicy python=3.7

conda activate xuanpolicy

Step 2: Install the library:

pip install xuanpolicy

This command does not install the dependencies of the deep learning toolboxes. To install XuanPolicy together with a deep learning toolbox, type pip install xuanpolicy[torch] for PyTorch, pip install xuanpolicy[tensorflow] for TensorFlow, pip install xuanpolicy[mindspore] for MindSpore, or pip install xuanpolicy[all] for all dependencies.
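After installing one of the extras, it can be useful to confirm which deep learning toolboxes are actually visible to the active conda environment. The following is a minimal sketch using only the Python standard library. It is not part of XuanPolicy; the helper name available_backends is hypothetical, and it assumes the toolboxes are importable under their usual top-level module names (torch, tensorflow, mindspore).

```python
import importlib.util

# Top-level module names assumed for the optional extras named above.
BACKENDS = {
    "torch": "PyTorch",
    "tensorflow": "TensorFlow",
    "mindspore": "MindSpore",
}


def available_backends(modules=BACKENDS):
    """Return display names of the backends whose top-level module can be found.

    Hypothetical helper for illustration; it is not a XuanPolicy function.
    """
    found = []
    for module_name, display_name in modules.items():
        # find_spec returns None when a top-level module is not installed,
        # and it does not import the (potentially heavy) package itself.
        if importlib.util.find_spec(module_name) is not None:
            found.append(display_name)
    return found


if __name__ == "__main__":
    names = available_backends()
    print("Installed backends:", ", ".join(names) if names else "none")
```

If the list is empty, only the base package was installed and one of the extras above is still needed.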

Note: Some extra packages should be installed manually for further usage.

import xuanpolicy as xp

runner = xp.get_runner(agent_name='dqn', env_name='toy_env/CartPole-v0', is_test=False)

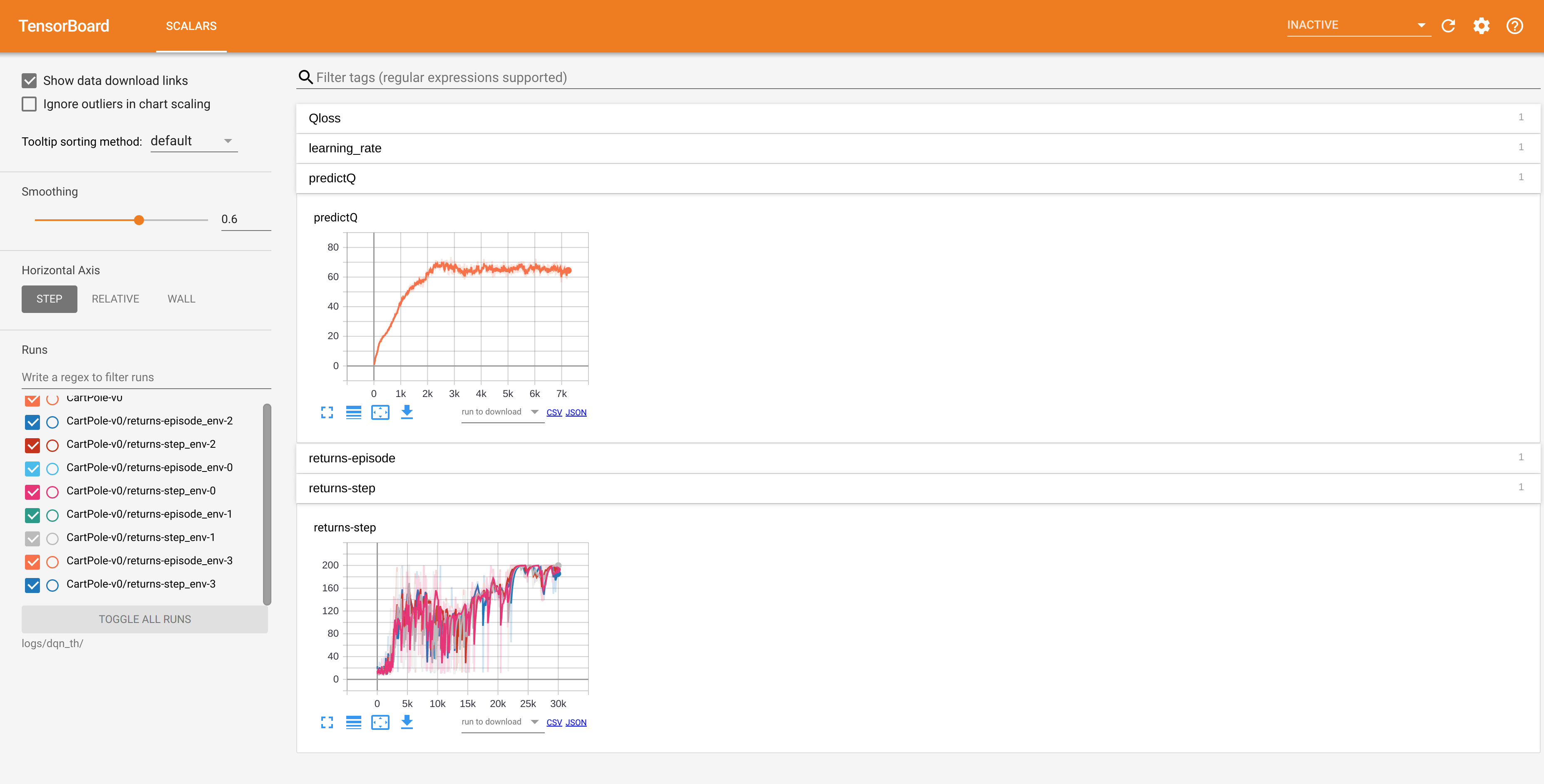

runner.run()You can use tensorboard to visualize what happened in the training process. After training, the log file will be automatically generated in the directory ".results/" and you should be able to see some training data after running the command.

$ tensorboard --logdir ./logs/

If everything going well, you should get a similar display like below.

To visualize the training scores, training time, and performance, initialize the environment as

env = MonitorVecEnv(DummyVecEnv(...))

Then, after training terminates, two extra files, "xxx.npy" and "xxx.gif", are generated in the "./results/" directory. The "xxx.npy" file records the score and clock time of each episode during training. A plotter.py for drawing these curves is not provided yet.
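Until a plotter is available, the recorded scores could be drawn with a few lines of NumPy and Matplotlib. The sketch below is only an illustration, not the project's plotter. The layout of the ".npy" file is not documented here, so the code assumes a 2-D array with one row per episode and columns [score, clock_time]; adjust the indexing if the real file differs. The function names moving_average and plot_scores and the output file name are hypothetical.

```python
def moving_average(values, window=10):
    """Smooth a sequence with a trailing mean over at most `window` items."""
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    running_sum = 0.0
    for i, value in enumerate(values):
        running_sum += value
        if i >= window:
            # Drop the item that just fell out of the trailing window.
            running_sum -= values[i - window]
        smoothed.append(running_sum / min(i + 1, window))
    return smoothed


def plot_scores(npy_path, window=10, out_path="scores.png"):
    """Plot raw and smoothed episode scores against clock time."""
    # Imported lazily so moving_average works without these packages.
    import numpy as np
    import matplotlib
    matplotlib.use("Agg")  # render to a file, no display required
    import matplotlib.pyplot as plt

    data = np.asarray(np.load(npy_path), dtype=float)
    # ASSUMPTION: shape (episodes, 2) with columns [score, clock_time].
    if data.ndim != 2 or data.shape[1] < 2:
        raise ValueError("expected a 2-D array with score and time columns")
    scores = data[:, 0].tolist()
    clock_time = data[:, 1].tolist()

    fig, ax = plt.subplots()
    ax.plot(clock_time, scores, alpha=0.3, label="episode score")
    ax.plot(clock_time, moving_average(scores, window), label="moving average")
    ax.set_xlabel("clock time")
    ax.set_ylabel("score")
    ax.legend()
    fig.savefig(out_path)
    plt.close(fig)
    return out_path
```

Calling plot_scores("./results/xxx.npy") would then write the figure to scores.png, where "xxx" stands for whatever file name the run produced.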

@article{XuanPolicy2023,

author = {Wenzhang Liu and Wenzhe Cai and Kun Jiang and others},

title = {XuanPolicy: A Comprehensive Deep Reinforcement Learning Library},

year = {2023}

}